SLIDE 1

moment generating functions

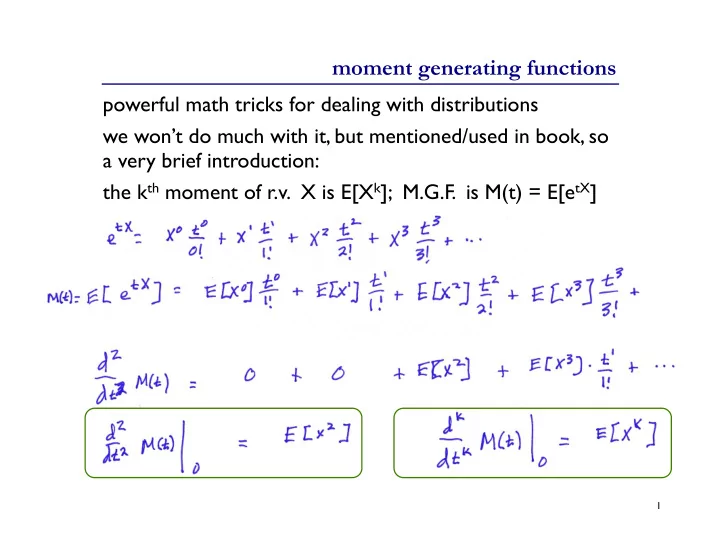

Powerful math tricks for dealing with distributions. We won't do much with them, but they are mentioned/used in the book, so a very brief introduction: the $k$th moment of r.v. $X$ is $E[X^k]$; the M.G.F. is $M(t) = E[e^{tX}]$.
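A minimal sketch of why $M(t)$ is called "moment generating": when $M$ is finite in a neighborhood of $t = 0$, its $k$th derivative at $0$ equals $E[X^k]$. The code below checks this numerically for a fair six-sided die. The die example, the function names (`mgf`, `moment`, `mgf_derivative_at_zero`), and the step size `h` are illustrative assumptions, not something taken from the slides or the book.

```python
import math

def mgf(pmf, t):
    """M(t) = E[e^{tX}] for a discrete r.v. given as {value: probability}."""
    return sum(p * math.exp(t * x) for x, p in pmf.items())

def moment(pmf, k):
    """k-th moment E[X^k], computed directly from the pmf."""
    return sum(p * x**k for x, p in pmf.items())

def mgf_derivative_at_zero(pmf, k, h=1e-3):
    """Approximate the k-th derivative of M at t = 0 by a central finite difference."""
    # Central difference of order k:
    #   sum_{j=0..k} (-1)^j * C(k, j) * M((k/2 - j) * h) / h^k
    total = sum(
        (-1) ** j * math.comb(k, j) * mgf(pmf, (k / 2 - j) * h)
        for j in range(k + 1)
    )
    return total / h**k

# Hypothetical example distribution: a fair six-sided die.
die = {x: 1 / 6 for x in range(1, 7)}

for k in (1, 2):
    direct = moment(die, k)
    from_mgf = mgf_derivative_at_zero(die, k)
    print(f"k={k}: E[X^k]={direct:.4f}, M^(k)(0)~{from_mgf:.4f}")
```

The two columns should agree up to the finite-difference error, which shrinks as `h` does until floating-point round-off takes over.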



http://en.wikipedia.org/wiki/Law_of_large_numbers
