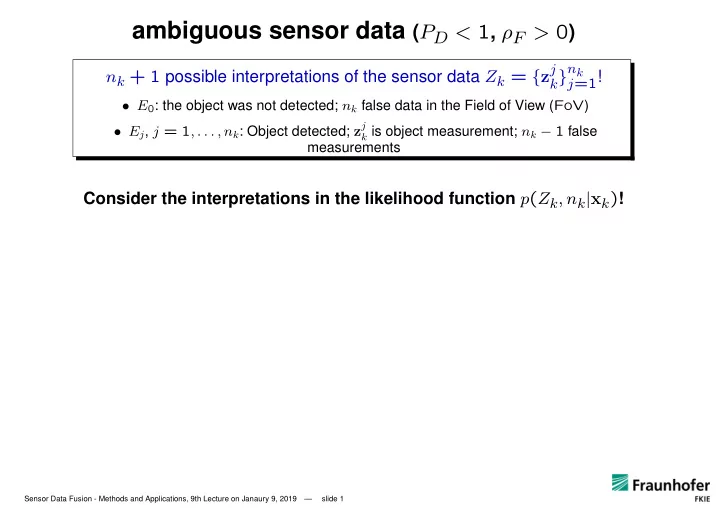

ambiguous sensor data ($P_D < 1$, $\rho_F > 0$)

$n_k + 1$ possible interpretations of the sensor data $Z_k = \{z_k^j\}_{j=1}^{n_k}$!

- $E_0$: the object was not detected; all $n_k$ measurements in the Field of View (FoV) are false

- $E_j$, $j = 1, \ldots, n_k$: Object detected; $z_k^j$ is the object measurement; $n_k - 1$ false measurements

Consider the interpretations in the likelihood function $p(Z_k, n_k \mid x_k)$!
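A minimal sketch of how the $n_k + 1$ interpretations can enter the likelihood. The slide does not state a sensor model, so the following are assumptions: a linear Gaussian measurement $z = Hx + v$ with covariance $R$, and false measurements that are Poisson distributed in number with spatial density $\rho_F$ and uniformly distributed in the FoV. Under these assumptions, and up to a factor that does not depend on $x_k$, the likelihood is proportional to $(1 - P_D)\,\rho_F + P_D \sum_{j=1}^{n_k} \mathcal{N}(z_k^j; H x_k, R)$. The function names and parameters below (`interpretation_weights`, `likelihood`, `p_d`, `rho_f`) are illustrative only.

```python
import numpy as np

def gaussian_pdf(z, mean, cov):
    """Density of N(z; mean, cov) for a d-dimensional measurement z."""
    d = z.shape[0]
    diff = z - mean
    norm = np.sqrt((2.0 * np.pi) ** d * np.linalg.det(cov))
    return float(np.exp(-0.5 * diff @ np.linalg.solve(cov, diff)) / norm)

def interpretation_weights(Z, x, H, R, p_d, rho_f):
    """Unnormalised likelihood terms for E_0, E_1, ..., E_n.

    Assumed model (not stated on the slide): linear Gaussian sensor,
    Poisson-distributed false measurements with spatial density rho_f.
    """
    z_pred = H @ x
    # E_0: object missed, every measurement is false.
    w0 = (1.0 - p_d) * rho_f
    # E_j: measurement j stems from the object, the others are false.
    wj = [p_d * gaussian_pdf(z, z_pred, R) for z in Z]
    return np.array([w0] + wj)

def likelihood(Z, x, H, R, p_d, rho_f):
    """Likelihood of the scan Z given x, up to an x-independent factor."""
    return float(interpretation_weights(Z, x, H, R, p_d, rho_f).sum())

# Example: two position measurements, the object predicted at the origin.
H = np.eye(2)
R = np.eye(2)
x = np.zeros(2)
Z = [np.array([0.1, -0.2]), np.array([4.0, 3.0])]
w = interpretation_weights(Z, x, H, R, p_d=0.9, rho_f=0.1)
print(w / w.sum())  # relative weight of E_0, E_1, E_2 for this x
```

Dividing the weights by their sum gives the relative weight of each interpretation for the given state. With $P_D = 1$ and $\rho_F = 0$ the $E_0$ term vanishes and only the Gaussian terms of the measurements actually received remain.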

Sensor Data Fusion - Methods and Applications, 9th Lecture on January 9, 2019, slide 1