Lessons learned from the theory and practice of change detection

Michèle Basseville, IRISA / CNRS, Rennes, France. michele.basseville@irisa.fr -- http://www.irisa.fr/sisthem/


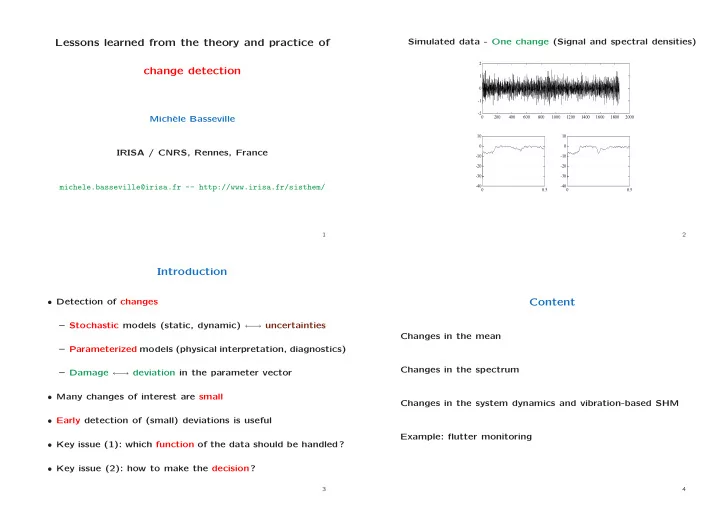

Simulated data - One change (Signal and spectral densities)



Introduction

- Detection of changes

  - Stochastic models (static, dynamic) ↔ uncertainties
  - Parameterized models (physical interpretation, diagnostics)
  - Damage ↔ deviation in the parameter vector

- Many changes of interest are small

- Early detection of (small) deviations is useful

- Key issue (1): which function of the data should be handled?

- Key issue (2): how to make the decision?
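The two key issues can be illustrated on the simplest case: a small shift in the mean of independent Gaussian samples. The sketch below is one classical choice (the CUSUM rule), not necessarily the approach taken in these slides. The function handled is the log-likelihood ratio increment, and the decision is a threshold on its cumulative sum, reset at zero. The names `cusum_detect` and `simulate`, the Gaussian assumption, the known before/after means, and the threshold value are all assumptions made for illustration.

```python
import random


def cusum_detect(samples, mean_before, mean_after, sigma, threshold):
    """Return the index of the first alarm, or None if no alarm is raised.

    Assumes independent Gaussian samples with known standard deviation
    `sigma` and known means before and after the change (illustrative only).
    """
    shift = mean_after - mean_before
    g = 0.0  # decision function: cumulative sum, clipped at zero
    for k, y in enumerate(samples):
        # Key issue (1): the function of the data is the log-likelihood
        # ratio increment between the "after" and "before" hypotheses.
        s = shift / sigma**2 * (y - mean_before - shift / 2.0)
        # Key issue (2): accumulate, reset at zero, compare to a threshold.
        g = max(0.0, g + s)
        if g > threshold:
            return k
    return None


def simulate(n, change_time, mean_before, mean_after, sigma, seed=0):
    """Draw n Gaussian samples whose mean shifts at index change_time."""
    rng = random.Random(seed)
    return [
        rng.gauss(mean_before if k < change_time else mean_after, sigma)
        for k in range(n)
    ]


# A small change (half a standard deviation) at sample 1000 out of 2000.
data = simulate(n=2000, change_time=1000, mean_before=0.0,
                mean_after=0.5, sigma=1.0)
alarm = cusum_detect(data, mean_before=0.0, mean_after=0.5,
                     sigma=1.0, threshold=10.0)
print("alarm index:", alarm)
```

Raising the threshold lowers the false alarm rate at the price of a longer detection delay. That trade-off is why early detection of small deviations depends on both the function chosen and the decision rule.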