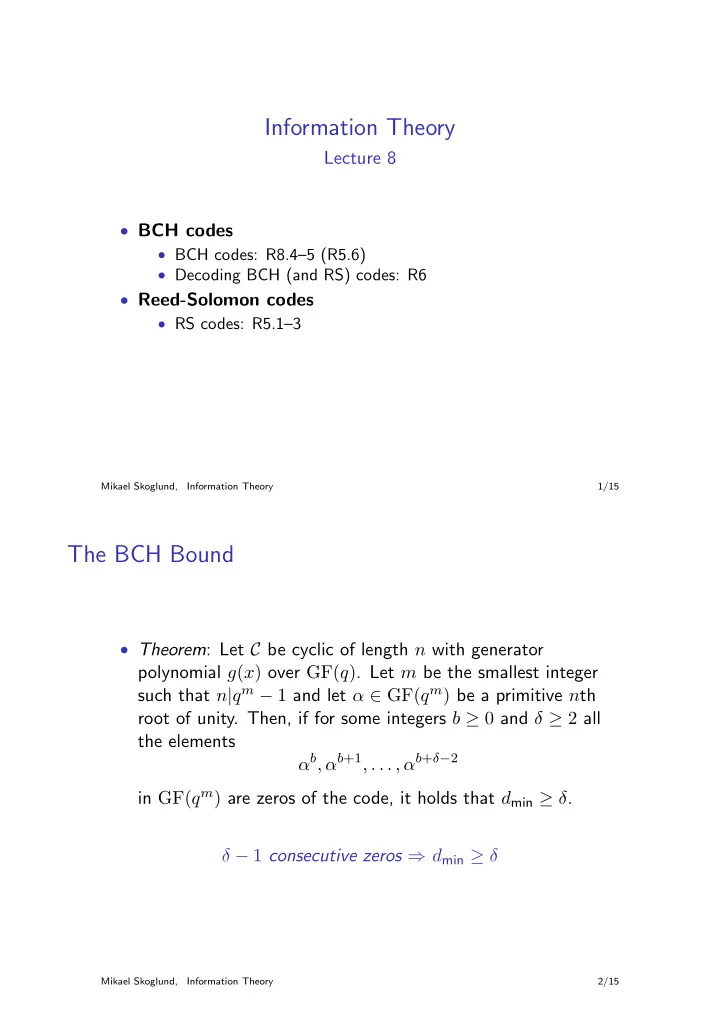

Information Theory

Lecture 8

- BCH codes
  - BCH codes: R8.4–5 (R5.6)
  - Decoding BCH (and RS) codes: R6
- Reed-Solomon codes
  - RS codes: R5.1–3

Mikael Skoglund, Information Theory 1/15

The BCH Bound

- Theorem: Let $C$ be cyclic of length $n$ with generator polynomial $g(x)$ over $\mathrm{GF}(q)$. Let $m$ be the smallest integer such that $n \mid q^m - 1$ and let $\alpha \in \mathrm{GF}(q^m)$ be a primitive $n$th root of unity. Then, if for some integers $b \ge 0$ and $\delta \ge 2$ all the elements $\alpha^b, \alpha^{b+1}, \ldots, \alpha^{b+\delta-2}$ in $\mathrm{GF}(q^m)$ are zeros of the code, it holds that $d_{\min} \ge \delta$.

$\delta - 1$ consecutive zeros $\Rightarrow d_{\min} \ge \delta$
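The bound can be checked numerically on a small case. The sketch below is an illustration, not part of the lecture: it assumes $q = 2$, $n = 15$, $m = 4$, with $\mathrm{GF}(16)$ built from the primitive polynomial $x^4 + x + 1$ and $\alpha = x$, and it takes $g(x) = x^8 + x^7 + x^6 + x^4 + 1$ as the generator. All function and variable names are hypothetical. It first confirms that $\alpha^1, \ldots, \alpha^4$ are zeros of $g(x)$ (so $b = 1$, $\delta = 5$), then finds $d_{\min}$ by enumerating all nonzero codewords.

```python
def clmul(a, b):
    """Multiply two GF(2) polynomials stored as bitmasks (bit i = coefficient of x^i)."""
    result = 0
    while b:
        if b & 1:
            result ^= a
        a <<= 1
        b >>= 1
    return result


def gf16_mul(a, b, modulus=0b10011):
    """Multiply in GF(16) = GF(2)[x] / (x^4 + x + 1) (assumed field construction)."""
    prod = clmul(a, b)
    # Reduce from the highest possible degree down to degree 4.
    for shift in range(prod.bit_length() - 5, -1, -1):
        if prod & (1 << (shift + 4)):
            prod ^= modulus << shift
    return prod


def gf16_pow(a, e):
    """Raise a to the non-negative integer power e in GF(16)."""
    result = 1
    for _ in range(e):
        result = gf16_mul(result, a)
    return result


def eval_poly_gf16(poly_bits, x):
    """Evaluate a polynomial with GF(2) coefficients at x in GF(16) using Horner's rule."""
    acc = 0
    for i in range(poly_bits.bit_length() - 1, -1, -1):
        acc = gf16_mul(acc, x)
        if (poly_bits >> i) & 1:
            acc ^= 1
    return acc


N = 15            # code length n, with n | 2^4 - 1
B, DELTA = 1, 5   # zeros alpha^b, ..., alpha^(b + delta - 2)
G = 0b111010001   # g(x) = x^8 + x^7 + x^6 + x^4 + 1 (assumed generator)
ALPHA = 0b0010    # alpha = x, a primitive 15th root of unity in GF(16)

# Check that alpha^1, ..., alpha^4 are zeros of g(x).
zeros_ok = all(
    eval_poly_gf16(G, gf16_pow(ALPHA, B + j)) == 0 for j in range(DELTA - 1)
)

# Enumerate all nonzero codewords m(x) g(x) with deg m < k = n - deg g.
k = N - (G.bit_length() - 1)
d_min = min(bin(clmul(msg, G)).count("1") for msg in range(1, 1 << k))

print("consecutive zeros present:", zeros_ok)
print("d_min =", d_min, "and delta =", DELTA)
```

Brute force is feasible here only because the code has $2^7$ codewords. The point of the bound is that it guarantees $d_{\min} \ge \delta$ from the zeros of $g(x)$ alone, without any enumeration.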
