SLIDE 1 Image Domain Gridding

Sebastiaan van der Tol, Bram Veenboer

Overview

brief recap of imaging, gridding, Wprojection and Aprojection motivation for a new algorithm image domain gridding accuracy performance demo conclusions

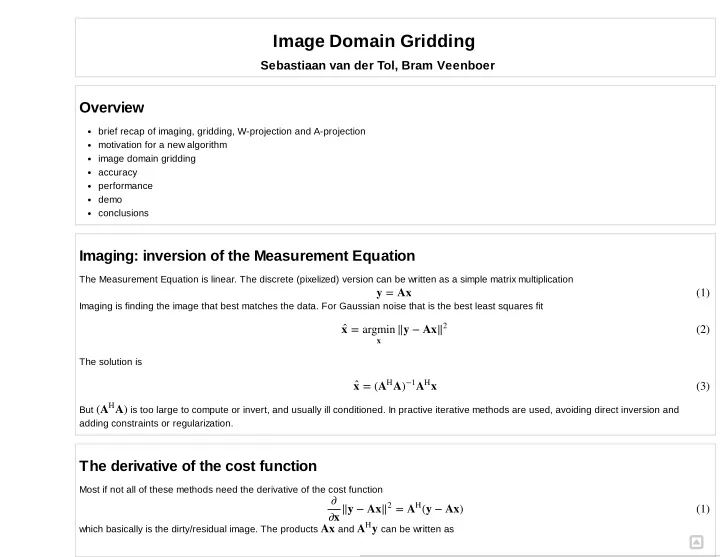

Imaging: inversion of the Measurement Equation

The Measurement Equation is linear. The discrete (pixelized) version can be written as a simple matrix multiplication Imaging is finding the image that best matches the data. For Gaussian noise that is the best least squares fit The solution is But is too large to compute or invert, and usually ill conditioned. In practive iterative methods are used, avoiding direct inversion and adding constraints or regularization.

The derivative of the cost function

Most if not all of these methods need the derivative of the cost function which basically is the dirty/residual image. The products and can be written as

y = Ax (1) = ‖y − Ax x̂ argmin

x

‖2 (2) = ( A x x̂ AH )−1AH (3) ( A) AH ‖y − Ax = (y − Ax) ∂ ∂x ‖2 AH (1) Ax y AH

N N

SLIDE 2 .

Gridding, Degridding, W projection and A Projection

Computing visibilities and pixels this way is expensive because each pixel is the sum of all visibilities and each predicted visibility is the sum of all (nonzero) pixels of the model image. The solution is of course well known. These equation resemble the Discrete Fourier Transform, which can be computed very efficiently. For this to work the measured visibility need to be on a regular grid, which they aren't. The trick is to resample the data from or to a regular grid using convolutional resampling. The convolution kernel is an antialiasing filter, but it can also include other effects, like the W term and the beam/ A term

Motivation for a new algorithm: AWImager + ionosphere

The convolution kernel is computed on an oversampled grid. The taper, wterm and aterm are computed in the image domain and then zero padded by the required oversampling factor, and then Fourier transformed. When the aterm is varying over very short times scales, which is the case when ionospheric effects are included, the computation of the convolution kernels becomes much more expensive then the subsequent gridding operation itself.

Image Domain Gridding

Idea: why not pull the gridding operation to the image domain, instead of bringing the convolution kernel to the uv domain? But wait: we moved the operations from the image domain to the uv domain because it is more efficient. If we move the operation back to the image domain again, aren't we then back to the DFT. Well, almost, but not quite. The difference is that we operare on far fewer pixels, because we select only a small region in the uvdomain.

UV tracks

= Vpqk ∑

i=1 N

∑

j=1 N

e−2πj(

+ + ) upqkli vpqkmj wpqknij JpijkBijJH qijk

(2) = Iij ∑

k=1 ∑ p=1 ∑ q=1

e2πj(

+ + ) upqkli vpqkmj wpqknij JH pijkVpqJqijk

(3)

SLIDE 3

Partitioning the visibilities

SLIDE 4

UV Subgrid encompassing all affected pixels

SLIDE 5 Equation for the subgrid

Selecting a subgrid shifts origin of Fourier transform to center of subgrid.

N N

SLIDE 6 Gridding flow diagram Degridding flow diagram

Vpqk Iij = ∑

i=1 N

∑

j=1 N

e−2πj((

− ) +( − ) + ) upqk u0 li vpqk v0 mj wpqknij JpijkBijJH qijkWij

= ∑

k=1

e2πj((

− ) +( − ) + ) upqk u0 li vpqk v0 mj wpqknij JH pijkVpqJqijkWij

(7) (8)

SLIDE 7

Effective operation in the uv domain

SLIDE 8

Effective operation in the image domain

= [ ( , ) ( , )]( , ) ̂ ⃛ ˜

SLIDE 9

where is the infinitely repeated model image and is the sinc interpolated window.

Error compared to classical gridding with PSWF

W PSWF N=8 N=16 N=24 N=32 N=48 N=64 3.0 3.33e02 4.63e02 2.87e02 2.25e02 1.92e02 1.54e02 1.32e02 5.0 1.68e03 4.60e03 2.59e03 1.94e03 1.60e03 1.25e03 1.06e03 7.0 7.96e05 2.99e04 1.67e04 1.25e04 1.03e04 7.89e05 6.62e05 9.0 3.68e06 9.08e06 6.96e06 5.78e06 4.45e06 3.71e06 11.0 1.68e07 4.82e07 3.55e07 2.98e07 2.33e07 1.95e07 13.0 7.58e09 2.70e08 1.79e08 1.47e08 1.16e08 9.83e09 15.0 3.40e10 1.45e09 9.15e10 7.25e10 5.61e10 4.78e10

Error as function position in subgrid Error as function of position in image

= [ (l, m) (l, m)](u, v) ŷ I⃛ W ˜ (l, m) I⃛ (l, m) W ˜

SLIDE 10

Error as function of position in image Subgrid pixel budget

Tapering window 7 W term 8 A term 8 UV coverage 9

SLIDE 11

Computational Properties

very regular structure with lots of paralellism fewer access to memory, because there is no lookup in a sampled convolution function But touches many more pixels per visibility, typically 32x32 vs 7x7 classical gridding and needs to evaluate a sincos per pixel Well suited for a GPU with sincos hardware instruction

Implementation

Bram Veenboer Ph.D. Researcher ASTRON (Netherlands Institute for Radio Astronomy) DOME Project (ASTRON + IBM) High Performance Computing Implementations for different platforms: CPU, Xeon Phi, CUDA, OpenCL It provides a python interface and a c++ interface git clone git@gitlab.com:astronidg/code.git (not for commercial use, patent pending)

CPU Demo in laptop

SLIDE 12

In [6]:

CPU Demo on compute node

2x Xeon E52660v3, 20 cores, 40 threads >>> Configuration PARAMETERS: Number of stations == 44 Number of baselines == 946 Number of channels == 8 Number of time == 256 Number of timeslots == 16 Imagesize == 0.1 Grid size == 1024 Subgrid size == 32 Job size == 256 Job size (gridding) == 256 Job size (degridding) == 256 Job size (gridder) == 256 Job size (adder) == 256 Job size (splitter) == 256 Job size (degridder) == 256 !NR_TIME=256 cpuoptimized.x

SLIDE 13

In [7]:

GPU demo on laptop

cpudemo >>> Configuration PARAMETERS: Number of stations == 44 Number of baselines == 946 Number of channels == 8 Number of time == 4096 Number of timeslots == 32 Imagesize == 0.1 Grid size == 4096 Subgrid size == 32 Job size == 256 Job size (gridding) == 256 Job size (degridding) == 256 Job size (gridder) == 256 Job size (adder) == 256 Job size (splitter) == 256 Job size (degridder) == 256 !ssh node505 SUBGRIDSIZE=32 NR_TIMESLOTS=32 ./cpudemo.sh

SLIDE 14

In [8]:

GPU demo on a compute node

>>> Configuration PARAMETERS: Number of stations == 44 Number of baselines == 946 Number of channels == 8 Number of time == 4096 Number of timeslots == 16 Imagesize == 0.1 Grid size == 1024 Subgrid size == 32 Job size == 256 Job size (gridding) == 256 Job size (degridding) == 256 Job size (gridder) == 256 Job size (adder) == 256 Job size (splitter) == 256 Job size (degridder) == 256 !cudageneric.x

SLIDE 15

In [14]:

Demo python interface

gpudemo >>> Configuration PARAMETERS: Number of stations == 44 Number of baselines == 946 Number of channels == 8 Number of time == 32000 Number of timeslots == 16 Imagesize == 0.1 Grid size == 4096 Subgrid size == 32 Job size == 256 Job size (gridding) == 256 Job size (degridding) == 256 Job size (gridder) == 256 Job size (adder) == 256 Job size (splitter) == 256 Job size (degridder) == 256 !ssh node505 SUBGRIDSIZE=32 NR_TIME=32000 ./gpudemo.sh

SLIDE 16

In [2]: nr_stations = 12 nr_baselines = 66 nr_channels = 8 nr_timesteps = 4096 nr_timeslots = 8 nr_polarizations = 4 subgrid_size = 32 grid_size = 1024 image_size = 0.0599999986589 kernel_size = 17 job size (gridding) = 256 job size (degridding) = 256 %matplotlib inline from common import * main(idg.CUDA.Generic)

SLIDE 17

Comparison to WSClean gridder

Why compare to WSClean? AWImager can correct for the LOFAR beam, uses a A projection and a combination of W projection and W stacking Computing the kernels takes time but the beam model is not good enough for noticable difference in the image Obtaining a good ionosperic model is difficult, but it does improve the image, > horrendously slow WSClean is fast and became the defacto standard for LOFAR imaging What do we compare? Only the pure gridding time Number of W stacking planes is reduced by two time the number of pixels reserved for the W term

wsclean

Dataset 10 Subbands x 2 channels 55 Stations, 1485 baselines 10s integration time 8.8 hours 93M visibilities, 77M selected visibilities

SLIDE 18

no gridder: 25s wsclean gridder: 35.6s idg gridder: 33.8

Conclusions

Image domain gridding can do fast gridding (IO bound) high time resolution A term Still to do full clean on large dataset fill A term with something useful