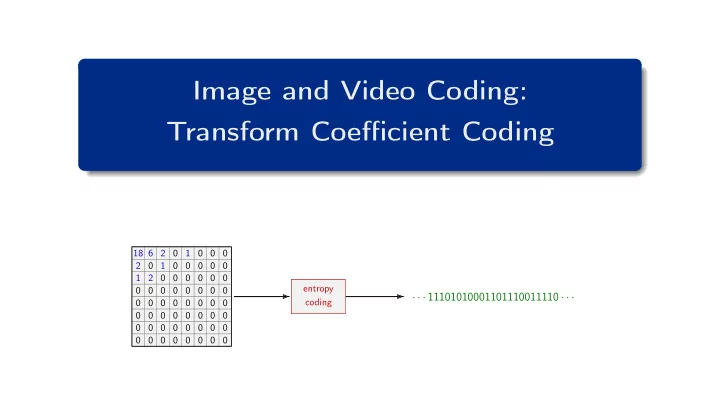

Image and Video Coding: Transform Coefficient Coding

18 6 2 0 1 0 0 0 2 0 1 0 0 0 0 0 1 2 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

· · · 11101010001101110011110 · · ·

entropy coding

transform & quantization entropy coding

Heiko Schwarz (Freie Universität Berlin) — Image and Video Coding: Transform Coefficient Coding 2 / 42

Variable-Length Coding / Scalar Codes

| symbol | codeword (code 1) | codeword (code 2) | codeword (code 3) |
|---|---|---|---|
| a | 000 | 1 | 0000 |
| b | 001 | 01 | 0001 |
| c | 010 | 001 | 001 |
| d | 011 | 0001 | 01 |
| e | 100 | 00001 | 10 |
| f | 101 | 000001 | 110 |
| g | 110 | 0000001 | 1110 |
| h | 111 | 0000000 | 1111 |

Variable-Length Coding / Scalar Codes

| letter | codeword |
|---|---|
| a | 00 |
| b | 010 |
| c | 011 |
| d | 10 |
| e | 1100 |
| f | 1101 |
| g | 111 |
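The following sketch illustrates why a prefix-free table like the one above can be decoded bit by bit without separators. The helper names (`is_prefix_free`, `kraft_sum`, `encode`, `decode`) are hypothetical and chosen only for this illustration.

```python
from fractions import Fraction

# Code table copied from the slide.
CODE = {"a": "00", "b": "010", "c": "011", "d": "10",
        "e": "1100", "f": "1101", "g": "111"}

def is_prefix_free(code):
    """True if no codeword is a prefix of another codeword."""
    words = sorted(code.values())
    # After sorting, a prefix is always directly followed by a word it prefixes.
    return all(not b.startswith(a) for a, b in zip(words, words[1:]))

def kraft_sum(code):
    """Sum of 2^(-length) over all codewords (at most 1 for a prefix code)."""
    return sum(Fraction(1, 2 ** len(cw)) for cw in code.values())

def encode(message, code):
    return "".join(code[s] for s in message)

def decode(bits, code):
    """Read bits one at a time and emit a letter once a codeword is complete."""
    inverse = {cw: s for s, cw in code.items()}
    out, current = [], ""
    for bit in bits:
        current += bit
        if current in inverse:
            out.append(inverse[current])
            current = ""
    if current:
        raise ValueError("bit string ends inside a codeword")
    return "".join(out)
```

For this table the Kraft sum is exactly 1, so the code tree has no unused leaves.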

Variable-Length Coding / Scalar Codes

1. Construct a binary code tree (for the given probability mass function)
2. Construct the code by labeling the branches with “0” and “1”

Variable-Length Coding / Scalar Codes

Example: tree construction for a source with nine symbols

| symbol | a | b | c | d | e | f | g | h | i |
|---|---|---|---|---|---|---|---|---|---|
| probability | 0.16 | 0.04 | 0.04 | 0.16 | 0.23 | 0.07 | 0.06 | 0.09 | 0.15 |

Merging steps (in each step, the two least probable nodes are combined):

1. b (0.04) + c (0.04) → 0.08
2. f (0.07) + g (0.06) → 0.13
3. h (0.09) + {b, c} (0.08) → 0.17
4. i (0.15) + {f, g} (0.13) → 0.28
5. a (0.16) + d (0.16) → 0.32
6. e (0.23) + {h, b, c} (0.17) → 0.40
7. {a, d} (0.32) + {i, f, g} (0.28) → 0.60
8. 0.60 + 0.40 → 1.00 (root)
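The tree construction above can be sketched with a priority queue. This is a minimal illustration, not the lecture's implementation: probabilities are given as integer weights in hundredths to avoid floating-point ties, and the function name `huffman_code` is hypothetical. Which branch gets “0” or “1” is arbitrary, so only the codeword lengths are meaningful.

```python
import heapq
from itertools import count

# pmf from the slide, in units of 1/100
WEIGHTS = {"a": 16, "b": 4, "c": 4, "d": 16, "e": 23,
           "f": 7, "g": 6, "h": 9, "i": 15}

def huffman_code(weights):
    """Repeatedly merge the two least probable nodes; return symbol -> codeword."""
    tiebreak = count()  # keeps heap entries comparable when weights are equal
    heap = [(w, next(tiebreak), {s: ""}) for s, w in weights.items()]
    heapq.heapify(heap)
    while len(heap) > 1:
        w0, _, c0 = heapq.heappop(heap)
        w1, _, c1 = heapq.heappop(heap)
        # Label the two merged branches with "0" and "1".
        merged = {s: "0" + cw for s, cw in c0.items()}
        merged.update({s: "1" + cw for s, cw in c1.items()})
        heapq.heappush(heap, (w0 + w1, next(tiebreak), merged))
    return heap[0][2]

code = huffman_code(WEIGHTS)
lengths = {s: len(cw) for s, cw in code.items()}
avg_len = sum(WEIGHTS[s] * lengths[s] for s in WEIGHTS) / 100
```

The average codeword length equals the sum of the probabilities of all merged nodes (0.08 + 0.13 + 0.17 + 0.28 + 0.32 + 0.40 + 0.60 + 1.00 = 2.98 bits per symbol).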

Variable-Length Coding / Conditional Codes and Block Codes

binary source

| $s_k$ | $p(s_k)$ | codeword |
|---|---|---|
| a | 0.9 | 0 |
| b | 0.1 | 1 |

$H \approx 0.469$, $\bar{\ell} = 1$, $\varrho' \approx 113\,\%$
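The numbers above can be checked with a few lines. The sketch assumes that $\varrho'$ denotes the redundancy relative to the entropy, $(\bar{\ell} - H)/H$, which matches the quoted 113 %; the codewords "0" and "1" and the function name `entropy` are illustrative.

```python
from math import log2

def entropy(pmf):
    """Entropy in bits per symbol."""
    return -sum(p * log2(p) for p in pmf.values() if p > 0)

pmf = {"a": 0.9, "b": 0.1}
code = {"a": "0", "b": "1"}  # a scalar code cannot use less than 1 bit per symbol

H = entropy(pmf)
avg_len = sum(p * len(code[s]) for s, p in pmf.items())
relative_redundancy = (avg_len - H) / H
```

A scalar code spends a full bit on every symbol, more than twice the entropy of this source, which motivates conditional codes and block codes.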

Variable-Length Coding / Conditional Codes and Block Codes

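conditional Huffman code (one code table per previous symbol $s_{k-1}$)

| $s_k$ | $p(s_k \mid a)$ | codeword | $p(s_k \mid b)$ | codeword | $p(s_k \mid c)$ | codeword |
|---|---|---|---|---|---|---|
| a | 0.90 | 0 | 0.15 | 10 | 0.25 | 10 |
| b | 0.05 | 10 | 0.80 | 0 | 0.15 | 11 |
| c | 0.05 | 11 | 0.05 | 11 | 0.60 | 0 |

$\bar{\ell}_a = 1.1$, $\bar{\ell}_b = 1.2$, $\bar{\ell}_c = 1.4$

scalar Huffman code

| $s_k$ | $p(s_k)$ | codeword |
|---|---|---|
| a | 29/45 | 0 |
| b | 11/45 | 10 |
| c | 5/45 | 11 |

$\bar{\ell}_{\text{scal}} = 61/45 \approx 1.3556$

$$\bar{\ell}_{\text{cond}} = \sum_z p(z) \cdot \bar{\ell}_z = \tfrac{29}{45} \cdot 1.1 + \tfrac{11}{45} \cdot 1.2 + \tfrac{5}{45} \cdot 1.4 = \tfrac{521}{450} \approx 1.1578$$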
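The averages above can be reproduced with exact fractions. In this sketch the marginal pmf (29/45, 11/45, 5/45) is taken from the scalar table and checked to be the stationary distribution of the conditional pmf; the 1-bit codewords "0" are inferred from the stated average lengths, and all names are illustrative.

```python
from fractions import Fraction as F

# P[prev][cur] = p(s_k = cur | s_{k-1} = prev)
P = {
    "a": {"a": F(90, 100), "b": F(5, 100), "c": F(5, 100)},
    "b": {"a": F(15, 100), "b": F(80, 100), "c": F(5, 100)},
    "c": {"a": F(25, 100), "b": F(15, 100), "c": F(60, 100)},
}
# One code table per previous symbol.
COND_CODE = {
    "a": {"a": "0", "b": "10", "c": "11"},
    "b": {"a": "10", "b": "0", "c": "11"},
    "c": {"a": "10", "b": "11", "c": "0"},
}
SCALAR_CODE = {"a": "0", "b": "10", "c": "11"}
MARGINAL = {"a": F(29, 45), "b": F(11, 45), "c": F(5, 45)}

def is_stationary(pi, P):
    """Check pi(s) = sum_z pi(z) * p(s | z) for every symbol s."""
    return all(sum(pi[z] * P[z][s] for z in P) == pi[s] for s in pi)

def avg_len(pmf, code):
    return sum(p * len(code[s]) for s, p in pmf.items())

l_scal = avg_len(MARGINAL, SCALAR_CODE)
l_cond = sum(MARGINAL[z] * avg_len(P[z], COND_CODE[z]) for z in P)
```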

Variable-Length Coding / Conditional Codes and Block Codes

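conditional pmf

| $s_k$ | $p(s_k \mid a)$ | $p(s_k \mid b)$ | $p(s_k \mid c)$ |
|---|---|---|---|
| a | 0.90 | 0.15 | 0.25 |
| b | 0.05 | 0.80 | 0.15 |
| c | 0.05 | 0.05 | 0.60 |

scalar: $\bar{\ell}_{\text{scal}} \approx 1.3556$

conditional: $\bar{\ell}_{\text{cond}} \approx 1.1578$

block Huffman code (N = 2)

| $s_k s_{k+1}$ | $p(s_k, s_{k+1})$ | codeword |
|---|---|---|
| aa | 0.5800 | 0 |
| ab | 0.0322 | 10000 |
| ac | 0.0322 | 10001 |
| ba | 0.0367 | 1010 |
| bb | 0.1956 | 11 |
| bc | 0.0122 | 101100 |
| ca | 0.0278 | 10111 |
| cb | 0.0167 | 101101 |
| cc | 0.0667 | 1001 |

$\bar{\ell}_2 \approx 2.0188$ (bits per block), $\bar{\ell} \approx 1.0094$ (bits per symbol)

block Huffman coding

| N | $\bar{\ell}$ | # codewords |
|---|---|---|
| 1 | 1.3556 | 3 (scalar) |
| 2 | 1.0094 | 9 |
| 3 | 0.9150 | 27 |
| 4 | 0.8690 | 81 |
| 5 | 0.8462 | 243 |
| 6 | 0.8299 | 729 |
| 7 | 0.8153 | 2187 |
| 8 | 0.8027 | 6561 |
| 9 | 0.7940 | 19683 |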
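The entries of the block-coding table can be recomputed as follows. This is a sketch under the assumption that the source is a stationary Markov source with the conditional pmf above and marginal pmf (29/45, 11/45, 5/45). It uses the fact that the average Huffman codeword length equals the sum of the weights of all merged nodes; the function names are hypothetical.

```python
import heapq
from fractions import Fraction as F
from itertools import product

P = {
    "a": {"a": F(90, 100), "b": F(5, 100), "c": F(5, 100)},
    "b": {"a": F(15, 100), "b": F(80, 100), "c": F(5, 100)},
    "c": {"a": F(25, 100), "b": F(15, 100), "c": F(60, 100)},
}
MARGINAL = {"a": F(29, 45), "b": F(11, 45), "c": F(5, 45)}

def block_pmf(n):
    """Joint pmf of n successive symbols."""
    pmf = {}
    for block in product("abc", repeat=n):
        p = MARGINAL[block[0]]
        for prev, cur in zip(block, block[1:]):
            p *= P[prev][cur]
        pmf["".join(block)] = p
    return pmf

def huffman_avg_len(pmf):
    """Average Huffman codeword length = sum of all merged node weights."""
    heap = list(pmf.values())
    heapq.heapify(heap)
    total = F(0)
    while len(heap) > 1:
        merged = heapq.heappop(heap) + heapq.heappop(heap)
        total += merged
        heapq.heappush(heap, merged)
    return total

def bits_per_symbol(n):
    return huffman_avg_len(block_pmf(n)) / n
```

The number of codewords grows as 3^N, which is why large block sizes quickly become impractical even though the rate keeps decreasing.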

Variable-Length Coding / Conditional Codes and Block Codes

N→∞

Variable-Length Coding / V2V Codes

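A = {a, b}

| sequence | codeword |
|---|---|
| aaa | 0 |
| aab | 100 |
| ab | 101 |
| ba | 110 |
| bb | 111 |

A = {a, b}

| sequence | codeword |
|---|---|
| aaaaa | 0 |
| aaaab | 10 |
| aaab | 110 |
| aab | 1110 |
| ab | 11110 |
| b | 11111 |

A = {x, y, z}

| sequence | codeword |
|---|---|
| xxx | 0 |
| xxy | 100 |
| xxz | 101 |
| xy | 1100 |
| xz | 1101 |
| y | 1110 |
| z | 1111 |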

Variable-Length Coding / V2V Codes

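iid source (M = 3)

| symbol | probability |
|---|---|
| a | 0.80 |
| b | 0.15 |
| c | 0.05 |

- entropy rate: $\bar{H}(S) = 0.88418$ (equal to $H(S)$)
- scalar Huffman: $\bar{\ell} = 1.2$ (3 codewords)
- 2-symbol blocks: $\bar{\ell} = 0.93375$ (9 codewords)
- V2V code: $\bar{\ell} = 0.88934$ (7 codewords), $\varrho = 0.00516$ ($\varrho' = 0.58\,\%$)

V2V code (leaves of the symbol-sequence tree with their codewords)

| sequence | probability | codeword |
|---|---|---|
| aaa | 0.512 | 1 |
| aab | 0.096 | 000 |
| aac | 0.032 | 01000 |
| ab | 0.12 | 001 |
| ac | 0.04 | 01001 |
| b | 0.15 | 011 |
| c | 0.05 | 0101 |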
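The rate of the V2V code can be verified by dividing the average codeword length by the average number of symbols per sequence. The sketch below assumes the sequence-to-codeword assignment read off the code tree, including the 1-bit codeword "1" for aaa, which is inferred from the Huffman structure; variable names are illustrative.

```python
from math import log2, prod

PMF = {"a": 0.80, "b": 0.15, "c": 0.05}
V2V = {"aaa": "1", "aab": "000", "aac": "01000", "ab": "001",
       "ac": "01001", "b": "011", "c": "0101"}

def seq_prob(seq):
    """Probability of a symbol sequence for an iid source."""
    return prod(PMF[s] for s in seq)

avg_bits = sum(seq_prob(seq) * len(cw) for seq, cw in V2V.items())
avg_symbols = sum(seq_prob(seq) * len(seq) for seq in V2V)
rate = avg_bits / avg_symbols                     # bits per source symbol
entropy = -sum(p * log2(p) for p in PMF.values())
redundancy = rate - entropy
```

With only 7 codewords, the V2V code comes closer to the entropy than the 9-codeword block code because the sequences are chosen to have roughly dyadic probabilities.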

Run-Level Coding Approaches / What Entropy Coding for Transform Coefficient Levels ?

7 3 1 0 -3 -1 0 0 1 0 -1 0 -2 0 0 0 0 0 0 -1 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

2 -1 0 0 0 0 0 0

0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0 0

Run-Level Coding Approaches / What Entropy Coding for Transform Coefficient Levels ?

Exp-Golomb code

| q | codewords |
|---|---|
| 0 | 0 |
| 1 | 10 0, 10 1 |
| 2 | 110 00, 110 01 |
| 3 | 110 10, 110 11 |
| 4 | 1110 000, 1110 001 |
| 5 | 1110 010, 1110 011 |
| 6 | 1110 100, 1110 101 |
| . . . | . . . |
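The codeword pattern in the table (a unary prefix of k ones terminated by a zero, followed by k suffix bits) can be generated as sketched below. The mapping of a level q to the two codeword indices 2q − 1 and 2q is read off the table; which of the two belongs to the positive and which to the negative value is not recoverable from the table, so the sketch returns both. Function names are hypothetical.

```python
def exp_golomb(index):
    """Codeword for index >= 0: k ones, one zero, then k suffix bits."""
    k = (index + 1).bit_length() - 1
    suffix = format(index + 1 - (1 << k), "b").zfill(k) if k else ""
    return "1" * k + "0" + suffix

def codewords_for_level(q):
    """The codeword(s) listed for q in the table."""
    if q == 0:
        return (exp_golomb(0),)
    return (exp_golomb(2 * q - 1), exp_golomb(2 * q))
```

The codeword length grows only logarithmically with q, so large levels remain representable without an explicit codeword table.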

Run-Level Coding Approaches / Run-Level Coding

excerpt of codeword table (s = sign)

| (run, level) | codeword |
|---|---|
| (0, ±1) | 11s |
| (0, ±2) | 0100 s |
| (0, ±3) | 0010 1s |
| (0, ±4) | 0000 110s |
| (1, ±1) | 011s |
| (1, ±2) | 0001 10s |
| (1, ±3) | 0010 0101 s |
| (2, ±1) | 0101 s |
| (eob) | 10 |

1. Scanning and conversion into (run, level) pairs
2. Conversion into bitstream (via table look-up)
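The two steps can be sketched as follows, using only the excerpt of the codeword table shown above. The sign convention (s = 0 for positive, s = 1 for negative levels) is an assumption, and pairs outside the excerpt are not handled; function names are illustrative.

```python
# Excerpt of the (run, level) table; the sign bit s is appended separately.
TABLE = {(0, 1): "11", (0, 2): "0100", (0, 3): "00101", (0, 4): "0000110",
         (1, 1): "011", (1, 2): "000110", (1, 3): "00100101", (2, 1): "0101"}
EOB = "10"  # end-of-block codeword

def run_level_pairs(scanned_levels):
    """Step 1: each non-zero level is paired with the number of preceding zeros."""
    pairs, run = [], 0
    for q in scanned_levels:
        if q == 0:
            run += 1
        else:
            pairs.append((run, q))
            run = 0
    return pairs  # trailing zeros are covered by the end-of-block codeword

def encode_block(scanned_levels):
    """Step 2: table look-up for each pair, followed by the eob codeword."""
    bits = []
    for run, q in run_level_pairs(scanned_levels):
        sign = "1" if q < 0 else "0"  # assumed sign convention
        bits.append(TABLE[(run, abs(q))] + sign)  # KeyError outside the excerpt
    bits.append(EOB)
    return "".join(bits)
```

For the scanned levels `[3, 0, -1, 1, 0, 0, 1, 0, 0]`, the pairs are (0, 3), (1, −1), (0, 1), (2, 1), and the block costs 20 bits including the end-of-block codeword.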

Run-Level Coding Approaches / Run-Level Coding

| category | absolute levels |
|---|---|
| 1 | 1 |
| 2 | 2 ... 3 |
| 3 | 4 ... 7 |
| 4 | 8 ... 15 |
| 5 | 16 ... 31 |
| 6 | 32 ... 63 |
| 7 | 64 ... 127 |
| 8 | 128 ... 255 |
| 9 | 256 ... 511 |
| 10 | 512 ... 1023 |
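Each category in the table covers the absolute levels whose binary representation has exactly that many bits, so the category can be computed directly. The function name `category` is illustrative.

```python
def category(level):
    """Category of a non-zero level: number of bits of its absolute value."""
    return abs(level).bit_length()
```

Within category c, the exact value can then be identified with c additional bits, since the category contains 2^(c−1) absolute values and two signs.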

Run-Level Coding Approaches / Improvements of Run-Level Coding

excerpt of codeword table (s = sign)

| (run, level, last) | codeword |
|---|---|
| (0, ±1, 0) | 10s |
| (0, ±2, 0) | 1111 s |
| (0, ±3, 0) | 0101 01s |
| (0, ±4, 0) | 0010 111s |
| (1, ±1, 0) | 110s |
| (1, ±2, 0) | 0101 00s |
| (1, ±3, 0) | 0001 1110 s |
| (2, ±1, 0) | 1110 s |
| (2, ±1, 1) | 0011 10s |

1. Scanning and conversion into (run, level, last) events
2. Conversion into bitstream (without coded block pattern)
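Compared with (run, level) coding, the end-of-block codeword is replaced by a last flag that is part of each event. The sketch below uses only the excerpt of the table shown above and assumes s = 0 for positive and s = 1 for negative levels; names are illustrative, and blocks without non-zero levels are assumed to be signalled elsewhere.

```python
TABLE = {(0, 1, 0): "10", (0, 2, 0): "1111", (0, 3, 0): "010101",
         (0, 4, 0): "0010111", (1, 1, 0): "110", (1, 2, 0): "010100",
         (1, 3, 0): "00011110", (2, 1, 0): "1110", (2, 1, 1): "001110"}

def run_level_last_events(scanned_levels):
    """Convert scanned levels into (run, level, last) events."""
    pairs, run = [], 0
    for q in scanned_levels:
        if q == 0:
            run += 1
        else:
            pairs.append((run, q))
            run = 0
    # last = 1 marks the final non-zero level of the block
    return [(r, q, int(i == len(pairs) - 1)) for i, (r, q) in enumerate(pairs)]

def encode_block(scanned_levels):
    bits = []
    for run, q, last in run_level_last_events(scanned_levels):
        sign = "1" if q < 0 else "0"  # assumed sign convention
        bits.append(TABLE[(run, abs(q), last)] + sign)
    return "".join(bits)
```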

Arithmetic Coding

Arithmetic Coding / Shannon-Fano-Elias Coding

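| symbol $a$ | $p(a)$ | $c(a)$ |
|---|---|---|
| A | 1/2 | 0 |
| N | 1/3 | 1/2 |
| B | 1/6 | 5/6 |

Algorithm: Interval Refinement

- initialization: $W_0 = 1$, $L_0 = 0$
- iteration: $W_{n+1} = W_n \cdot p(s_n)$, $L_{n+1} = L_n + W_n \cdot c(s_n)$ with $c(s) = \sum_{a < s} p(a)$ (symbols ordered A, N, B)

Example: message "BANANA"

| $n$ | $s_n$ | $W_{n+1}$ | $L_{n+1}$ |
|---|---|---|---|
| 0 | B | 1/6 | 5/6 |
| 1 | A | 1/12 | 5/6 |
| 2 | N | 1/36 | 21/24 |
| 3 | A | 1/72 | 21/24 |
| 4 | N | 1/216 | 127/144 |
| 5 | A | 1/432 | 127/144 |

Final interval: $L = 127/144 = 0.8819..$, $L + W = 127/144 + 1/432 = 0.8842..$

Which value inside the interval should be transmitted?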
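The interval refinement can be traced with exact fractions. This minimal sketch uses the symbol probabilities of the example; the function name `refine` is illustrative.

```python
from fractions import Fraction as F

# pmf of the example, in the symbol order A, N, B
P = {"A": F(1, 2), "N": F(1, 3), "B": F(1, 6)}

# c(s): sum of the probabilities of all symbols that precede s
C, acc = {}, F(0)
for symbol, prob in P.items():
    C[symbol] = acc
    acc += prob

def refine(message):
    """Return the lower bound L and width W of the interval for the message."""
    L, W = F(0), F(1)
    for s in message:
        # Both updates use the old width W_n.
        L, W = L + W * C[s], W * P[s]
    return L, W

L, W = refine("BANANA")
```

The final width W equals the probability of the whole message, 1/432.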

Arithmetic Coding / Shannon-Fano-Elias Coding

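[Figure: number line with the grid points $(n-3) \cdot 2^{-K}$, $(n-2) \cdot 2^{-K}$, $(n-1) \cdot 2^{-K}$, $n \cdot 2^{-K}$, $(n+1) \cdot 2^{-K}$, $(n+2) \cdot 2^{-K}$, $(n+3) \cdot 2^{-K}$]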

Arithmetic Coding / Shannon-Fano-Elias Coding

Encoding Algorithm

- initialization: $W_0 = 1$, $L_0 = 0$
- iteration: $W_{n+1} = W_n \cdot p(s_n)$, $L_{n+1} = L_n + W_n \cdot c(s_n)$
- finalization: $K = \lceil -\log_2 W \rceil$, $z = \lceil L \cdot 2^K \rceil$
- codeword: integer $z$ with $K$ bits

Example: message "BANANA" with $p(\text{A}) = 1/2$, $p(\text{N}) = 1/3$, $p(\text{B}) = 1/6$

- final interval: $L = 127/144 = 0.8819..$, $W = 1/432$, $L + W = 0.8842..$
- $K = \lceil \log_2 432 \rceil = 9$
- $z = \lceil \tfrac{127}{144} \cdot 512 \rceil = 452$
- $v = z \cdot 2^{-K} = 452/512 = 0.8828..$
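The finalization step can be sketched as follows, starting from the final interval of the example (L = 127/144, W = 1/432). To avoid floating-point rounding, K is found with exact integer arithmetic instead of evaluating the logarithm; the function name `finalize` is illustrative.

```python
from fractions import Fraction as F
from math import ceil

def finalize(L, W):
    """Return (z, K): the K-bit integer z with L <= z * 2^-K < L + W."""
    K = 0
    while F(1, 2 ** K) > W:      # smallest K with 2^-K <= W, i.e. ceil(-log2 W)
        K += 1
    z = ceil(L * 2 ** K)         # first grid point at or above L
    return z, K

z, K = finalize(F(127, 144), F(1, 432))
codeword = format(z, "b").zfill(K)
v = F(z, 2 ** K)
```

Because the grid spacing 2^-K is not larger than the interval width W, the first grid point at or above L always lies inside the interval.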

Arithmetic Coding / Shannon-Fano-Elias Coding

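| symbol $a$ | $p(a)$ | $c(a)$ |
|---|---|---|
| A | 1/2 | 0 |
| N | 1/3 | 1/2 |
| B | 1/6 | 5/6 |

Decoding: in each step, the candidate intervals $W_{n+1} = W_n \cdot p(\cdot)$, $L_{n+1} = L_n + W_n \cdot c(\cdot)$ are computed for all symbols, and the symbol whose interval contains $v$ is selected.

| $n$ | $(L_n, W_n)$ | $(L_{n+1}, W_{n+1})$ for A | $(L_{n+1}, W_{n+1})$ for N | $(L_{n+1}, W_{n+1})$ for B | symbol $s_n$ |
|---|---|---|---|---|---|
| 0 | (0, 1) | (0, 1/2) | (1/2, 1/3) | (5/6, 1/6) | B |
| 1 | (5/6, 1/6) | (5/6, 1/12) | (11/12, 1/18) | (35/36, 1/36) | A |
| 2 | (5/6, 1/12) | (5/6, 1/24) | (21/24, 1/36) | (65/72, 1/72) | N |
| 3 | (21/24, 1/36) | (21/24, 1/72) | (8/9, 1/108) | (97/108, 1/216) | A |
| 4 | (21/24, 1/72) | (21/24, 1/144) | (127/144, 1/216) | (383/432, 1/432) | N |
| 5 | (127/144, 1/216) | (127/144, 1/432) | (191/216, 1/648) | (287/324, 1/1296) | A |

$v = (0.111000100)_b = 452/512$

b = "111000100"

s = "BANANA"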
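The decoding table can be reproduced with a short sketch. It assumes that the decoder knows the number of symbols to decode (6 in this example); the function name `decode` is illustrative.

```python
from fractions import Fraction as F

P = {"A": F(1, 2), "N": F(1, 3), "B": F(1, 6)}
C = {"A": F(0), "N": F(1, 2), "B": F(5, 6)}

def decode(bits, num_symbols):
    """Decode num_symbols symbols from the K-bit codeword given as a bit string."""
    v = F(int(bits, 2), 2 ** len(bits))      # v = z * 2^-K
    L, W = F(0), F(1)
    symbols = []
    for _ in range(num_symbols):
        for s in P:
            lower, width = L + W * C[s], W * P[s]
            if lower <= v < lower + width:   # candidate interval contains v
                symbols.append(s)
                L, W = lower, width
                break
    return "".join(symbols)
```

In every step exactly one of the three candidate intervals contains v, because the candidates partition the current interval.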

Arithmetic Coding / Arithmetic Coding

zn−U bits

settled bits

active bits

CABAC / Basic Design

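[Block diagram: a syntax element enters the binarizer, which outputs a bin string. In regular mode, each bin passes through context modelling and is coded, together with its context model, by the regular arithmetic coding engine; the previous bin is fed back for the context model update. In bypass mode, the bin is coded by the bypass arithmetic coding engine. Both engines form the binary arithmetic coder, which outputs the bitstream.]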

CABAC / Basic Design

| value | fixed-length | unary | truncated unary | exponential Golomb | Rice code R = 1 | Rice code R = 2 | Rice code R = 3 |
|---|---|---|---|---|---|---|---|
| 0 | 000 | 1 | 1 | 1 | 10 | 100 | 1000 |
| 1 | 001 | 01 | 01 | 010 | 11 | 101 | 1001 |
| 2 | 010 | 001 | 001 | 011 | 010 | 110 | 1010 |
| 3 | 011 | 0001 | 0001 | 0010 0 | 011 | 111 | 1011 |
| 4 | 100 | 0000 1 | 0000 1 | 0010 1 | 0010 | 0100 | 1100 |
| 5 | 101 | 0000 01 | 0000 01 | 0011 0 | 0011 | 0101 | 1101 |
| 6 | 110 | 0000 001 | 0000 001 | 0011 1 | 0001 0 | 0110 | 1110 |
| 7 | 111 | 0000 0001 | 0000 000 | 0001 000 | 0001 1 | 0111 | 1111 |
| 8 | | 0000 0000 1 | | 0001 001 | 0000 10 | 0010 0 | 0100 0 |
| 9 | | 0000 0000 01 | | 0001 010 | 0000 11 | 0010 1 | 0100 1 |
| 10 | | 0000 0000 001 | | 0001 011 | 0000 010 | 0011 0 | 0101 0 |
| 11 | | 0000 0000 0001 | | 0001 100 | 0000 011 | 0011 1 | 0101 1 |
| 12 | | 0000 0000 0000 1 | | 0001 101 | 0000 0010 | 0001 00 | 0110 0 |
| 13 | | 0000 0000 0000 01 | | 0001 110 | 0000 0011 | 0001 01 | 0110 1 |
| 14 | | 0000 0000 0000 001 | | 0001 111 | 0000 0001 0 | 0001 10 | 0111 0 |
| 15 | | 0000 0000 0000 0001 | | 0000 1000 0 | 0000 0001 1 | 0001 11 | 0111 1 |
| · · · | | · · · | | · · · | · · · | · · · | · · · |

(Rice codes with R = 0 are identical to the unary code.)
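The binarization schemes in the table can be generated as sketched below. The bit conventions (unary prefix of zeros terminated by a one) follow the table; the function names are illustrative, and the truncated unary code is shown for a maximum value of 7 as in the table.

```python
def fixed_length(n, bits=3):
    return format(n, "b").zfill(bits)

def unary(n):
    """n zeros followed by a terminating one."""
    return "0" * n + "1"

def truncated_unary(n, n_max):
    """Unary code without the terminating one for the largest value."""
    return "0" * n + ("1" if n < n_max else "")

def exp_golomb(n):
    """k zeros followed by the (k+1)-bit binary representation of n + 1."""
    m = n + 1
    return "0" * (m.bit_length() - 1) + format(m, "b")

def rice(n, r):
    """Unary code for n >> r, followed by the r least significant bits of n."""
    suffix = format(n & ((1 << r) - 1), "b").zfill(r) if r else ""
    return unary(n >> r) + suffix
```

A larger Rice parameter R shortens the codewords for large values at the cost of longer codewords for small values.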

CABAC / Transform Coefficient Coding

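Example (zig-zag scan levels: 9 0 -6 3 0 -1 1 · · ·)

| scan position | 0 | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|---|
| level | 9 | 0 | -6 | 3 | 0 | -1 | 1 |
| sig flag | 1 | 0 | 1 | 1 | 0 | 1 | 1 |
| last flag | 0 | | 0 | 0 | | 0 | 1 |
| abs minus 1 | 8 | | 5 | 2 | | 0 | 0 |
| sign flag | 0 | | 1 | 0 | | 1 | 0 |

The significance map (sig flag, last flag) is coded in forward order, interleaved. The absolute values and signs (abs minus 1, sign flag) are coded in reverse order, interleaved.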

1 Coded Block Flag (CBF):

2 Significance Map (forward):

3 Actual Values (reverse):
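The significance map and the value pass for the example levels can be derived as sketched below. The sketch assumes a sign flag of 1 for negative levels (consistent with the two ones in the example) and a block with at least one non-zero level (coded block flag equal to 1); the function names are hypothetical.

```python
def significance_map(scanned_levels):
    """Forward pass: sig flag per position, last flag per non-zero position."""
    last_pos = max(i for i, q in enumerate(scanned_levels) if q != 0)
    sig_flags, last_flags = [], []
    for i, q in enumerate(scanned_levels[:last_pos + 1]):
        sig_flags.append(int(q != 0))
        if q != 0:
            last_flags.append(int(i == last_pos))
    return sig_flags, last_flags

def values_reverse(scanned_levels):
    """Reverse pass: abs minus 1 and sign flag for each non-zero level."""
    nonzero = [q for q in reversed(scanned_levels) if q != 0]
    abs_minus1 = [abs(q) - 1 for q in nonzero]
    sign_flags = [int(q < 0) for q in nonzero]  # assumed: 1 = negative
    return abs_minus1, sign_flags

levels = [9, 0, -6, 3, 0, -1, 1, 0, 0]
sig_flags, last_flags = significance_map(levels)
abs_minus1, sign_flags = values_reverse(levels)
```

Coding the values in reverse order starts with the small high-frequency levels, which are most often equal to ±1.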

CABAC / Transform Coefficient Coding

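| abs | sig | abs minus 1: prefix | abs minus 1: suffix |
|---|---|---|---|
| 1 | 1 | 0 | |
| 2 | 1 | 10 | |
| 3 | 1 | 110 | |
| 4 | 1 | 1110 | |
| 5 | 1 | 11110 | |
| 6 | 1 | 111110 | |
| 7 | 1 | 1111110 | |
| 8 | 1 | 11111110 | |
| 9 | 1 | 111111110 | |
| 10 | 1 | 1111111110 | |
| 11 | 1 | 11111111110 | |
| 12 | 1 | 111111111110 | |
| 13 | 1 | 1111111111110 | |
| 14 | 1 | 11111111111110 | |
| 15 | 1 | 11111111111111 | 0 |
| 16 | 1 | 11111111111111 | 100 |
| 17 | 1 | 11111111111111 | 101 |
| 18 | 1 | 11111111111111 | 11000 |
| 19 | 1 | 11111111111111 | 11001 |
| 20 | 1 | 11111111111111 | 11010 |
| · · · | · · · | · · · | · · · |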
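The binarization in the table combines a truncated unary prefix with an Exp-Golomb suffix. The sketch below reproduces the table entries; the cutoff of 14 is read off the table (the prefix never exceeds 14 ones), and the function names are illustrative.

```python
CUTOFF = 14  # maximum number of ones in the prefix, read off the table

def exp_golomb0(n):
    """Suffix code: k ones, one zero, then k bits."""
    k = (n + 1).bit_length() - 1
    tail = format(n + 1 - (1 << k), "b").zfill(k) if k else ""
    return "1" * k + "0" + tail

def binarize_abs_minus1(abs_level):
    """Return (prefix, suffix) for a non-zero absolute level."""
    value = abs_level - 1
    if value < CUTOFF:
        return "1" * value + "0", ""       # truncated unary, terminated by 0
    return "1" * CUTOFF, exp_golomb0(value - CUTOFF)
```

The unary prefix gives each small value its own bins, while the Exp-Golomb suffix keeps the bin strings short for large levels.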

CABAC / Transform Coefficient Coding

transform block 4×4 subblock first non-zero

CABAC / Transform Coefficient Coding

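1. Significance bins sig for all scan positions (in regular mode)
2. Up to 8 greater-than-one bins gt1
3. Up to 1 greater-than-two bin gt2
4. Sign flags for non-zero coefficients
5. Remainders rem using Rice-Golomb code (in bypass mode)

| \|q\| | sig | gt1 | gt2 | rem |
|---|---|---|---|---|
| 0 | 0 | – | – | – |
| 1 | 1 | 0 | – | – |
| 2 | 1 | 1 | 0 | – |
| 3 | 1 | 1 | 1 | 0 |
| 4 | 1 | 1 | 1 | 1 |
| 5 | 1 | 1 | 1 | 2 |
| 6 | 1 | 1 | 1 | 3 |
| 7 | 1 | 1 | 1 | 4 |
| 8 | 1 | 1 | 1 | 5 |
| 9 | 1 | 1 | 1 | 6 |
| 10 | 1 | 1 | 1 | 7 |
| 11 | 1 | 1 | 1 | 8 |
| 12 | 1 | 1 | 1 | 9 |
| 13 | 1 | 1 | 1 | 10 |
| 14 | 1 | 1 | 1 | 11 |
| 15 | 1 | 1 | 1 | 12 |
| . . . | . . . | . . . | . . . | . . . |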
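The table rows follow a simple rule, sketched below. The sketch ignores the limits on the number of gt1 and gt2 bins (up to 8 and up to 1) mentioned in the list, so it describes only coefficients for which all three flags are coded; `None` stands for a bin that is not coded, and the function name is illustrative.

```python
def decompose(abs_q):
    """Return (sig, gt1, gt2, rem) for an absolute level; None = not coded."""
    if abs_q == 0:
        return (0, None, None, None)
    if abs_q == 1:
        return (1, 0, None, None)
    if abs_q == 2:
        return (1, 1, 0, None)
    return (1, 1, 1, abs_q - 3)
```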

CABAC / Transform Coefficient Coding

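1. Context-coded bins sig, gt1, par, gt3
2. Remainder rem using parametric Golomb-Rice code
3. Sign flags for non-zero coefficients (in bypass mode)

| \|q\| | sig | gt1 | par | gt3 | rem |
|---|---|---|---|---|---|
| 0 | 0 | – | – | – | – |
| 1 | 1 | 0 | – | – | – |
| 2 | 1 | 1 | 0 | 0 | – |
| 3 | 1 | 1 | 1 | 0 | – |
| 4 | 1 | 1 | 0 | 1 | 0 |
| 5 | 1 | 1 | 1 | 1 | 0 |
| 6 | 1 | 1 | 0 | 1 | 1 |
| 7 | 1 | 1 | 1 | 1 | 1 |
| 8 | 1 | 1 | 0 | 1 | 2 |
| 9 | 1 | 1 | 1 | 1 | 2 |
| 10 | 1 | 1 | 0 | 1 | 3 |
| 11 | 1 | 1 | 1 | 1 | 3 |
| 12 | 1 | 1 | 0 | 1 | 4 |
| 13 | 1 | 1 | 1 | 1 | 4 |
| 14 | 1 | 1 | 0 | 1 | 5 |
| 15 | 1 | 1 | 1 | 1 | 5 |
| . . . | . . . | . . . | . . . | . . . | . . . |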
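The rows of the table satisfy |q| = sig + gt1 + par + 2 · (gt3 + rem). The sketch below derives the bins from an absolute level and reconstructs the level again; `None` marks a bin that is not coded, and the function names are illustrative.

```python
def decompose(abs_q):
    """Return (sig, gt1, par, gt3, rem) for an absolute level; None = not coded."""
    if abs_q == 0:
        return (0, None, None, None, None)
    if abs_q == 1:
        return (1, 0, None, None, None)
    par = (abs_q - 2) & 1                      # parity of |q| - 2
    gt3 = int(abs_q >= 4)
    rem = (abs_q - 4) >> 1 if gt3 else None
    return (1, 1, par, gt3, rem)

def reconstruct(sig, gt1, par, gt3, rem):
    """Inverse mapping; bins that are not coded count as zero."""
    return sig + (gt1 or 0) + (par or 0) + 2 * ((gt3 or 0) + (rem or 0))
```

Because the parity is coded separately, the remainder covers two levels per step and therefore grows only half as fast as |q|.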

CABAC / Transform Coefficient Coding

template dclass (luma) dclass (chroma)

1. Sum of partially reconstructed values inside the local template (first pass: $q^* = \text{sig} + \text{gt1} + \text{par} + 2 \cdot \text{gt3}$)
2. Class dclass for the diagonal position (3 classes for luma, 2 classes for chroma)
3. For the special quantizer (TCQ): quantization state (0..3)

Summary
