Introductory Computational Science Introductory Computational Science Rubin Landau, EPIC/OSU 2006 Rubin Landau, EPIC/OSU 2006 1 1

High Performance Hardware, Memory & CPU High Performance Hardware, Memory & CPU

Rubin H. Landau Rubin H. Landau

With With

Sally Haerer and Scott Clark Sally Haerer and Scott Clark

Computational Physics for Undergraduates Computational Physics for Undergraduates

BS Degree Program: Oregon State University BS Degree Program: Oregon State University

“Engaging People in Cyber Infrastructure Engaging People in Cyber Infrastructure” Suppor Support by EPICS/NSF & OSU by EPICS/NSF & OSU

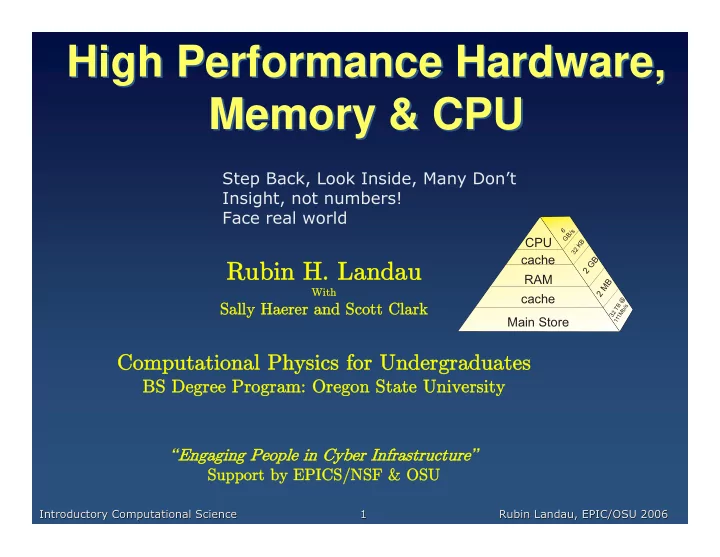

Main Store cache cache RAM CPU

32 TB @

1 1 1 M b / s

2 MB 2 GB

3 2 K B 6 GB/s

Step Back, Look Inside, Many Don’t Insight, not numbers! Face real world