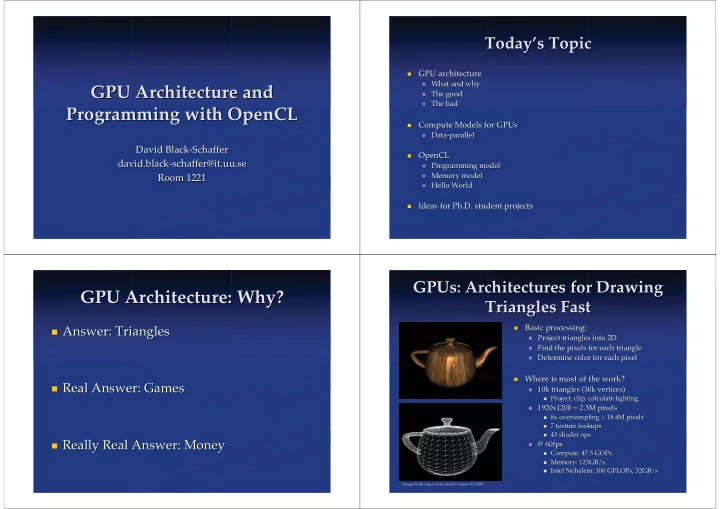

GPU Architecture and Programming with OpenCL

David Black-Schaffer

david.black-schaffer@it.uu.se

Room 1221

Today's Topic

- GPU architecture
  - What and why
  - The good
  - The bad
- Compute Models for GPUs
  - Data-parallel
- OpenCL
  - Programming model
  - Memory model
  - Hello World
- Ideas for Ph.D. student projects

GPU Architecture: Why?

- Answer: Triangles
- Real Answer: Games
- Really Real Answer: Money

GPUs: Architectures for Drawing Triangles Fast

- Basic processing:
  - Project triangles into 2D
  - Find the pixels for each triangle
  - Determine color for each pixel
- Where is most of the work?
  - 10k triangles (30k vertices)
    - Project, clip, calculate lighting
  - 1920x1200 = 2.3M pixels
    - 8x oversampling = 18.4M pixels
    - 7 texture lookups
    - 43 shader ops
  - @ 60fps
    - Compute: 47.5 GOPs
    - Memory: 123 GB/s
- Intel Nehalem: 106 GFLOPs, 32 GB/s

Images from caig.cs.nctu.edu.tw/course/CG2007
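
The per-pixel totals on this slide can be reproduced with a few lines of arithmetic. The sketch below is a back-of-the-envelope check, not part of the original slides. The function names are made up for illustration, and the 16 bytes per texture lookup (for example, four 32-bit channels) is an assumption, chosen because it roughly reproduces the slide's memory figure.

```python
# Back-of-the-envelope check of the pixel workload numbers on the slide.
# All inputs except BYTES_PER_LOOKUP come from the slide itself.

WIDTH, HEIGHT = 1920, 1200   # screen resolution
OVERSAMPLING = 8             # samples per pixel
TEXTURE_LOOKUPS = 7          # texture lookups per sample
SHADER_OPS = 43              # shader operations per sample
FPS = 60                     # frames per second
BYTES_PER_LOOKUP = 16        # ASSUMPTION: not stated on the slide

NEHALEM_GBPS = 32            # Intel Nehalem memory bandwidth from the slide


def samples_per_frame(width=WIDTH, height=HEIGHT, oversampling=OVERSAMPLING):
    """Number of shaded samples in one frame."""
    return width * height * oversampling


def compute_ops_per_second(ops=SHADER_OPS, fps=FPS):
    """Shader operations needed per second."""
    return samples_per_frame() * ops * fps


def memory_bytes_per_second(lookups=TEXTURE_LOOKUPS, fps=FPS,
                            bytes_per_lookup=BYTES_PER_LOOKUP):
    """Texture traffic per second under the assumed lookup size."""
    return samples_per_frame() * lookups * fps * bytes_per_lookup


if __name__ == "__main__":
    print(f"Pixels per frame:  {WIDTH * HEIGHT / 1e6:.2f} M")
    print(f"Samples per frame: {samples_per_frame() / 1e6:.2f} M")
    print(f"Compute:           {compute_ops_per_second() / 1e9:.2f} GOPs")
    gbps = memory_bytes_per_second() / 1e9
    print(f"Memory:            {gbps:.2f} GB/s")
    print(f"vs. Nehalem:       {gbps / NEHALEM_GBPS:.2f}x its bandwidth")
```

With these inputs the compute demand comes to about 47.55 GOPs and the texture traffic to about 123.9 GB/s, which the slide appears to truncate to 47.5 and 123. Under the assumed lookup size, the memory demand is roughly 3.9 times the 32 GB/s quoted for Nehalem, which suggests bandwidth rather than raw arithmetic is where the CPU falls short.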