
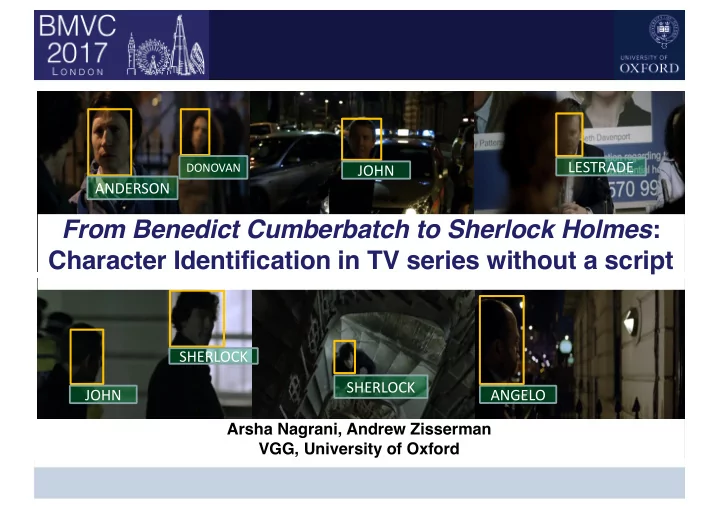


From Benedict Cumberbatch to Sherlock Holmes: Character Identification in TV series without a script

Arsha Nagrani, Andrew Zisserman
VGG, University of Oxford

Goal: Identify …


VGG, Dept. of Engineering Science, University of Oxford


Useful for:
- Content-based browsing, e.g. ‘Fast-forward to when Sherlock first meets John’
- One step closer to story understanding


Cour et al., 2009; Bojanowski et al., 2013; Tapaswi et al., 2016


Actor images are usually taken from red-carpet photoshoots:
- Frontal
- Good lighting
- Standard expressions

Benedict Cumberbatch (actor) vs. Sherlock Holmes (character)


J. S. Chung, A. Zisserman, "Out of time: automated lip sync in the wild", Asian Conference …
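The cited lip-sync work scores how well audio and mouth motion agree over a range of temporal offsets. A minimal sketch of turning per-offset distances into a sync offset and a confidence (the median distance minus the minimum distance), assuming the audio-visual embedding distances have already been computed. Using this as a "is this face speaking?" test, and the value of `SPEAKING_THRESHOLD`, are hypothetical choices for illustration, not details taken from the slides.

```python
from statistics import median
from typing import Dict, Tuple

# Hypothetical threshold; a real value would need tuning on held-out data.
SPEAKING_THRESHOLD = 1.0


def sync_offset_and_confidence(distances: Dict[int, float]) -> Tuple[int, float]:
    """distances maps a temporal offset (in frames) to the mean distance
    between audio and mouth-region embeddings at that offset.

    Returns the offset with the smallest distance and a confidence equal to
    median(distances) - min(distances): a sharp minimum means the audio and
    the lip motion are clearly in sync."""
    best_offset = min(distances, key=distances.get)
    confidence = median(distances.values()) - distances[best_offset]
    return best_offset, confidence


def is_speaking(distances: Dict[int, float]) -> bool:
    """Hypothetical decision rule: treat a face as speaking when the sync
    confidence exceeds the (assumed) threshold."""
    _, confidence = sync_offset_and_confidence(distances)
    return confidence > SPEAKING_THRESHOLD
```

A flat distance profile (no clear minimum) yields a confidence near zero, which is the situation expected for a face that is visible but silent.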


Figure: audio CNN. Input: 300x512, computed from the raw audio signal. Convolutional layers C1 (7x7x96), C2 (5x5x256), C3 to C5 (3x3x256), with max pooling; fully connected layers FC6 (9x1), FC7, FC8, with average pooling.
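The layer listing gives kernel sizes (C1 7x7, C2 5x5, C3 to C5 3x3, FC6 9x1) but not strides or padding. A minimal shape-tracing sketch, assuming VGG-M-style strides and padding, an extra max-pool after C5 (`maxpool5`, hypothetical), and that the 300x512 input is 512 frequency bins by 300 time steps. Under these assumptions it shows why a 9x1 FC6 makes sense: it spans the whole remaining frequency axis, and average pooling then removes the dependence on clip length.

```python
from typing import List, Tuple

# (name, (kernel_h, kernel_w), (stride_h, stride_w), padding)
# Kernel sizes for C1-C5 follow the slide; all strides, padding values and
# the third max-pool ("maxpool5") are ASSUMED, VGG-M-style choices.
Layer = Tuple[str, Tuple[int, int], Tuple[int, int], int]
LAYERS: List[Layer] = [
    ("C1", (7, 7), (2, 2), 1),
    ("maxpool1", (3, 3), (2, 2), 0),
    ("C2", (5, 5), (2, 2), 1),
    ("maxpool2", (3, 3), (2, 2), 0),
    ("C3", (3, 3), (1, 1), 1),
    ("C4", (3, 3), (1, 1), 1),
    ("C5", (3, 3), (1, 1), 1),
    ("maxpool5", (5, 3), (3, 2), 0),  # hypothetical pooling before FC6
]


def out_size(n: int, kernel: int, stride: int, pad: int) -> int:
    """Output length along one axis for a conv/pool layer (floor rounding)."""
    return (n + 2 * pad - kernel) // stride + 1


def trace_shapes(freq: int, time: int) -> List[Tuple[str, int, int]]:
    """Return (layer, height, width) after each layer for a freq x time input."""
    h, w = freq, time
    shapes = []
    for name, (kh, kw), (sh, sw), pad in LAYERS:
        h, w = out_size(h, kh, sh, pad), out_size(w, kw, sw, pad)
        shapes.append((name, h, w))
    # FC6 applied as a 9x1 convolution: covers all 9 remaining frequency
    # rows (when h == 9), leaving a 1 x w map over time.
    h, w = out_size(h, 9, 1, 0), out_size(w, 1, 1, 0)
    shapes.append(("FC6", h, w))
    # Average pooling over the whole time axis gives a fixed-size output.
    shapes.append(("avgpool", h, 1))
    return shapes
```

With these assumed settings a 512 x 300 input reaches 9 x 8 after `maxpool5`, and a longer 512 x 500 input reaches 9 x 14; in both cases FC6 reduces the height to 1 and average pooling reduces the width to 1.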


Actor images: Benedict Cumberbatch, Martin Freeman, Rupert Graves

Cast lists are easily available on IMDB.
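The cast list fixes the set of candidate labels, and the actor images give a few labelled examples per cast member. A minimal sketch of labelling one face track from such examples, assuming every face has already been mapped to an embedding vector. The nearest-class-mean rule with cosine similarity is an illustrative stand-in, not necessarily the classifier used in this work, and the toy vectors in the test are made up.

```python
import math
from typing import Dict, List, Sequence, Tuple

Vector = Sequence[float]


def normalize(v: Vector) -> List[float]:
    """Scale a vector to unit L2 norm."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def mean(vectors: List[Vector]) -> List[float]:
    """Element-wise mean of equal-length vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def class_means(actor_images: Dict[str, List[Vector]]) -> Dict[str, List[float]]:
    """One unit-norm mean embedding per cast member."""
    return {name: normalize(mean(embs)) for name, embs in actor_images.items()}


def label_track(track: List[Vector], means: Dict[str, List[float]]) -> Tuple[str, float]:
    """Average the frame embeddings of a face track, then return the cast
    member whose mean embedding has the highest cosine similarity."""
    t = normalize(mean(track))
    scores = {name: sum(a * b for a, b in zip(t, m)) for name, m in means.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]
```

Averaging over the whole track, rather than labelling frames independently, lets well-posed frames outvote poorly lit or profile ones.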


Figure: per-sample accuracy vs. proportion of tracks. Panels: face(actor) AP: 0.98; face(character) AP: 0.99; face+voice(character) AP: 0.99.
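Curves of accuracy against the proportion of tracks are typically drawn by ranking tracks by classifier confidence and measuring accuracy on the most confident fraction. A minimal sketch of such a curve, assuming each track has a confidence score and a flag saying whether its predicted label is correct; the exact evaluation protocol behind the plots may differ.

```python
from typing import List, Tuple


def accuracy_vs_proportion(scores: List[float], correct: List[bool]) -> List[Tuple[float, float]]:
    """Sort tracks by decreasing confidence; for each k return
    (proportion of tracks kept = k / n, accuracy over the top-k tracks)."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    n = len(order)
    curve = []
    hits = 0
    for k, i in enumerate(order, start=1):
        hits += correct[i]  # True counts as 1, False as 0
        curve.append((k / n, hits / k))
    return curve
```

The right-most point of the curve is the plain accuracy over all tracks; the rest of the curve shows how much accuracy improves when only the more confident tracks are labelled.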


[2] O. M. Parkhi, E. Rahtu, A. Zisserman, "It's in the bag: Stronger supervision for automated face labelling", ICCV Workshop, 2015

Figure: per-sample accuracy vs. proportion of tracks, one panel per method.

| Method | AP |
|---|---|
| Bojanowski ’13 [1] | 0.75 |
| Parkhi ’15 [2] | 0.93 |
| Actor face only | 0.89 |
| Our method (final) | 0.96 |
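The methods are compared by average precision (AP). A minimal sketch of one common, non-interpolated definition, assuming tracks are ranked by confidence and a track counts as a positive when its predicted label is correct; the slides do not state which AP variant was used.

```python
from typing import List


def average_precision(scores: List[float], correct: List[bool]) -> float:
    """Non-interpolated AP: mean of precision@k over every rank k at which a
    correct prediction occurs, with tracks sorted by decreasing score."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    hits = 0
    precisions = []
    for k, i in enumerate(order, start=1):
        if correct[i]:
            hits += 1
            precisions.append(hits / k)
    return sum(precisions) / len(precisions) if precisions else 0.0
```

Because AP rewards putting correct labels ahead of wrong ones, a method can gain AP by producing better-calibrated confidences even when its raw accuracy changes little.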

[1] P. Bojanowski, F. Bach, I. Laptev, J. Ponce, C. Schmid, J. Sivic, "Finding actors and actions in movies", ICCV, 2013


- Small and very dark faces
- Extreme occlusion, in cases where the character is not speaking
- Backs of heads
