Filling the Performance Gap in Convolution Implementations for NVIDIA GPUs
Antonio J. Peña, Pedro Valero-Lara, Marc Jordà
GTC 2019 - San Jose
www.bsc.es

Agenda
● Intro
● Background
  ○ Convolutional Neural Networks
  ○ Convolution operation
  ○ Common characteristics of CNNs
● cuDNN convolution algorithms survey
● Design
  ○ Data reuse present in conv layers
  ○ Data layout
  ○ Algorithm stages
● Performance evaluation
● Conclusions & ongoing work

Introduction
● Interest in neural networks resurged in recent years
  ○ Deep Neural Networks (DNNs)
● Made possible by
  ○ Availability of very large annotated datasets (e.g. ImageNet)
  ○ High-throughput heterogeneous systems
● Convolutional Neural Networks (CNNs)
  ○ High accuracy in image classification benchmarks
  ○ Several algorithms (Direct, GEMM, FFT, Winograd)
● Our convolution implementation for NVIDIA GPUs
  ○ Based on direct application of the convolution formula
  ○ Efficiently exploits in-core memories and global memory accesses
Figure: AlexNet structure

Convolutional Neural Networks (CNNs)
● Inclusion of convolutional layers
● Convolutional layer
  ○ Weights are grouped in filters
  ○ Filters are shared by several output elements
  ○ Uses convolution operations as part of its computation
● Advantage over fully-connected layers
  ○ Storage and computational cost does not depend on input or output size
    - Number of filters and their size are a design choice
  ○ Translation invariance
    - Filters "see" different parts of the input
● Serves as automatic feature extractor
  ○ Filters are trained to detect relevant patterns
Figure: Fully-connected layer, with input and weights flattened: $\mathrm{Out}_i = \mathrm{ActivationFunc}\left(\sum_{j=0}^{\#\mathrm{In}} W_{i,j} \cdot \mathrm{In}_j + \mathrm{bias}\right)$
Figure: Convolutional layer: $\mathrm{Output} = \mathrm{ActivationFunc}(\mathrm{ConvolutionOps}(\mathrm{Input}, \mathrm{Filters}) + \mathrm{bias})$
Figure: Trained filters in the 1st convolutional layer of AlexNet
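The storage argument on this slide can be checked with a small calculation. The sketch below counts weights for a fully-connected layer and for a convolutional layer; the function names and layer sizes are illustrative assumptions, not values from the talk, and bias terms are left out for brevity.

```python
def fc_weights(num_inputs, num_outputs):
    # Fully-connected: one weight per (input element, output element) pair.
    return num_inputs * num_outputs


def conv_weights(num_filters, filter_h, filter_w, depth):
    # Convolutional: weights are grouped in filters that are shared by all
    # output positions, so the count does not depend on the input X/Y size.
    return num_filters * filter_h * filter_w * depth


# Hypothetical layer: 32x32x3 input mapped to a 32x32x16 output.
fc = fc_weights(32 * 32 * 3, 32 * 32 * 16)
conv = conv_weights(16, 3, 3, 3)
print("fully-connected weights:", fc)
print("convolutional weights:  ", conv)
```

Doubling the input X/Y size in this sketch changes `fc` but leaves `conv` unchanged, which is the point the slide makes.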

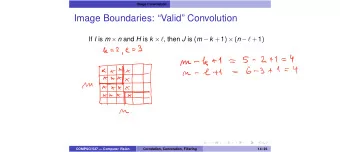

Convolution Operation
● Output elements are the scalar product of one filter and a subvolume of the input
  ○ Input and filter depth are equal
  ○ Different input subvolume for each output element (dark blue highlight in the figure)
● Output planes are the convolution of one input with one of the filters
  ○ Output depth = number of filters
  ○ Filter is translated over the X and Y dimensions
● Convolution parameters
  ○ # of inputs (aka batch size, N)
  ○ Input X, Y size (H, W)
  ○ # of filters (Nf)
  ○ Filter X, Y size (aka receptive field, Hf, Wf)
  ○ Depth
  ○ Stride
  ○ Padding
  ○ Dilation
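The description above can be written down directly as nested loops. This is a minimal pure-Python sketch for a single input, assuming no padding and no dilation; the function name and the `[depth][y][x]` nesting are choices made here for illustration, and it is not the GPU implementation presented in the talk. As is usual in CNNs, the filter is not flipped.

```python
def conv2d_direct(inp, filters, stride=1):
    """inp[c][y][x], filters[f][c][y][x] -> out[f][y][x] (no padding, no dilation)."""
    depth, in_h, in_w = len(inp), len(inp[0]), len(inp[0][0])
    f_h, f_w = len(filters[0][0]), len(filters[0][0][0])
    out_h = (in_h - f_h) // stride + 1
    out_w = (in_w - f_w) // stride + 1
    out = []
    for flt in filters:  # one output plane per filter
        plane = []
        for oy in range(out_h):
            row = []
            for ox in range(out_w):
                acc = 0
                # Scalar product of the filter with one input subvolume.
                for c in range(depth):
                    for fy in range(f_h):
                        for fx in range(f_w):
                            acc += inp[c][oy * stride + fy][ox * stride + fx] * flt[c][fy][fx]
                row.append(acc)
            plane.append(row)
        out.append(plane)
    return out


# 1 input of 5 x 5 x 3 and 2 filters of 3 x 3 x 3, all ones.
ones_input = [[[1] * 5 for _ in range(5)] for _ in range(3)]
ones_filters = [[[[1] * 3 for _ in range(3)] for _ in range(3)] for _ in range(2)]
result = conv2d_direct(ones_input, ones_filters)
print(len(result), len(result[0]), len(result[0][0]))
```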

Convolution Operation - Example
● Example convolution with 1 input and 2 filters
  ○ 1 input of 5 x 5 x 3
  ○ 2 filters of 3 x 3 x 3
  ○ Stride X and Y = 1
  ○ 1 output of 3 x 3 x 2 (output Z is the number of filters)
Figure: Input (5x5x3) * Filters (3x3x3) = Output (3x3x2)
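The output shape in this example follows from the standard output-size formula for one spatial dimension. The helper below is a sketch with a name chosen here; the padding and dilation arguments are included only because the earlier slide lists them as convolution parameters.

```python
def conv_output_size(in_size, filter_size, stride=1, padding=0, dilation=1):
    # Extent covered by the filter once dilation is taken into account.
    effective = dilation * (filter_size - 1) + 1
    return (in_size + 2 * padding - effective) // stride + 1


out_x = conv_output_size(5, 3)  # input X = 5, filter X = 3, stride 1
out_y = conv_output_size(5, 3)  # input Y = 5, filter Y = 3, stride 1
num_filters = 2                 # output Z is the number of filters
print((out_x, out_y, num_filters))  # (3, 3, 2)
```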

Convolution parameter values in CNNs
● Parameters from 5 well-known CNNs
  ○ AlexNet, GoogleNet, Resnet50, SqueezeNet, VGG19
● Overall structure
  ○ Initial layers have large input X/Y size, small depth
  ○ Final layers have small input X/Y size, large depth
Figure: Inputs' shape at different layer levels of the CNN

Convolution parameter values in CNNs (continued)
● Padding to maintain X/Y size
  ○ Input X/Y size reduction is done with pooling layers
Figure: Zero-padding of half of the filter size (Wf / 2) keeps the output X & Y size equal to the input
Figure: Pooling applies a reduction operation (e.g. avg, max, …) to each tile; pooling of 2x2 tiles halves the X & Y size
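Both figure notes can be reproduced in a few lines. The sketch below assumes stride 1 and odd filter sizes for the padding check, and non-overlapping 2x2 tiles with a max reduction for pooling; the function names and the input size of 56 are hypothetical.

```python
def same_padding_output(in_size, filter_size):
    padding = filter_size // 2  # half the filter size, rounded down
    return in_size + 2 * padding - filter_size + 1  # stride = 1


def max_pool_2x2(plane):
    # Reduce each non-overlapping 2x2 tile to its maximum.
    return [[max(plane[y][x], plane[y][x + 1], plane[y + 1][x], plane[y + 1][x + 1])
             for x in range(0, len(plane[0]) - 1, 2)]
            for y in range(0, len(plane) - 1, 2)]


for f in (1, 3, 5):
    print(f, same_padding_output(56, f))

plane = [[1, 2, 3, 4],
         [5, 6, 7, 8],
         [9, 10, 11, 12],
         [13, 14, 15, 16]]
print(max_pool_2x2(plane))
```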

Convolution parameter values in CNNs (continued)
● Stride = 1 for most convolutions
  ○ 95% of all convolution configurations
● Filter sizes are small
  ○ 1x1, 3x3, 5x5, …
Figure: Characteristics of convolutional layers with stride=1 in the selected CNNs

Convolution parameter values in CNNs (continued)
● Convolutions with 1x1 filters are a special case
  ○ Reduce the depth of inputs to reduce the computational cost of the following convolutional layer (with larger filters)
Figure: Inception module from GoogleNet (branches of 1x1, 3x3, and 5x5 convolutions and pooling, joined by concat)
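A rough multiply-accumulate count shows why a 1x1 convolution placed before a larger filter pays off. The layer sizes below are hypothetical and only meant to illustrate the effect; `conv_macs` is a helper defined here, and padding is assumed to keep the output X/Y size equal to the input.

```python
def conv_macs(out_h, out_w, num_filters, filter_h, filter_w, depth):
    # One scalar product of filter_h * filter_w * depth terms per output element.
    return out_h * out_w * num_filters * filter_h * filter_w * depth


# Hypothetical layer: 28x28 input of depth 192, 32 filters of 5x5.
direct = conv_macs(28, 28, 32, 5, 5, 192)

# Same layer preceded by a 1x1 convolution that reduces the depth to 16.
reduced = conv_macs(28, 28, 16, 1, 1, 192) + conv_macs(28, 28, 32, 5, 5, 16)

print("5x5 only:      ", direct)
print("1x1 then 5x5:  ", reduced)
```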

Convolution algorithms in cuDNN
GEMM-based Algorithm
● Generate two intermediate matrices, multiply them, and reshape the result
  ○ Filters matrix → flattened filters as rows
  ○ Inputs matrix → elements of input subvolumes as columns (im2col in Matlab)
● Pros
  ○ Can exploit existing high-performance GEMM libs (MKL, cuBLAS, …)
● Cons
  ○ Requires extra memory for intermediate matrices
  ○ Inputs' intermediate matrix is larger than the inputs themselves
Figure: Image from Chetlur et al., cuDNN: Efficient primitives for deep learning
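The three steps (build the two matrices, multiply, reshape) can be sketched in pure Python. This is an illustration of the explicit GEMM approach for one input with stride 1 and no padding, not cuDNN's code; all function names are chosen here, and the naive `matmul` stands in for a tuned GEMM library.

```python
def im2col(inp, f_h, f_w):
    """inp[c][y][x] -> matrix with one column per output position."""
    depth, in_h, in_w = len(inp), len(inp[0]), len(inp[0][0])
    out_h, out_w = in_h - f_h + 1, in_w - f_w + 1
    rows = []
    for c in range(depth):
        for fy in range(f_h):
            for fx in range(f_w):
                rows.append([inp[c][oy + fy][ox + fx]
                             for oy in range(out_h) for ox in range(out_w)])
    return rows, out_h, out_w


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def conv2d_gemm(inp, filters):
    f_h, f_w = len(filters[0][0]), len(filters[0][0][0])
    cols, out_h, out_w = im2col(inp, f_h, f_w)
    # Filters matrix: each filter flattened into one row, in the same
    # (c, fy, fx) order as the rows produced by im2col.
    flt_rows = [[w for plane in flt for row in plane for w in row] for flt in filters]
    flat = matmul(flt_rows, cols)
    # Reshape each result row back into an out_h x out_w output plane.
    return [[r[y * out_w:(y + 1) * out_w] for y in range(out_h)] for r in flat]


inp = [[[1, 2, 3], [4, 5, 6], [7, 8, 9]]]  # depth 1, 3x3
filters = [[[[1, 0], [0, 1]]]]             # 1 filter, depth 1, 2x2
cols, _, _ = im2col(inp, 2, 2)
print(conv2d_gemm(inp, filters))
print("input elements:", 9, "intermediate matrix elements:", len(cols) * len(cols[0]))
```

Even in this tiny case the intermediate inputs matrix holds more elements than the input itself, because overlapping subvolumes are copied once per output position.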

Convolution algorithms in cuDNN
Arithmetic strength reduction approaches
● Algorithmic transformation to trade multiplications for additions
  ○ Additions are faster to execute than multiplications
● Winograd
  ○ Used in fast FIR filter algorithms in signal processing
  ○ Inputs: g, d
  ○ Coefficient matrices: A, B, G
● Fast Fourier Transform
  ○ FFT + Transformation + Inverse FFT
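The slide does not spell out the transforms, so the sketch below uses the widely known one-dimensional F(2,3) case as an assumption: two outputs of a 3-tap filter `g` over four inputs `d`, computed with 4 multiplications instead of the 6 a direct evaluation needs. The transforms are written element-wise rather than with the A, B, G matrices, and the function names are chosen here.

```python
def winograd_f23(d, g):
    """Two outputs of a 3-tap FIR filter g over 4 inputs d, using 4 multiplications."""
    # Filter transform (can be precomputed once per filter).
    u0 = g[0]
    u1 = (g[0] + g[1] + g[2]) / 2
    u2 = (g[0] - g[1] + g[2]) / 2
    u3 = g[2]
    # Input transform: additions and subtractions only.
    v0, v1, v2, v3 = d[0] - d[2], d[1] + d[2], d[2] - d[1], d[1] - d[3]
    # The 4 element-wise multiplications.
    m0, m1, m2, m3 = u0 * v0, u1 * v1, u2 * v2, u3 * v3
    # Output transform: additions and subtractions only.
    return [m0 + m1 + m2, m1 - m2 - m3]


def fir_direct(d, g):
    # Reference: 2 outputs x 3 taps = 6 multiplications.
    return [sum(d[i + k] * g[k] for k in range(3)) for i in range(2)]


d = [1.0, 2.0, 3.0, 4.0]
g = [1.0, 1.0, 1.0]
print(winograd_f23(d, g), fir_direct(d, g))
```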

cuDNN Convolution Algorithms – Performance survey
As part of our study, we did a performance survey of cuDNN convolution algorithms
● 3 convolution algorithms
  ○ GEMM, Winograd, FFT
  ○ Total of 7 variants: 3 of GEMM (1 explicit input transformation, 2 implicit), 2 of Winograd, and 2 of FFT
● Convolution configurations from well-known CNNs: AlexNet, GoogleNet, Resnet50, SqueezeNet, VGG19
● cuDNN 6 on V100-SXM2 (Volta)
● Performance normalized to the best performing algorithm for each convolution configuration
  ○ Best algorithm is at Y=1
  ○ X axis labels are <inputXY>-<batch size>-<filter XY>-<#filters>-<depth>
Figure: Normalized performance plots for 1x1, 3x3, and 5x5 filters

cuDNN Convolution Algorithms – Performance survey
Convolution configurations with 1x1 filters (only GEMM variants support this filter size)
● The implicit variants clearly outperform explicit GEMM
  ○ Explicit GEMM is more than 1.5x slower for most of the configurations
● GEMM-implicit-precomp is better when the batch size is > 1

cuDNN Convolution Algorithms – Performance survey
Configurations with 3x3 filters
● Winograd is clearly the best
  ○ Initially designed for this filter size
● GEMM-impl-precomp outperforms it when depth is small and input X&Y size is large
Configurations with 5x5 filters
● GEMM-impl-precomp is the best performing
● FFT gets close only in a few cases
  ○ Better suited for larger filter sizes
Figure note: Best is the other Winograd variant, not shown to reduce clutter

Design

Design – Data reuse
The convolutions of a convolutional layer expose two levels of data reuse
At the layer level
● A batch of inputs is convolved with all the layer filters
  ○ Each filter is used with all the inputs
  ○ Each input is used with all the filters
Figure: Inputs * Filters = Outputs

Design – Data reuse (continued)
At the convolution level
● Input elements reuse
  ○ Not constant: input z-rows in the center are reused more
● Filter elements reuse
  ○ Each filter z-row is reused the same number of times
  ○ Inputs are usually larger => more reuse of filter z-rows
  ○ If stride = 1 (common in CNNs), reuse is done by contiguous subvolumes
Figure: Filter elements reuse example. Input elements that reuse two Z-rows of the filter (in matching colors) in a convolution with stride=1
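The uneven reuse of input elements can be made concrete by counting how many output elements read each input X/Y position, which is the reuse of the z-row at that position. The sketch below assumes no padding; the function name and the 5x5 input with a 3x3 filter are illustrative choices.

```python
def input_reuse_counts(in_h, in_w, f_h, f_w, stride=1):
    """How many output elements read each input X/Y position (no padding)."""
    counts = [[0] * in_w for _ in range(in_h)]
    for oy in range(0, in_h - f_h + 1, stride):
        for ox in range(0, in_w - f_w + 1, stride):
            for fy in range(f_h):
                for fx in range(f_w):
                    counts[oy + fy][ox + fx] += 1
    return counts


counts = input_reuse_counts(5, 5, 3, 3)
for row in counts:
    print(row)

# Each filter z-row is used once per output position, so its reuse is constant.
filter_reuse = (5 - 3 + 1) * (5 - 3 + 1)
print("filter z-row reuse:", filter_reuse)
```

Corner positions are read once while the center is read by every output element, whereas every filter z-row is reused the same number of times.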

Design – Data layout
Flattened representation of the 4-D tensors
● How data are stored in memory
● Denoted as a four-letter acronym, one letter per dimension
  ○ Right-most dim elements are contiguous in memory
● Dimensions
  ○ N: batch
  ○ C: depth
  ○ W: width
  ○ H: height
● Common layouts in CNNs
  ○ NCHW
  ○ NHWC
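The two layouts differ only in how a 4-D index is flattened to a linear offset. The helpers below are a sketch with names chosen here; the tensor sizes are hypothetical.

```python
def offset_nchw(n, c, h, w, C, H, W):
    # W is the right-most dimension: neighbours along w are contiguous.
    return ((n * C + c) * H + h) * W + w


def offset_nhwc(n, c, h, w, C, H, W):
    # C is the right-most dimension: neighbours along c are contiguous.
    return ((n * H + h) * W + w) * C + c


C, H, W = 3, 4, 5  # hypothetical depth, height, and width
print("NCHW step along w:", offset_nchw(0, 0, 0, 1, C, H, W) - offset_nchw(0, 0, 0, 0, C, H, W))
print("NCHW step along c:", offset_nchw(0, 1, 0, 0, C, H, W) - offset_nchw(0, 0, 0, 0, C, H, W))
print("NHWC step along w:", offset_nhwc(0, 0, 0, 1, C, H, W) - offset_nhwc(0, 0, 0, 0, C, H, W))
print("NHWC step along c:", offset_nhwc(0, 1, 0, 0, C, H, W) - offset_nhwc(0, 0, 0, 0, C, H, W))
```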

Design – Data layout
Considering data layout + data reuse + coalescing
If we have
● NCHW layout
● Warps mapped along W dimension
● Stride = 1
We get
● Good coalescing loading inputs
  ○ Fully-coalesced warps
  ○ Some warps may have a gap (overhead similar to misaligned accesses)
  ○ No need for layout transformations before the actual computation
● Threads in a warp reuse filter data
  ○ Exploit shared mem and shuffle instructions
  ○ Faster mem access
Figure: Example with warp size = 4
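The coalescing claim can be illustrated by listing the element offsets that the threads of one warp would read when the warp is mapped along W. This is a host-side Python model with names chosen here, not GPU code; it uses the slide's example warp size of 4 (NVIDIA warps have 32 threads), a single input, and hypothetical tensor sizes.

```python
WARP_SIZE = 4  # matches the slide's example; real NVIDIA warps have 32 threads


def warp_addresses(layout, c, h, w0, C, H, W):
    """Element offsets read by one warp whose threads cover w0 .. w0 + WARP_SIZE - 1."""
    if layout == "NCHW":
        return [(c * H + h) * W + (w0 + t) for t in range(WARP_SIZE)]
    if layout == "NHWC":
        return [(h * W + (w0 + t)) * C + c for t in range(WARP_SIZE)]
    raise ValueError(layout)


def is_contiguous(addrs):
    # Consecutive threads touching consecutive elements can be coalesced.
    return all(b - a == 1 for a, b in zip(addrs, addrs[1:]))


for layout in ("NCHW", "NHWC"):
    addrs = warp_addresses(layout, c=1, h=2, w0=0, C=3, H=8, W=8)
    print(layout, addrs, "contiguous" if is_contiguous(addrs) else "strided")
```

With NCHW the four threads read adjacent elements, while with NHWC the same mapping produces a stride equal to the depth.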
