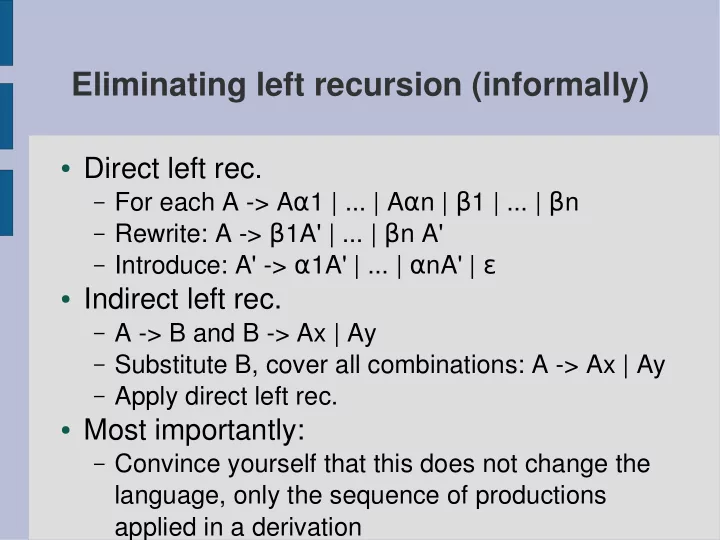

Eliminating left recursion (informally)

- Direct left recursion:

– For each A -> Aα1 | ... | Aαn | β1 | ... | βm, where no βj begins with A

– Rewrite: A -> β1 A' | ... | βm A'

– Introduce a new nonterminal: A' -> α1 A' | ... | αn A' | ε

- Indirect left recursion:

– Example: A -> B and B -> Ax | Ay, so A is left-recursive through B (A => B => Ax)

– Substitute B into A, covering all combinations: A -> Ax | Ay

– Then apply the direct left-recursion rewrite to A

- Most importantly:

– Convince yourself that this does not change the language generated by the grammar