Einführung in Visual Computing (Introduction to Visual Computing)

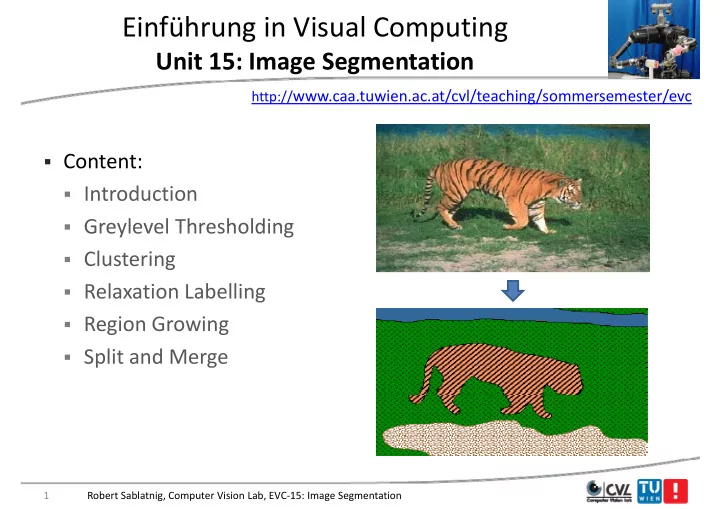

Unit 15: Image Segmentation

http://www.caa.tuwien.ac.at/cvl/teaching/sommersemester/evc

- Content:

- Introduction

- Greylevel Thresholding

- Clustering

- Relaxation Labelling

- Region Growing

- Split and Merge

Robert Sablatnig, Computer Vision Lab, EVC‐15: Image Segmentation