4/10/08 1

CSCI 5832 Natural Language Processing

Jim Martin Lecture 20

Today 4/3

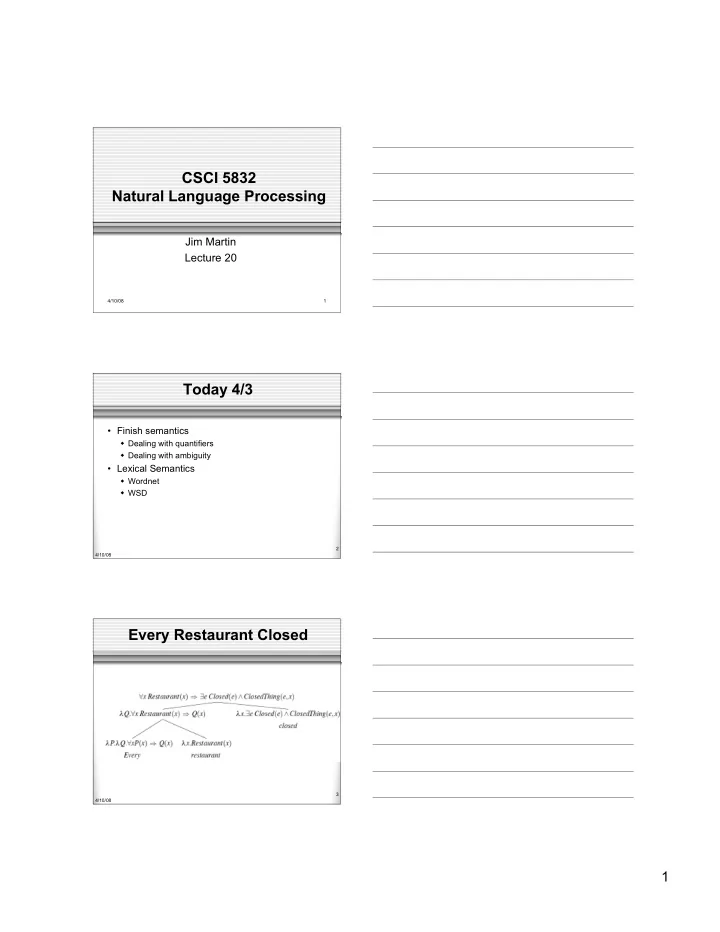

- Finish semantics
  - Dealing with quantifiers
  - Dealing with ambiguity
- Lexical Semantics
  - WordNet
  - WSD

Every Restaurant Closed



- ... positions to the right and left of the target word
- ... speech
- ... window regardless of position
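
The surviving fragments above appear to describe two feature types commonly used for supervised WSD: features tied to specific positions around the target word (word identity and, presumably, part of speech), and features recording which words occur anywhere in a window regardless of position. A minimal sketch of both follows. The function names, the window sizes, the `<pad>` marker, the example sentence, its POS tags, and the vocabulary are all illustrative assumptions, not taken from the slides.

```python
def collocational_features(tokens, tags, i, k=2):
    """Word and POS tag at each fixed offset -k..+k around tokens[i].

    Position matters here: the word at offset -1 and the same word at
    offset +1 produce different features.
    """
    feats = {}
    for off in range(-k, k + 1):
        if off == 0:
            continue  # skip the target word itself
        j = i + off
        in_range = 0 <= j < len(tokens)
        # "<pad>" is a hypothetical marker for positions past the sentence edge
        feats[f"w[{off:+d}]"] = tokens[j] if in_range else "<pad>"
        feats[f"pos[{off:+d}]"] = tags[j] if in_range else "<pad>"
    return feats


def bag_of_words_features(tokens, i, vocab, k=3):
    """Binary vector: 1 if a vocabulary word occurs anywhere within
    k tokens of tokens[i], regardless of its position."""
    window = set(tokens[max(0, i - k):i] + tokens[i + 1:i + 1 + k])
    return [1 if w in window else 0 for w in vocab]


# Hypothetical example sentence with hand-assigned POS tags.
tokens = ["an", "electric", "guitar", "and", "bass", "player", "stand", "off"]
tags = ["DT", "JJ", "NN", "CC", "NN", "NN", "VB", "RB"]
target = tokens.index("bass")

colloc = collocational_features(tokens, tags, target, k=2)
bow = bag_of_words_features(tokens, target, ["fishing", "guitar", "player", "sound"], k=3)
```

In this sketch `colloc` records that "and" sits immediately to the left of the target and "player" immediately to the right, while `bow` only records that "guitar" and "player" occur somewhere in the window.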


1. bass - (the lowest part of the musical range)
2. bass, bass part - (the lowest part in polyphonic music)
3. bass, basso - (an adult male singer with the lowest voice)
4. sea bass, bass - (flesh of lean-fleshed saltwater fish of the family Serranidae)
5. freshwater bass, bass - (any of various North American lean-fleshed freshwater fishes especially of the genus Micropterus)
6. bass, bass voice, basso - (the lowest adult male singing voice)
7. bass - (the member with the lowest range of a family of musical instruments)
8. bass - (nontechnical name for any of numerous edible marine and freshwater spiny-finned fishes)
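
To make the WSD problem concrete, the sense list above can be treated as a tiny sense inventory and a context scored against each gloss. The sketch below uses simplified Lesk-style gloss overlap, chosen here purely for illustration; the slides as extracted do not say which method the lecture uses. The stopword list, tokenizer, tie-breaking rule, and example contexts are assumptions.

```python
import re

# Glosses copied from the sense list above, keyed by the list's own numbers.
BASS_GLOSSES = {
    1: "the lowest part of the musical range",
    2: "the lowest part in polyphonic music",
    3: "an adult male singer with the lowest voice",
    4: "flesh of lean-fleshed saltwater fish of the family Serranidae",
    5: "any of various North American lean-fleshed freshwater fishes "
       "especially of the genus Micropterus",
    6: "the lowest adult male singing voice",
    7: "the member with the lowest range of a family of musical instruments",
    8: "nontechnical name for any of numerous edible marine and freshwater "
       "spiny-finned fishes",
}

# Small hand-picked stopword list (an assumption, not from the source).
STOPWORDS = {"the", "of", "a", "an", "in", "with", "for", "any", "and",
             "to", "on", "he", "she", "is", "was"}


def content_words(text):
    """Lowercase the text, keep hyphenated words whole, drop stopwords."""
    tokens = re.findall(r"[a-z]+(?:-[a-z]+)*", text.lower())
    return {t for t in tokens if t not in STOPWORDS}


def simplified_lesk(context, glosses):
    """Return (best_sense, scores), where each score is the number of
    content words shared by the context and that sense's gloss.
    Ties go to the lowest sense number."""
    ctx = content_words(context)
    scores = {sense: len(ctx & content_words(gloss))
              for sense, gloss in glosses.items()}
    best = max(scores, key=lambda s: (scores[s], -s))
    return best, scores


fish_sense, fish_scores = simplified_lesk(
    "he caught an edible freshwater bass", BASS_GLOSSES)
voice_sense, voice_scores = simplified_lesk(
    "the singer has the lowest male voice", BASS_GLOSSES)
```

With these two contexts, the first selects sense 8 (it shares "edible" and "freshwater" with that gloss) and the second selects sense 3. Exact word overlap is brittle: "fish" would not match "fishes" here, which is one reason practical systems add stemming or richer features.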
