Motion models CS 4495 Computer Vision – A. Bobick

Aaron Bobick School of Interactive Computing


Outline

Last time: dense motion: optic flow


Many slides adapted from S. Seitz, R. Szeliski, M. Pollefeys, K. Grauman and others…


Will start by estimating motion of each pixel separately. Then will consider motion of entire image.


How to estimate pixel motion from image I(x,y,t) to I(x,y,t+1)?

– given a pixel in I(x,y,t), look for nearby pixels of the same color in I(x,y,t+1)

Key assumptions

– For grayscale images, this is brightness constancy

This is called the optical flow problem

Figure: consecutive frames $I(x,y,t)$ and $I(x,y,t+1)$.

$$
\begin{aligned}
0 &= I(x+u,\, y+v,\, t+1) - I(x,y,t) \\
  &\approx I(x,y,t+1) + I_x u + I_y v - I(x,y,t) \\
  &\approx [\,I(x,y,t+1) - I(x,y,t)\,] + I_x u + I_y v \\
  &\approx I_t + I_x u + I_y v \\
  &\approx I_t + \nabla I \cdot \langle u, v \rangle
\end{aligned}
$$

In the limit as u and v go to zero, this becomes exact.

Brightness constancy constraint equation:

$$0 = I_t + \nabla I \cdot \langle u, v \rangle$$


Intuitively, what does this constraint mean?

2 unknowns, one equation:

$$0 = I_t + \nabla I \cdot \langle u, v \rangle$$

Figure: an edge, its gradient direction, and the flow vectors $(u,v)$, $(u',v')$, and $(u+u',v+v')$. If $(u,v)$ satisfies the equation, so does $(u+u',v+v')$ whenever $\nabla I \cdot \langle u', v' \rangle = 0$, that is, whenever $(u',v')$ is parallel to the edge.

Solution: solve least squares problem
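A minimal sketch of that least squares solve, assuming (as in Lucas-Kanade) that the flow $(u, v)$ is constant over a small window, so every pixel in the window contributes one brightness constancy equation $I_x u + I_y v = -I_t$. The function name `lucas_kanade_window` and the use of NumPy are illustrative choices, not something taken from the slides.

```python
import numpy as np

def lucas_kanade_window(Ix, Iy, It):
    """Least squares flow (u, v) for one window of image derivatives."""
    # One row [Ix, Iy] per pixel, right-hand side -It.
    A = np.stack([Ix.ravel(), Iy.ravel()], axis=1)
    b = -It.ravel()
    # Solves min ||A d - b||^2, equivalent to (A^T A) d = A^T b
    # when A^T A is invertible.
    d, *_ = np.linalg.lstsq(A, b, rcond=None)
    return d  # array [u, v]
```

If the window only contains gradients in one direction, $A^T A$ is singular; `np.linalg.lstsq` then returns the minimum-norm solution, which is the flow component along the gradient.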

Figure label: the eigenvector of $A^T A$ with the larger eigenvalue points in the direction of fastest intensity change.

tracking features – maybe SIFT – more later….


Iterative Lucas-Kanade Algorithm

1. Estimate velocity at each pixel by solving Lucas-Kanade equations
2. Warp $I_t$ towards $I_{t+1}$ using the estimated flow field
3. Repeat until convergence
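The three steps can be sketched in a deliberately reduced setting: a 1D signal with one global displacement d instead of a per-pixel flow field. The function name `iterative_lk_1d`, the linear-interpolation warp via `np.interp`, and the stopping tolerance are assumptions made for this illustration.

```python
import numpy as np

def iterative_lk_1d(f0, f1, n_iters=20, tol=1e-4):
    """Estimate one displacement d such that f1(x) is approximately f0(x - d)."""
    x = np.arange(f0.size, dtype=float)
    d = 0.0  # initial guess
    for _ in range(n_iters):
        # Step 2: warp f0 towards f1 with the current estimate.
        warped = np.interp(x - d, x, f0)
        fx = np.gradient(warped)   # spatial derivative of the warped signal
        ft = f1 - warped           # remaining temporal difference
        denom = np.sum(fx * fx)
        if denom < 1e-12:          # no gradient, nothing to estimate
            break
        # Step 1: least squares solution of fx * delta + ft = 0.
        delta = -np.sum(fx * ft) / denom
        d += delta
        # Step 3: repeat until the update is negligible.
        if abs(delta) < tol:
            break
    return d

x = np.arange(200, dtype=float)
f0 = np.exp(-((x - 100.0) ** 2) / (2 * 10.0 ** 2))
f1 = np.exp(-((x - 103.5) ** 2) / (2 * 10.0 ** 2))  # f0 shifted by 3.5 samples
print(iterative_lk_1d(f0, f1))  # should come out close to 3.5
```

Each pass only needs the small correction that is left after warping, which is why the linearized constraint becomes more accurate as the iterations proceed.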

Figure sequence: 1D illustration of the iterations around x0, showing at each step the initial guess, the estimate, and the update (using d for displacement here instead of u).

Temporal aliasing causes ambiguities in optical flow because images can have many pixels with the same intensity. I.e., how do we know which ‘correspondence’ is correct?

Figure: two cases comparing the actual shift and the estimated shift: nearest match is correct (no aliasing); nearest match is incorrect (aliasing).

To overcome aliasing: coarse-to-fine estimation.

Figure: Gaussian pyramids of image 1 and image 2. A displacement of u = 10 pixels at full resolution becomes u = 5, 2.5, and 1.25 pixels at successively coarser levels.

Figure: coarse-to-fine estimation on the Gaussian pyramids of image I and image J. Run iterative L-K at the coarsest level, warp and upsample the flow, run iterative L-K at the next level, and so on.

Throw away every other row and column to create a 1/2 size image

Figure: subsampled images at 1/2, 1/4 (2x zoom), and 1/8 (4x zoom) size.

Aliasing! What do we do?

Figure: Gaussian-filtered images at 1/2, 1/4 (G 1/4), and 1/8 (G 1/8) size.

Need this! Original image


Known as a Gaussian Pyramid [Burt and Adelson, 1983]

Figure: pyramid levels linked by Reduce and Expand operations. Need $G_k$ to reconstruct.

Reduce: apply “5-tap” separable filter to make reduced image.

Expand: apply different “3-tap” separable filters for even and odd pixels to make expanded image.

Figure labels: even and odd pixels; coarser and finer levels.
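A sketch of Reduce and Expand under stated assumptions: the slides do not give the filter weights, so the 5-tap kernel here is the binomial `[1, 4, 6, 4, 1] / 16`, borders are handled by replicating edge pixels, and the names `pyr_reduce` and `pyr_expand` are made up for this example. Expand is written as zero insertion followed by the doubled kernel, which amounts to weights `[1, 6, 1] / 8` at even pixels and `[1, 1] / 2` at odd pixels.

```python
import numpy as np

W5 = np.array([1.0, 4.0, 6.0, 4.0, 1.0]) / 16.0  # assumed 5-tap weights

def _conv_axis(img, kernel, axis):
    """Convolve every 1D slice along `axis` with `kernel` (same length out)."""
    return np.apply_along_axis(
        lambda v: np.convolve(v, kernel, mode="same"), axis, img)

def pyr_reduce(img):
    """Blur with the separable 5-tap filter, then keep every other row/column."""
    padded = np.pad(img, 2, mode="edge")
    blurred = _conv_axis(_conv_axis(padded, W5, 0), W5, 1)
    # Drop the 2-pixel padding and subsample by 2 in one slice.
    return blurred[2:-2:2, 2:-2:2]

def pyr_expand(img):
    """Double the size: insert zeros between samples, then interpolate."""
    padded = np.pad(img, 1, mode="edge")
    up = np.zeros((2 * padded.shape[0], 2 * padded.shape[1]))
    up[::2, ::2] = padded
    k = 2.0 * W5  # doubled so that the inserted zeros do not darken the image
    blurred = _conv_axis(_conv_axis(up, k, 0), k, 1)
    # Drop the region that came from the padding.
    return blurred[2:-2, 2:-2]

img = np.full((8, 6), 3.0)
small = pyr_reduce(img)      # shape (4, 3)
big = pyr_expand(small)      # shape (8, 6)
print(small.shape, big.shape)
```

Blurring before subsampling is what distinguishes Reduce from simply throwing away every other row and column.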

Figure: levels L0, L2, and L4, and the reconstructed image.

Figure: hierarchical LK. Each image is reduced repeatedly; at each level LK is run, and the resulting flow is expanded and multiplied by 2 to warp the image at the next finer level. Final output: $\langle u(x,y), v(x,y) \rangle$.

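Compute iterative LK at the highest level.

Initialize $u_{K+1}, v_{K+1} = 0$ at size of level K+1.

For each level i from K to 0:

– Upsample (expand) $u_{i+1}, v_{i+1}$ to create $u_i^p, v_i^p$, flow fields of now twice the resolution as level i+1.

– Multiply $u_i^p, v_i^p$ by 2 to get predicted flow.

– Warp the level i image according to the predicted flow.

– Apply LK to get $u_i^\delta, v_i^\delta$ (the correction in flow).

– Add the corrections: $u_i = u_i^p + u_i^\delta$; $v_i = v_i^p + v_i^\delta$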
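The loop above can be sketched for the simplest possible case: a 1D signal with a single global displacement, so that expanding the flow field reduces to the multiplication by 2. Everything named here (`reduce_1d`, `lk_shift`, `hierarchical_lk_1d`, the binomial 5-tap kernel, the linear-interpolation warp) is an assumption made for illustration, not the slides' implementation.

```python
import numpy as np

KERNEL = np.array([1.0, 4.0, 6.0, 4.0, 1.0]) / 16.0  # assumed 5-tap weights

def reduce_1d(f):
    """Blur with the 5-tap filter (edge-replicated) and keep every other sample."""
    padded = np.pad(f, 2, mode="edge")
    return np.convolve(padded, KERNEL, mode="valid")[::2]

def lk_shift(f0, f1, d0=0.0, n_iters=20):
    """Iterative LK for one displacement, starting from the prediction d0."""
    x = np.arange(f0.size, dtype=float)
    d = d0
    for _ in range(n_iters):
        warped = np.interp(x - d, x, f0)   # warp with the current flow
        fx = np.gradient(warped)
        ft = f1 - warped
        denom = np.sum(fx * fx)
        if denom < 1e-12:
            break
        d += -np.sum(fx * ft) / denom      # add the correction
    return d

def hierarchical_lk_1d(f0, f1, levels=3):
    # Build both pyramids; index 0 is the finest level.
    pyr0, pyr1 = [f0], [f1]
    for _ in range(levels):
        pyr0.append(reduce_1d(pyr0[-1]))
        pyr1.append(reduce_1d(pyr1[-1]))
    d = 0.0  # flow initialized to zero above the coarsest level
    for i in range(levels, -1, -1):
        d_pred = 2.0 * d                            # predicted flow at level i
        d = lk_shift(pyr0[i], pyr1[i], d0=d_pred)   # prediction plus correction
    return d

x = np.arange(512, dtype=float)
f0 = np.exp(-((x - 200.0) ** 2) / (2 * 16.0 ** 2))
f1 = np.exp(-((x - 212.0) ** 2) / (2 * 16.0 ** 2))  # shifted by 12 samples
print(hierarchical_lk_1d(f0, f1))  # should come out close to 12
```

A 12-sample shift at full resolution is only 1.5 samples at the coarsest of the three reduced levels, which is the regime where the small-motion assumption holds.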


* From Khurram Hassan-Shafique CAP5415 Computer Vision 2003


constraint between locations (smoothness is either explicit

close to a translation.

Ready for another old slide?


Translation: 2 unknowns; Similarity: 4 unknowns; Affine: 6 unknowns; Perspective: 8 unknowns

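$$
[\mathbf{a}]_\times =
\begin{bmatrix} 0 & -a_3 & a_2 \\ a_3 & 0 & -a_1 \\ -a_2 & a_1 & 0 \end{bmatrix}
$$

$$
\begin{bmatrix} V_X \\ V_Y \\ V_Z \end{bmatrix}
\approx
\begin{bmatrix} V_{T_X} \\ V_{T_Y} \\ V_{T_Z} \end{bmatrix}
+
\begin{bmatrix} 0 & -\omega_Z & \omega_Y \\ \omega_Z & 0 & -\omega_X \\ -\omega_Y & \omega_X & 0 \end{bmatrix}
\begin{bmatrix} X \\ Y \\ Z \end{bmatrix}
$$

Velocity Vector: $\mathbf{V} = [V_X \; V_Y \; V_Z]^T$

Translational Component of Velocity: $\mathbf{T} = [V_{T_X} \; V_{T_Y} \; V_{T_Z}]^T$

Angular Velocity: $\boldsymbol{\Omega} = [\omega_X \; \omega_Y \; \omega_Z]^T$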

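Perspective projection:

$$x = f\frac{X}{Z}, \qquad y = f\frac{Y}{Z}$$

Take derivatives:

$$u = v_x = f\,\frac{Z V_X - X V_Z}{Z^2} = f\frac{V_X}{Z} - \left(f\frac{X}{Z}\right)\frac{V_Z}{Z} = f\frac{V_X}{Z} - x\frac{V_Z}{Z}$$

$$v = v_y = f\,\frac{Z V_Y - Y V_Z}{Z^2} = f\frac{V_Y}{Z} - \left(f\frac{Y}{Z}\right)\frac{V_Z}{Z} = f\frac{V_Y}{Z} - y\frac{V_Z}{Z}$$

$$
\begin{bmatrix} u(x,y) \\ v(x,y) \end{bmatrix}
= \frac{1}{Z(x,y)}\, A(x,y)\, \mathbf{T} + B(x,y)\, \boldsymbol{\Omega}
$$

$$
A(x,y) = \begin{bmatrix} -f & 0 & x \\ 0 & -f & y \end{bmatrix},
\qquad
B(x,y) = \begin{bmatrix} xy/f & -(f + x^2/f) & y \\ f + y^2/f & -xy/f & -x \end{bmatrix}
$$

Where T is translation vector, Ω is rotation. With these signs, T and Ω act as the motion of the camera: substituting the velocity of a moving point, $\mathbf{V} \approx \mathbf{T} + \boldsymbol{\Omega} \times (X, Y, Z)$, into the derivatives above gives the same expression with $-A$ and $-B$.

Why does Z appear only in the translational term?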
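A small numerical sketch of the motion field equation, assuming the form $[u, v]^T = \frac{1}{Z} A(x,y)\,T + B(x,y)\,\Omega$ with $A = [[-f, 0, x], [0, -f, y]]$ and $B = [[xy/f, -(f + x^2/f), y], [f + y^2/f, -xy/f, -x]]$. The function name `motion_field` and the default `f=1.0` are hypothetical. With these signs, T and Ω are read as camera motion, so a static scene point moves with velocity $-(T + \Omega \times R)$ relative to the camera.

```python
import numpy as np

def motion_field(x, y, Z, T, Omega, f=1.0):
    """Image velocity (u, v) at image point (x, y) with depth Z."""
    A = np.array([[-f, 0.0, x],
                  [0.0, -f, y]])
    B = np.array([[x * y / f, -(f + x * x / f), y],
                  [f + y * y / f, -x * y / f, -x]])
    # Depth scales only the translational part; rotation is depth independent.
    return A @ T / Z + B @ Omega

T = np.array([0.1, -0.2, 0.3])
Omega = np.array([0.01, 0.02, -0.03])
near = motion_field(0.3, -0.4, 2.0, T, Omega, f=2.0)
far = motion_field(0.3, -0.4, 20.0, T, Omega, f=2.0)
print(near, far)  # the two differ only through the translational term
```

Because rotation contributes the same image motion at every depth, only the translational component carries information about scene structure.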

Full motion model (8 parameters):

$$u(x,y) = a_1 + a_2 x + a_3 y + a_7 x^2 + a_8 xy$$

$$v(x,y) = a_4 + a_5 x + a_6 y + a_7 xy + a_8 y^2$$

Affine model (6 parameters):

$$u(x,y) = a_1 + a_2 x + a_3 y$$

$$v(x,y) = a_4 + a_5 x + a_6 y$$

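Substituting the affine model

$$u(x,y) = a_1 + a_2 x + a_3 y, \qquad v(x,y) = a_4 + a_5 x + a_6 y$$

into the brightness constancy equation $I_x u + I_y v + I_t \approx 0$:

$$I_x (a_1 + a_2 x + a_3 y) + I_y (a_4 + a_5 x + a_6 y) + I_t \approx 0$$

Least squares minimization:

$$Err(\mathbf{a}) = \sum \left[ I_x (a_1 + a_2 x + a_3 y) + I_y (a_4 + a_5 x + a_6 y) + I_t \right]^2$$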

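Any linear model can be substituted easily; others take some work.

Over n pixels, with the gradients in row k evaluated at pixel $(x_k, y_k)$:

$$
\begin{bmatrix}
I_x & I_x x_1 & I_x y_1 & I_y & I_y x_1 & I_y y_1 \\
I_x & I_x x_2 & I_x y_2 & I_y & I_y x_2 & I_y y_2 \\
\vdots & & & & & \vdots \\
I_x & I_x x_n & I_x y_n & I_y & I_y x_n & I_y y_n
\end{bmatrix}
\cdot
\begin{bmatrix} a_1 \\ a_2 \\ a_3 \\ a_4 \\ a_5 \\ a_6 \end{bmatrix}
= -
\begin{bmatrix} I_t^1 \\ I_t^2 \\ \vdots \\ I_t^n \end{bmatrix}
$$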
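A sketch of assembling and solving this system with NumPy, assuming each pixel contributes the row $[I_x,\ I_x x,\ I_x y,\ I_y,\ I_y x,\ I_y y]$ and the right-hand side $-I_t$. The function name `fit_affine_flow` and the choice of pixel indices as coordinates are illustrative assumptions.

```python
import numpy as np

def fit_affine_flow(Ix, Iy, It):
    """Least squares estimate of (a1..a6) for u = a1 + a2 x + a3 y, v = a4 + a5 x + a6 y."""
    h, w = Ix.shape
    # Pixel coordinates: y indexes rows, x indexes columns (an assumed convention).
    y, x = np.mgrid[0:h, 0:w].astype(float)
    # One row per pixel, six columns.
    A = np.stack([Ix, Ix * x, Ix * y, Iy, Iy * x, Iy * y], axis=-1).reshape(-1, 6)
    b = -It.reshape(-1)
    a, *_ = np.linalg.lstsq(A, b, rcond=None)
    return a
```

The same pattern works for any motion model that is linear in its parameters: only the columns of `A` change.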


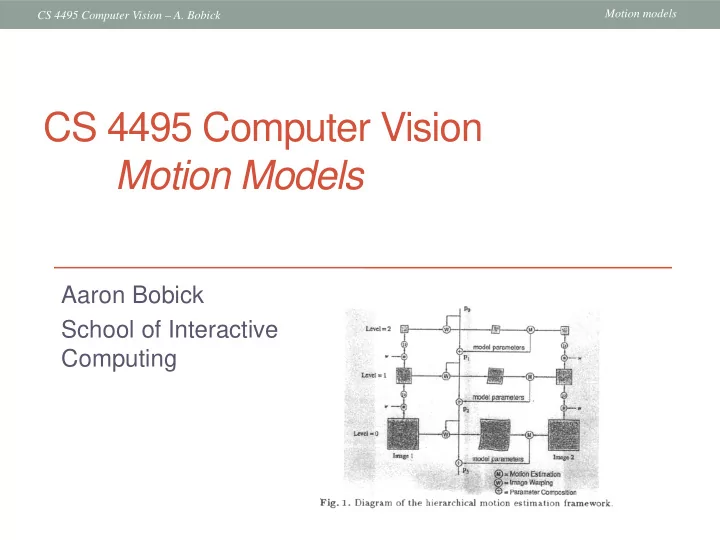

James R. Bergen, P. Anandan, Keith J. Hanna, Rajesh Hingorani: “Hierarchical Model-Based Motion Estimation,” ECCV 1992: 237-252


which has a coherent motion


motion model

$$u(x,y) = a_1 + a_2 x + a_3 y$$

$$v(x,y) = a_4 + a_5 x + a_6 y$$

Local flow estimates

Equation of a plane (parameters $a_1, a_2, a_3$ can be found by least squares)

1D example

Figure: $u(x,y)$ in the 1D example, showing the true flow, the local flow estimate, line fitting, and the segmented estimate, with “Foreground”, “Background”, and occlusion regions labeled.
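Fitting the plane $u(x,y) = a_1 + a_2 x + a_3 y$ to a set of local flow estimates is an ordinary least squares problem. A minimal sketch, with the hypothetical name `fit_flow_plane` and flat arrays of sample positions and flow values as assumed inputs:

```python
import numpy as np

def fit_flow_plane(x, y, u):
    """Least squares plane u = a1 + a2 x + a3 y through local flow estimates."""
    # Design matrix with columns [1, x, y], one row per flow estimate.
    M = np.column_stack([np.ones_like(x, dtype=float), x, y])
    a, *_ = np.linalg.lstsq(M, u, rcond=None)
    return a  # array [a1, a2, a3]
```

The same call with the v component of the flow yields $a_4, a_5, a_6$.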


parameters in each block by least squares

the scene


sometimes over multiple frames

brightness variations

variations