
Course objective: to teach you some data structures and associated algorithms

Evaluation: graded lab session (TP noté) in the computer room on 16 September; written exam (contrôle) at the end of the course.
Grade: $\max\left(CC, \tfrac{3}{4}\,CC + \tfrac{1}{4}\,TP\right)$

Organization: Fridays 26/8, 2/9, 9/9, 16/9, 23/9, 30/9, 7/10, 14/10, 21/10
- Lecture: 10:30-12:00, amphi Arago
- TD (tutorials): 13:30-15:30 and 15:45-17:45 (rooms SI31, SI32, SI33, SI34)

Books:
1. Ph. Baptiste & L. Maranget, Programmation et Algorithmique, École Polytechnique (Polycopié), 2006
2. G. Dowek, Les principes des langages de programmation, Éditions de l'X, 2008
3. D. Knuth, The Art of Computer Programming, Addison-Wesley, 1997
4. K. Mehlhorn & P. Sanders, Algorithms and Data Structures, Springer, 2008

Website: www.enseignement.polytechnique.fr/informatique/INF421
Contact: liberti@lix.polytechnique.fr (e-mail subject: INF421)

INF421, Lecture 6: Trees
Leo Liberti
LIX, École Polytechnique, France

Lecture summary
- Introduction and reminders
- Definitions and properties
- Listing chemical trees
- Trees in psychology and languages
- Depth-First Search (DFS)
- Spanning trees

The minimal knowledge
- A tree is a connected relation without cycles
- A tree on $n$ nodes has $n-1$ branches
- There are $n^{n-2}$ labelled trees on $n$ nodes
- The same molecular formula can correspond to different bond trees (isomers)
- The analysis of sentences yields grammatical trees
- The Graph Scanning algorithm, DFS and BFS
- The cheapest kind of distribution network is a spanning tree
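The claim that there are $n^{n-2}$ labelled trees can be checked numerically for small $n$. The sketch below is a minimal brute-force illustration, assuming nodes are labelled $0, \ldots, n-1$ and trees are counted as $(n-1)$-edge subsets of the complete graph; `forms_tree` and `count_labelled_trees` are illustrative names, not part of the course material. It uses the converse theorem proved in this lecture ($n-1$ edges and no cycle imply a tree) and detects cycles with a small union-find structure.

```python
from itertools import combinations


def forms_tree(n, edges):
    """True if the undirected edges on nodes 0..n-1 form a tree.

    Relies on the converse theorem: n - 1 edges and no cycle imply a tree.
    Cycles are detected with a small union-find structure.
    """
    if len(edges) != n - 1:
        return False
    parent = list(range(n))

    def find(x):
        # follow parent pointers to the representative of x's component
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    for u, v in edges:
        ru, rv = find(u), find(v)
        if ru == rv:  # u and v already connected: this edge closes a cycle
            return False
        parent[ru] = rv  # merge the two components
    return True


def count_labelled_trees(n):
    """Brute force: count the (n-1)-edge subsets of K_n that are trees."""
    all_edges = list(combinations(range(n), 2))
    return sum(1 for es in combinations(all_edges, n - 1) if forms_tree(n, es))


for n in range(2, 7):
    print(n, count_labelled_trees(n), n ** (n - 2))
```

For $n = 2, \ldots, 6$ the two columns agree (1, 3, 16, 125, 1296). The enumeration grows very quickly, so this is only a sanity check, not a way to list trees for large $n$.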

Trees

Introduction and reminders

How we draw them

Nomenclature

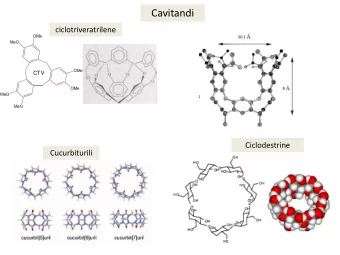

Graphical representation
[Figure: a rooted tree with its root, a branch, a node, the leaves and a subtree labelled; height or depth = 2]
Height/depth = length (number of branches) of the longest walk root → leaf

Recall from INF311
- Binary trees
- Their implementations
- How to explore them in prefix, infix and postfix order
- How to store mathematical expressions in trees

Some applications of trees
- Chemistry (molecular composition and structure)
- Psychology (natural language)
- Distribution networks of minimum cost
- Computer science: model for recursion (Lecture 3); data structures for sorting and searching (Lecture 7)

Definitions and properties
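As a reminder of the INF311 material, the sketch below stores the expression $(x + 3) \cdot y$ in a binary tree, explores it in prefix, infix and postfix order, and computes its height as the number of branches on the longest walk root → leaf. It is a minimal illustration, assuming a `Node` class with optional `left`/`right` children; the names are hypothetical, not the course's own implementation.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    """A binary tree node; leaves have no children."""
    label: str
    left: Optional["Node"] = None
    right: Optional["Node"] = None


def prefix(t):
    # node first, then left subtree, then right subtree
    if t is None:
        return []
    return [t.label] + prefix(t.left) + prefix(t.right)


def infix(t):
    # left subtree, then node, then right subtree
    if t is None:
        return []
    return infix(t.left) + [t.label] + infix(t.right)


def postfix(t):
    # left subtree, then right subtree, then node
    if t is None:
        return []
    return postfix(t.left) + postfix(t.right) + [t.label]


def height(t):
    """Number of branches on the longest walk root -> leaf (a single node has height 0)."""
    if t is None:
        return -1  # so that a leaf gets 1 + max(-1, -1) = 0
    return 1 + max(height(t.left), height(t.right))


# The expression (x + 3) * y stored as a tree
expr = Node("*", Node("+", Node("x"), Node("3")), Node("y"))
print(prefix(expr))   # ['*', '+', 'x', '3', 'y']
print(infix(expr))    # ['x', '+', '3', '*', 'y']
print(postfix(expr))  # ['x', '3', '+', 'y', '*']
print(height(expr))   # 2
```

Note that the plain infix listing loses the parentheses, whereas the prefix and postfix listings determine the expression uniquely.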

Relations
- A relation $A$ on a set $V$ is a subset of $V \times V$
- Symmetric relation: if $(u,v) \in A$, then $(v,u) \in A$
- Reflexive relation: $(v,v) \in A$ for all $v \in V$
- Irreflexive or simple relation: $(v,v) \notin A$ for all $v \in V$
- Transitive relation: if $(u,v), (v,w) \in A$, then $(u,w) \in A$

Graphs and digraphs
- A relation $A$ on $V$ is also called a digraph $G = (V, A)$
- A symmetric relation $E$ on $V$ is also called a graph $G = (V, E)$
- Digraphs have arcs $(u,v)$, graphs have edges $\{u,v\}$
- A digraph/graph is simple if it has no loops
- In a graph context, nodes are also called vertices
- Arc: an element of $A$; loop: a pair $(v,v)$
- Edge: $e = \{(u,v), (v,u)\}$ (denoted by $e = \{u,v\}$); $u, v$ are incident to $e$, and $u, v$ are adjacent
- Example: $V = \{v_1, \ldots, v_5\}$ and $A = \{(v_1,v_3), (v_1,v_2), (v_4,v_5), (v_5,v_4), (v_5,v_5)\}$; here $(v_1,v_3)$ and $(v_1,v_2)$ are arcs, $\{v_4,v_5\}$ is an edge and $(v_5,v_5)$ is a loop
- Notation: given $v \in V$,
  - if $E$ is symmetric, $N(v) = \{u \in V \mid \{u,v\} \in E\}$ is the star of $v$
  - if $A$ is not symmetric, $N^+(v) = \{u \in V \mid (v,u) \in A\}$ is the outgoing star and $N^-(v) = \{u \in V \mid (u,v) \in A\}$ is the incoming star of $v$
  - also $\delta(v) = \{\{u,v\} \mid u \in N(v)\}$, and $\delta^+(v) = \{(v,u) \mid u \in N^+(v)\}$ and $\delta^-(v) = \{(u,v) \mid u \in N^-(v)\}$ are defined equivalently

Walks and paths
- Let $i = (i_1, \ldots, i_k)$ with $k > 1$; if $P = \{(v_{i_j}, v_{i_{j+1}}) \mid j < k\} \subseteq A$, then $P$ is a walk $v_{i_1} \to v_{i_k}$
- Examples on $v_1, \ldots, v_4$: $(i_1,i_2,i_3) = (2,1,1)$ gives the walk $P = \{(v_2,v_1), (v_1,v_1)\}$; $(i_1,i_2,i_3) = (2,4,3)$ gives the simple walk $P = \{(v_2,v_4), (v_4,v_3)\}$
- $G = (V,A)$ a digraph; $G^{-1}$ is obtained by reversing all arcs in $A$
- Thm. If $W$ is a walk in $G$, then $W^{-1}$ is a walk in $G^{-1}$
- A relation $P$ is a path $u \to v$ if there is a walk $W \subseteq P$ from $u$ to $v$ such that $P = W \cup W^{-1}$
- Graphical representation of a path: nodes drawn as dots joined by undirected lines (• - • - •)

Properties of walks and paths
- Let $W$ be a walk given by the node sequence $v_{i_1}, \ldots, v_{i_k}$: every contiguous subsequence of $v_{i_1}, \ldots, v_{i_k}$ is also a walk (e.g. $v_4, v_3$ is a subwalk of $v_1, v_2, v_4, v_3$)
- If $W_1$ is a walk $u \to v$ and $W_2$ is a walk $v \to w$, then the sequence $W = W_1 \cup W_2$ is a walk $u \to w$ (e.g. $v_1, v_2$ and $v_2, v_4$ are walks $\Rightarrow$ $v_1, v_2, v_4$ is a walk)
- The same holds for paths
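The relation notation above (stars $N^+$, $N^-$, $\delta^+$ and the properties symmetric, reflexive, irreflexive, transitive) can be made concrete by representing a relation as a Python set of pairs. This is a minimal sketch, assuming node $v_i$ is written as the integer `i`; all function names are illustrative.

```python
def out_star(A, v):
    """N+(v) = {u | (v, u) in A}: the outgoing star of v."""
    return {u for (w, u) in A if w == v}


def in_star(A, v):
    """N-(v) = {u | (u, v) in A}: the incoming star of v."""
    return {u for (u, w) in A if w == v}


def out_arcs(A, v):
    """delta+(v) = {(v, u) | u in N+(v)}."""
    return {(v, u) for u in out_star(A, v)}


def is_symmetric(A):
    return all((v, u) in A for (u, v) in A)


def is_reflexive(A, V):
    return all((v, v) in A for v in V)


def is_irreflexive(A, V):
    # "simple": no loops at all
    return all((v, v) not in A for v in V)


def is_transitive(A):
    # every pair of consecutive arcs (u, v), (v, x) must be short-cut by (u, x)
    return all((u, x) in A for (u, v) in A for (w, x) in A if v == w)


# The example relation from the slide, with node v_i written as the integer i
V = {1, 2, 3, 4, 5}
A = {(1, 3), (1, 2), (4, 5), (5, 4), (5, 5)}

print(sorted(out_star(A, 1)))  # [2, 3]
print(sorted(in_star(A, 5)))   # [4, 5]
print(is_symmetric(A), is_reflexive(A, V), is_irreflexive(A, V), is_transitive(A))
# False False False False
```

The example relation has all four properties false: $(v_1,v_3)$ has no reverse, most nodes have no loop, $v_5$ has one, and $(v_4,v_5), (v_5,v_4)$ would require the missing loop $(v_4,v_4)$.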

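The definitions of walks, reversed digraphs and walk concatenation translate directly into set operations. The sketch below is a minimal illustration, assuming a walk is given by its index sequence $(i_1, \ldots, i_k)$ and a digraph by its arc set; since the slide's figure is not recoverable, the arc set `A` is a hypothetical one chosen so that the slide's example sequences are walks.

```python
def walk_arcs(i, A):
    """Arc set P = {(v_{i_j}, v_{i_{j+1}}) | j < k} of the index sequence i.

    Returns None when some consecutive pair is not an arc of A,
    i.e. when i does not describe a walk in G = (V, A).
    """
    if len(i) < 2:
        raise ValueError("a walk needs k > 1 nodes")
    P = set(zip(i, i[1:]))
    return P if P <= A else None


def reverse(A):
    """A^{-1}: reverse every arc, giving the digraph G^{-1}."""
    return {(v, u) for (u, v) in A}


def concat(w1, w2):
    """Join a walk u -> v and a walk v -> w (as node sequences) into u -> w."""
    if w1[-1] != w2[0]:
        raise ValueError("w1 must end where w2 starts")
    return w1 + w2[1:]


# Hypothetical arcs on v_1..v_4 (written 1..4), chosen so the slide's examples are walks
A = {(1, 2), (2, 1), (1, 1), (2, 4), (4, 3)}

print(sorted(walk_arcs((2, 1, 1), A)))  # [(1, 1), (2, 1)]
print(sorted(walk_arcs((2, 4, 3), A)))  # [(2, 4), (4, 3)]
print(concat((1, 2), (2, 4)))           # (1, 2, 4)
# Thm: the reversed sequence is a walk in G^{-1}
print(walk_arcs((3, 4, 2), reverse(A)) is not None)  # True
```

Representing $P$ as a set follows the slide's definition, so repeated arcs and their order are not recorded; the node sequence itself is what `concat` works on.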
Circuits and cycles
- If a walk has $i_1 = i_k$, it is a circuit
- If a path with at least 3 nodes has $i_1 = i_k$, it is a cycle
[Figure: a circuit and a cycle on the nodes $v_1, \ldots, v_4$]

Connectedness
- Let $A$ be a symmetric relation. If for all $u, v \in V$ there is a path $u \to v$ in $A$, then $A$ is connected, otherwise it is disconnected
- If $A$ is not symmetric, the equivalent notion is strong connectivity (replace "path" with "walk")
- A connected relation $A$ is minimally connected if $A \smallsetminus \{e\}$ is disconnected for every edge $e$ in $A$
[Figure: a connected, a disconnected and a minimally connected relation on $v_1, \ldots, v_4$]

Mathematical definition of a tree
- Tree: a minimally connected relation $T$ on a set $V$
- If one node is specified as the root, then the tree is rooted
- Every node which only appears as part of a single edge is called a dangling node (in the example figure, $v_1$ and $v_3$ are dangling nodes)
- A dangling node which is not the root is called a leaf
- Edges of a rooted tree are also called branches

Orientations
- The outward orientation of a tree $T$ with root $r \in V$ is a relation $U$ such that:
  - for every edge $\{(u,v), (v,u)\}$ of $T$, $U$ contains only one of the two arcs
  - for every leaf node $\ell$ of $T$, $U$ has a walk $r \to \ell$
- The inward orientation is such that for every leaf node $\ell$ of $T$, $U$ has a walk $\ell \to r$
[Figure: a tree on $r, v_1, v_3, v_4$ with its outward and inward orientations]
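The definitions of connectedness and minimal connectedness (and hence of a tree) can be tested mechanically: search the graph from one node and check that every node is reached, then retry with each edge removed. This is a minimal sketch, assuming a symmetric relation is stored as a set of `frozenset` edges $\{u, v\}$ with no loops; breadth-first search is one possible choice of search, and the helper names are hypothetical.

```python
from collections import deque


def edges(*pairs):
    """Build a symmetric relation as a set of edges {u, v} (frozensets)."""
    return {frozenset(p) for p in pairs}


def star(E, v):
    """N(v) = {u | {u, v} in E}; assumes no loops."""
    return {u for e in E if v in e for u in e if u != v}


def is_connected(V, E):
    """Breadth-first search from an arbitrary node: connected iff every node is reached."""
    if not V:
        return True
    start = next(iter(V))
    seen = {start}
    queue = deque([start])
    while queue:
        v = queue.popleft()
        for u in star(E, v):
            if u not in seen:
                seen.add(u)
                queue.append(u)
    return seen == set(V)


def is_minimally_connected(V, E):
    """Connected, and removing any single edge disconnects it: the definition of a tree."""
    return is_connected(V, E) and all(not is_connected(V, E - {e}) for e in E)


V = {1, 2, 3, 4}
path = edges((1, 2), (2, 3), (3, 4))
cycle = path | edges((4, 1))
print(is_connected(V, edges((1, 2), (3, 4))))  # False
print(is_minimally_connected(V, path))         # True
print(is_minimally_connected(V, cycle))        # False
```

This test is deliberately naive (one search per edge); it follows the definition literally rather than aiming for efficiency.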

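An outward orientation of a rooted tree can be built by exploring the tree from the root and keeping, for each edge, only the arc that points away from $r$; reversing every arc gives the inward orientation. The sketch below is one minimal way to do this, assuming the input is a tree stored as `frozenset` edges and using breadth-first search; since the slide's figure is not recoverable, the example tree (with $r$ joined to $v_1$ and $v_3$, and $v_3$ joined to $v_4$) is hypothetical.

```python
from collections import deque


def outward_orientation(V, E, r):
    """Orient every edge {u, v} of the tree (V, E) away from the root r.

    Breadth-first search from r; when an unvisited node u is reached from v,
    only the arc (v, u) is kept. Assumes (V, E) is a tree, so each edge
    is met exactly once from its endpoint closer to r.
    """
    adj = {v: set() for v in V}
    for e in E:
        u, v = tuple(e)
        adj[u].add(v)
        adj[v].add(u)
    U = set()
    seen = {r}
    queue = deque([r])
    while queue:
        v = queue.popleft()
        for u in adj[v]:
            if u not in seen:
                seen.add(u)
                U.add((v, u))
                queue.append(u)
    return U


def inward_orientation(V, E, r):
    """Reverse every arc of the outward orientation, so each leaf has a walk to r."""
    return {(v, u) for (u, v) in outward_orientation(V, E, r)}


# Hypothetical tree: r joined to v1 and v3, and v3 joined to v4
V = {"r", "v1", "v3", "v4"}
E = {frozenset(p) for p in [("r", "v1"), ("r", "v3"), ("v3", "v4")]}
print(sorted(outward_orientation(V, E, "r")))  # [('r', 'v1'), ('r', 'v3'), ('v3', 'v4')]
print(sorted(inward_orientation(V, E, "r")))   # [('v1', 'r'), ('v3', 'r'), ('v4', 'v3')]
```

In the outward orientation the leaf $v_4$ is reached by the walk $r \to v_3 \to v_4$; in the inward one it reaches the root by the reverse walk.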
A tree has $|V| - 1$ edges
Lemma. A tree $T$ on a set $V$ has $|V| - 1$ edges.
Proof. Let $m(T)$ be the number of edges in $T$; we show $m(T) = |V| - 1$ by induction on $|V|$.
- If $|V| = 2$, a minimally connected relation requires one edge
- Induction hypothesis: suppose $m(T') = |V| - 2$ for all trees $T'$ on $|V| - 1$ nodes
- Let $T$ be any tree on $V$. Any tree must have at least one leaf node $\ell$ (why?)
- Because $\ell$ is a leaf, it is incident to only one edge $e$
- Consider the tree $T' = T \smallsetminus \{e\}$ on $V' = V \smallsetminus \{\ell\}$
- Because $|V'| = |V| - 1$, $m(T') = |V| - 2$ by the induction hypothesis
- Thus $T$ has exactly $m(T) = m(T' \cup \{e\}) = m(T') + 1 = |V| - 1$ edges

A tree has no cycles
Thm. A cycle is not minimally connected.
Proof. A cycle is a path $C = W \cup W^{-1}$, where $W$ is a walk $(v_{i_1}, \ldots, v_{i_k})$ with $i_1 = i_k$ and $k \ge 3$.
- Every contiguous subsequence of $W$ is a (sub)walk of $W$
- Consider any subwalk $W_1 = (v_{i_j}, \ldots, v_{i_h})$ of $W$ with $j < h$
- Both $(v_{i_1}, \ldots, v_{i_j})$ and $(v_{i_h}, \ldots, v_{i_k})$ are contiguous subsequences of $W$, hence walks in $W$
- Since $i_k = i_1$, their union $W' = (v_{i_h}, v_{i_{h+1}}, \ldots, v_{i_k} = v_{i_1}, \ldots, v_{i_j})$ is also a walk in $W$
- Since $W^{-1} \subseteq C$, the walk $W_2 = W'^{-1}$ is also in $C$
- Since $C$ is symmetric, the paths $P_1, P_2$ induced by $W_1, W_2$ are both in $C$
- $P_1$ and $P_2$ are two paths $v_{i_j} \to v_{i_h}$ that have no common edges, and $P_1 \cup P_2 = C$
- Taking away an edge from $P_1$ or $P_2$ does not disconnect $C$, so $C$ is not minimally connected

Thm. A tree has no cycles: if a tree $T$ contained a cycle $C$, removing an edge of $C$ would leave $T$ connected, contradicting the minimal connectedness of $T$.

The converse
Thm. If $T$ is a symmetric relation on $V$ with no cycles and $m(T) = |V| - 1$, then $T$ is a tree.
Proof. By induction on $|V|$; we aim to show that $T$ is a tree.
- Recall: $\forall v \in V$, $\delta(v)$ is the set of edges incident to $v$
- Since $T$ has no cycles, there must be at least one node $\ell$ with $|\delta(\ell)| = 1$ (why?)
- Let $V' = V \smallsetminus \{\ell\}$ and $T' = T \smallsetminus \{e\}$, where $\{e\} = \delta(\ell)$
- Since $T$ has no cycles, $T'$ has no cycles either (why?)
- Since $m(T') = m(T) - 1$ and $|V'| = |V| - 1$, we have $m(T') = |V'| - 1$
- By the induction hypothesis, $T'$ is a tree, hence $T'$ is minimally connected
- Since $e$ is the only edge in $T$ incident to $\ell$, $T = T' \cup \{e\}$ is also minimally connected
- Hence $T$ is a tree

Chemical trees
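Both induction proofs above repeatedly delete a node $\ell$ with $|\delta(\ell)| = 1$ together with its only edge. Doing this until no such node remains gives a simple cycle test: nodes on a cycle always keep degree at least 2, while an acyclic graph is stripped down to no edges. Combined with the edge count, this yields a tree test via the converse theorem. This is a minimal sketch, assuming simple graphs stored as `frozenset` edges; `strip_leaves`, `has_cycle` and `is_tree` are illustrative names.

```python
def degree(E, v):
    """|delta(v)|: number of edges incident to v (edges are frozensets {u, v})."""
    return sum(1 for e in E if v in e)


def strip_leaves(V, E):
    """Repeatedly delete a node l with |delta(l)| = 1 and its only edge,
    as in the induction steps above. Returns (edges removed, nodes left, edges left).
    Assumes a simple graph (no loops)."""
    V, E = set(V), set(E)
    removed = 0
    while True:
        leaf = next((v for v in V if degree(E, v) == 1), None)
        if leaf is None:
            return removed, V, E
        (e,) = [e for e in E if leaf in e]  # the unique edge in delta(leaf)
        V.remove(leaf)
        E.remove(e)
        removed += 1


def has_cycle(V, E):
    # Nodes on a cycle keep degree >= 2, so stripping never removes a cycle
    # edge; an acyclic graph is stripped down to no edges at all.
    return bool(strip_leaves(V, E)[2])


def is_tree(V, E):
    """Converse theorem: no cycles and |V| - 1 edges implies a tree."""
    return not has_cycle(V, E) and len(E) == len(V) - 1


def edges(*pairs):
    return {frozenset(p) for p in pairs}


V = {1, 2, 3, 4}
path = edges((1, 2), (2, 3), (3, 4))
cycle = path | edges((4, 1))
removed, left, _ = strip_leaves(V, path)
print(removed, len(left))   # 3 1
print(is_tree(V, path))     # True
print(is_tree(V, cycle))    # False
```

On the path, stripping removes exactly $|V| - 1 = 3$ edges and leaves a single node, matching the lemma; on the cycle, no node has degree 1, so nothing is removed.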
