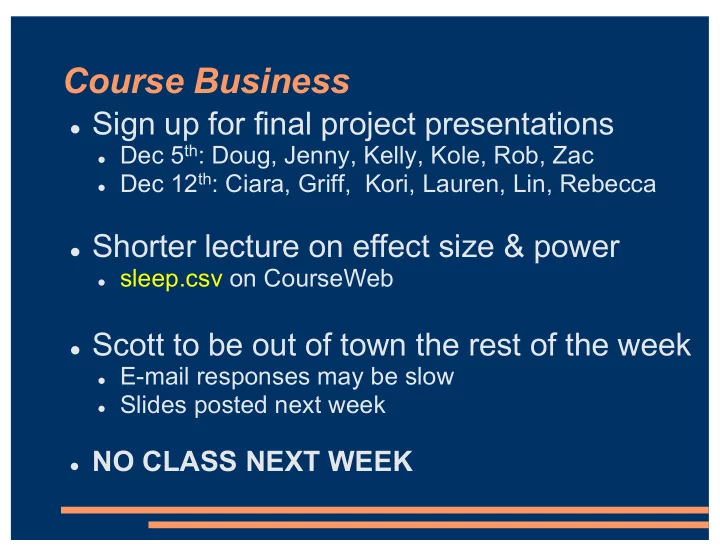

Course Business

- Sign up for final project presentations
  - Dec 5th: Doug, Jenny, Kelly, Kole, Rob, Zac
  - Dec 12th: Ciara, Griff, Kori, Lauren, Lin, Rebecca

- Shorter lecture on effect size & power
  - sleep.csv on CourseWeb

- Scott will be out of town for the rest of the week
  - E-mail responses may be slow
  - Slides posted next week

- NO CLASS NEXT WEEK