Conditioning in 90B

John Kelsey, NIST, May 2016


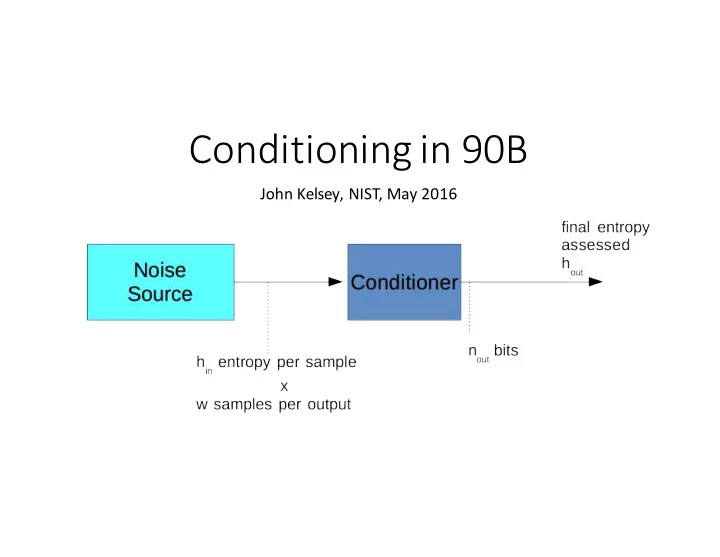

Overview

- What is Conditioning?
- Vetted and Non-Vetted Functions
- Entropy Arithmetic
- Open Issues

Conditioning

- Optional: not all entropy sources have it.
- Improve statistics


entropy/output.

the source to produce full-entropy outputs.

Figuring out $h_{out}$ is the whole point of this presentation.

Designers can choose their own conditioning function.

90B specifies six “vetted” conditioning functions.

strong functions.

function that:

than 128 bits of entropy into its output.

function

= 128

entropy must pass.

input of this function, no more than 128 bits can ever come out...

function of those 128 bits of internal state.

the minimum of output and internal width.

internal width as to output size.

inputs.

this

$$
h_{out} =
\begin{cases}
\min(w \times h_{in},\ 0.85\,n_{out},\ 0.85\,q), & \text{if } w \times h_{in} < 2\min(n_{out}, q) \\
\min(n_{out}, q), & \text{if } w \times h_{in} \ge 2\min(n_{out}, q)
\end{cases}
$$

$h_{in}$ = entropy/sample from noise source

$w$ = noise source samples per conditioned output

$q$ = internal width of conditioning function

$n_{out}$ = output size of conditioning function in bits

$h_{out}$ = entropy per conditioned output (what we are trying to find)
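The two-case rule for vetted functions can be sketched in a few lines of Python. This is only an illustration of the arithmetic on the slide: the function name `vetted_output_entropy` and the example parameters (a 256-bit output with a 256-bit internal width, 2 bits of entropy per sample) are assumptions made for the example, not values taken from the presentation.

```python
def vetted_output_entropy(h_in: float, w: int, n_out: int, q: int) -> float:
    """Entropy credited per conditioned output for a vetted function.

    h_in  : entropy per noise-source sample
    w     : noise-source samples per conditioned output
    n_out : output size of the conditioning function, in bits
    q     : internal width of the conditioning function, in bits
    """
    narrowest = min(n_out, q)      # no more entropy than this can pass through
    h_total = w * h_in             # entropy fed in per conditioned output
    if h_total >= 2 * narrowest:
        # Enough input entropy (twice the narrowest width): full credit.
        return float(narrowest)
    # Otherwise credit is capped at 85% of the output size and internal width.
    return min(h_total, 0.85 * n_out, 0.85 * q)


# Hypothetical parameters, chosen only to exercise each branch.
print(vetted_output_entropy(2.0, 64, 256, 256))   # 128.0: limited by input entropy
print(vetted_output_entropy(2.0, 200, 256, 256))  # 217.6: limited by the 0.85 cap
print(vetted_output_entropy(2.0, 256, 256, 256))  # 256.0: input >= 2 * min(n_out, q)
```

Note the jump between the last two calls: credit stays at $0.85\,n_{out}$ until the input entropy reaches twice the narrowest width, and only then rises to the full width.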

we lose a little to internal collisions:

get only $0.85\,n_{out}$ entropy assessed.

entropy,

$w \times h_{in} \ge 2\min(n_{out}, q)$ we

the function hasn’t catastrophically thrown away entropy.

the noise source on the conditioned outputs.

by inspection.

$$
h_{out} = \min(w \times h_{in},\ 0.85\,n_{out},\ 0.85\,q,\ h' \times n_{out})
$$

$h_{in}$ = entropy/sample from noise source

$w$ = noise source samples per conditioned output

$q$ = internal width of conditioning function

$n_{out}$ = output size of conditioning function in bits

$h'$ = measured entropy/bit of conditioned outputs

$h_{out}$ = entropy per conditioned output (what we are trying to find)

entropy,

$w \times h_{in} \ge 2\min(n_{out}, q)$ we

we lose a little to internal collisions:

get only $0.85\,n_{out}$ entropy assessed.

conditioned outputs! Note: There is no way to claim full entropy when using a non-vetted function.
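The non-vetted rule is a single minimum over four terms, which a short Python sketch makes concrete. The function name `non_vetted_output_entropy`, the parameter name `h_prime` (standing for the measured entropy per bit of the conditioned outputs), and the example values are assumptions made for illustration.

```python
def non_vetted_output_entropy(h_in: float, w: int, n_out: int, q: int,
                              h_prime: float) -> float:
    """Entropy credited per conditioned output for a non-vetted function.

    h_in    : entropy per noise-source sample
    w       : noise-source samples per conditioned output
    n_out   : output size of the conditioning function, in bits
    q       : internal width of the conditioning function, in bits
    h_prime : measured entropy per bit of the conditioned outputs
    """
    return min(w * h_in, 0.85 * n_out, 0.85 * q, h_prime * n_out)


# Hypothetical parameters: 256-bit output and internal width.
# Even with abundant input entropy and a perfect measurement (h_prime = 1.0),
# the credit stays below n_out, so full entropy cannot be claimed.
print(non_vetted_output_entropy(2.0, 256, 256, 256, 1.0))  # 217.6
# A poor measurement on the conditioned outputs lowers the credit further.
print(non_vetted_output_entropy(2.0, 256, 256, 256, 0.5))  # 128.0
```

Because the $0.85\,n_{out}$ term is always present, the result is strictly less than $n_{out}$ for any inputs, which matches the note that a non-vetted function can never claim full entropy.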

entropy out.

variable!)

approximation to the reality

[Figure: 4-bit conditioner; entropy measured by MostCommon predictor. x-axis: Entropy Input per Output; y-axis: Measured Entropy of Conditioned Output; series: Output Entropy, Rule.]

[Figure: 6-bit conditioner; entropy measured by MostCommon predictor. x-axis: Entropy Input per Output; y-axis: Measured Entropy of Conditioned Output; series: Output Entropy, Rule.]

[Figure: 8-bit conditioner; entropy measured by MostCommon predictor. x-axis: Entropy Input per Output; y-axis: Measured Entropy of Conditioned Output; series: Output Entropy, Rule.]

[Figure: 10-bit conditioner; entropy measured by MostCommon predictor. x-axis: Entropy Input per Output; y-axis: Measured Entropy of Conditioned Output; series: Output Entropy, Rule.]

[Figure: 12-bit conditioner; entropy measured by MostCommon predictor. x-axis: Entropy Input per Output; y-axis: Measured Entropy of Conditioned Output; series: Output Entropy, Rule.]

[Figure: 14-bit conditioner; entropy measured by MostCommon predictor. x-axis: Entropy Input per Output; y-axis: Measured Entropy of Conditioned Output; series: Output Entropy, Rule.]

[Figure: 12-bit conditioner; entropy measured by MostCommon predictor; multiple source types. x-axis: Entropy Input per Output; y-axis: Measured Entropy of Conditioned Output; series: Spike, Normal, Uniform, Rule.]

[Figure: 8-bit conditioner; entropy measured by MostCommon predictor; binary source. x-axis: Entropy Input per Output; y-axis: Measured Entropy of Conditioned Output; series: Output Entropy, Rule.]

[Figure: 10-bit conditioner; entropy measured by MostCommon predictor; binary source. x-axis: Entropy Input per Output; y-axis: Measured Entropy of Conditioned Output; series: Output Entropy, Rule.]
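The plots above show measured entropy for small conditioners as the entropy input per output grows. One data point of that kind might be produced as in the rough sketch below. It rests on several assumptions that are not in the slides: the conditioner is modeled as a random function (a seeded table of random outputs), the input is uniform over `input_bits` bits, and the min-entropy is computed exactly from the most probable output rather than estimated with the MostCommon predictor on a sample stream. The function name `simulated_output_min_entropy` is hypothetical.

```python
import math
import random
from collections import Counter


def simulated_output_min_entropy(input_bits: int, n_out: int, seed: int = 0) -> float:
    """Exact min-entropy of a random n_out-bit function on a uniform input.

    ASSUMPTION: the conditioner is modeled as a random function, i.e. each of
    the 2**input_bits possible inputs is mapped to an independent random
    n_out-bit output. Keep input_bits small: the loop visits every input.
    """
    rng = random.Random(seed)
    n_inputs = 2 ** input_bits
    # Count how many inputs land on each output value.
    counts = Counter(rng.getrandbits(n_out) for _ in range(n_inputs))
    # Probability of the most likely output under a uniform input.
    p_max = max(counts.values()) / n_inputs
    return -math.log2(p_max)


# Sweep the input entropy for a hypothetical 4-bit conditioner.
for bits in (2, 4, 6, 8, 10, 12):
    print(bits, round(simulated_output_min_entropy(bits, 4), 3))
```

The result can never exceed either `input_bits` or `n_out`, since the most likely output has probability at least $2^{-n_{out}}$ and at least $2^{-\text{input\_bits}}$. This tractable brute-force approach only works for small sizes, which is why the experiments stop at conditioners of a few bits.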

somewhat conservative, estimate of entropy from conditioner

smoother and better behaved even as we get to 12- and 14-bit conditioner sizes.

intended to increase entropy/output.

gives reasonable answers for small (tractable) cases.

choose another value?

(and thus more accurate?)

(99% confidence interval) estimate.