SLIDE 1

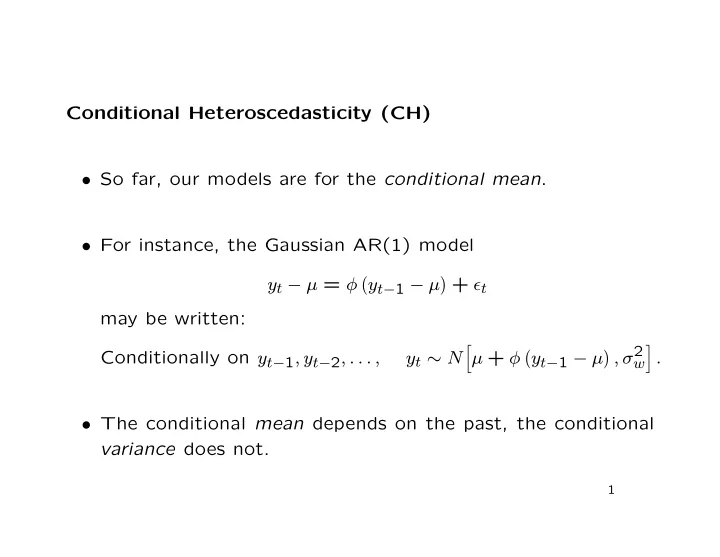

Conditional Heteroscedasticity (CH)

- So far, our models are for the conditional mean.

- For instance, the Gaussian AR(1) model

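$$y_t - \mu = \phi\,(y_{t-1} - \mu) + \epsilon_t, \qquad \epsilon_t \overset{\text{iid}}{\sim} N(0, \sigma_w^2),$$

may be written: conditionally on $y_{t-1}, y_{t-2}, \ldots$,

$$y_t \sim N\big(\mu + \phi\,(y_{t-1} - \mu),\ \sigma_w^2\big).$$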
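The conditional distribution of the Gaussian AR(1) can be checked numerically. The following is a minimal Python sketch using only the standard library. The function names `simulate_ar1` and `conditional_moments`, the parameter values (`mu = 1.0`, `phi = 0.7`, `sigma_w = 0.5`), the starting value $y_0 = \mu$, and the seed are all illustrative assumptions and do not come from the slides. The sketch shows that the conditional mean moves with $y_{t-1}$, while the conditional variance stays fixed at $\sigma_w^2$ whether $y_{t-1}$ lies near $\mu$ or far from it.

```python
import random
import statistics

def simulate_ar1(mu, phi, sigma_w, n, seed=0):
    # Gaussian AR(1): y_t - mu = phi * (y_{t-1} - mu) + eps_t, eps_t iid N(0, sigma_w^2)
    rng = random.Random(seed)
    y = [mu]  # assumed starting value y_0 = mu (arbitrary choice for this sketch)
    for _ in range(n - 1):
        y.append(mu + phi * (y[-1] - mu) + rng.gauss(0.0, sigma_w))
    return y

def conditional_moments(y_prev, mu, phi, sigma_w):
    # Given y_{t-1}, y_{t-2}, ...: y_t ~ N(mu + phi * (y_{t-1} - mu), sigma_w^2)
    return mu + phi * (y_prev - mu), sigma_w ** 2

mu, phi, sigma_w = 1.0, 0.7, 0.5  # illustrative parameter values

# The conditional mean changes with y_{t-1}; the conditional variance does not.
for y_prev in (0.0, 1.0, 3.0):
    print(y_prev, conditional_moments(y_prev, mu, phi, sigma_w))

# Empirical check: one-step residuals y_t - E[y_t | past] have the same spread
# whether y_{t-1} was close to mu or far from it.
y = simulate_ar1(mu, phi, sigma_w, n=20000, seed=42)
resid = [y[t] - conditional_moments(y[t - 1], mu, phi, sigma_w)[0] for t in range(1, len(y))]
prev_dev = [abs(y[t - 1] - mu) for t in range(1, len(y))]
cut = statistics.median(prev_dev)
calm = [r for r, d in zip(resid, prev_dev) if d <= cut]
far = [r for r, d in zip(resid, prev_dev) if d > cut]
print(statistics.pvariance(calm), statistics.pvariance(far))  # both should be close to sigma_w**2 = 0.25
```

Splitting the residuals by the size of $|y_{t-1} - \mu|$ is one simple way to see a constant conditional variance. In this sketch the residuals are just the simulated $\epsilon_t$, which are drawn independently of the past.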

- The conditional mean depends on the past, the conditional