Co-authors: C-F Chien, Y-J Chen, National Tsing Hua University, ISMI

Bayesian Inference Technique for Data Mining for Yield Enhancement in Semiconductor Manufacturing Data
Presenter: M. Khakifirooz
Co-authors: C-F Chien, Y-J Chen, National Tsing Hua University
ISMI 2015, 16th-18th Oct., KAIST, Daejeon, Korea


Outline
The Purpose of Bayesian Inference
Data Structure provided by Data Model
Data Analysis Approach
• Bayesian Variable Selection (BVS)
• Data Clearance
• Yield Classification
Conclusive Research Framework
Final Decision Table
Conclusion & Path Forward

The Purpose of Bayesian Inference (diagram: Bayesian Inference in relation to the Naïve Bayesian Classifier, the Gaussian Bayesian Classifier, Bayesian Networks, the Learning Curve, …)

The Purpose of Bayesian Inference
Yield Learning Curve of Semiconductor Manufacturing: Human Experience
In addition to data and analytics, cumulative system analysis, engineering training and experience significantly enhance yield improvement (Effron, 1996; Tobin et al., 1999).
(Figure: Yield Learning Curve of Semiconductor Manufacturing)

Data Structure provided by Data Model
Nominal variables:
• $i = 1, \dots, M$: process stages; $N$: sample size
• $1 \le k_i \le N$: number of specific tools at stage $i$
• $n_{ij},\ j = 1, \dots, k_i$: frequency of each specific tool
• $1 \le P_{n_{ij}} \le n_{ij}$: number of existing chambers for each tool
• $p_l,\ l = 1, \dots, P_{n_{ij}}$: frequency of each existing chamber

$$N = \sum_{j=1}^{k_i} \sum_{l=1}^{P_{n_{ij}}} p_l, \qquad N \times M = \sum_{i=1}^{M} \sum_{j=1}^{k_i} \sum_{l=1}^{P_{n_{ij}}} p_l$$

| Obs. | var 1 | var 2 |
|---|---|---|
| $n_1$ | $a_1$ | $a_2$ |
| $n_2$ | $a_1$ | $b_2$ |
| $n_3$ | $b_1$ | NA |

Dummy variables:

| Obs. | var 1-$a_1$ | var 1-$b_1$ | var 2-$a_2$ | var 2-$b_2$ |
|---|---|---|---|---|
| $n_1$ | 1 | 0 | 1 | 0 |
| $n_2$ | 1 | 0 | 0 | 1 |
| $n_3$ | 0 | 1 | 0 | 0 |

Response variable: %Yield (continuous)
Explanatory variables: Stages (tools-chambers) (nominal); Stages (process time) (continuous)
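A minimal sketch of the dummy-variable construction, assuming each observation is a mapping from a nominal variable to its level, with `None` standing for NA. The function name `dummy_code` and the record layout are hypothetical; the data are the three observations of the slide, $n_1 = (a_1, a_2)$, $n_2 = (a_1, b_2)$, $n_3 = (b_1, \text{NA})$.

```python
def dummy_code(records, variables):
    """Return (column_names, rows) with one 0/1 column per observed level of each variable.

    A missing value (None) produces zeros in all dummies of that variable.
    """
    # Collect the observed levels of each variable in first-seen order.
    levels = {v: [] for v in variables}
    for rec in records:
        for v in variables:
            value = rec.get(v)
            if value is not None and value not in levels[v]:
                levels[v].append(value)

    columns = [f"{v}-{lvl}" for v in variables for lvl in levels[v]]
    rows = []
    for rec in records:
        row = []
        for v in variables:
            for lvl in levels[v]:
                row.append(1 if rec.get(v) == lvl else 0)
        rows.append(row)
    return columns, rows


observations = [
    {"var1": "a1", "var2": "a2"},  # n1
    {"var1": "a1", "var2": "b2"},  # n2
    {"var1": "b1", "var2": None},  # n3 (var2 is NA)
]
columns, rows = dummy_code(observations, ["var1", "var2"])
print(columns)  # ['var1-a1', 'var1-b1', 'var2-a2', 'var2-b2']
for r in rows:
    print(r)    # [1, 0, 1, 0] / [1, 0, 0, 1] / [0, 1, 0, 0]
```

Because a missing level only produces zeros, observation $n_3$ contributes nothing to the var 2 columns.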

Data Structure provided by Data Model

| Obs. | Stage 1 | Stage 2 |
|---|---|---|
| obs. 1 | Tool 1, Chamber 1, Date 1.1 | Tool 2, Chamber 2, Date 1.2 |
| obs. 2 | Tool 1, Chamber 2, Date 2.1 | Tool 1, Chamber 1, Date 2.2 |
| obs. 3 | Tool 2, Chamber 1, Date 3.1 | Tool 2, Chamber 2, Date 3.2 |

Each stage-tool-chamber combination becomes one dummy variable (each table also carries the Yield response):

| Obs. | s1.T1.Ch1 | s1.T1.Ch2 | s1.T2.Ch1 | s2.T1.Ch1 | s2.T2.Ch2 |
|---|---|---|---|---|---|
| obs. 1 | 1 | 0 | 0 | 0 | 1 |
| obs. 2 | 0 | 1 | 0 | 1 | 0 |
| obs. 3 | 0 | 0 | 1 | 0 | 1 |

In the time-based version, the processing date replaces the 1:

| Obs. | s1.T1.Ch1 | s1.T1.Ch2 | s1.T2.Ch1 | s2.T1.Ch1 | s2.T2.Ch2 |
|---|---|---|---|---|---|
| obs. 1 | Date 1.1 | 0 | 0 | 0 | Date 1.2 |
| obs. 2 | 0 | Date 2.1 | 0 | Date 2.2 | 0 |
| obs. 3 | 0 | 0 | Date 3.1 | 0 | Date 3.2 |
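The stage-tool-chamber coding can be sketched as follows, assuming each observation is stored as a list with one `(tool, chamber, date)` tuple per stage. The function name, the column naming `s{stage}.T{tool}.Ch{chamber}` and the placeholder date strings are hypothetical illustrations of the layout on the slide (obs. 1 = Tool 1/Chamber 1 then Tool 2/Chamber 2, and so on).

```python
def stage_tool_chamber_matrix(records, fill_with_date=False):
    """Build one column per (stage, tool, chamber) combination.

    Each record is a list with one (tool, chamber, date) tuple per stage.
    A cell holds 1 (or the processing date if fill_with_date is True) when the
    observation went through that combination, and 0 otherwise.
    """
    # Sort numerically by (stage, tool, chamber) so that s10 does not precede s2.
    keys = sorted({(stage, tool, chamber)
                   for rec in records
                   for stage, (tool, chamber, _date) in enumerate(rec, start=1)})
    columns = [f"s{s}.T{t}.Ch{c}" for s, t, c in keys]
    matrix = []
    for rec in records:
        cells = {(stage, tool, chamber): (date if fill_with_date else 1)
                 for stage, (tool, chamber, date) in enumerate(rec, start=1)}
        matrix.append([cells.get(key, 0) for key in keys])
    return columns, matrix


# Hypothetical encoding of obs. 1-3: (tool, chamber, date) for stage 1, then stage 2.
observations = [
    [(1, 1, "Date 1.1"), (2, 2, "Date 1.2")],
    [(1, 2, "Date 2.1"), (1, 1, "Date 2.2")],
    [(2, 1, "Date 3.1"), (2, 2, "Date 3.2")],
]
columns, indicators = stage_tool_chamber_matrix(observations)
_, dates = stage_tool_chamber_matrix(observations, fill_with_date=True)
print(columns)  # ['s1.T1.Ch1', 's1.T1.Ch2', 's1.T2.Ch1', 's2.T1.Ch1', 's2.T2.Ch2']
```

Only combinations that actually occur get a column, which is what keeps the dummy matrix from growing to every possible stage, tool and chamber triple.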

Data Structure provided by Data Model

| Obs. | var 1-$b_1$ | var 1-$a_1$ | var 1-$c_1$ |
|---|---|---|---|
| $n_1$ | 1 | 0 | 0 |
| $n_2$ | 0 | 0 | 1 |
| $n_3$ | 0 | 1 | 0 |

$$(\text{var 1-}a_1,\ \text{var 1-}b_1,\ \text{var 1-}c_1) \sim \text{Multinomial}\left(\tfrac{1}{3}, \tfrac{1}{3}, \tfrac{1}{3}\right)$$

Pr(i-th variable selected) = 1/3: the selection probability based on engineer experience.

(Figure: the probability simplex with vertices (1,0,0), (0,1,0) and (0,0,1), one per level var 1-$a_1$, var 1-$b_1$, var 1-$c_1$.) To randomly pick a point in this space, we need a continuous distribution over the multinomial parameters (the posterior distribution): the Dirichlet distribution.
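Engineer experience can be encoded as Dirichlet pseudo-counts: the Dirichlet is conjugate to the multinomial, so observed level counts are simply added to the prior parameters, and a random point on the simplex is a normalised vector of Gamma draws. A minimal sketch, assuming a symmetric prior $\text{Dirichlet}(1, 1, 1)$ (prior mean 1/3 per level, matching the slide's selection probabilities) and the counts from $n_1, n_2, n_3$; the prior strength and the function names are hypothetical.

```python
import random


def dirichlet_posterior(prior_alpha, counts):
    """Conjugate update: Dirichlet(alpha) prior + multinomial counts -> Dirichlet(alpha + counts)."""
    return [a + c for a, c in zip(prior_alpha, counts)]


def dirichlet_mean(alpha):
    total = sum(alpha)
    return [a / total for a in alpha]


def dirichlet_sample(alpha, rng):
    """One random point on the probability simplex via normalised Gamma(alpha_i, 1) draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [d / total for d in draws]


levels = ["var1-a1", "var1-b1", "var1-c1"]
prior = [1.0, 1.0, 1.0]  # hypothetical pseudo-counts: equal prior belief in each level
counts = [1, 1, 1]       # n3 -> a1, n1 -> b1, n2 -> c1
posterior = dirichlet_posterior(prior, counts)
rng = random.Random(42)
draws = [dirichlet_sample(posterior, rng) for _ in range(5000)]
print(posterior)                  # [2.0, 2.0, 2.0]
print(dirichlet_mean(posterior))  # each component equals 1/3
```

A larger prior total (for example `[10, 10, 10]`) would express stronger engineer confidence and let the data move the posterior less.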

Data Analysis Approach
Critical Phenomena:
i. High dimensionality, caused by transforming categorical variables into dummies
ii. Multicollinearity, caused by the nature of dummy variables
iii. A complicated posterior distribution, which makes direct variable selection hard
Remedy: Approximate Inference with Sampling
Use random sampling (MCMC techniques: Gibbs sampler, Metropolis-Hastings, …) to approximate the distribution and select the significant explanatory variables.
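Point ii can be checked directly: when every level of a categorical variable gets a dummy, the dummies of each fully observed row sum to 1, so together they reproduce the intercept column and the design matrix loses rank. A small sketch with hypothetical data (six observations of var 1 with levels $a_1, b_1, c_1$), using exact rational Gaussian elimination; dropping one reference level restores full column rank.

```python
from fractions import Fraction


def matrix_rank(rows):
    """Rank via Gaussian elimination with exact rational arithmetic."""
    m = [[Fraction(x) for x in row] for row in rows]
    n_cols = len(m[0]) if m else 0
    rank = 0
    for col in range(n_cols):
        pivot = next((r for r in range(rank, len(m)) if m[r][col] != 0), None)
        if pivot is None:
            continue  # no pivot: this column is a combination of earlier columns
        m[rank], m[pivot] = m[pivot], m[rank]
        for r in range(len(m)):
            if r != rank and m[r][col] != 0:
                factor = m[r][col] / m[rank][col]
                m[r] = [a - factor * b for a, b in zip(m[r], m[rank])]
        rank += 1
    return rank


levels = ["a1", "b1", "c1"]
observed = ["a1", "b1", "c1", "a1", "b1", "c1"]  # hypothetical var 1 values

# Intercept plus one dummy per level: columns [1, a1, b1, c1].
full = [[1] + [int(v == lvl) for lvl in levels] for v in observed]
# Intercept plus dummies for b1 and c1 only (a1 is the reference level).
reduced = [[1] + [int(v == lvl) for lvl in levels[1:]] for v in observed]

print(len(full[0]), matrix_rank(full))        # 4 3 -> rank deficient
print(len(reduced[0]), matrix_rank(reduced))  # 3 3 -> full column rank
```

With 1460 dummies the same exact dependence (and many near-dependences) appears across stages, which is one reason the approach turns to sampling-based selection rather than a single least-squares fit.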

Data Analysis Approach: Gibbs Sampler
Suppose $(x_1, x_2) \sim \Pr(x_1, x_2)$. Beginning with initial values $x_1^{(0)}, x_2^{(0)}$, sample at iteration $t$ as follows:

| Iteration | Sample $x_1$ | Sample $x_2$ |
|---|---|---|
| $t$ | $x_1^{(t)} \sim \Pr\left(x_1 \mid x_2^{(t-1)}\right)$ | $x_2^{(t)} \sim \Pr\left(x_2 \mid x_1^{(t)}\right)$ |

Iterate the above step ($t = 1, \dots, k$) until the sample values have the same distribution as if they were sampled from the true posterior joint distribution. Based on the frequency of visits, select the most probable variables.
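The two-step update can be tried on a target whose conditionals are known in closed form. A sketch, assuming (hypothetically) that $\Pr(x_1, x_2)$ is a standard bivariate normal with correlation $\rho$, for which $x_1 \mid x_2 \sim N(\rho x_2, 1 - \rho^2)$ and symmetrically for $x_2 \mid x_1$; in the BVS application the conditionals would instead come from the model's posterior.

```python
import math
import random


def gibbs_bivariate_normal(rho, n_iter, x1_init=0.0, x2_init=0.0, seed=0):
    """Gibbs sampler for (x1, x2) ~ N(0, [[1, rho], [rho, 1]])."""
    rng = random.Random(seed)
    cond_sd = math.sqrt(1.0 - rho ** 2)  # sd of x1 | x2 and of x2 | x1
    x1, x2 = x1_init, x2_init
    samples = []
    for _ in range(n_iter):
        x1 = rng.gauss(rho * x2, cond_sd)  # x1^(t) ~ Pr(x1 | x2^(t-1))
        x2 = rng.gauss(rho * x1, cond_sd)  # x2^(t) ~ Pr(x2 | x1^(t))
        samples.append((x1, x2))
    return samples


def correlation(pairs):
    """Pearson correlation of a list of (a, b) pairs."""
    n = len(pairs)
    m1 = sum(a for a, _ in pairs) / n
    m2 = sum(b for _, b in pairs) / n
    cov = sum((a - m1) * (b - m2) for a, b in pairs)
    v1 = sum((a - m1) ** 2 for a, _ in pairs)
    v2 = sum((b - m2) ** 2 for _, b in pairs)
    return cov / math.sqrt(v1 * v2)


# Start far from the mode to show the chain forgetting its initial values.
samples = gibbs_bivariate_normal(rho=0.8, n_iter=20000, x1_init=5.0, x2_init=-5.0, seed=1)
kept = samples[1000:]  # discard burn-in iterations drawn before the chain has mixed
print(round(correlation(kept), 2))  # close to 0.8
```

Discarding the burn-in is the practical form of "iterating until the samples have the posterior distribution"; the retained draws are then summarised, for example by visit frequencies.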

Data Analysis Approach: Data Clearance
When X is categorical (dummy var.) and Y is a quantitative variable:
- parametric or non-parametric?
- dependent or independent?
- unbalanced class?

| Yield value (%) | Class | Representative var. |
|---|---|---|
| < 53.12 | Bad Yield | 1 |
| 53.12 ≤ yield ≤ 57.51 | Middle Yield | ignore |
| > 57.51 | Good Yield | 0 |
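A minimal sketch of the yield classification, using the cutting points 53.12 and 57.51 from the table: middle-yield wafers are dropped, and the remaining class counts give a quick look at the class-imbalance question. The function names and example yields are hypothetical.

```python
BAD_CUTOFF = 53.12   # yield (%) below this -> bad wafer
GOOD_CUTOFF = 57.51  # yield (%) above this -> good wafer


def yield_class(yield_pct):
    """Representative variable: 1 = bad yield, 0 = good yield, None = middle yield (ignored)."""
    if yield_pct < BAD_CUTOFF:
        return 1
    if yield_pct > GOOD_CUTOFF:
        return 0
    return None


def label_wafers(yields):
    """Keep only bad/good wafers and report how many of each remain."""
    kept = [(y, yield_class(y)) for y in yields if yield_class(y) is not None]
    n_bad = sum(label for _, label in kept)
    return kept, n_bad, len(kept) - n_bad


kept, n_bad, n_good = label_wafers([50.0, 55.0, 60.0, 52.0])
print(kept, n_bad, n_good)  # [(50.0, 1), (60.0, 0), (52.0, 1)] 2 1
```

Both cutting points belong to the middle class, so a wafer at exactly 53.12 or 57.51 is ignored.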

Data Analysis Approach: Data Clearance

| | Variable II: Level a | Variable II: Level b |
|---|---|---|
| Variable I: Level c | $f_{ca}$ | $f_{cb}$ |
| Variable I: Level d | $f_{da}$ | $f_{db}$ |

MEASUREMENT of AGREEMENT (W. S. Robinson, 1957)
If both var. I and var. II are explanatory:
- test the interchangeability of measures
- measurement of the degree of homogeneity
If var. I is explanatory and var. II is the response:
- measurement of the reliability of an instrument (test/scale)
- measurement of the objectivity or lack of bias

Cohen's Kappa $\kappa$:
- $\kappa < 0$: "No agreement"
- $0 \le \kappa < 0.2$: "Slight agreement"
- $0.2 \le \kappa < 0.4$: "Fair agreement"
- $0.4 \le \kappa < 0.6$: "Moderate agreement"
- $0.6 \le \kappa < 0.8$: "Substantial agreement"
- $0.8 \le \kappa \le 1$: "Almost perfect agreement"
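Cohen's kappa compares the observed agreement $p_o$ (the share of counts on the diagonal) with the agreement $p_e$ expected by chance from the row and column margins: $\kappa = (p_o - p_e)/(1 - p_e)$. A sketch for a square table of counts such as the $f$ table, assuming rows and columns are ordered so that matching levels share the same index; the interpretation scale follows the slide, and the example counts are made up.

```python
def cohens_kappa(table):
    """Cohen's kappa for a square contingency table of counts (rows: var. I, columns: var. II)."""
    k = len(table)
    n = sum(sum(row) for row in table)
    p_observed = sum(table[i][i] for i in range(k)) / n
    row_tot = [sum(row) for row in table]
    col_tot = [sum(table[i][j] for i in range(k)) for j in range(k)]
    p_expected = sum(row_tot[i] * col_tot[i] for i in range(k)) / n ** 2
    if p_expected == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_observed - p_expected) / (1 - p_expected)


def interpret_kappa(kappa):
    """Agreement class, using the thresholds listed on the slide."""
    if kappa < 0:
        return "No agreement"
    if kappa < 0.2:
        return "Slight agreement"
    if kappa < 0.4:
        return "Fair agreement"
    if kappa < 0.6:
        return "Moderate agreement"
    if kappa < 0.8:
        return "Substantial agreement"
    return "Almost perfect agreement"


kappa = cohens_kappa([[30, 10], [10, 50]])  # p_o = 0.80, p_e = 0.52
print(round(kappa, 3), interpret_kappa(kappa))  # 0.583 Moderate agreement
```

Because $p_e$ is subtracted out, two dummies that are both almost always 0 do not score as "agreeing" merely because of their shared majority level.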

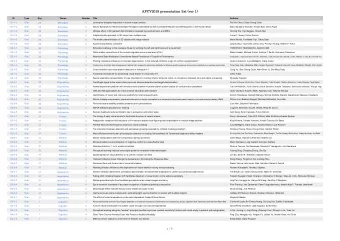

Research Framework (I)
A Bayesian Framework for Semiconductor Manufacturing Data (flowchart):
• Problem Definition
• Data Integration
• Data Preparation: Dummy Variable Construction for Integrated Variables (1460 var.)
• Data Mining & Key Factor Screening: Cohen's Kappa statistics for each pair of input variables; wrap the associated variables; No Agreement
• Assign Cutting Point & Bad/Middle/Good Wafers

The class distribution for the Kappa test for each pair of input variables:

| Agreement class | Number of pairs |
|---|---|
| Almost perfect agreement | 3 |
| Substantial agreement | 109 |
| Moderate agreement | 1,764 |
| Fair agreement | 24,539 |
| Slight agreement | 280,081 |
| No agreement | 758,574 |
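As a consistency check on the table, the six class counts sum to the number of unordered pairs among the 1460 dummy variables, $\binom{1460}{2} = 1{,}065{,}070$, so each pair was tested once; about 97.5% of the pairs fall in the "No" or "Slight" agreement classes ($\kappa < 0.2$). The snippet reproduces this arithmetic; only the counts come from the slide.

```python
from math import comb

N_VARIABLES = 1460  # dummy variables after integration, from the framework
kappa_class_counts = {
    "Almost perfect agreement": 3,
    "Substantial agreement": 109,
    "Moderate agreement": 1764,
    "Fair agreement": 24539,
    "Slight agreement": 280081,
    "No agreement": 758574,
}

n_pairs = comb(N_VARIABLES, 2)  # unordered pairs of input variables
total = sum(kappa_class_counts.values())
low_agreement = kappa_class_counts["No agreement"] + kappa_class_counts["Slight agreement"]

print(n_pairs, total)                   # 1065070 1065070
print(round(low_agreement / total, 4))  # 0.9752
```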

Research Framework (II)
Framework stages (flowchart):
• Data Mining & Key Factor Screening: Cohen's Kappa statistics for each pair of X & Y; Agreement / No Agreement; Data Clearance (≤ 0.2); BVS via Gibbs Sampler; GLM Construction with Gaussian Model
• Construction, Evaluation & Interpretation: A Comparison to the Wrapped Distribution & Variables; Repeated Random Sub-sampling Validation; Define Abnormal Devices & Time

| Model | RMSE Min | RMSE Median | RMSE Max | Adjusted R² Min | Adjusted R² Median | Adjusted R² Max |
|---|---|---|---|---|---|---|
| Gibbs + GLM | 1.842 | 2.653 | 2.841 | 0.046 | 0.371 | 0.711 |
| GBM + GLM | 2.534 | 3.051 | 3.332 | 0.000 | 0.053 | 0.337 |
| RF + GLM | 2.268 | 2.838 | 3.660 | 0.016 | 0.293 | 0.507 |
| GLM | 7.951 | 34.60 | 139.8 | 0.000 | 0.029 | 0.214 |

Number of resamples: 20; number of iterations: 2.
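Repeated random sub-sampling validation refits the model on a fresh random training split each time and scores it on the held-out rows; the table reports the minimum, median and maximum over 20 resamples. A minimal sketch, assuming a single-predictor least-squares line as a stand-in for the Gaussian GLM (a Gaussian GLM with the identity link is fitted by least squares), a 30% test share, synthetic data, and hypothetical function names.

```python
import math
import random
import statistics


def fit_line(xs, ys):
    """Ordinary least squares for y = b0 + b1 * x (one predictor, for illustration)."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    b1 = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    return my - b1 * mx, b1


def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def repeated_subsampling_rmse(xs, ys, n_resamples=20, test_fraction=0.3, seed=0):
    """Refit on a random training split n_resamples times; return (min, median, max) test RMSE."""
    rng = random.Random(seed)
    idx = list(range(len(xs)))
    n_test = max(1, round(test_fraction * len(xs)))
    scores = []
    for _ in range(n_resamples):
        rng.shuffle(idx)  # new random split for every resample
        test, train = idx[:n_test], idx[n_test:]
        b0, b1 = fit_line([xs[i] for i in train], [ys[i] for i in train])
        preds = [b0 + b1 * xs[i] for i in test]
        scores.append(rmse([ys[i] for i in test], preds))
    return min(scores), statistics.median(scores), max(scores)


# Synthetic example: y = 2x + 1 plus unit Gaussian noise.
rng = random.Random(7)
xs = [float(i) for i in range(60)]
ys = [2.0 * x + 1.0 + rng.gauss(0.0, 1.0) for x in xs]
rmse_min, rmse_median, rmse_max = repeated_subsampling_rmse(xs, ys, n_resamples=20)
print(rmse_min, rmse_median, rmse_max)
```

Reporting the spread over resamples, not a single split, is what makes the min/median/max comparison between the Gibbs, GBM, RF and plain GLM pipelines meaningful.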

Decision Graph (figure; classes: High Yield, Middle Yield, Low Yield)

Decision Table

| Factors | Date: Bad | Date: Good |
|---|---|---|
| Stage10 - Tool2 - Chamber3 | before 8/29/2014 2:32 | after 8/29/2014 12:50 |
| Stage12 - Tool2 - Chamber1 | between 8/30/2014 3:26 & 8/30/2014 3:43 | before 8/29/2014 10:55 |
| Stage12 - Tool2 - Chamber4 | after 8/29/2014 7:36 till 8/30/2014 3:44 | before 8/29/2014 7:36 |
| Stage13 - Tool5 - Chamber2 | - | generally affected the high yield |
| Stage17 - Tool2 - Chamber2 | after 8/30/2014 12:21 | before 8/30/2014 10:37 |
| Stage23 - Tool3 - Chamber2 | - | generally affected the high yield |
| Stage44 - Tool7 - Chamber2 and Chamber3 | at 9/3/2014 | at 9/1/2014 |
| Stage49 - Tool1 - Chamber4 | at 9/3/2014 | at 9/2/2014 |
| Stage57 - Tool1 - Chamber3 | - | generally affected the high yield |

Conclusion & Path Forward
Based on the empirical results, we validate that the proposed approach has practical viability: adding domain knowledge and experience to the system could improve the results. Domain knowledge might be used to restrict conjunctions in rules to tools, chambers and steps that are related and occur within a reasonable time frame. The data are not sampled from a stationary population; hence, over time, the results may change significantly, or some empirical answers might be rejected on the basis of engineers' domain knowledge, which does not mean that the results are incorrect. A result may be a proxy for one or more events occurring elsewhere or in other time periods; hence, a simulation study is an essential tool for evaluating the accuracy of the proposed method.
