Overview

Background

- Methods and Optimity Matrix have been engaged to undertake a formative evaluation of three sites from the earlier New Models of Care (Accelerate) programme. These sites have since joined Pioneers through the Wave Two selection process. We are sharing the approach and learning with all Pioneers.
- The NMoC (Accelerate) Programme formative evaluation involves working closely with local health economies to review and provide constructive feedback on the design and implementation of the programme locally and nationally.
- This webinar is one of the evaluation feedback mechanisms to help maximise success for each of the health economies involved in the Pioneer Programme.

Learning outcomes

By the end of the session you should be able to:

- Identify the key steps for planning and implementing an evaluation, including what happens after the evaluation;
- Identify the basic principles of economic evaluation;
- Use the information provided and the key resources to avoid some of the common evaluation pitfalls.

Planning an evaluation in 6 steps

1. Identifying the evaluation audience and objectives;
2. Identifying what type of evaluation is appropriate;
3. Setting out the evaluation governance structure;
4. Deciding and allocating the resources required;
5. Identifying the data requirements; and
6. Setting out the timing of the evaluation.

Understanding the programme logic

- A logic model sets out what the intervention/programme is supposed to achieve and how
- Examining the validity of the intervention logic helps to:
  - Ask the right questions when designing the evaluation;
  - Detect and analyse flaws or shortcomings that may become apparent in the later stages of the evaluation; and
  - Identify key lessons learned.

A visual representation of a logic model

Defining the logic model components

Developing an evaluation plan

- Why develop an evaluation plan?
  - It identifies how you will conduct the evaluation, what resources you will need, your evaluation objectives and your evaluation stakeholders
- When to develop an evaluation plan?
  - Start early, before you implement a programme or initiative
- How to develop an evaluation plan?
  - 4 main steps:
    - Clarify programme objectives and goals
    - Develop evaluation questions
    - Develop evaluation methods
    - Set up a timeline for evaluation activities

Setting the right objectives

- Objectives should always be SMART (specific, measurable, achievable, realistic and time-specific)
- They should also include an indication of:
  - What behaviour you are trying to change
  - Who the target population for this change is
  - How much change is expected
  - When this change will be measured (over what time period)
- For example:
  - By the end of the programme, 98% of participants (people aged 65+ with 3 or more comorbid conditions) will report successfully receiving day-to-day LTC services in an extensivist clinic setting
- They should be achievable and consider what can realistically be achieved in terms of scale and scope with the given time and resources
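One way to keep an objective measurable is to record it as structured data and check results against it. The sketch below encodes the example objective above; the class name `Objective`, its field names, the measurement date and the survey counts are all hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Objective:
    behaviour: str        # what behaviour you are trying to change
    population: str       # who the target population is
    target_percent: int   # how much change is expected (whole percent)
    measure_by: date      # when the change will be measured

    def achieved(self, n_reporting: int, n_participants: int) -> bool:
        """Return True if the share reporting the behaviour meets the target."""
        if n_participants <= 0:
            raise ValueError("need at least one participant")
        # Integer comparison avoids floating-point rounding at the threshold.
        return n_reporting * 100 >= self.target_percent * n_participants


objective = Objective(
    behaviour="reports receiving day-to-day LTC services in an extensivist clinic",
    population="people aged 65+ with 3 or more comorbid conditions",
    target_percent=98,
    measure_by=date(2016, 3, 31),  # hypothetical end-of-programme date
)

# Hypothetical survey result: 196 of 200 participants report the behaviour.
print(objective.achieved(n_reporting=196, n_participants=200))
```

Writing the objective down this way forces each SMART element (behaviour, population, amount of change, timing) to be stated explicitly before data collection starts.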

Setting evaluation indicators

- Decide how the questions will be answered (indicators)
  - Are they measurable quantitatively or qualitatively?
- Decide where this information will come from (data source)
  - From monitoring data or by collecting information from patients?
- Decide how you will gather this information (method)
  - Using surveys, interviews or focus groups, or will it come from an existing data source?

Developing evaluation methods

- Monitoring and feedback systems
- Process and outcome measures
- Observational system for live monitoring
- Satisfaction surveys (staff and/or patients)
- Goals, process and outcome measures
- Behavioural surveys
- Interviews and/or focus groups with patients/staff
- Wider measures of impact (including economic impact)

Establishing a comparison group

- You need a comparison population to establish what would have happened if there had been no change. This can be done by:
  - Population segmentation and matching with a similar population based on key characteristics;
  - Using participants who were invited, but declined to participate in the programme; and
  - If no comparison group is possible, using a pre- and post-test with programme participants.
- A range of statistical techniques can help segment your population by characteristics of interest so that you can match for the purpose of comparing the intervention’s impact
  - Population segmentation: regression analysis, latent class analysis, etc.
  - Matching: propensity score matching, etc.
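To illustrate the matching step, the sketch below pairs each programme participant with the non-participant whose propensity score is closest. It assumes the scores have already been estimated elsewhere (for example, with a regression on key characteristics). The function name `match_nearest`, the caliper of 0.05 and all identifiers and scores are hypothetical. This is a simple greedy 1:1 match without replacement, not a full propensity score analysis.

```python
def match_nearest(treated, controls, caliper=0.05):
    """Greedy 1:1 nearest-neighbour matching on a precomputed propensity score.

    treated, controls: dicts mapping a person ID to a score between 0 and 1.
    caliper: largest score difference accepted for a match.
    Each control is used at most once (matching without replacement).
    """
    available = dict(controls)  # copy so the caller's data is not modified
    pairs = []
    for t_id, t_score in sorted(treated.items(), key=lambda item: item[1]):
        if not available:
            break
        # Closest remaining control by absolute score difference.
        c_id = min(available, key=lambda c: abs(available[c] - t_score))
        if abs(available[c_id] - t_score) <= caliper:
            pairs.append((t_id, c_id))
            del available[c_id]
    return pairs


# Hypothetical scores for illustration only.
participants = {"p1": 0.30, "p2": 0.62, "p3": 0.90}
non_participants = {"c1": 0.28, "c2": 0.60, "c3": 0.45, "c4": 0.33}

pairs = match_nearest(participants, non_participants)
print(pairs)
```

Participants with no sufficiently similar non-participant (here `p3`) are left unmatched rather than paired with a poor comparator, which is the purpose of the caliper.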

Key concepts for economic evaluation

- Answers evaluation questions related to effectiveness or added value
- To assess the cost-effectiveness of interventions, three key pieces of information are needed:

Methods for conducting economic evaluation

Determining the economic benefits

- To convert the costs, health effects and economic benefits into a measure of cost-effectiveness, we need to compare an intervention to a counterfactual
- A counterfactual is usually what is currently in place (such as usual care) to deal with the problem under consideration. It is also quite common for the counterfactual to be a “do nothing” scenario, for example what would happen if no intervention were available
- Costs, health effects and economic benefits are calculated for both the counterfactual and the intervention
- The incremental costs and economic benefits (the difference between the counterfactual and the intervention) are used to generate an Incremental Cost Effectiveness Ratio

An economic decision tree

Determining cost-effectiveness

Incremental Cost Effectiveness Ratio (ICER) = Incremental costs / Incremental benefits = (A - B) / (C - D)

ICER ≤ £20,000: cost-effective
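The ratio can be sketched in a few lines of code. The example assumes A and B are the total costs of the intervention and the counterfactual, and C and D are their total benefits; the slide text does not define these letters, so this mapping is an assumption. The names `Option`, `icer` and `is_cost_effective` and all figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Option:
    name: str
    cost: float     # total cost in pounds
    benefit: float  # total benefit; the unit (e.g. QALYs) is an assumption


def icer(intervention: Option, counterfactual: Option) -> float:
    """Incremental cost per unit of incremental benefit: (A - B) / (C - D)."""
    incremental_cost = intervention.cost - counterfactual.cost
    incremental_benefit = intervention.benefit - counterfactual.benefit
    if incremental_benefit <= 0:
        # With no extra benefit the ratio is undefined or misleading.
        raise ValueError("intervention provides no additional benefit")
    return incremental_cost / incremental_benefit


def is_cost_effective(ratio: float, threshold: float = 20_000) -> bool:
    """Apply the 'ICER <= 20,000 pounds' rule stated above."""
    return ratio <= threshold


# Hypothetical figures for illustration only.
usual_care = Option("usual care", cost=100_000, benefit=8.0)
new_service = Option("integrated care", cost=150_000, benefit=12.0)

ratio = icer(new_service, usual_care)
print(f"ICER: £{ratio:,.0f} per unit of benefit")
print("Cost-effective:", is_cost_effective(ratio))
```

With these figures the incremental cost is £50,000 and the incremental benefit is 4 units, giving an ICER of £12,500, which falls below the £20,000 threshold.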

What to do with evaluation information?

Common pitfalls to avoid

- Ensure that there is a clear evidence base for the problem you are trying to address with your programme
  - Documented and evidenced case for change
  - Might require a feasibility study
- Build evaluation into programme design
  - Start early: do not wait until the programme/pilot is over before thinking about evaluating
- Ensure that your objectives are clear, concise, measurable and achievable
  - You will need to spend enough time to get these right and to ensure that all involved are on the same page
- Evaluation requires objectivity
  - Someone involved in the design and delivery of the programme should not conduct the evaluation
  - You will need to identify and allocate the appropriate resources for this activity
- Transparency is crucial
  - Keep a well-documented, auditable paper trail of decisions and decision-making
- Engage all of the appropriate stakeholders
  - Know who these are and have a plan for how they will be engaged and when

Useful evaluation resources

- Personal Social Services Research Unit
  - Examples of health and social care evaluations: http://www.pssru.ac.uk/index.php
- CDC Framework for Evaluation
  - Guidance and materials for public health programmes: http://www.cdc.gov/eval/framework/
- HM Treasury Magenta Book
  - Guidance for designing public policy/intervention evaluations: https://www.gov.uk/government/publications/the-magenta-book
- European Commission’s Evaluation Guidelines
  - Comprehensive guidance for undertaking evaluation in the context of the EU: http://ec.europa.eu/smart-regulation/evaluation/index_en.htm
- Better Evaluation
  - Online evaluation community, guidance, resources and examples: http://betterevaluation.org/
- Evaluation Toolbox
  - Resources and guidance: http://evaluationtoolbox.net.au/
- Public Health England Standard Evaluation Frameworks
  - Specific evaluation frameworks for PH interventions: http://www.noo.org.uk/core/frameworks

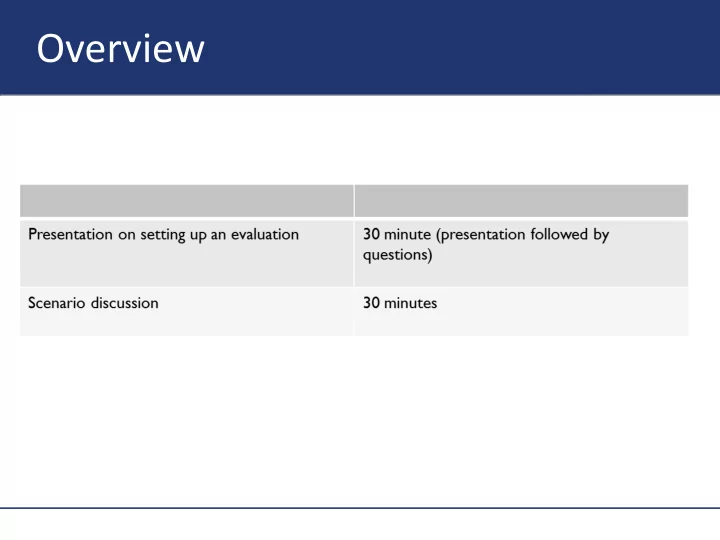

Scenario discussion

30 minutes

Integrated care scenario

Banbridge Integrated Care was launched in April 2014. The service is delivered by a collaboration between Banbridge Community Health Services, Banbridge NHS Foundation Trust and Borough of Banbridge Social Care. It is open to all adults living in Banbridge, with the aim of maintaining independence in the community and preventing unnecessary A&E attendances, hospital and care home admissions and delayed discharges. Users and staff access the service via a single point of contact, which incorporates the referral pathways and immediately addresses user needs. Integrated health and social care teams provide a whole-system response to intermediate care, hospital discharge, urgent care and community rehabilitation. All teams have shared access to a re-ablement service.

Integrated care evaluation scenario

Use the 6 steps for setting up an evaluation to design the evaluation plan for the Banbridge Integrated Care Programme.

Integrated care evaluation scenario

- Step 1: Identifying the evaluation audience and objectives
  - Who might be the audience for this evaluation?
  - Does this programme appear to have been designed to address a particular need? If so, what?
  - What would be an example of a SMART programme objective for this programme?
  - What might be the goals of the evaluation?

Integrated care evaluation scenario

- Step 2: Identifying what type of evaluation is appropriate
  - What type of evaluation might be appropriate (the programme has been running since 2014)?
  - What would be some relevant evaluation questions?

Integrated care evaluation scenario

- Step 3: Setting out the evaluation governance structure
  - What structures would be required to manage this evaluation?
  - How might these structures work together?
  - How might learning gathered over the course of the evaluation be captured and fed back?

Integrated care evaluation scenario

- Step 4: Deciding and allocating the resources required
  - What resources would be required for carrying out this evaluation?
  - Who will be responsible for allocating these resources?

Integrated care evaluation scenario

- Step 5: Identifying the data requirements
  - What indicators could be used to address the evaluation questions?
  - What would be some useful sources of data to gather information on these indicators?
  - How might this data be collected?

Integrated care evaluation scenario

- Step 6: Setting out the timing of the evaluation
  - How long should this evaluation take to carry out and at what time point should it be conducted?
  - When should learning from the evaluation be fed back?

Annex

Additional information

What is evaluation?

- Evaluation is an objective process of understanding how a policy, programme or other intervention was implemented, what effects it had, for whom, how and why. (HM Treasury Magenta Book)
- The main purposes of evaluations (European Commission Evaluation Guidance):
  - To contribute to the design of interventions
  - To provide input for setting political priorities
  - To assist in an efficient allocation of resources
  - To improve the quality of the intervention
  - To report on the achievements of the intervention (accountability)

What is a formative evaluation?

- Formative evaluation generally takes place before or during the implementation phase of a programme, with the aim of improving design and performance
- It complements a summative evaluation and is essential for trying to understand why a programme works or doesn’t, and what other factors (internal and external) are affecting, or are likely to affect, the programme’s progress
- It is a valuable investment that improves the likelihood of achieving a successful outcome through better programme design
- You may also want to undertake a formative evaluation as part of the business case/case for change development or to understand the potential benefits of a proposed programme

When to evaluate?

Types of evaluation

Designing evaluation questions

- Depending on the type of evaluation (e.g. formative or outcome) and the purpose of the evaluation, your evaluation might focus on one or more of the key issues below

Developing evaluation questions (cont’d)

- Relevance: Does the intervention pursue the “right” objectives?
  - What are the needs and expectations of the target population?
  - Have these changed over time?
  - Are there additional problems that the intervention should address?
- Effectiveness: Has the intervention achieved what it was meant to?
  - To what extent have the originally set objectives been achieved?
  - What have been the (positive/negative, intended/unintended) results?
  - What were the reasons for any shortcomings?
- Efficiency: Were the results achieved at a reasonable cost?
  - What costs (financial, opportunity costs, etc.) were incurred?
  - Can the costs and benefits of the intervention be compared?
  - Could the same or better results have been achieved at a lower cost?
- Asking the right questions is contingent upon the evaluation objectives!

Mapping out your evaluation

Useful secondary data sources

- Official statistics
  - BCF data
  - QoF data
  - SUS data
  - HES data
- Public health data
  - Prevalence
  - Unmet need
- A complete list of collected health and social care data can be found on the Health and Social Care Information Centre (HSCIC): http://www.hscic.gov.uk/searchcatalogue
- If there isn’t appropriate data available, you will have to collect your own
  - Programme monitoring data, surveys, interviews, focus groups, observations, etc.

Thank You.

Any Questions?

Dr Nena Foster Nena.Foster@optimityadvisors.com 02075534809