Chapter 13 Backward analysis

NEW CS 473: Theory II, Fall 2015 October 8, 2015

13.1 Some more probability

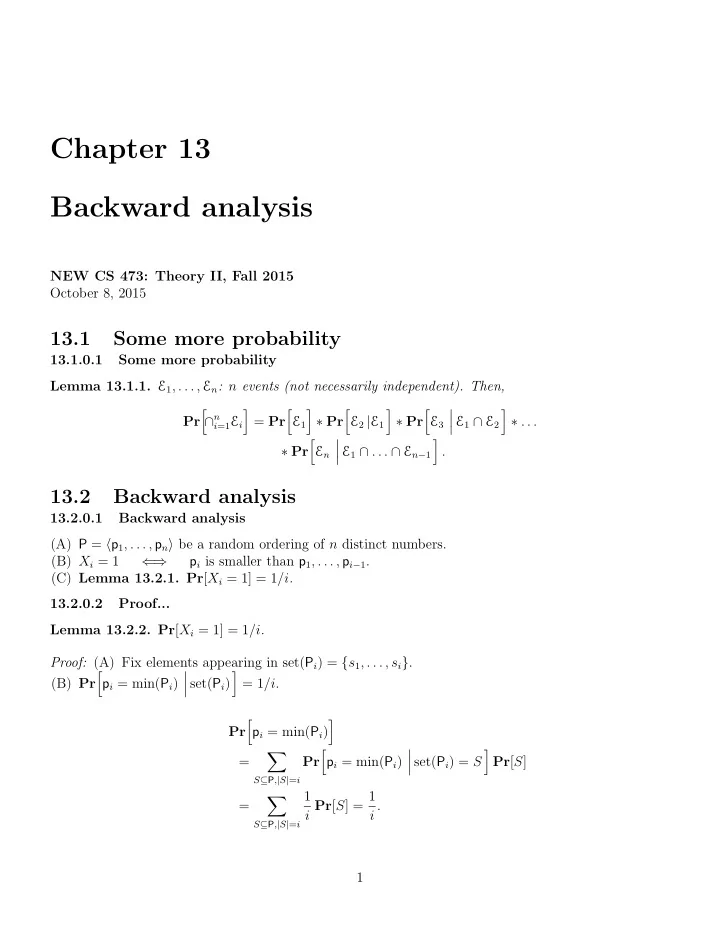

13.1.0.1 Some more probability

Lemma 13.1.1. Let $E_1, \ldots, E_n$ be $n$ events (not necessarily independent). Then,

$$
\Pr\Bigl[ \cap_{i=1}^{n} E_i \Bigr]
= \Pr[E_1] \cdot \Pr[E_2 \mid E_1] \cdot \Pr[E_3 \mid E_1 \cap E_2] \cdots \Pr[E_n \mid E_1 \cap \ldots \cap E_{n-1}].
$$
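As a sanity check of Lemma 13.1.1, the following minimal sketch verifies the product formula by exact enumeration on a small example that is not part of the notes: three draws without replacement from an urn with three red and two blue balls, where event $E_k$ is "the $k$-th draw is red". The urn, the ball labels, and the helper names `prob`, `cond_prob`, and `red_at` are all assumptions made for illustration.

```python
from fractions import Fraction
from itertools import permutations

def prob(event, outcomes):
    """Exact probability of `event` under the uniform distribution on `outcomes`."""
    hits = sum(1 for w in outcomes if event(w))
    return Fraction(hits, len(outcomes))

def cond_prob(event, given, outcomes):
    """Exact Pr[event | given]: restrict the sample space to outcomes where `given` holds."""
    restricted = [w for w in outcomes if given(w)]
    return prob(event, restricted)

# Hypothetical urn: 3 red and 2 blue balls; an outcome is a full draw order.
balls = ["R1", "R2", "R3", "B1", "B2"]
outcomes = list(permutations(balls))

def red_at(k):
    """Event: the ball drawn at (0-based) position k is red."""
    return lambda w: w[k].startswith("R")

E1, E2, E3 = red_at(0), red_at(1), red_at(2)

# Left-hand side: probability of the intersection E1 ∩ E2 ∩ E3.
lhs = prob(lambda w: E1(w) and E2(w) and E3(w), outcomes)

# Right-hand side: product of the conditional probabilities from the lemma.
rhs = (prob(E1, outcomes)
       * cond_prob(E2, E1, outcomes)
       * cond_prob(E3, lambda w: E1(w) and E2(w), outcomes))

print(lhs, rhs)
```

Here the three factors on the right-hand side are $3/5$, $2/4$, and $1/3$, so both sides equal $1/10$ even though the events are far from independent.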

13.2 Backward analysis

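13.2.0.1 Backward analysis

(A) Let $P = p_1, \ldots, p_n$ be a random ordering of $n$ distinct numbers.

(B) $X_i = 1 \iff p_i$ is smaller than $p_1, \ldots, p_{i-1}$.

(C) Lemma 13.2.1. $\Pr[X_i = 1] = 1/i$.

13.2.0.2 Proof...

Lemma 13.2.2. $\Pr[X_i = 1] = 1/i$.

Proof: Here $P_i = p_1, \ldots, p_i$ denotes the prefix of the first $i$ elements of $P$, and $\mathrm{set}(P_i)$ is the set of elements appearing in it, so $X_i = 1$ exactly when $p_i = \min(P_i)$.

(A) Fix the elements appearing in $\mathrm{set}(P_i) = \{s_1, \ldots, s_i\}$.

(B) $\Pr\bigl[ p_i = \min(P_i) \bigm| \mathrm{set}(P_i) \bigr] = 1/i$, since each of the $i$ fixed elements is equally likely to be the last one in the prefix.

Summing over all possible choices of the set, with $\Pr[S]$ as shorthand for $\Pr[\mathrm{set}(P_i) = S]$:

$$
\Pr\bigl[ p_i = \min(P_i) \bigr]
= \sum_{S \subseteq P,\, |S| = i} \Pr\bigl[ p_i = \min(P_i) \bigm| \mathrm{set}(P_i) = S \bigr] \Pr[S]
= \sum_{S \subseteq P,\, |S| = i} \frac{1}{i} \Pr[S]
= \frac{1}{i}.
$$

The last step holds because the events $\mathrm{set}(P_i) = S$ are disjoint and cover all outcomes, so $\sum_{S} \Pr[S] = 1$.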
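The lemma and the conditioning step of its proof can be checked by brute force for small $n$. The sketch below assumes "random ordering" means a uniformly random permutation and enumerates all permutations of $\{1, \ldots, n\}$ for $n = 5$. The function names `prefix_min_probability` and `conditional_on_prefix_set` are hypothetical helpers introduced here, not part of the notes.

```python
from fractions import Fraction
from itertools import permutations

def prefix_min_probability(n, i):
    """Exact Pr[X_i = 1]: p_i is the minimum of the prefix p_1, ..., p_i (1-based i)."""
    perms = list(permutations(range(1, n + 1)))
    hits = sum(1 for p in perms if p[i - 1] == min(p[:i]))
    return Fraction(hits, len(perms))

def conditional_on_prefix_set(n, i, s):
    """Exact Pr[p_i = min(P_i) | set(P_i) = s] for a fixed set s of size i."""
    s = set(s)
    perms = [p for p in permutations(range(1, n + 1)) if set(p[:i]) == s]
    hits = sum(1 for p in perms if p[i - 1] == min(s))
    return Fraction(hits, len(perms))

n = 5
probs = [prefix_min_probability(n, i) for i in range(1, n + 1)]
print(probs)  # one exact fraction per position i = 1, ..., n

# The backward-analysis step: once the prefix set is fixed, the answer is
# 1/i regardless of which set was chosen.
cond = conditional_on_prefix_set(n, 3, {2, 4, 5})
print(cond)
```

The conditional probability does not depend on which set $S$ is fixed, which is exactly why the weighted sum in the proof collapses to $1/i$.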