(Big) Data Storage Systems

Macroarea of Engineering, Department of Civil Engineering and Computer Science Engineering

Course: Sistemi e Architetture per Big Data (Systems and Architectures for Big Data, SABD), A.Y. 2019/2020. Valeria Cardellini. Master's Degree in Computer Engineering

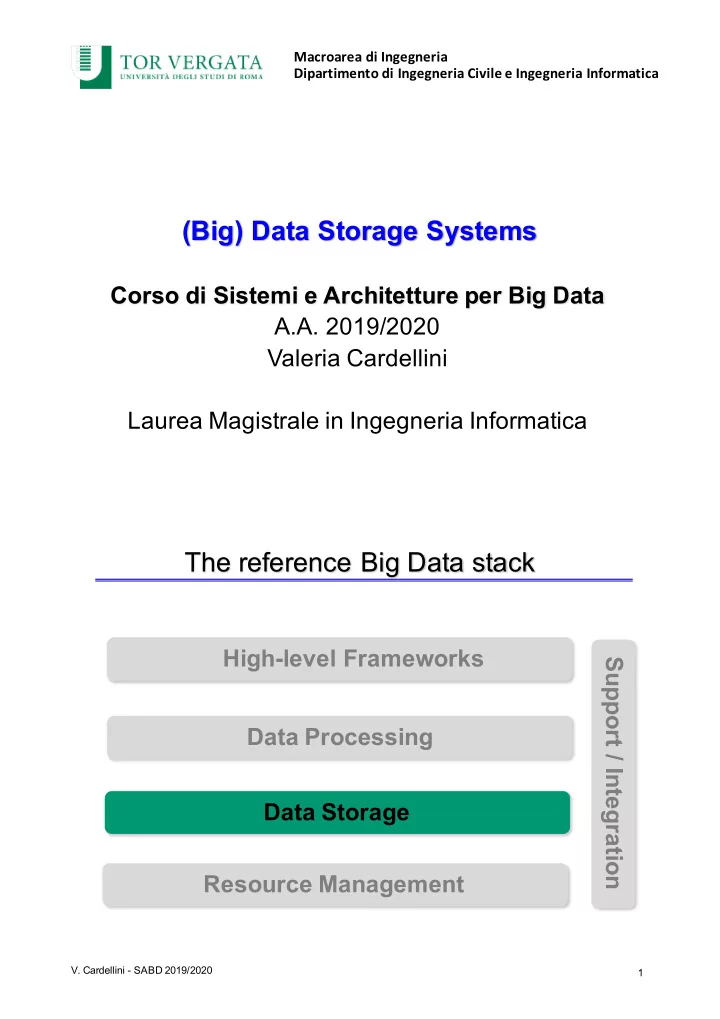

The reference Big Data stack

- V. Cardellini - SABD 2019/2020
