
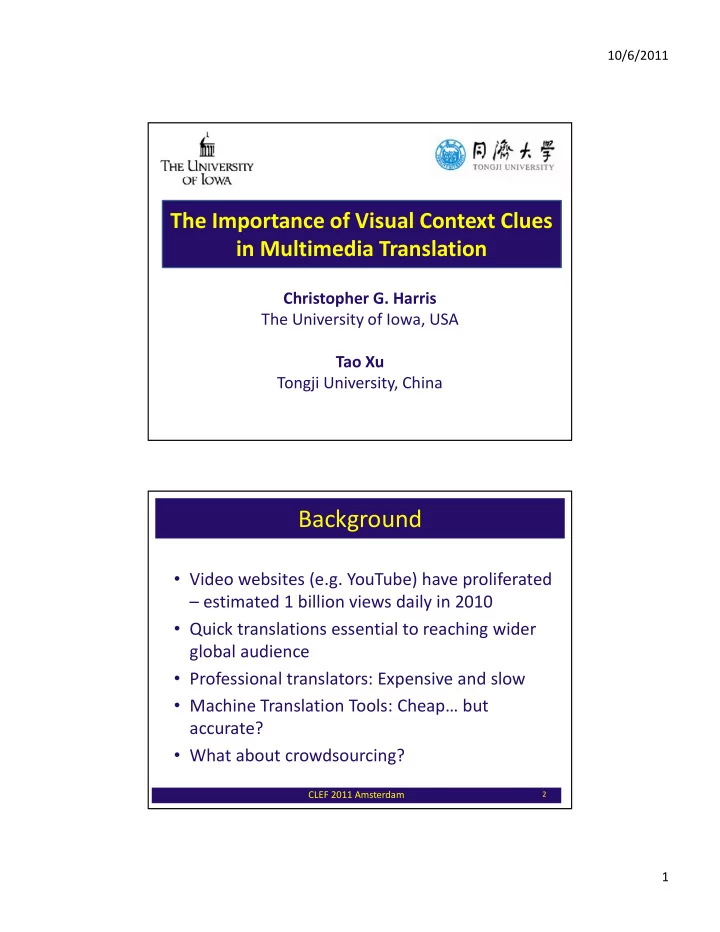

The Importance of Visual Context Clues in Multimedia Translation

Christopher G. Harris, The University of Iowa, USA

Tao Xu, Tongji University, China

Background

- Video websites (e.g. YouTube) have proliferated

– estimated 1 billion views daily in 2010

- Quick translations essential to reaching wider global audience

- Professional translators: Expensive and slow

- Machine Translation Tools: Cheap… but accurate?

- What about crowdsourcing?

CLEF 2011 Amsterdam
