Announcements

- Homework

k 3: Game Trees s (lead TA: Zhaoqing)

- Due Mon 30 Sep at 11:59pm

- Pr

Project 2 t 2: Multi-Agent Search (lead TA: Zhaoqing)

- Due Thu 10 Oct at 11:59pm (and Thursdays thereafter)

- Offi

Office Ho Hours

- Iris:

s: Mon 10.00am-noon, RI 237

- JW

JW: Tue 1.40pm-2.40pm, DG 111

- El

Eli: Fri 10.00am-noon, RY 207

- Zh

Zhaoqi qing: : Thu 9.00am-11.00am, HS 202

CS 4100: Artificial Intelligence

Uncertainty and Utilities

Ja Jan-Wi Willem van de Meent Northeastern University

[These slides were created by Dan Klein, Pieter Abbeel for CS188 Intro to AI at UC Berkeley (ai.berkeley.edu).]

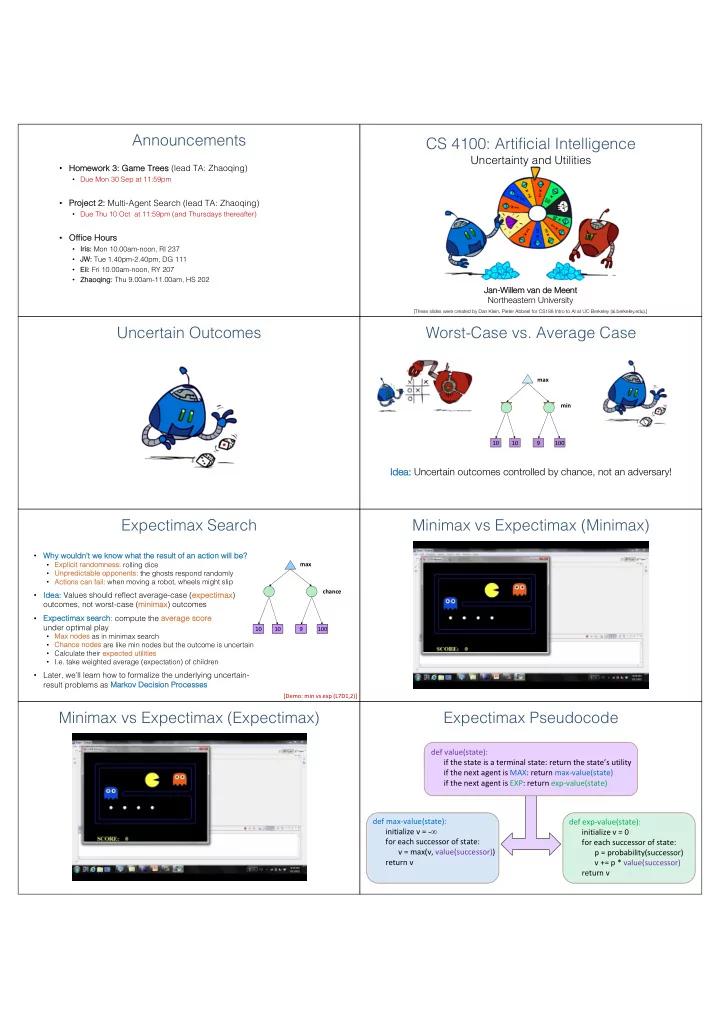

Uncertain Outcomes Worst-Case vs. Average Case

10 10 9 100 max min

Id Idea: Uncertain outcomes controlled by chance, not an adversary!

Expectimax Search

- Why

y wouldn’t we kn know what the resu sult of an action will be?

- Exp

xplicit randomness: ss: rolling dice

- Unpredictable opponents:

s: the ghosts respond randomly

- Actions

s can fail: when moving a robot, wheels might slip

- Id

Idea: ea: Values should reflect average-case (exp xpectimax)

- utcomes, not worst-case (mi

minima max) outcomes

- Exp

xpectimax se search: compute the ave verage sc score under optimal play

- Max

x nodes s as in minimax search

- Ch

Chance n nodes are like min nodes but the outcome is uncertain

- Calculate their exp

xpected utilities

- I.e. take weighted average (expectation) of children

- Later, we’ll learn how to formalize the underlying uncertain-

result problems as Marko kov v Decisi sion Processe sses

10 4 5 7 max chance 10 10 9 100 [Demo: min vs exp (L7D1,2)]