Announcements

- Homework 3: Game Trees (lead TA: Zhaoqing)

- Due Tue 1 Oct at 11:59pm (deadline extended)

- Homework 4: MDPs (lead TA: Iris)

- Due Mon 7 Oct at 11:59pm

- Project 2: Multi-Agent Search (lead TA: Zhaoqing)

- Due Thu 10 Oct at 11:59pm

- Office Hours

- Iris: Mon 10.00am-noon, RI 237

- JW: Tue 1.40pm-2.40pm, DG 111

- Zhaoqing: Thu 9.00am-11.00am, HS 202

- Eli: Fri 10.00am-noon, RY 207

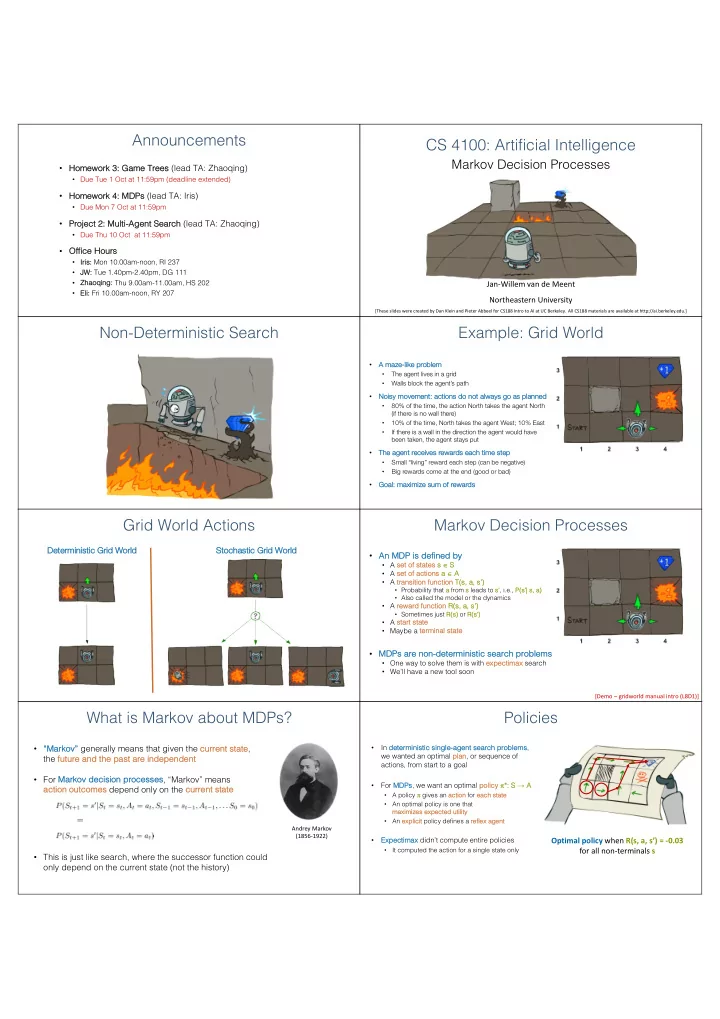

CS 4100: Artificial Intelligence

Markov Decision Processes

Jan-Willem van de Meent Northeastern University

[These slides were created by Dan Klein and Pieter Abbeel for CS188 Intro to AI at UC Berkeley. All CS188 materials are available at http://ai.berkeley.edu.]

Non-Deterministic Search Example: Grid World

- A maze-like problem

- The agent lives in a grid

- Walls block the agent’s path

- Noisy movement: actions do not always go as planned

- 80% of the time, the action North takes the agent North (if there is no wall there)

- 10% of the time, North takes the agent West; 10% East

- If there is a wall in the direction the agent would have been taken, the agent stays put

- The agent receives rewards each time step

- Small “living” reward each step (can be negative)

- Big rewards come at the end (good or bad)

- Goal: maximize sum of rewards
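The noisy movement rule above can be sketched in a few lines of Python. This is a minimal illustration, not the course's Gridworld code: the names `MOVES`, `SIDEWAYS`, `move`, and `transition` are hypothetical, the grid layout is made up, and two things are assumed beyond what the slide states: that the 80/10/10 split applies to every action the same way it does for North, and that the edge of the grid behaves like a wall.

```python
# Grid coordinates are (row, col); row 0 is the top row. "#" marks a wall.
MOVES = {"N": (-1, 0), "S": (1, 0), "E": (0, 1), "W": (0, -1)}
# The two directions perpendicular to each intended direction (assumption:
# the slide only spells this out for North).
SIDEWAYS = {"N": ("W", "E"), "S": ("E", "W"), "E": ("N", "S"), "W": ("S", "N")}

def move(grid, pos, direction):
    """Deterministic move; the agent stays put if a wall or the edge blocks it."""
    r, c = pos
    dr, dc = MOVES[direction]
    nr, nc = r + dr, c + dc
    in_bounds = 0 <= nr < len(grid) and 0 <= nc < len(grid[0])
    if not in_bounds or grid[nr][nc] == "#":
        return pos
    return (nr, nc)

def transition(grid, pos, action):
    """Return {next_pos: probability} under the 80/10/10 noise model."""
    left, right = SIDEWAYS[action]
    outcomes = {}
    for direction, prob in ((action, 0.8), (left, 0.1), (right, 0.1)):
        nxt = move(grid, pos, direction)
        # Several directions can lead to the same cell (e.g. staying put).
        outcomes[nxt] = outcomes.get(nxt, 0.0) + prob
    return outcomes

grid = ["....",
        ".#..",
        "...."]
print(transition(grid, (2, 0), "N"))
# {(1, 0): 0.8, (2, 0): 0.1, (2, 1): 0.1}
```

From the bottom-left corner, North succeeds with probability 0.8, the slip West runs into the edge so the agent stays put with probability 0.1, and the slip East moves it one cell right with probability 0.1.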

Grid World Actions

Deterministic Grid World

Stochastic Grid World

Markov Decision Processes

- An MDP is defined by

- A set of states s ∈ S

- A set of actions a ∈ A

- A transition function T(s, a, s’)

- Probability that a from s leads to s’, i.e., P(s’ | s, a)

- Also called the model or the dynamics

- A reward function R(s, a, s’)

- Sometimes just R(s) or R(s’)

- A start state

- Maybe a terminal state

- MDPs are non-deterministic search problems

- One way to solve them is with expectimax search

- We’ll have a new tool soon
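To make the definition concrete, here is one possible way to hold the pieces of an MDP in Python, together with a depth-limited expectimax search over it. This is a sketch under stated assumptions rather than a reference implementation: the `MDP` container, the function names, and the three-state toy problem are all hypothetical, a state with no legal actions is treated as terminal, and `T(s, a)` returns a dictionary `{s': P(s' | s, a)}` instead of taking s’ as a third argument.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Hashable, List

State = Hashable
Action = Hashable

@dataclass
class MDP:
    states: List[State]
    actions: Callable[[State], List[Action]]          # legal actions in s ([] if terminal)
    T: Callable[[State, Action], Dict[State, float]]  # T(s, a) -> {s': P(s' | s, a)}
    R: Callable[[State, Action, State], float]        # R(s, a, s')
    start: State

def expectimax_value(mdp, s, depth):
    """Max node: best expected sum of rewards from s over at most `depth` steps."""
    if depth == 0 or not mdp.actions(s):
        return 0.0
    return max(q_value(mdp, s, a, depth) for a in mdp.actions(s))

def q_value(mdp, s, a, depth):
    """Chance node: average over outcomes s', weighted by P(s' | s, a)."""
    return sum(p * (mdp.R(s, a, s2) + expectimax_value(mdp, s2, depth - 1))
               for s2, p in mdp.T(s, a).items())

def expectimax_action(mdp, s, depth):
    """The action at the root with the highest expected value."""
    return max(mdp.actions(s), key=lambda a: q_value(mdp, s, a, depth))

# Hypothetical toy problem: one decision, then the episode ends.
def actions(s):
    return ["safe", "risky"] if s == "start" else []

def T(s, a):
    return {"win": 1.0} if a == "safe" else {"win": 0.5, "lose": 0.5}

def R(s, a, s2):
    if a == "safe":
        return 1.0
    return 4.0 if s2 == "win" else -1.0

toy = MDP(["start", "win", "lose"], actions, T, R, "start")
print(expectimax_action(toy, toy.start, depth=2))  # risky
```

Here "safe" is worth 1.0 and "risky" is worth 0.5 · 4 + 0.5 · (−1) = 1.5 in expectation, so the search picks "risky". Note that the search answers the question for one root state only.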

[Demo – gridworld manual intro (L8D1)]

What is Markov about MDPs?

- “Markov” generally means that given the current state, the future and the past are independent

- For Markov decision processes, “Markov” means action outcomes depend only on the current state

- This is just like search, where the successor function could only depend on the current state (not the history)
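Written out, the Markov property for an MDP can be stated as follows. The notation $S_t$, $A_t$ for the state and action at time $t$ is an assumption introduced here for illustration; the right-hand side is the transition function T(s, a, s’) from the MDP definition.

$$
P(S_{t+1} = s' \mid S_t = s_t, A_t = a_t, S_{t-1} = s_{t-1}, A_{t-1} = a_{t-1}, \ldots, S_0 = s_0)
= P(S_{t+1} = s' \mid S_t = s_t, A_t = a_t)
$$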

Andrey Markov (1856-1922)

Policies

- In deterministic single-agent search problems, we wanted an optimal plan, or sequence of actions, from start to a goal

- For MDPs, we want an optimal policy π*: S → A

- A policy π gives an action for each state

- An optimal policy is one that maximizes expected utility

- An explicit policy defines a reflex agent

- Expectimax didn’t compute entire policies

- It computed the action for a single state only
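A small sketch of what "an explicit policy defines a reflex agent" can look like in code. Everything here is hypothetical and chosen for illustration: a one-dimensional corridor with states 0 to 3, an exit at state 3, a living reward of −0.1, and an exit reward of 1.0. In a corridor the two sideways slips both hit walls, so the 80/10/10 noise model reduces to "move as intended with probability 0.8, otherwise stay put". The policy is a table, acting is a lookup, and averaging the sum of rewards over many simulated episodes gives a rough estimate of the policy's expected utility.

```python
import random

TERMINAL = 3           # exit state of the hypothetical corridor 0..3
LIVING_REWARD = -0.1   # small "living" reward each step (assumed value)
EXIT_REWARD = 1.0      # big reward at the end (assumed value)

def step(state, action, rng):
    """Noisy move: intended direction with prob. 0.8, otherwise stay put."""
    delta = {"right": 1, "left": -1}[action]
    nxt = state + delta if rng.random() < 0.8 else state
    nxt = max(0, nxt)  # the left end of the corridor is a wall
    reward = EXIT_REWARD if nxt == TERMINAL else LIVING_REWARD
    return nxt, reward

# An explicit policy: one action for every non-terminal state.
policy = {0: "right", 1: "right", 2: "right"}

def reflex_agent(state):
    """Acting is a table lookup; no search happens at decision time."""
    return policy[state]

def run_episode(start, rng, max_steps=100):
    """Follow the policy from `start` and return the sum of rewards."""
    state, total = start, 0.0
    for _ in range(max_steps):
        if state == TERMINAL:
            break
        state, reward = step(state, reflex_agent(state), rng)
        total += reward
    return total

rng = random.Random(0)
returns = [run_episode(0, rng) for _ in range(1000)]
# Monte Carlo estimate of this policy's expected utility from state 0.
print(sum(returns) / len(returns))
```

Unlike expectimax, which would have to be rerun from each new state the agent lands in, the table already covers every state the noisy dynamics might lead to.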