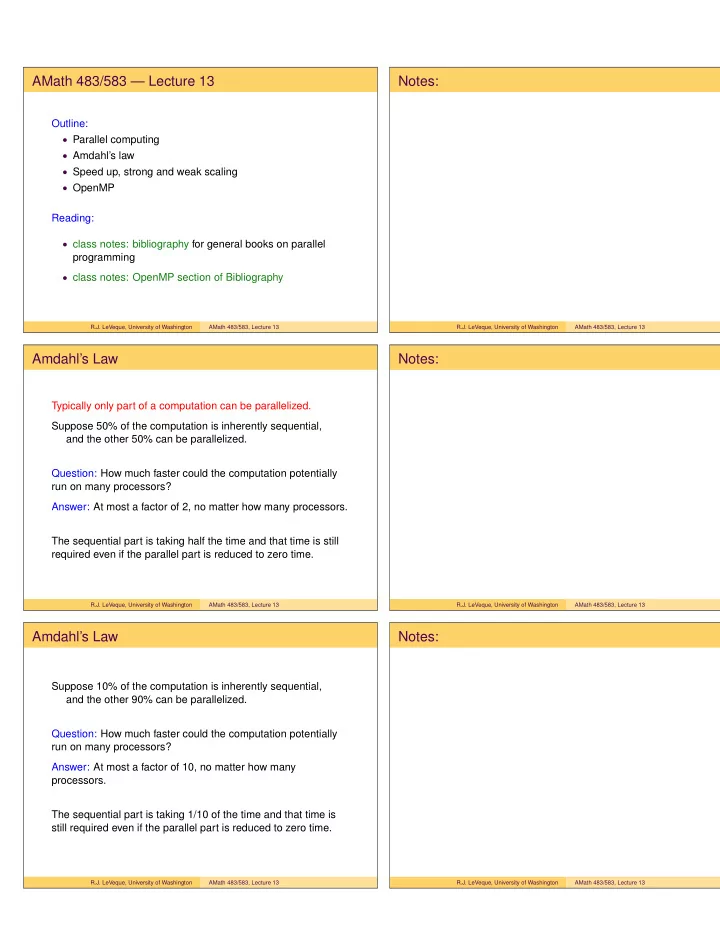

AMath 483/583 — Lecture 13

Outline:

- Parallel computing

- Amdahl’s law

- Speed up, strong and weak scaling

- OpenMP

Reading:

- class notes: bibliography for general books on parallel programming

- class notes: OpenMP section of Bibliography

R.J. LeVeque, University of Washington AMath 483/583, Lecture 13


Amdahl’s Law

Typically only part of a computation can be parallelized.

Suppose 50% of the computation is inherently sequential, and the other 50% can be parallelized.

Question: How much faster could the computation potentially run on many processors?

Answer: At most a factor of 2, no matter how many processors are used. The sequential part takes half of the original run time, and that time is still required even if the parallel part is reduced to zero time.
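The same reasoning can be written as a formula. This is a sketch in notation that does not appear on the slide: $s$ is assumed to be the sequential fraction of the run time, $P$ the number of processors, $T_1$ the time on one processor, and $T_P$ the time on $P$ processors. It also assumes the parallel part divides perfectly among the processors with no overhead.

$$
T_P = s\,T_1 + \frac{(1-s)\,T_1}{P},
\qquad
\frac{T_1}{T_P} = \frac{1}{s + \dfrac{1-s}{P}} \;\longrightarrow\; \frac{1}{s}
\quad \text{as } P \to \infty .
$$

With $s = 1/2$ the limit is $1/s = 2$, which is the factor of 2 stated in the answer.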


Amdahl’s Law

Suppose 10% of the computation is inherently sequential, and the other 90% can be parallelized.

Question: How much faster could the computation potentially run on many processors?

Answer: At most a factor of 10, no matter how many processors are used. The sequential part takes 1/10 of the original run time, and that time is still required even if the parallel part is reduced to zero time.
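To see how slowly the bound is approached, the speedup can be tabulated for a growing number of processors. The following is a minimal sketch, not part of the original slides: the function name `amdahl_speedup` and its parameters are hypothetical, and it assumes the parallel part divides perfectly among the processors with no communication or startup overhead.

```python
def amdahl_speedup(serial_fraction, num_procs):
    """Idealized speedup on num_procs processors (illustrative helper).

    serial_fraction is the share of the one-processor run time that
    cannot be parallelized (0.1 for the 10% example).
    """
    if not 0.0 <= serial_fraction <= 1.0:
        raise ValueError("serial_fraction must lie in [0, 1]")
    if num_procs < 1:
        raise ValueError("num_procs must be at least 1")
    # The sequential part is unchanged; the parallel part is divided by num_procs.
    return 1.0 / (serial_fraction + (1.0 - serial_fraction) / num_procs)


if __name__ == "__main__":
    for p in (1, 2, 10, 100, 1000):
        print(f"{p:5d} processors: speedup {amdahl_speedup(0.1, p):.2f}")
```

Under these assumptions, 10 processors give a speedup of only about 5.3 and 1000 processors about 9.9, so the factor of 10 is a limit that is approached but never reached.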
