School of Computer Science

State Space Models

Probabilistic Graphical Models (10-708)

Lecture 13, part II Nov 5th, 2007

Eric Xing

[Figure: signaling pathway with Receptor A, Receptor B, Kinase C, Kinase D, Kinase E, TF F, Gene G, and Gene H, shown alongside a graph over nodes X1 through X8]

Reading: J-Chap. 15, K&F chapter 19.1 -19.3

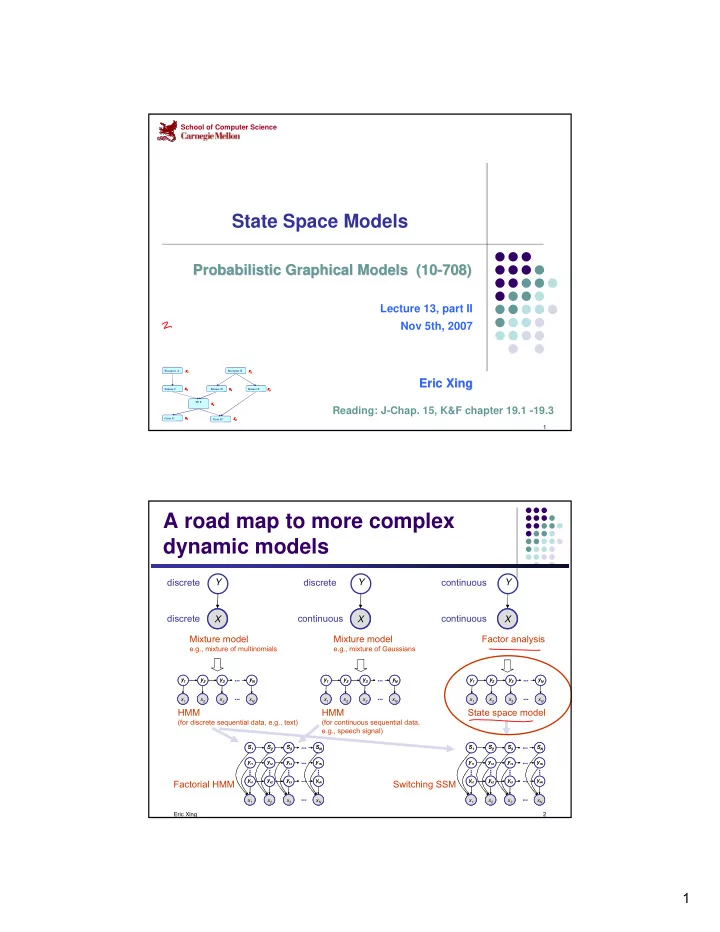

A road map to more complex dynamic models

[Figure: graphical models arranged from static to dynamic; panel labels follow]

Static models (nodes A, X, Y):

Mixture model, discrete / discrete (e.g., mixture of multinomials)

Mixture model, discrete / continuous (e.g., mixture of Gaussians)

Factor analysis, continuous / continuous

Sequential models (chains x1 ... xN and y1 ... yN):

HMM (for discrete sequential data, e.g., text)

HMM (for continuous sequential data, e.g., speech signal)

State space model

Extended sequential models (chains x1 ... xN, y11 ... y1N through yk1 ... ykN, and S1 ... SN):

Factorial HMM

Switching SSM
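To make the step from an HMM to a state space model concrete, here is a minimal sampling sketch, not taken from the lecture. It assumes a discrete-state, discrete-output HMM and a scalar linear-Gaussian state space model. The function names, the parameter names (`trans`, `emit`, `init`, `a`, `c`, `q_std`, `r_std`), and the numeric values are all illustrative assumptions.

```python
import random


def sample_hmm(n, trans, emit, init, rng):
    """Sample n steps from a discrete HMM (illustrative parameterization).

    trans[i][j] = P(next state j | state i)
    emit[i][k]  = P(symbol k | state i)
    init[i]     = P(first state is i)
    """
    states, obs = [], []
    state = rng.choices(range(len(init)), weights=init)[0]
    for _ in range(n):
        states.append(state)
        # Emit a discrete symbol from the current hidden state.
        obs.append(rng.choices(range(len(emit[state])), weights=emit[state])[0])
        # Move to the next hidden state.
        state = rng.choices(range(len(trans[state])), weights=trans[state])[0]
    return states, obs


def sample_ssm(n, a, c, q_std, r_std, x0, rng):
    """Sample n steps from a scalar linear-Gaussian state space model.

    Assumed form: state_t = a * state_{t-1} + N(0, q_std^2)
                  obs_t   = c * state_t     + N(0, r_std^2)
    """
    states, obs = [], []
    state = x0
    for _ in range(n):
        # Continuous hidden state: linear dynamics plus Gaussian noise.
        state = a * state + rng.gauss(0.0, q_std)
        states.append(state)
        # Continuous observation: linear readout plus Gaussian noise.
        obs.append(c * state + rng.gauss(0.0, r_std))
    return states, obs


rng = random.Random(0)
hmm_states, hmm_obs = sample_hmm(
    5,
    trans=[[0.9, 0.1], [0.2, 0.8]],
    emit=[[0.7, 0.2, 0.1], [0.1, 0.3, 0.6]],
    init=[0.5, 0.5],
    rng=rng,
)
ssm_states, ssm_obs = sample_ssm(5, a=0.95, c=1.0, q_std=0.1, r_std=0.5, x0=0.0, rng=rng)
```

Both samplers have the same chain structure. The difference is that the hidden state is a discrete index in the HMM and a real number in the state space model, which matches the discrete-to-continuous progression in the road map.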