
ntcir4-ov 2004-06-02 7

NTCIR Test Collections

Ct: Traditional Chinese, Cs: Simplified Chinese, K: Korean, J: Japanese, E: English

| Collection | Task | Document genre | Document size | Document language | Topic/question language | Relevance/answer |
|---|---|---|---|---|---|---|
| NTCIR WEB | IR | WEB | 1GB | Multiple | J(E) | |
| NTCIR Summ | Summ | News | 3 sets | J | - | |
| NTCIR QA | QA | News | 776MB | J | J(E) | 4 |
| NTCIR PATENT | IR | Patent | 45GB | J(JE) | Cs Ct K J E | 3 |
| NTCIR CLIR | IR | News | ca. 3GB | Ct K J E | Ct K J E | 4 |
| NTCIR WEB | IR | WEB | 1GB | Multiple | J(E) | 4 + relative |
| NTCIR Summ | Summ | News | 6 ds + 5 sets | J | - | |
| NTCIR QA | QA | News | 282MB | J | J(E) | exact |
| NTCIR PATENT | IR | Patent | 18GB (+5GB) | J(JE) | Cs Ct K J E | 3 |
| NTCIR CLIR | IR | News | 884MB | Ct K J E | Ct K J E | 4 |
| NTCIR Summ | Summ | News | 18 ds | J | J | |
| NTCIR | IR | Academic | 8MB | J E | J E | 4 |
| CIRB | IR | News | 132MB | Ct | Ct E | 4 |
| NTCIR | IR | Academic | 577MB | J E | J | 3 |


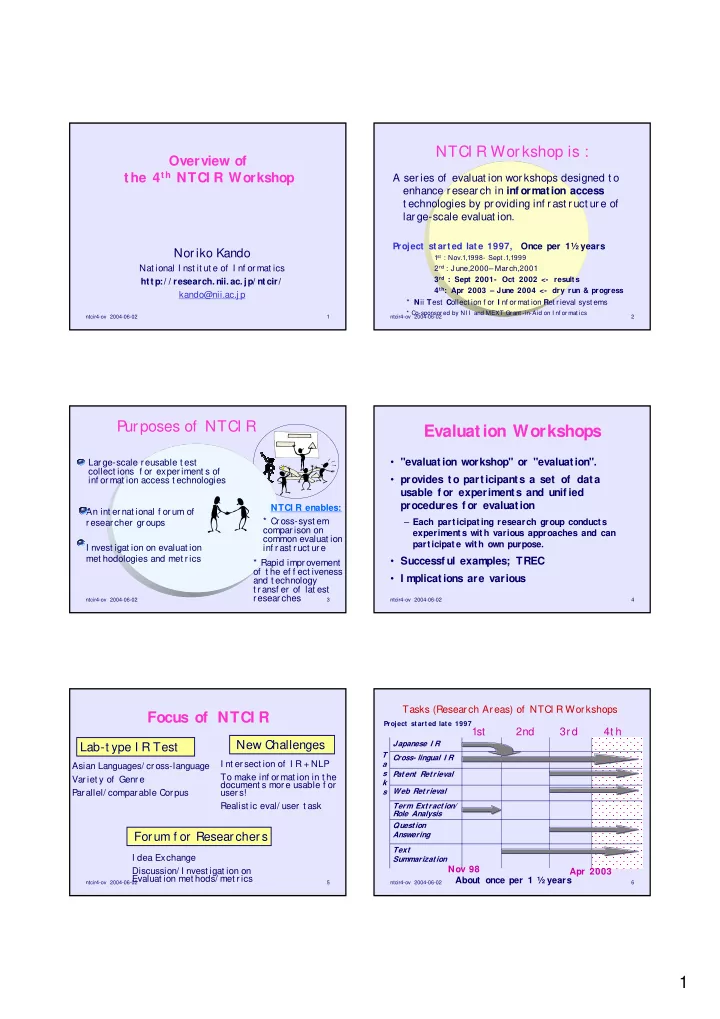

Tasks of NTCIR-4

CLIR: Chinese, Korean, Japanese, English

- Single Language IR; Bilingual CLIR; Multilingual CLIR; Pivot CLIR

Patent Retrieval

- Main: Invalidity Search (search patent by patent)

- Feasibility: Automatic Patent Map Generation

Question Answering

- 5 possible answers; 1 set of all the answers; series of questions

Text Summarization

- Multiple-document summarization: automatic evaluation!

Web

- Informational; Navigational; Geographic; Clustering


NTCIR Workshop 4 (2003-2004) Organizers

- CLIR: Kuang-hua Chen, NTU; Sukhoon Lee, NCU; Kazuaki Kishida, Surugadai U; Hsin-Hsi Chen, NTU; Sung Hyon Myaeng, IIU; Kazuko Kuriyama, Shirayuri U; Noriko Kando, NII
- PATENT: Atsushi Fujii, Tsukuba U; Makoto Iwayama, Hitachi; Noriko Kando, NII
- Question Answering: Junichi Fukumoto, Ritsumeikan U; Tsuneaki Kato, U Tokyo; Fumito Masui, Mie U
- Text Summarization: Tsutomu Hirao, NTT-CS; Takahiro Fukusima, Otemongakuin U; Hidetsugu Nanba, Hiroshima CU; Manabu Okumura, TITEC
- WEB: Koji Eguchi, NII; Keizo Oyama, NII

General chair: Jun Adachi, NII
Program chair: Noriko Kando, NII


NTCIR Workshop: Number of Participating Groups

| Workshop | # of groups | # of countries |
|---|---|---|
| 1st workshop | 28 | 6 |
| 2nd workshop | 36 | 8 |
| 3rd workshop | 65 | 9 |
| 4th workshop | 74 | 10 |

74 groups from 10 countries


Number of Participants by Tasks

[Chart: # of participating groups by task (Japanese IR, CLIR, Non-Japanese IR, Patent Retrieval, Web Retrieval, Term Extraction, Summarization, QA) at the 1st (1998-9), 2nd (2000-1), 3rd (2001-2), and 4th (2003-4) workshops; y-axis 0 to 120. Chart annotations: "Chinese", "Korean", "JE"; "JE, EJ, EC"; "xCJEK".]


Number of Participants

[CLIR]
- Chinese Academy of Sciences (China PRC)
- Clairvoyance Corporation and Justsystem (USA)
- Communications Research Laboratory-1 (Japan)
- Fu Jen Catholic University (Taiwan ROC)
- Hong Kong Polytechnic University (Hong Kong, China PRC)
- Hummingbird (Canada)
- Institute for Infocomm Research (Singapore)
- Korea University (Korea)
- Nara Institute of Science and Technology-1 (Japan)
- National Institute of Informatics-1 (Japan)
- National Taiwan University (Taiwan ROC)
- Oki Electric-1 (Japan)
- PATOLIS (Japan)
- Pohang University of Science and Technology (Korea)
- Queens College, City University of New York (USA)
- Ricoh-1 (Japan)
- Royal Melbourne Institute of Technology (Australia)
- Thomson Legal and Regulatory (USA)
- Tianjin University (China PRC)
- Toshiba (Japan)
- University of Arizona (USA)
- University of California Berkeley (USA)
- University of Chicago (USA)
- University of Neuchatel (Switzerland)
- University of Tsukuba (Japan)
- Yokohama National University (Japan)

[PATENT]
- Fujitsu Laboratories (Japan)
- IBM Research (Japan)
- Japan Patent Information Organization / Hitachi (Japan)
- Nagaoka University of Technology (Japan)
- NTT DATA (Japan)
- Osaka Kyoiku University (Japan)
- PATOLIS (Japan)
- Ricoh-2 (Japan)
- Tokyo Institute of Technology (Japan)
- University of Tsukuba (Japan)

[QAC]
- AIST/University of Nagoya/University of Tsukuba (Japan)
- Communications Research Laboratory-1 (Japan)
- Iwate Prefectural University (Japan)
- Keio University (Japan)
- Matsushita Electric Industrial-1 (Japan)
- Mie University (Japan)
- Nagaoka University of Technology (Japan)
- Nara Institute of Science and Technology-2 (Japan)
- New York University (USA)/Communications Research Laboratory-2 (Japan)
- NTT Communication Science Laboratories-1 (Japan)
- NTT DATA (Japan)
- Oki Electric-2 (Japan)
- Pohang University of Science and Technology (Korea)
- Ritsumeikan University (Japan)
- Toshiba (Japan)
- Toyohashi University of Technology-1 (Japan)
- University of Tokyo-1 (Japan)
- Yokohama National University (Japan)

[TSC]
- Communications Research Laboratory-2 (Japan) / New York University (USA)
- Graduate University for Advanced Studies (Japan)
- Hokkaido University (Japan)
- Pohang University of Science and Technology (Korea)
- Ritsumeikan University (Japan)
- Toyohashi University of Technology-1 (Japan)
- University of Electro-Communications (Japan)
- University of Tokyo-1 (Japan)
- Yokohama National University (Japan)

[WEB]
- Hokkaido University (Japan)
- Ibaraki University (Japan)
- Matsushita Electric Industrial-2 (Japan)
- NEC (Japan)
- NII-2/Univ. of Tokyo-2/KYA Group (Japan)
- NTT Communication Science Laboratories-2 (Japan)
- Osaka Kyoiku University (Japan)
- Tokyo Metropolitan University (Japan)
- Toyohashi University of Technology-1 (Japan)
- Toyohashi University of Technology-2 (Japan)
- University of Tsukuba/University of Nagoya (Japan)

74 groups from 10 countries & areas