1

DL - 2004 Compression – Beeri/Feitelson 1

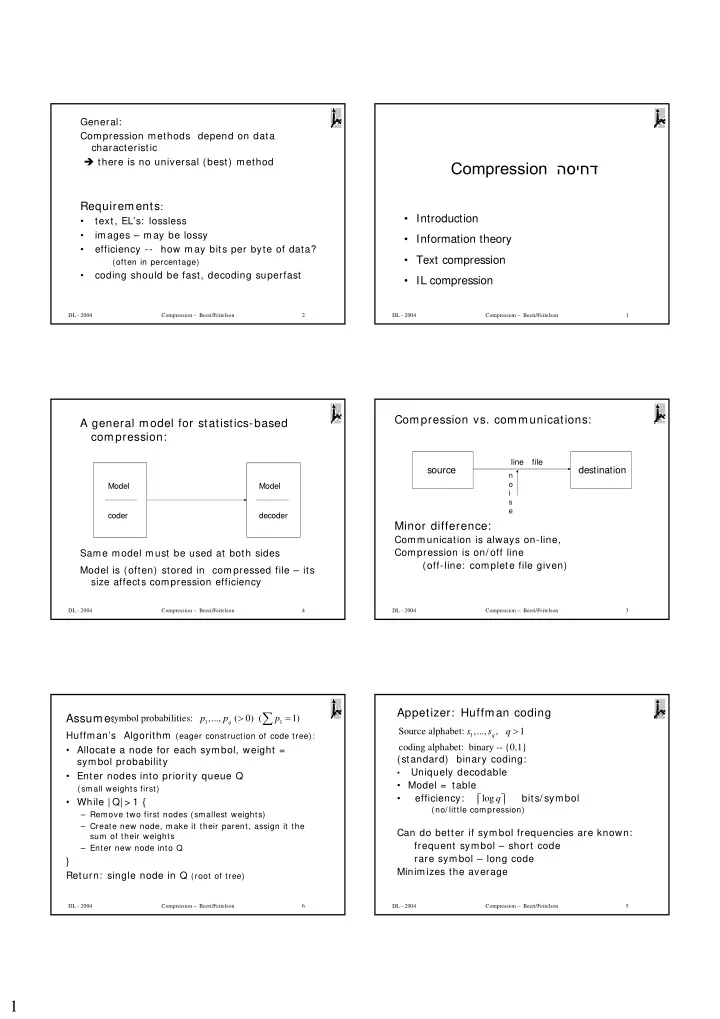

Compression הסיחד

- Introduction

- Information theory

- Text compression

- IL compression

DL - 2004 Compression – Beeri/Feitelson 2

General: Compression methods depend on data characteristic there is no universal (best) method

Requirements:

- text, EL’s: lossless

- images – may be lossy

- efficiency --

how may bits per byte of data?

(often in percentage)

- coding should be fast, decoding superfast

DL - 2004 Compression – Beeri/Feitelson 3

Compression vs. communications: Minor difference:

Communication is always on-line, Compression is on/ off line (off-line: complete file given) source destination

file line

n

- i

s e

DL - 2004 Compression – Beeri/Feitelson 4

A general model for statistics-based compression:

Same model must be used at both sides Model is (often) stored in compressed file – its size affects compression efficiency

Model coder Model decoder

DL - 2004 Compression – Beeri/Feitelson 5

Appetizer: Huffman coding

(standard) binary coding:

- Uniquely decodable

- Model = table

- efficiency: bits/ symbol

(no/ little compression)

Can do better if symbol frequencies are known: frequent symbol – short code rare symbol – long code Minimizes the average

1

Source alphabet: ,..., , 1 coding alphabet: binary -- {0,1}

q

s s q > logq

DL - 2004 Compression – Beeri/Feitelson 6

Assume:

Huffman’s Algorithm (eager construction of code tree):

- Allocate a node for each symbol, weight =

symbol probability

- Enter nodes into priority queue Q

(small weights first)

- While | Q| > 1 {

– Remove two first nodes (smallest weights) – Create new node, make it their parent, assign it the sum of their weights – Enter new node into Q

} Return: single node in Q (root of tree)

1 1

symbol probabilities: ,..., ( 0) ( 1)

q