1

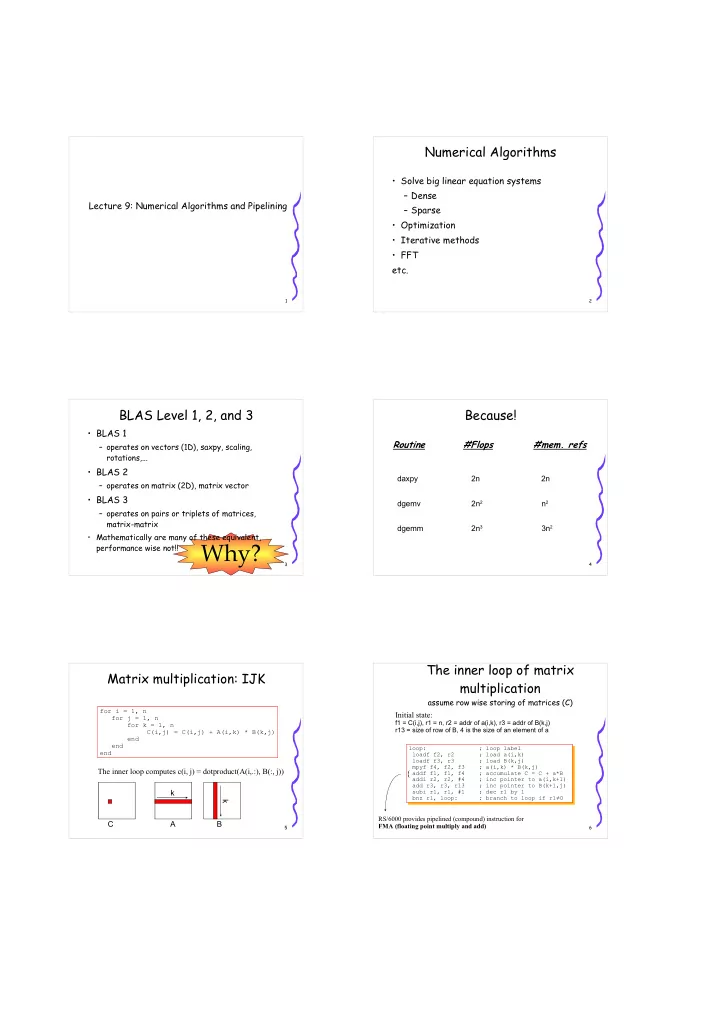

Lecture 9: Numerical Algorithms and Pipelining

2

Numerical Algorithms

- Solve big linear equation systems

– Dense – Sparse

- Optimization

- Iterative methods

- FFT

etc.

3

BLAS Level 1, 2, and 3

- BLAS 1

– operates on vectors (1D), saxpy, scaling, rotations,...

- BLAS 2

– operates on matrix (2D), matrix vector

- BLAS 3

– operates on pairs or triplets of matrices, matrix-matrix

- Mathematically are many of these equivalent,

performance wise not!!

Why?

4

Because!

Routine #Flops #mem. refs

daxpy 2n 2n dgemv 2n2 n2 dgemm 2n3 3n2

5

Matrix multiplication: IJK

for i = 1, n for j = 1, n for k = 1, n C(i,j) = C(i,j) + A(i,k) * B(k,j) end end end

C A B k k The inner loop computes c(i, j) = dotproduct(A(i,.:), B(:, j))

6

The inner loop of matrix multiplication

assume row wise storing of matrices (C)

loop: ; loop label loadf f2, r2 ; load a(i,k) loadf f3, r3 ; load B(k,j) mpyf f4, f2, f3 ; a(i,k) * B(k,j) addf f1, f1, f4 ; accumulate C = C + a*B addi r2, r2, #4 ; inc pointer to a(i,k+1) add r3, r3, r13 ; inc pointer to B(k+1,j) subi r1, r1, #1 ; dec r1 by 1 bnz r1, loop: ; branch to loop if r1≠0

Initial state:

f1 = C(i,j), r1 = n, r2 = addr of a(i,k), r3 = addr of B(k,j) r13 = size of row of B, 4 is the size of an element of a RS/6000 provides pipelined (compound) instruction for FMA (floating point multiply and add)

{