dt10 2011 11.1

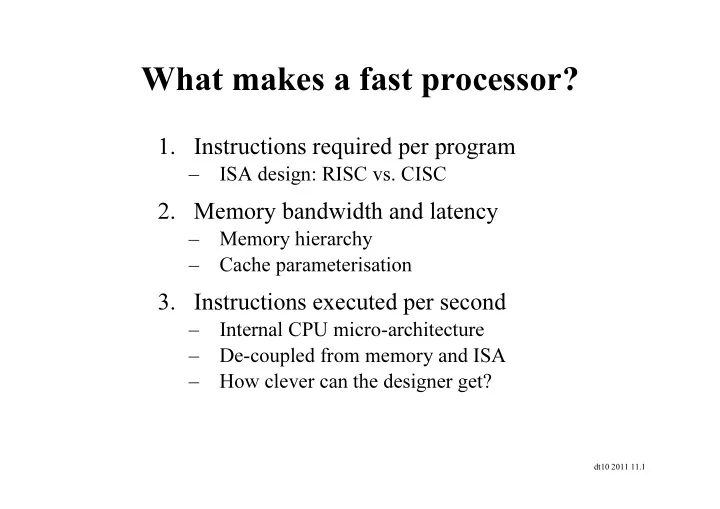

What makes a fast processor?

1. Instructions required per program
   - ISA design: RISC vs. CISC
2. Memory bandwidth and latency
   - Memory hierarchy
   - Cache parameterisation
3. Instructions executed per second
   - Internal CPU …
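The three factors combine in the standard performance equation, CPU time = instruction count × CPI × clock period. The sketch below shows one way to put numbers on each factor. It assumes a simple stall model, with memory effects (factor 2) appearing as extra stall cycles added to a base CPI. The function names and all numeric inputs are hypothetical and chosen only for illustration.

```python
def effective_cpi(base_cpi: float, mem_refs_per_instr: float,
                  miss_rate: float, miss_penalty_cycles: float) -> float:
    """Factor 2: base CPI plus average memory-stall cycles per instruction."""
    return base_cpi + mem_refs_per_instr * miss_rate * miss_penalty_cycles


def cpu_time_s(instruction_count: float, cpi: float, clock_hz: float) -> float:
    """CPU time = instruction count (factor 1) x CPI / clock rate."""
    return instruction_count * cpi / clock_hz


def instructions_per_second(cpi: float, clock_hz: float) -> float:
    """Factor 3: instruction throughput = clock rate / CPI."""
    return clock_hz / cpi


if __name__ == "__main__":
    # Hypothetical workload: 1e9 instructions on a 2 GHz core.
    cpi = effective_cpi(base_cpi=1.0, mem_refs_per_instr=1.3,
                        miss_rate=0.02, miss_penalty_cycles=100)
    print(f"effective CPI = {cpi:.2f}")
    print(f"CPU time      = {cpu_time_s(1e9, cpi, 2e9):.2f} s")
    print(f"throughput    = {instructions_per_second(cpi, 2e9):.3g} instr/s")
```

With these made-up inputs, a 2% miss rate and a 100-cycle penalty raise the CPI from 1.0 to 3.6, so the 10^9 instructions take 1.8 s rather than 0.5 s. This is why the memory hierarchy matters as much as the ISA and the clock.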


dt10 2011 11.2

dt10 2011 11.3

Source: Microprocessor Report: Feb 14, 2005

dt10 2011 11.4

dt10 2011 11.5

dt10 2011 11.6

Figure (slides 11.6–11.15, built up incrementally): single-cycle datapath with PC, instruction cache (Icache), register file (Regfile), sign extension (16 → 32 bits), ALU, data cache (Dcache), a ×4 block and several multiplexers. From slide 11.14 the control signals are labelled: RegWrite, PCSrc, RegDst, MemRead, MemWrite, ALUSrc, ALUFunc and MemtoReg.
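To show what the labelled control signals do, the sketch below tabulates them for the four instruction classes used later (lw, sw, R-format, beq). It assumes the usual textbook MIPS single-cycle conventions. The extra `branch` signal (ANDed with the ALU zero flag to form PCSrc) and the symbolic ALUFunc names are assumptions and do not come from the slides.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Control:
    reg_write: bool            # RegWrite: write a result into the Regfile
    reg_dst: Optional[int]     # RegDst: 1 = rd field, 0 = rt field, None = don't care
    alu_src: int               # ALUSrc: 0 = second register, 1 = sign-extended immediate
    alu_func: str              # ALUFunc: hypothetical symbolic names
    mem_read: bool             # MemRead: read the Dcache
    mem_write: bool            # MemWrite: write the Dcache
    mem_to_reg: Optional[int]  # MemtoReg: 1 = Dcache data, 0 = ALU result, None = don't care
    branch: bool               # assumed signal, ANDed with the ALU zero flag to form PCSrc


CONTROL = {
    "R-format": Control(True, 1, 0, "funct", False, False, 0, False),
    "lw":       Control(True, 0, 1, "add", True, False, 1, False),
    "sw":       Control(False, None, 1, "add", False, True, None, False),
    "beq":      Control(False, None, 0, "sub", False, False, None, True),
}


def pc_src(instr_class: str, alu_zero: bool) -> int:
    """PCSrc = 1 selects the branch target; only a taken beq does so."""
    return int(CONTROL[instr_class].branch and alu_zero)


if __name__ == "__main__":
    for name, ctl in CONTROL.items():
        print(f"{name:9s} {ctl}")
    print("beq taken -> PCSrc =", pc_src("beq", alu_zero=True))
```

Here "funct" means the ALU operation is decoded from the instruction's function field. `None` marks a don't-care, where the selected mux input is never used.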

dt10 2011 11.16

dt10 2011 11.17

dt10 2011 11.18

dt10 2011 11.19

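| Instruction | Instr. fetch (IF) | Register read (ID) | ALU op. (EX) | Data mem. (MEM) | Register write (WB) | Total time |
|---|---|---|---|---|---|---|
| lw | 200 ps | 100 ps | 200 ps | 200 ps | 100 ps | 800 ps |
| sw | 200 ps | 100 ps | 200 ps | 200 ps | – | 700 ps |
| R-format | 200 ps | 100 ps | 200 ps | – | 100 ps | 600 ps |
| beq | 200 ps | 100 ps | 200 ps | – | – | 500 ps |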
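The totals in the table also fix the clock period of each design. A single-cycle clock must cover the slowest instruction, while a pipelined clock only has to cover the slowest stage. The sketch below encodes the table (the dictionary layout and function names are illustrative choices) and derives both periods.

```python
# Stage latencies (ps) from the table; a missing key is a stage the instruction skips.
LATENCY_PS = {
    "lw":       {"IF": 200, "ID": 100, "EX": 200, "MEM": 200, "WB": 100},
    "sw":       {"IF": 200, "ID": 100, "EX": 200, "MEM": 200},
    "R-format": {"IF": 200, "ID": 100, "EX": 200, "WB": 100},
    "beq":      {"IF": 200, "ID": 100, "EX": 200},
}


def total_ps(instr: str) -> int:
    """Sum of the stage latencies an instruction actually uses."""
    return sum(LATENCY_PS[instr].values())


def single_cycle_tc_ps() -> int:
    """One clock per instruction: the period must cover the slowest instruction."""
    return max(total_ps(instr) for instr in LATENCY_PS)


def pipelined_tc_ps() -> int:
    """One clock per stage: the period must cover the slowest stage."""
    return max(max(stages.values()) for stages in LATENCY_PS.values())


if __name__ == "__main__":
    for instr in LATENCY_PS:
        print(f"{instr:9s} {total_ps(instr)} ps")
    print("single-cycle Tc =", single_cycle_tc_ps(), "ps")
    print("pipelined Tc    =", pipelined_tc_ps(), "ps")
```

Even though beq needs only 500 ps, the single-cycle design still spends 800 ps on it. That wasted time is what pipelining recovers.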

dt10 2011 11.20

Figure: single-cycle execution (Tc = 800 ps) vs. pipelined execution (Tc = 200 ps).
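With these two clock periods, the total time for a run of n instructions follows directly. The sketch below assumes an ideal five-stage pipeline with no hazards or stalls. After 4 cycles to fill the pipeline, one instruction completes every cycle. Function names and defaults are illustrative.

```python
def single_cycle_time_ps(n: int, tc_ps: int = 800) -> int:
    """Each instruction occupies the whole datapath for one long cycle."""
    return n * tc_ps


def pipelined_time_ps(n: int, tc_ps: int = 200, stages: int = 5) -> int:
    """Fill the pipeline (stages - 1 cycles), then one instruction completes per cycle.

    Assumes an ideal pipeline: no hazards, stalls or flushes.
    """
    if n <= 0:
        return 0
    return (stages - 1 + n) * tc_ps


def speedup(n: int) -> float:
    """Ratio of single-cycle to pipelined execution time for n instructions."""
    return single_cycle_time_ps(n) / pipelined_time_ps(n)


if __name__ == "__main__":
    print(single_cycle_time_ps(3), pipelined_time_ps(3))
    print(f"{speedup(10**6):.4f}")
```

For three instructions this gives 2400 ps vs. 1400 ps. For long runs the speedup approaches 800/200 = 4 rather than 5, because the stages are unbalanced: the 100 ps ID and WB steps still occupy a full 200 ps slot.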

dt10 2011 11.21

dt10 2011 11.22