ST 430/514 Introduction to Regression Analysis/Statistics for Management and the Social Sciences II

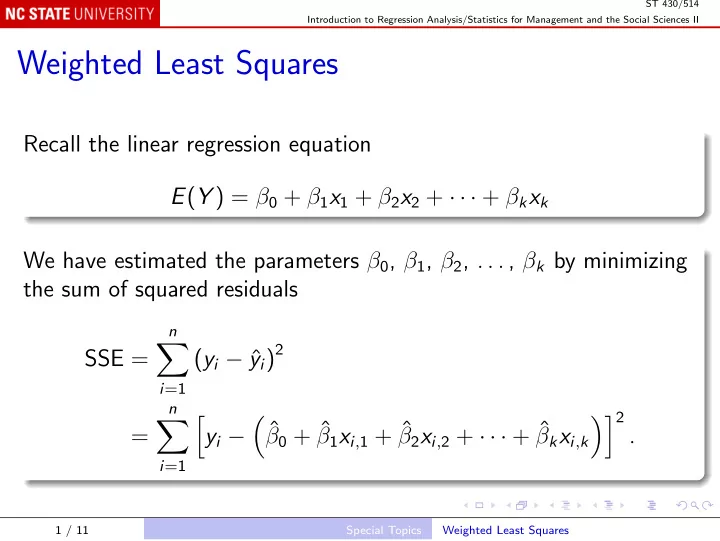

Weighted Least Squares

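Recall the linear regression equation

$$E(Y) = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \cdots + \beta_k x_k.$$

We have estimated the parameters $\beta_0, \beta_1, \beta_2, \ldots, \beta_k$ by minimizing the sum of squared residuals

$$\mathrm{SSE} = \sum_{i=1}^{n} \left(y_i - \hat{y}_i\right)^2 = \sum_{i=1}^{n} \left[ y_i - \left( \hat{\beta}_0 + \hat{\beta}_1 x_{i,1} + \hat{\beta}_2 x_{i,2} + \cdots + \hat{\beta}_k x_{i,k} \right) \right]^2.$$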
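As a rough illustration of the minimization recalled above, the following Python sketch computes the least squares estimates and the resulting sum of squared residuals with NumPy. The function names `fit_ols` and `sse` and the simulated data are assumptions made for this example; they are not part of the course material.

```python
import numpy as np


def design_matrix(X, n):
    """Prepend a column of ones so the first coefficient is the intercept."""
    return np.column_stack([np.ones(n), np.asarray(X, dtype=float)])


def fit_ols(X, y):
    """Return the estimates (beta0_hat, ..., betak_hat) that minimize SSE."""
    y = np.asarray(y, dtype=float)
    design = design_matrix(X, len(y))
    # lstsq solves the least squares problem min ||y - design @ beta||^2.
    beta_hat, *_ = np.linalg.lstsq(design, y, rcond=None)
    return beta_hat


def sse(X, y, beta):
    """Sum of squared residuals for a given coefficient vector."""
    y = np.asarray(y, dtype=float)
    residuals = y - design_matrix(X, len(y)) @ np.asarray(beta, dtype=float)
    return float(residuals @ residuals)


# Simulated (hypothetical) data with k = 2 predictors.
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 2))
y = 1.0 + 2.0 * X[:, 0] - 0.5 * X[:, 1] + rng.normal(scale=0.3, size=50)

beta_hat = fit_ols(X, y)
print("estimates:", beta_hat)
print("SSE at the estimates:", sse(X, y, beta_hat))
```

Evaluating `sse` at any other coefficient vector, for example `beta_hat + 0.1`, should give a value at least as large, which is what minimizing SSE means.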
