SLIDE 1

Verifying Electronic Voting Protocols in the Applied Pi Calculus

Mark Ryan, University of Birmingham
Joint work with Stéphanie Delaune and Steve Kremer, LSV Cachan, France
SoCS Seminar, November 2007

SLIDE 2

SLIDE 3

Outline

SLIDE 4

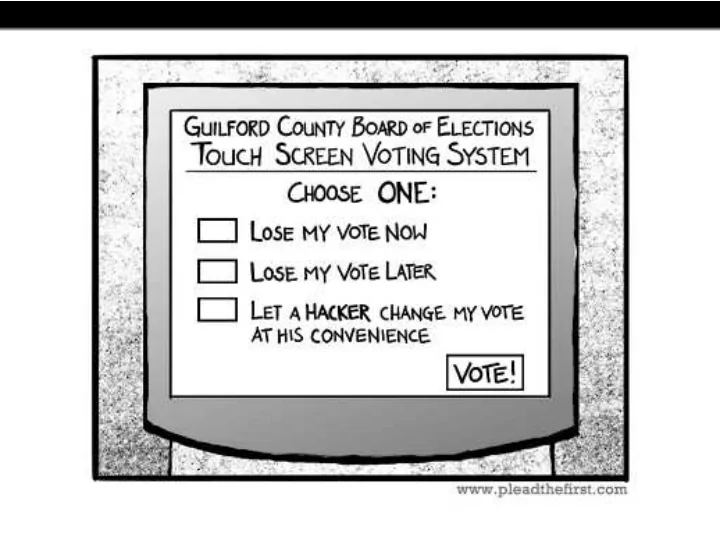

Electronic voting

Electronic voting has the potential to provide:
- more efficient elections, with higher voter participation, greater accuracy and lower costs compared to manual methods;
- better security than manual methods, such as
  - vote-privacy, even in the presence of corrupt election authorities;
  - voter verification, i.e. the ability of voters and observers to check the declared outcome against the votes cast.

Governments around the world have been trialling e-voting, e.g. in the USA, the UK, Canada, Brazil, the Netherlands and Estonia.

SLIDE 5

Current situation

The potential benefits have turned out to be hard to realise. In the UK, the May 2007 elections included 5 local authorities that piloted a range of electronic voting machines.

The Electoral Commission report concluded that the implementation and security risk was significant and unacceptable, and recommended that no further e-voting take place until a sufficiently secure and transparent system is available.

In the USA, the Diebold controversy has run since 2003, when its code leaked on the internet. The Kohno/Stubblefield/Rubin/Wallach analysis concluded that the Diebold system was far below even the most minimal security standards: voters without insider privileges can cast unlimited votes without being detected.

SLIDE 6

Current situation in USA, continued

In 2007, the Secretary of State for California commissioned a “top-to-bottom” review, by computer science academics, of the four machines certified for use in the state. The result is a catalogue of vulnerabilities, including:
- appalling software engineering practices, such as hardcoding crypto keys in source code, bypassing OS protection mechanisms, . . .
- susceptibility of voting machines to viruses that propagate from machine to machine, and that could maliciously cause votes to be recorded incorrectly or miscounted;
- “weakness-in-depth”: architecturally unsound systems in which, even as known flaws are fixed, new ones are discovered.

In response to these reports, she decertified all four types of voting machine for regular use in California on 3 August 2007.

SLIDE 7

Voting system: desired properties

- Eligibility: only legitimate voters can vote, and only once (this also implies that the voting authorities cannot insert votes)
- Fairness: no early results can be obtained which could influence the remaining voters
- Privacy: the fact that a particular voter voted in a particular way is not revealed to anyone
- Receipt-freeness: a voter cannot later prove to a coercer that she voted in a certain way
- Coercion-resistance: a voter cannot interactively cooperate with a coercer to prove that she voted in a certain way
- Individual verifiability: a voter can verify that her vote was really counted
- Universal verifiability: a voter can verify that the published outcome really is the sum of all the votes

. . . and all this even in the presence of corrupt election authorities!

SLIDE 8

Are these properties even simultaneously satisfiable?

Contradiction?
- Eligibility: only legitimate voters can vote, and only once
- Effectiveness: the number of votes for each candidate is published after the election
- Privacy: the fact that a particular voter voted in a particular way is not revealed to anyone (not even the election authorities)

Contradiction?
- Receipt-freeness: a voter cannot later prove to a coercer that she voted in a certain way
- Individual verifiability: a voter can verify that her vote was really counted
- Individual verifiability (stronger): . . . , and if her vote wasn’t counted, she can prove that.

SLIDE 9

How could it be secure?

SLIDE 10

Security by trusted client software

[Diagram with annotations:]
- trusted by the user; does not need to be trusted by the authorities or other voters
- not trusted by the user; does not need to be trusted by anyone

SLIDE 11

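FOO’92 protocol

[FujiokaOkamotoOhta92] Participants: Alice, aDministrator, Collector.

Phase I:
Alice → Administrator: $\{blind(commit(v,c),b)\}_{A^{-1}}$
Administrator → Alice: $\{blind(commit(v,c),b)\}_{D^{-1}}$
Alice computes $unblind(\ldots) = \{commit(v,c)\}_{D^{-1}}$

Phase II:
Alice → Collector: $\{commit(v,c)\}_{D^{-1}}$
Collector publishes $(l, \{commit(v,c)\}_{D^{-1}})$

Phase III:
Alice → Collector: $(l, c)$
Collector computes $open(\ldots) = v$ and publishes $v$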

SLIDE 12

LBDKYY’03 protocol

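[LeeBoydDawsonKimYangYoo] Participants: Alice, Administrator, Collector.

Alice → Administrator: $Sign_{Alice}(\{v\}_{Coll,c_1})$
Administrator: $\{v\}_{Coll,c_2} = reencrypt(\{v\}_{Coll,c_1})$
Administrator → Alice: $Sign_{Admin}(\{v\}_{Coll,c_2})$, $DVP(\{v\}_{Coll,c_1}, \{v\}_{Coll,c_2})$
Alice → Collector: $Sign_{Admin}(\{v\}_{Coll,c_2})$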

SLIDE 13

Attacker model

Ideally, we want to model a very powerful attacker:
- It has “Dolev-Yao” capabilities, i.e. it
  - completely controls the communication channels, so it is able to record, alter, delete, insert, redirect, reorder, and reuse past or current messages, and inject new messages (the network is the attacker);
  - can manipulate data in arbitrary ways, including applying crypto operations, provided it has the necessary keys.
- It includes the election authorities.
- It includes the other voters.
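The deduction half of these Dolev-Yao capabilities can be sketched in a few lines. The Python below is a minimal illustration, not part of the applied pi-calculus machinery. It assumes a hypothetical tuple encoding with only pairing and symmetric encryption (`("pair", t1, t2)`, `("senc", m, k)`) and atomic keys. It closes the attacker's observed messages under projection and under decryption with known keys, then checks whether a target can be rebuilt from what is known.

```python
from typing import FrozenSet, Tuple, Union

# Hypothetical term encoding: atoms are strings; compound terms are
# ("pair", t1, t2) or ("senc", plaintext, key). Keys are assumed atomic.
Term = Union[str, Tuple]


def analyse(knowledge: FrozenSet[Term]) -> FrozenSet[Term]:
    """Close a set of terms under pair projection and decryption with known keys."""
    known = set(knowledge)
    changed = True
    while changed:                      # iterate until a fixpoint is reached
        changed = False
        for t in list(known):
            if isinstance(t, tuple) and t[0] == "pair":
                new = {t[1], t[2]}      # both components of a pair are learnt
            elif isinstance(t, tuple) and t[0] == "senc" and t[2] in known:
                new = {t[1]}            # decrypt only if the key is already known
            else:
                continue
            if not new <= known:
                known |= new
                changed = True
    return frozenset(known)


def can_derive(target: Term, knowledge: FrozenSet[Term]) -> bool:
    """Analyse the observed messages, then try to synthesise target from them."""
    known = analyse(knowledge)

    def synth(t: Term) -> bool:
        if t in known:
            return True
        if isinstance(t, tuple) and t[0] in ("pair", "senc"):
            return synth(t[1]) and synth(t[2])   # attacker may build pairs/ciphertexts
        return False

    return synth(target)


# A vote encrypted under k is observed; k later leaks inside a pair.
observed = frozenset({("senc", "vote_a", "k"), ("pair", "k", "nonce")})
print(can_derive("vote_a", observed))                              # True
print(can_derive("vote_a", frozenset({("senc", "vote_a", "k")})))  # False
```

Real tools handle many more primitives and treat the equational theory generically. This sketch only shows why “provided it has the necessary keys” matters.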

SLIDE 14

Where are we?

SLIDE 15

The applied π-calculus

Applied pi-calculus [Abadi & Fournet, 01]:
- basic programming language with constructs for concurrency and communication
- based on the π-calculus [Milner et al., 92]
- in some ways similar to the spi-calculus [Abadi & Gordon, 98], but more general w.r.t. cryptography

Advantages:
- naturally models a Dolev-Yao attacker
- allows us to model less classical cryptographic primitives
- both reachability and equivalence-based specification of properties
- automated proofs using the ProVerif tool [Blanchet]
- powerful proof techniques for hand proofs
- successfully used to analyse a variety of security protocols

SLIDE 16

How to verify protocols in the applied-pi framework

1. Create equations to model the cryptography.

Examples:

(1) Encryption and signatures
    decrypt(encrypt(m, pk(k)), k) = m
    checksign(sign(m, k), m, pk(k)) = ok
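These equations can be read as left-to-right rewrite rules. The Python function below is a minimal sketch that normalises a term bottom-up using exactly the two equations above. It assumes a hypothetical tuple encoding of terms (strings are atoms, `("f", ...)` is an application of `f`). It illustrates the idea only and is not how ProVerif represents equational theories internally.

```python
from typing import Tuple, Union

Term = Union[str, Tuple]  # atoms are strings; ("f", arg1, ...) is f applied to args


def normalise(t: Term) -> Term:
    """Rewrite bottom-up with:
         decrypt(encrypt(m, pk(k)), k)    -> m
         checksign(sign(m, k), m, pk(k))  -> ok
    """
    if isinstance(t, str):
        return t
    head, *args = t
    args = [normalise(a) for a in args]          # normalise arguments first
    if head == "decrypt":
        c, k = args
        if isinstance(c, tuple) and c[0] == "encrypt" and c[2] == ("pk", k):
            return c[1]
    if head == "checksign":
        s, m, pub = args
        if (isinstance(s, tuple) and s[0] == "sign" and s[1] == m
                and pub == ("pk", s[2])):
            return "ok"
    return (head, *args)                          # no rule applies: term is stuck


ct = ("encrypt", "m", ("pk", "k"))
print(normalise(("decrypt", ct, "k")))                                  # m
print(normalise(("checksign", ("sign", "m", "k"), "m", ("pk", "k"))))   # ok
print(normalise(("decrypt", ct, "k2")))       # unchanged: wrong key, no rewrite
```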

SLIDE 17


(2) Blind signatures
    unblind(sign(blind(m, r), sk), r) = sign(m, sk)
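The blind-signature equation is abstract, but it is satisfied, for example, by Chaum's RSA blind signatures, with blind(m, r) = m·r^e mod n and unblind(s, r) = s·r⁻¹ mod n. The Python sketch below checks the equation numerically using the textbook toy key p = 61, q = 53, e = 17. These parameters are hypothetical and far too small to be secure. The applied pi model relies only on the equation, not on this particular instantiation.

```python
# Toy RSA parameters (textbook example; hypothetical and insecure, illustration only).
p, q = 61, 53
n = p * q                              # modulus, 3233
e = 17                                 # public exponent
d = pow(e, -1, (p - 1) * (q - 1))      # private exponent sk (needs Python 3.8+)


def sign(m: int, sk: int) -> int:
    """Textbook RSA signature: m^sk mod n (no hashing or padding)."""
    return pow(m, sk, n)


def blind(m: int, r: int) -> int:
    """Multiply by r^e so that the signer never sees m itself."""
    return (m * pow(r, e, n)) % n


def unblind(s: int, r: int) -> int:
    """(m * r^e)^d = m^d * r (mod n), so multiplying by r^-1 leaves m^d."""
    return (s * pow(r, -1, n)) % n


m, r = 65, 7                           # message and blinding factor (r coprime to n)
print(unblind(sign(blind(m, r), d), r) == sign(m, d))   # True
```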

SLIDE 18


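(3) Designated verifier proof of re-encryption
    The term dvp(x, rencrypt(x,r), r, pkv) represents a proof, designated for the owner of pkv, that x and rencrypt(x,r) have the same plaintext.
    checkdvp(dvp(x, rencrypt(x,r), r, pkv), x, rencrypt(x,r), pkv) = ok
    checkdvp(dvp(x, y, z, skv), x, y, pk(skv)) = ok
    The second equation lets the owner of skv build an accepted proof for any x and y, so the proof convinces its owner but cannot be used to convince anyone else.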
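Read operationally, the two checkdvp equations accept a proof if it is genuine or if it was built with the designated verifier's own secret key. The Python sketch below encodes exactly these two cases over a hypothetical tuple encoding of terms. The ciphertext term and key names in the usage lines are invented for illustration.

```python
from typing import Tuple, Union

Term = Union[str, Tuple]  # atoms are strings; ("f", args...) is f applied to args


def checkdvp(proof: Term, x: Term, y: Term, pub: Term) -> bool:
    """True iff checkdvp(proof, x, y, pub) = ok under the two equations:
         checkdvp(dvp(x, rencrypt(x, r), r, pkv), x, rencrypt(x, r), pkv) = ok
         checkdvp(dvp(x, y, z, skv), x, y, pk(skv)) = ok
    """
    if not (isinstance(proof, tuple) and proof[0] == "dvp"):
        return False
    _, px, py, pz, pkey = proof
    if px != x or py != y:
        return False
    # Equation 1: genuine proof, built from the re-encryption randomness pz.
    if y == ("rencrypt", x, pz) and pkey == pub:
        return True
    # Equation 2: proof built with the verifier's own secret key (pkey = skv).
    return pub == ("pk", pkey)


c = ("enc", "v", "pkC", "r1")          # hypothetical ciphertext term
c2 = ("rencrypt", c, "r2")
pk_alice = ("pk", "skAlice")
print(checkdvp(("dvp", c, c2, "r2", pk_alice), c, c2, pk_alice))            # True
forged = ("dvp", c, "anything", "junk", "skAlice")
print(checkdvp(forged, c, "anything", pk_alice))             # True: Alice can fake it
print(checkdvp(forged, c, "anything", ("pk", "skBob")))      # False
```

Because Alice could have produced an accepted proof for any pair of terms, showing it to a third party proves nothing.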

SLIDE 19


2. For each property to be verified, decide who
   - is protected, i.e. for whom the property will be verified;
   - must be honest in order to guarantee the property;
   - may be dishonest, i.e. may be controlled by the DY attacker.

Examples:

Protocol | Property | Protected | Must be honest | May be dishonest
FOO | eligibility | voters | (none) | other voters, admin, collector
FOO | fairness | voters | (none) | other voters, admin, collector
FOO | privacy | voters | same voters | admin, collector
Lee et al. | privacy | voters | same voters, admin | collector
Lee et al. | receipt-freeness | voters | same voters, admin, collector | other voters

SLIDE 20


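3. Code the honest parties as processes.

Example ([FOO’92]):

    let processV =
      (* phase 0: obtain the administrator's signature on the blinded commitment *)
      new b; new c;
      let bcv = blind(commit(v,c),b) in
      out(ch, sign(bcv, skv));
      in(ch, m2);
      if getMess(m2, pka) = bcv then
      let scv = unblind(m2, b) in
      (* phase 1: send the signed commitment to the collector *)
      phase 1;
      out(ch, scv);
      (* wait until the collector publishes it under some label l *)
      in(ch, (l, =scv));
      (* phase 2: reveal the commitment key so the vote can be opened *)
      phase 2;
      out(ch, (l, c)).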

[Diagram: the FOO’92 message exchange between Alice, aDministrator and Collector, as on slide 11]
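The following Python sketch replays one run of processV symbolically against a scripted, honest administrator, to show the voter's checks in action. It is a hypothetical illustration. The tuple encoding, the helper names, the fixed stand-ins for the fresh names b and c, and the reading of getMess as “return the signed message if the public key matches” are all assumptions. Unlike the applied pi model, it does not let an attacker choose the messages.

```python
from typing import List, Optional, Tuple, Union

# Hypothetical encoding: atoms are strings, ("f", args...) is f applied to args.
Term = Union[str, Tuple]


def sign(m: Term, sk: str) -> Term:
    return ("sign", m, sk)


def get_mess(s: Term, pub: Term) -> Term:
    # Assumed reading of getMess: the signed message, if pub = pk(sk) matches.
    if isinstance(s, tuple) and s[0] == "sign" and pub == ("pk", s[2]):
        return s[1]
    return "fail"


def unblind(s: Term, r: str) -> Term:
    # unblind(sign(blind(m, r), sk), r) = sign(m, sk); otherwise no rewrite.
    if (isinstance(s, tuple) and s[0] == "sign" and isinstance(s[1], tuple)
            and s[1][0] == "blind" and s[1][2] == r):
        return sign(s[1][1], s[2])
    return ("unblind", s, r)


def run_voter(v: str, skv: str, ska: str, label: str,
              admin_reply: Optional[Term] = None) -> Optional[List[Term]]:
    """Replay processV; returns the voter's three outputs, or None if a check fails."""
    b, c = "b_fresh", "c_fresh"                  # stand-ins for `new b; new c`
    bcv = ("blind", ("commit", v, c), b)
    msg1 = sign(bcv, skv)                        # out(ch, sign(bcv, skv))
    # Honest administrator signs the blinded commitment, unless a reply is forced.
    m2 = admin_reply if admin_reply is not None else sign(bcv, ska)
    if get_mess(m2, ("pk", ska)) != bcv:         # if getMess(m2, pka) = bcv then ...
        return None
    scv = unblind(m2, b)                         # phase 1; out(ch, scv)
    return [msg1, scv, (label, c)]               # phase 2; out(ch, (l, c)), l from collector


print(run_voter("a", "skA", "skD", "l1")[1])   # ('sign', ('commit', 'a', 'c_fresh'), 'skD')
print(run_voter("a", "skA", "skD", "l1", admin_reply=sign("junk", "skD")))  # None
```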

SLIDE 21


4. Code the intended property, as a reachability property or an observational equivalence property.

Examples:

Property | Type | Intuition
Eligibility | reachability | an ineligible vote is not published
Fairness | reachability | without the last phase, no votes are published
Privacy | observational equivalence | undetectable whether A and B swap votes
Receipt-freeness | observational equivalence | even if A cooperates with the attacker, undetectable whether A and B swap votes

SLIDE 22


The framework automatically considers all possible behaviours of the dishonest parties. If one can prove in the framework that the property holds, then there are no attacks of the kind considered. If one cannot prove the property in the framework, then there may be an attack of the kind considered. ProVerif is an incomplete tool for the framework: it may fail to prove a property that does hold.

SLIDE 23

Formalisation of vote-privacy

Privacy is classically modelled as an observational equivalence between two slightly different processes P1 and P2, but:
- changing the identity does not work, as identities are revealed;
- changing the vote does not work, as the votes are revealed at the end.

Instead, consider two honest voters and swap their votes.

Definition (Privacy). A voting protocol respects privacy if
$S[V_A\{a/v\} \mid V_B\{b/v\}] \approx_\ell S[V_A\{b/v\} \mid V_B\{a/v\}]$.
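The published tally alone shows why votes are swapped rather than changed. In this minimal Python sketch (the two-voter election and candidate names are hypothetical), changing A's vote changes the result that every observer sees. Swapping A's and B's votes leaves the result unchanged. Only the swapped pair of scenarios can therefore be indistinguishable.

```python
from collections import Counter


def tally(ballots: dict) -> Counter:
    """Published outcome: how many votes each candidate received."""
    return Counter(ballots.values())


original = {"A": "a", "B": "b"}
changed = {"A": "b", "B": "b"}   # change A's vote only
swapped = {"A": "b", "B": "a"}   # swap A's and B's votes

print(tally(original) == tally(changed))  # False: the result itself differs
print(tally(original) == tally(swapped))  # True: the result is identical
```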

SLIDE 24

Leaking secrets to the coercer

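To model receipt-freeness we need to specify that a coerced voter cooperates with the coercer by leaking secrets on a channel ch.

$P, Q ::= 0 \;\mid\; P \,|\, Q \;\mid\; \nu n.P \;\mid\; in(u,x).P \;\mid\; out(u,M).P \;\mid\; \text{if } M = N \text{ then } P \text{ else } Q \;\mid\; !P \;\mid\; \ldots$

$P^{ch}$ is defined in terms of $P$:
- $0^{ch} = 0$
- $(P \mid Q)^{ch} = P^{ch} \mid Q^{ch}$
- $(\nu n.P)^{ch} = \nu n.out(ch,n).P^{ch}$
- $(in(u,x).P)^{ch} = in(u,x).out(ch,x).P^{ch}$
- $(out(u,M).P)^{ch} = out(u,M).P^{ch}$
- . . .

We denote by $P^{\setminus out(ch,\cdot)}$ the process $\nu ch.(P \mid\ !in(ch,x))$.

Lemma: $(P^{ch})^{\setminus out(ch,\cdot)} \approx_\ell P$.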
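The transformation P ↦ P^ch is a straightforward recursion over process syntax. The Python sketch below implements the listed cases on a hypothetical AST for a fragment of the calculus, with terms simplified to strings. The class names are invented. Treating replication and conditionals homomorphically (cases elided on the slide) is an assumption.

```python
from dataclasses import dataclass
from typing import Union


# Hypothetical AST for a fragment of the applied pi-calculus; terms are strings.
@dataclass(frozen=True)
class Nil:
    pass


@dataclass(frozen=True)
class Par:
    left: "Proc"
    right: "Proc"


@dataclass(frozen=True)
class New:
    name: str
    body: "Proc"


@dataclass(frozen=True)
class In:
    chan: str
    var: str
    body: "Proc"


@dataclass(frozen=True)
class Out:
    chan: str
    msg: str
    body: "Proc"


@dataclass(frozen=True)
class Bang:
    body: "Proc"


@dataclass(frozen=True)
class If:
    lhs: str
    rhs: str
    then: "Proc"
    orelse: "Proc"


Proc = Union[Nil, Par, New, In, Out, Bang, If]


def leak(p: Proc, ch: str) -> Proc:
    """Return P^ch: the process that also sends every fresh name and every input on ch."""
    if isinstance(p, Nil):                       # 0^ch = 0
        return p
    if isinstance(p, Par):                       # (P | Q)^ch = P^ch | Q^ch
        return Par(leak(p.left, ch), leak(p.right, ch))
    if isinstance(p, New):                       # (νn.P)^ch = νn.out(ch, n).P^ch
        return New(p.name, Out(ch, p.name, leak(p.body, ch)))
    if isinstance(p, In):                        # (in(u,x).P)^ch = in(u,x).out(ch,x).P^ch
        return In(p.chan, p.var, Out(ch, p.var, leak(p.body, ch)))
    if isinstance(p, Out):                       # (out(u,M).P)^ch = out(u,M).P^ch
        return Out(p.chan, p.msg, leak(p.body, ch))
    # Assumption: replication and conditionals are handled homomorphically.
    if isinstance(p, Bang):
        return Bang(leak(p.body, ch))
    if isinstance(p, If):
        return If(p.lhs, p.rhs, leak(p.then, ch), leak(p.orelse, ch))
    raise TypeError(f"unknown process: {p!r}")


voter = New("r", In("u", "x", Out("u", "x", Nil())))
print(leak(voter, "chc"))
```

In the output, the fresh name r and the received x are each forwarded on chc before the voter continues, exactly as the rules prescribe.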

SLIDE 25

Receipt-freeness

Intuition: there exists a process V′ which votes a, leaks (possibly fake) secrets to the coercer, and makes the coercer believe she voted c.

Definition (Receipt-freeness). A voting protocol is receipt-free if there exists a process V′ satisfying
- $V'^{\setminus out(chc,\cdot)} \approx_\ell V_A\{a/v\}$,
- $S[V_A\{c/v\}^{chc} \mid V_B\{a/v\}] \approx_\ell S[V' \mid V_B\{c/v\}]$.

Case study: Lee et al. protocol. We prove receipt-freeness by
- exhibiting V′;
- showing that $V'^{\setminus out(chc,\cdot)} \approx_\ell V_A\{a/v\}$;
- showing that $S[V_A\{c/v\}^{chc} \mid V_B\{a/v\}] \approx_\ell S[V' \mid V_B\{c/v\}]$.

SLIDE 26

Coercion resistance

Like receipt-freeness, but the voter interacts with the coercer during the protocol (instead of just supplying data at the end). The voting booth makes coercion resistance possible.

Interactively communicating with the coercer: $P^{c_1,c_2}$ is defined in terms of $P$:
- $0^{c_1,c_2} = 0$
- $(P \mid Q)^{c_1,c_2} = P^{c_1,c_2} \mid Q^{c_1,c_2}$
- $(\nu n.P)^{c_1,c_2} = \nu n.out(c_1,n).P^{c_1,c_2}$
- $(in(u,x).P)^{c_1,c_2} = in(u,x).out(c_1,x).P^{c_1,c_2}$
- $(out(u,M).P)^{c_1,c_2} = in(c_2,x).out(u,x).P^{c_1,c_2}$
- $(!P)^{c_1,c_2} = !P^{c_1,c_2}$
- $(\text{if } M = N \text{ then } P \text{ else } Q)^{c_1,c_2} = in(c_2,x).\,\text{if } x = true \text{ then } P^{c_1,c_2} \text{ else } Q^{c_1,c_2}$
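Compared with P^ch, the transformation P^{c1,c2} also hands control of outputs and branching to the coercer. This Python sketch implements the listed cases over a hypothetical tuple encoding of processes. As on the slide, it assumes the input variable x introduced in the out and if cases is fresh; it does not rename variables to avoid capture.

```python
from typing import Tuple

# Hypothetical encoding: ("nil",), ("par", P, Q), ("new", n, P), ("in", u, x, P),
# ("out", u, M, P), ("bang", P), ("if", M, N, P, Q).
Proc = Tuple


def coerced(p: Proc, c1: str, c2: str) -> Proc:
    """Return P^{c1,c2}: leak on c1, take outputs and branch choices from c2."""
    tag = p[0]
    if tag == "nil":
        return p
    if tag == "par":
        return ("par", coerced(p[1], c1, c2), coerced(p[2], c1, c2))
    if tag == "new":    # νn.out(c1, n).P^{c1,c2}
        return ("new", p[1], ("out", c1, p[1], coerced(p[2], c1, c2)))
    if tag == "in":     # in(u, x).out(c1, x).P^{c1,c2}
        return ("in", p[1], p[2], ("out", c1, p[2], coerced(p[3], c1, c2)))
    if tag == "out":    # in(c2, x).out(u, x).P^{c1,c2}: the coercer supplies the message
        return ("in", c2, "x", ("out", p[1], "x", coerced(p[3], c1, c2)))
    if tag == "bang":   # !P^{c1,c2}
        return ("bang", coerced(p[1], c1, c2))
    if tag == "if":     # in(c2, x). if x = true then P^{c1,c2} else Q^{c1,c2}
        return ("in", c2, "x",
                ("if", "x", "true", coerced(p[3], c1, c2), coerced(p[4], c1, c2)))
    raise ValueError(f"unknown process tag: {tag}")


ballot = ("new", "r", ("out", "u", "enc_v_r", ("nil",)))
print(coerced(ballot, "c1", "c2"))
# ('new', 'r', ('out', 'c1', 'r', ('in', 'c2', 'x', ('out', 'u', 'x', ('nil',)))))
```

The original output enc_v_r disappears: the voter now sends whatever the coercer provides on c2.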

SLIDE 27

Coercion resistance

Definition (Coercion resistance). VP is coercion resistant if there exists a process V′ such that, for any context $C = \nu c_1.\nu c_2.(\_ \mid P)$ satisfying $\tilde n \cap fn(C) = \emptyset$ and
$S[C[V_A\{?/v\}^{c_1,c_2}] \mid V_B\{a/v\}] \approx_\ell S[V_A\{c/v\}^{chc} \mid V_B\{a/v\}]$,
we have
- $C[V']^{\setminus out(chc,\cdot)} \approx_\ell V_A\{a/v\}$,
- $S[C[V_A\{?/v\}^{c_1,c_2}] \mid V_B\{a/v\}] \approx_\ell S[C[V'] \mid V_B\{c/v\}]$.

Intuitively, C together with the environment represents the coercer. The definition says there is a strategy V′ for the voter such that, if the coercer is trying to force A to vote c, then A can follow V′, which results in a vote for a but still satisfies the coercer.

SLIDE 28

Privacy properties

Proposition. Let VP be a voting protocol. Then

VP is coercion-resistant
⇓
VP is receipt-free
⇓
VP respects privacy

SLIDE 29

Where are we?

SLIDE 30

How to decide observational equivalence

$V[ska/skv, a/v] \mid V[skb/skv, b/v] \approx V[ska/skv, b/v] \mid V[skb/skv, a/v]$

Labelled bisimilarity ($A \approx_\ell B$): the largest symmetric relation R on processes such that A R B implies
1. $A \approx_E B$ (static equivalence);
2. if $A \rightarrow A'$, then $\exists B'.\ B \rightarrow^* B'$ and $A' \mathrel{R} B'$;
3. if $A \xrightarrow{\alpha} A'$, then $\exists B'.\ B \rightarrow^* \xrightarrow{\alpha} \rightarrow^* B'$ and $A' \mathrel{R} B'$.

Static equivalence ($A \approx_E B$): processes A and B are statically equivalent if dom(A) = dom(B) and, for all terms M, N, $(M =_E N)\varphi(A)$ if and only if $(M =_E N)\varphi(B)$.

ProVerif is a tool which partly automates checking labelled bisimilarity. To prove this case, one has to match:
- up to the first phase command, process 1 with 1, and 2 with 2;
- thereafter, 1 with 2, and 2 with 1;

. . . and then do reasoning about static equivalence. ProVerif is not able to swap the processes being matched.
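Static equivalence quantifies over all recipes M, N, so it cannot be decided by naive enumeration; ProVerif reasons symbolically instead. Purely to illustrate the definition, the Python sketch below searches a small bounded set of recipes for a test that holds in one frame but not in the other. The recipes are frame variables, the public names a and b, and one application of the hypothetical constructors aenc or h. There are no destructors and equality is syntactic, so a result of None only means that no distinguishing test was found within the bound.

```python
from itertools import product
from typing import Dict, Optional, Tuple, Union

Term = Union[str, Tuple]
Frame = Dict[str, Term]          # frame variable -> term it stands for
PUBLIC = ["a", "b"]              # hypothetical public names usable in recipes


def recipes(frame_vars):
    """Recipes of depth <= 1 over frame variables and public names."""
    base = list(frame_vars) + PUBLIC
    yield from base
    for r in base:
        yield ("h", r)
    for r1, r2 in product(base, repeat=2):
        yield ("aenc", r1, r2)


def evaluate(recipe: Term, frame: Frame) -> Term:
    """Instantiate a recipe's frame variables with the frame's terms."""
    if isinstance(recipe, str):
        return frame.get(recipe, recipe)   # frame variable, or a public name
    head, *args = recipe
    return (head, *(evaluate(x, frame) for x in args))


def distinguishing_test(f1: Frame, f2: Frame) -> Optional[Tuple[Term, Term]]:
    """Return recipes (M, N) with M = N in exactly one frame, or None if none found."""
    assert f1.keys() == f2.keys(), "dom(A) must equal dom(B)"
    rs = list(recipes(f1.keys()))
    for m, n in product(rs, repeat=2):
        if (evaluate(m, f1) == evaluate(n, f1)) != (evaluate(m, f2) == evaluate(n, f2)):
            return (m, n)
    return None


f1 = {"x1": ("aenc", "a", ("pk", "k")), "x2": ("pk", "k")}
f2 = {"x1": ("aenc", "b", ("pk", "k")), "x2": ("pk", "k")}
print(distinguishing_test(f1, f2) is not None)                        # True
print(distinguishing_test({"x1": ("h", "n1")}, {"x1": ("h", "n2")}))  # None
```

In the first example, deterministic encryption of a vote under a known public key is distinguishable: re-encrypting a under x2 and comparing with x1 succeeds only in the first frame. In the second, hashes of two secret nonces cannot be told apart by these tests.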

SLIDE 31

Manually proving privacy

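$P = V[ska/skv, a/v] \mid V[skb/skv, b/v]$

$P \rightarrow^* \xrightarrow{out}\xrightarrow{out} \nu b_A.\nu c_A.\nu b_B.\nu c_B.(P_1 \mid \{sign(blind(commit(a,c_A),b_A),skva)/x_1\} \mid \{sign(blind(commit(b,c_B),b_B),skvb)/x_2\})$

$\rightarrow^* \xrightarrow{out}\xrightarrow{out} \nu b_A.\nu c_A.\nu b_B.\nu c_B.(P_2 \mid \{sign(blind(commit(a,c_A),b_A),skva)/x_1\} \mid \{sign(blind(commit(b,c_B),b_B),skvb)/x_2\} \mid \{sign(commit(a,c_A),skD)/x_3\} \mid \{sign(commit(b,c_B),skD)/x_4\})$

$\rightarrow^* \xrightarrow{out}\xrightarrow{out} \nu b_A.\nu c_A.\nu b_B.\nu c_B.(P_3 \mid \{sign(blind(commit(a,c_A),b_A),skva)/x_1\} \mid \{sign(blind(commit(b,c_B),b_B),skvb)/x_2\} \mid \{sign(commit(a,c_A),skD)/x_3\} \mid \{sign(commit(b,c_B),skD)/x_4\} \mid \{(l_A,c_A)/x_5\} \mid \{(l_B,c_B)/x_6\})$

$Q = V[ska/skv, b/v] \mid V[skb/skv, a/v]$

$Q \rightarrow^* \xrightarrow{out}\xrightarrow{out} \nu b_A.\nu c_A.\nu b_B.\nu c_B.(Q_1 \mid \{sign(blind(commit(b,c_A),b_A),skva)/x_1\} \mid \{sign(blind(commit(a,c_B),b_B),skvb)/x_2\})$

$\rightarrow^* \xrightarrow{out}\xrightarrow{out} \nu b_A.\nu c_A.\nu b_B.\nu c_B.(Q_2 \mid \{sign(blind(commit(b,c_A),b_A),skva)/x_1\} \mid \{sign(blind(commit(a,c_B),b_B),skvb)/x_2\} \mid \{sign(commit(a,c_B),skD)/x_3\} \mid \{sign(commit(b,c_A),skD)/x_4\})$

$\rightarrow^* \xrightarrow{out}\xrightarrow{out} \nu b_A.\nu c_A.\nu b_B.\nu c_B.(Q_3 \mid \{sign(blind(commit(b,c_A),b_A),skva)/x_1\} \mid \{sign(blind(commit(a,c_B),b_B),skvb)/x_2\} \mid \{sign(commit(a,c_B),skD)/x_3\} \mid \{sign(commit(b,c_A),skD)/x_4\} \mid \{(l_A,c_B)/x_5\} \mid \{(l_B,c_A)/x_6\})$

After the first phase command, the second voter in Q is matched with the first voter in P, so in Q the outputs $x_3$ and $x_5$ come from the voter with key skvb.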
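One family of tests an attacker can apply to the final frames is to open each published commitment with a revealed key. The Python sketch below computes the (label, vote) pairs obtainable this way. It uses a hypothetical tuple encoding and treats each frame as an unordered set of published terms. The blinded messages are ignored, because b_A and b_B stay restricted. Both final frames give the same pairs, which is consistent with the privacy claim. This is a sanity check on one kind of test, not a proof of static equivalence.

```python
from typing import Iterable, Set, Tuple, Union

Term = Union[str, Tuple]


def opened_votes(frame: Iterable[Term]) -> Set[Tuple[str, str]]:
    """Pairs (label, vote) obtainable by opening commit(v, c) with a published (l, c)."""
    terms = list(frame)
    commitments = [t[1] for t in terms
                   if isinstance(t, tuple) and t[0] == "sign"
                   and isinstance(t[1], tuple) and t[1][0] == "commit"]
    pairs = [t for t in terms if isinstance(t, tuple) and t[0] == "pair"]
    return {(label, vote)
            for _, label, c in pairs
            for _, vote, c2 in commitments if c == c2}


# Published terms of the final frames P3 and Q3 (hypothetical encoding).
P3 = {
    ("sign", ("blind", ("commit", "a", "cA"), "bA"), "skva"),
    ("sign", ("blind", ("commit", "b", "cB"), "bB"), "skvb"),
    ("sign", ("commit", "a", "cA"), "skD"),
    ("sign", ("commit", "b", "cB"), "skD"),
    ("pair", "lA", "cA"),
    ("pair", "lB", "cB"),
}
Q3 = {
    ("sign", ("blind", ("commit", "b", "cA"), "bA"), "skva"),
    ("sign", ("blind", ("commit", "a", "cB"), "bB"), "skvb"),
    ("sign", ("commit", "a", "cB"), "skD"),
    ("sign", ("commit", "b", "cA"), "skD"),
    ("pair", "lA", "cB"),
    ("pair", "lB", "cA"),
}

print(sorted(opened_votes(P3)))               # [('lA', 'a'), ('lB', 'b')]
print(opened_votes(P3) == opened_votes(Q3))   # True
```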

SLIDE 32

Summary of properties we have shown

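Entries give the result and, in parentheses, the authorities that must be trusted.

Property | FOO’92 | Oka. et al.’97 | Lee et al.’03
Eligibility | ✓ (trusted authorities: none) | |
Fairness | ✓ (trusted authorities: none) | |
Vote-privacy | ✓ (trusted authorities: none) | ✓ (trusted authorities: timeliness member) | ✓ (trusted authorities: administrator)
Receipt-freeness | × | ✓ (trusted authorities: timeliness member) | ✓ (trusted authorities: admin. & collector)
Coercion-resistance | × | × | ✓ (trusted authorities: admin. & collector)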

SLIDE 33

Conclusions and future work

Conclusions:
- Non-trivial crypto primitives in the “formal methods” approach to protocol verification
- First formal definitions of receipt-freeness and coercion-resistance
- coercion-resistance ⇒ receipt-freeness ⇒ privacy

Future work:
- Decision procedure for observational equivalence for