Ashwin Kalyan, ICML 2019: Trainable Decoding of Sets of Sequences for Neural Sequence Models

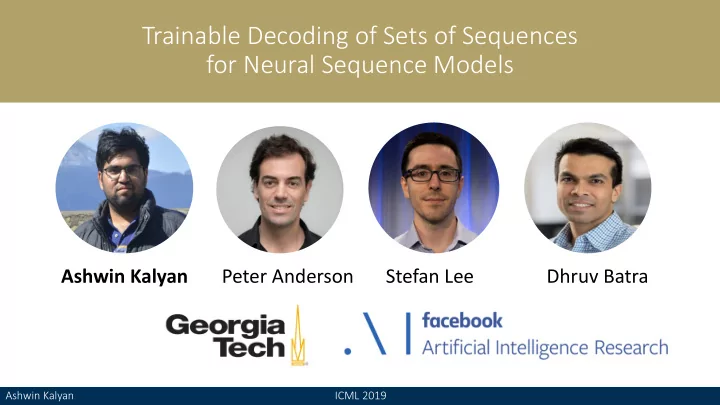

Trainable Decoding of Sets of Sequences for Neural Sequence Models

Ashwin Kalyan Dhruv Batra Stefan Lee Peter Anderson

Ashwin Kalyan ICML 2019


[Figure: a recurrent decoder unrolled in time, with hidden states h_{t-1}, h_t and outputs y_t, y_{t+1}, shown alongside a search tree over the tokens "This", "shows", "is", "the", "a", "picture", labeled B = 2.]

Example captions shown on the slide:

> A kitchen with a stove.

> A kitchen with a stove and a sink.

> A kitchen with a stove and a microwave.

> A kitchen with a stove and a refrigerator.
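The slide's "B = 2" label is not explained in the surviving text. Assuming B denotes a beam width, as is conventional, the following is a minimal sketch of standard beam search over a toy next-token table. It is not the trainable decoding procedure proposed in the talk; the `TOY` probabilities, the `toy_model` helper, and the `</s>` end marker are all hypothetical and chosen only for illustration.

```python
import math

def beam_search(step_log_probs, beam_size, max_len, eos="</s>"):
    """Standard beam search (illustrative sketch).

    step_log_probs(prefix) returns a dict mapping each candidate next
    token to its log-probability given the prefix (a tuple of tokens).
    Returns up to beam_size (sequence, score) pairs, best first.
    """
    beams = [((), 0.0)]   # live hypotheses: (token tuple, summed log-prob)
    finished = []         # hypotheses that have emitted the end marker
    for _ in range(max_len):
        # Expand every live hypothesis by every possible next token.
        candidates = []
        for prefix, score in beams:
            for token, logp in step_log_probs(prefix).items():
                candidates.append((prefix + (token,), score + logp))
        # Keep only the beam_size highest-scoring expansions.
        candidates.sort(key=lambda c: c[1], reverse=True)
        beams = []
        for seq, score in candidates[:beam_size]:
            if seq[-1] == eos:
                finished.append((seq, score))
            else:
                beams.append((seq, score))
        if not beams:
            break
    finished.extend(beams)  # hypotheses cut off at max_len
    finished.sort(key=lambda c: c[1], reverse=True)
    return finished[:beam_size]

# Hypothetical next-token probabilities; unseen prefixes end the sequence.
TOY = {
    (): {"This": 0.9, "A": 0.1},
    ("This",): {"is": 0.6, "shows": 0.4},
    ("This", "is"): {"a": 0.7, "the": 0.3},
    ("This", "shows"): {"a": 0.6, "the": 0.4},
}

def toy_model(prefix):
    probs = TOY.get(prefix, {"</s>": 1.0})
    return {tok: math.log(p) for tok, p in probs.items()}

results = beam_search(toy_model, beam_size=2, max_len=5)
for seq, score in results:
    print(" ".join(seq), round(math.exp(score), 3))
```

With a width of 2, only the two best partial sequences survive each step, so the returned hypotheses tend to share long prefixes, much like the near-identical kitchen captions listed above.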

