SLIDE 1

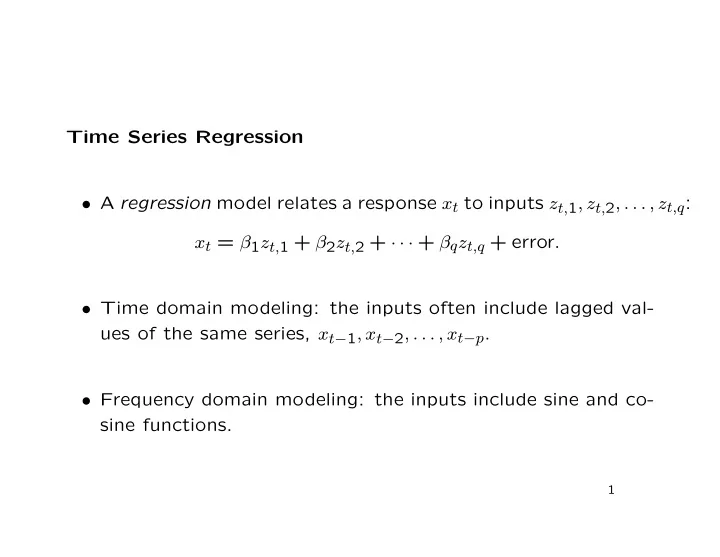

Time Series Regression

- A regression model relates a response $x_t$ to inputs $z_{t,1}, z_{t,2}, \ldots, z_{t,q}$:

$$x_t = \beta_1 z_{t,1} + \beta_2 z_{t,2} + \cdots + \beta_q z_{t,q} + \text{error}.$$
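The coefficients $\beta_1, \ldots, \beta_q$ are commonly estimated by ordinary least squares. The sketch below shows one way to do this with NumPy. The helper name `fit_regression` and the simulated data are hypothetical illustrations and do not come from these slides. Taking $z_{t,1} = 1$ for every $t$ gives the model an intercept.

```python
import numpy as np

def fit_regression(x, Z):
    """Least-squares estimate of beta in x_t = sum_j beta_j * z_{t,j} + error.

    x : array of shape (n,), the response series x_1, ..., x_n
    Z : array of shape (n, q), row t holds the inputs z_{t,1}, ..., z_{t,q}
    """
    # lstsq returns (solution, residual sums, rank, singular values);
    # only the solution is needed here.
    beta_hat, *_ = np.linalg.lstsq(Z, x, rcond=None)
    return beta_hat

# Hypothetical simulated data, used only to illustrate the fit.
rng = np.random.default_rng(0)
n = 200
# Column of ones plays the role of z_{t,1} = 1 (an intercept term).
Z = np.column_stack([np.ones(n), rng.normal(size=(n, 2))])
beta_true = np.array([2.0, -1.0, 0.5])
x = Z @ beta_true + rng.normal(scale=0.1, size=n)

beta_hat = fit_regression(x, Z)
residuals = x - Z @ beta_hat
print(beta_hat)
```

With small noise and $n = 200$, the estimates land close to the simulated coefficients, and the least-squares residuals are orthogonal to every input column.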

- Time domain modeling: the inputs often include lagged values of the same series, $x_{t-1}, x_{t-2}, \ldots, x_{t-p}$.

- Frequency domain modeling: the inputs include sine and co-