Mathematics for Informatics 4a

José Figueroa-O’Farrill
Lecture 14, 9 March 2012

José Figueroa-O’Farrill mi4a (Probability) Lecture 14 1 / 23

The story of the film so far...

$X, Y$ independent random variables and $Z = X + Y$: $f_Z = f_X \star f_Y$, where $\star$ is the convolution

$X, Y$ with joint density $f(x, y)$ and $Z = g(X, Y)$: $E(Z) = \iint g(x, y)\, f(x, y)\, dx\, dy$
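For discrete random variables the convolution has an analogue in which the integral becomes a sum, $p_Z(z) = \sum_x p_X(x)\, p_Y(z - x)$. The Python sketch below assumes probability mass functions stored as dicts from integer values to exact `Fraction` probabilities; `convolve` is a hypothetical helper name and the two-dice example is only an illustration.

```python
from fractions import Fraction

def convolve(p, q):
    """Return the pmf of X + Y for independent X ~ p and Y ~ q.

    p and q map integer values to probabilities; the result is the
    discrete convolution (p * q)(z) = sum over x of p(x) q(z - x).
    """
    r = {}
    for x, px in p.items():
        for y, qy in q.items():
            # each pair (x, y) contributes P(X = x) P(Y = y) to P(Z = x + y)
            r[x + y] = r.get(x + y, 0) + px * qy
    return r

# A fair six-sided die with exact rational probabilities.
die = {k: Fraction(1, 6) for k in range(1, 7)}
two_dice = convolve(die, die)
print(two_dice[7])  # 1/6
```

Summing two fair dice gives the familiar triangular distribution on 2 to 12; using `Fraction` keeps every probability exact.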

X, Y independent:

E(XY) = E(X)E(Y)

Var(X + Y) = Var(X) + Var(Y)

$M_{X+Y}(t) = M_X(t)\, M_Y(t)$, where $M_X(t) = E(e^{tX})$
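These identities can be checked exactly on a small discrete example. The sketch below assumes two independent fair dice (an illustrative choice), builds their joint pmf as the product of the marginals, and computes expectations as sums, the discrete counterpart of the double integral for $E(g(X, Y))$. The helper names `E` and `M` are hypothetical.

```python
import math
from fractions import Fraction
from itertools import product

# Independent fair dice: the joint pmf is the product of the marginals.
die = {k: Fraction(1, 6) for k in range(1, 7)}
joint = {(x, y): die[x] * die[y] for x, y in product(die, die)}

def E(g):
    """Expectation of g(X, Y): a sum over the joint pmf."""
    return sum(g(x, y) * p for (x, y), p in joint.items())

def M(g, t):
    """Moment generating function E(exp(t g(X, Y))), in floating point."""
    return sum(math.exp(t * g(x, y)) * float(p) for (x, y), p in joint.items())

EX, EY = E(lambda x, y: x), E(lambda x, y: y)
var_X = E(lambda x, y: (x - EX) ** 2)
var_Y = E(lambda x, y: (y - EY) ** 2)
var_sum = E(lambda x, y: (x + y - EX - EY) ** 2)

t = 0.3
print(E(lambda x, y: x * y) == EX * EY)  # True (exact arithmetic)
print(var_sum == var_X + var_Y)          # True (exact arithmetic)
print(math.isclose(M(lambda x, y: x + y, t),
                   M(lambda x, y: x, t) * M(lambda x, y: y, t)))  # True
```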

We defined the covariance and correlation of two r.v.s.

We proved Markov’s and Chebyshev’s inequalities.

We proved the (weak) law of large numbers and the Chernoff bound.

We saw that waiting times of Poisson processes are exponentially distributed.
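To see how loose Chebyshev’s inequality can be, the sketch below compares the exact tail probability $P(|X - \mu| \ge a)$ with the bound $\sigma^2/a^2$ for a binomial random variable with $p = 1/2$, whose mean is $n/2$ and variance is $n/4$. The choice $n = 100$, the values of $a$, and the helper names are assumptions made only for this illustration.

```python
from fractions import Fraction
from math import comb

def exact_tail(n, a):
    """P(|X - n/2| >= a) for X ~ Binomial(n, 1/2), computed exactly."""
    half = Fraction(n, 2)
    return sum(
        (Fraction(comb(n, k), 2 ** n) for k in range(n + 1) if abs(k - half) >= a),
        Fraction(0),
    )

def chebyshev_bound(n, a):
    """Chebyshev's bound sigma^2 / a^2 on P(|X - mu| >= a), with sigma^2 = n/4."""
    return Fraction(n, 4) / Fraction(a) ** 2

n = 100
for a in (5, 10, 15, 20):
    exact, bound = exact_tail(n, a), chebyshev_bound(n, a)
    assert exact <= bound  # Chebyshev's inequality must hold
    print(f"a = {a:2d}: exact {float(exact):.4f}  Chebyshev {float(bound):.4f}")
```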


More approximations

In Lecture 7 we saw that the binomial distribution with parameters n, p can be approximated by a Poisson distribution with parameter λ in the limit as n → ∞, p → 0 but np → λ:

$$\binom{n}{k} p^k (1 - p)^{n-k} \sim e^{-\lambda} \frac{\lambda^k}{k!}$$
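A quick numerical sketch of this limit: hold $np = \lambda$ fixed, let $n$ grow, and measure the largest pointwise gap between the two mass functions over $k = 0$ to $10$. The value $\lambda = 2$ and the cutoff $k \le 10$ are arbitrary choices for illustration; the gap should shrink as $n$ increases.

```python
from math import comb, exp, factorial

def binomial_pmf(n, p, k):
    """P(X = k) for X ~ Binomial(n, p)."""
    return comb(n, k) * p ** k * (1 - p) ** (n - k)

def poisson_pmf(lam, k):
    """P(Y = k) for Y ~ Poisson(lam)."""
    return exp(-lam) * lam ** k / factorial(k)

lam = 2.0
for n in (10, 100, 1000):
    p = lam / n  # np = lam stays fixed while n grows and p shrinks
    gap = max(abs(binomial_pmf(n, p, k) - poisson_pmf(lam, k)) for k in range(11))
    print(f"n = {n:4d}: max |binomial - Poisson| = {gap:.2e}")
```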

But what if n → ∞ while p does not tend to 0? For example, consider flipping a fair coin n times and let X denote the discrete random variable which counts the number of heads. Then

$$P(X = k) = \binom{n}{k} \frac{1}{2^n}$$

From Lectures 6 and 7, this distribution has mean $\mu = n/2$ and variance $\sigma^2 = n/4$.
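The stated mean and variance can be confirmed exactly with rational arithmetic for the values of n used in the plots. This is a sketch; `coin_pmf` and `mean_and_variance` are hypothetical helper names.

```python
from fractions import Fraction
from math import comb

def coin_pmf(n):
    """Exact pmf P(X = k) = C(n, k) / 2^n of the number of heads in n fair flips."""
    return {k: Fraction(comb(n, k), 2 ** n) for k in range(n + 1)}

def mean_and_variance(pmf):
    """Mean and variance of a discrete distribution given as {value: probability}."""
    mu = sum(k * p for k, p in pmf.items())
    var = sum((k - mu) ** 2 * p for k, p in pmf.items())
    return mu, var

for n in (5, 10, 20, 50):
    mu, var = mean_and_variance(coin_pmf(n))
    assert mu == Fraction(n, 2) and var == Fraction(n, 4)
    print(f"n = {n}: mu = {mu}, sigma^2 = {var}")
```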


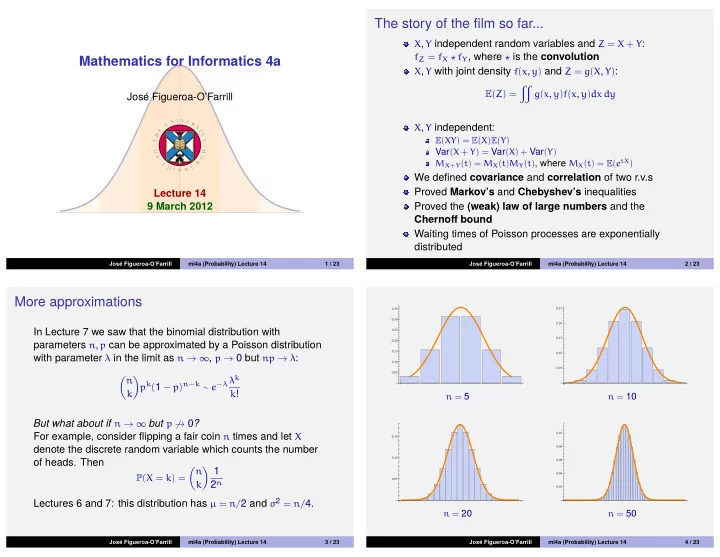

0.05 0.10 0.15 0.20 0.25 0.30 0.35

n = 5

0.05 0.10 0.15 0.20 0.25

n = 10

0.05 0.10 0.15

n = 20

0.02 0.04 0.06 0.08 0.10

n = 50
