9/20/19

The Quest for the Perfect Image Representation

Tinne Tuytelaars, KU Leuven, ECML-PKDD 2019

What’s wrong with current image representations?

- They’re 2D instead of 3D

- They’re pixel-focused instead of object-focused

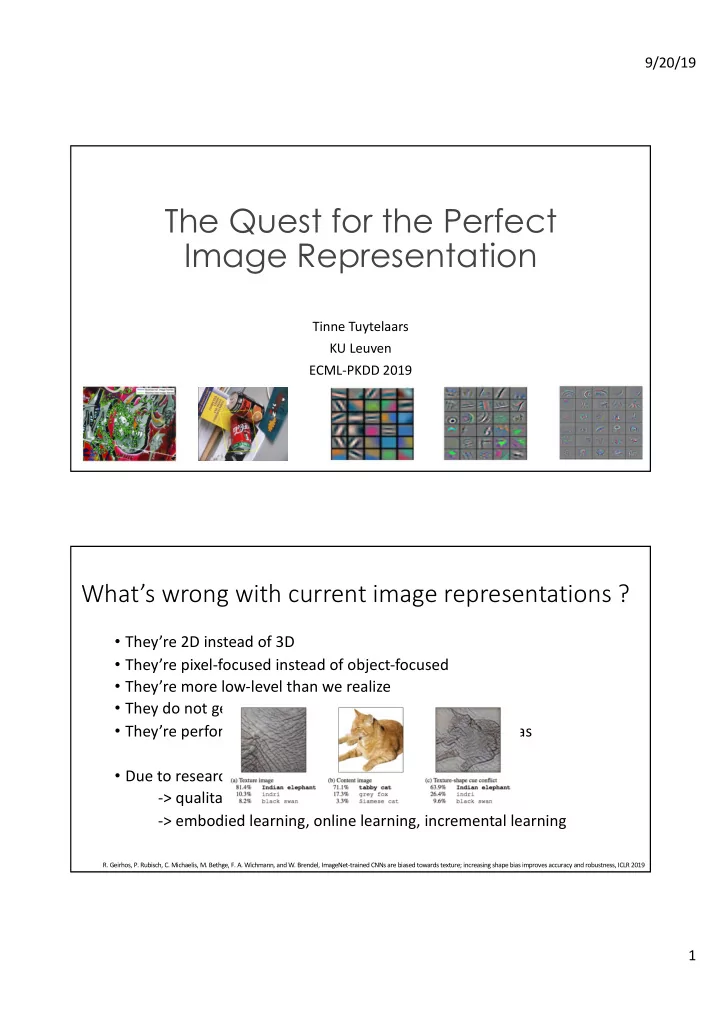

- They rely more on low-level cues (e.g. texture) than we realize

- They do not generalize well

- They perform well because they (over)exploit dataset bias

- Is this due to research being too dataset-focused?

  - → qualitative evaluation, network interpretation

  - → embodied learning, online learning, incremental learning

- R. Geirhos, P. Rubisch, C. Michaelis, M. Bethge, F. A. Wichmann, and W. Brendel, ImageNet-trained CNNs are biased towards texture; increasing shape bias improves accuracy and robustness, ICLR 2019
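Geirhos et al. quantify the texture bias with a shape-bias score on cue-conflict images, such as a cat shape rendered with elephant texture. The score is the fraction of shape decisions among the trials where the model picked either the shape class or the texture class. Below is a minimal Python sketch of that ratio. It assumes each trial is given as a hypothetical `(predicted, shape, texture)` label triple, and the function name `shape_bias` is illustrative, not taken from the paper's code.

```python
from typing import Iterable, Tuple


def shape_bias(trials: Iterable[Tuple[str, str, str]]) -> float:
    """Shape-bias score over cue-conflict trials.

    Each trial is a hypothetical (predicted_label, shape_label, texture_label)
    triple. Returns shape decisions / (shape decisions + texture decisions).
    """
    shape_hits = 0
    texture_hits = 0
    for predicted, shape, texture in trials:
        if shape == texture:
            # Shape and texture agree, so there is no cue conflict: skip it.
            continue
        if predicted == shape:
            shape_hits += 1
        elif predicted == texture:
            texture_hits += 1
        # Predictions matching neither cue are ignored by the score.
    decided = shape_hits + texture_hits
    if decided == 0:
        raise ValueError("no trial was decided by shape or texture")
    return shape_hits / decided


# Hypothetical trials: one shape decision, two texture decisions, one miss.
trials = [
    ("cat", "cat", "elephant"),
    ("elephant", "cat", "elephant"),
    ("elephant", "car", "elephant"),
    ("dog", "bottle", "clock"),
]
print(shape_bias(trials))
```

A score near 0 means the model mostly follows texture. The paper reports that ImageNet-trained CNNs sit well on that side, whereas human observers are strongly shape-biased.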