Liang Huang

The City University of New York (CUNY)

includes joint work with S. Phayong, Y. Guo, and K. Zhao

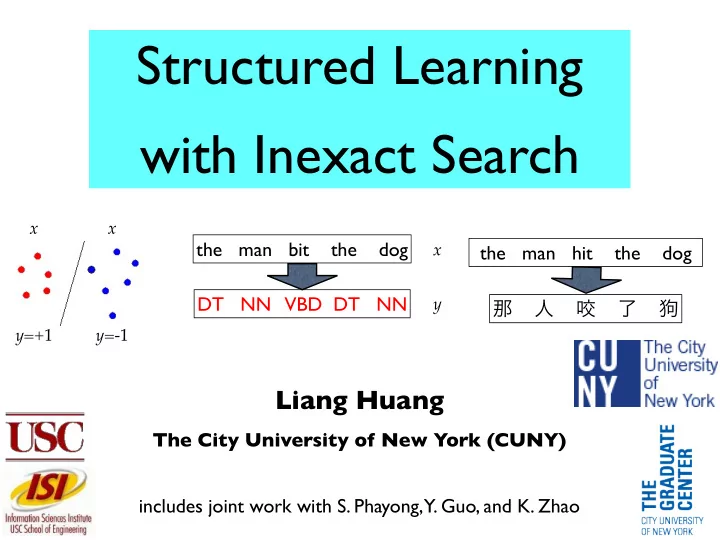

Structured Learning with Inexact Search

the man bit the dog DT NN VBD DT NN

x y x y=-1 y=+1 x

the man hit the dog 那 人 咬 了 狗


2

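structured classification: x = the man bit the dog, y = DT NN VBD DT NN; exact inference maps x to z under weights w; update weights if y ≠ z

binary classification: y = -1 or y = +1; exact inference maps x to z under weights w; update weights if y ≠ z

binary classification: constant # of classes, exact inference is trivial

structured classification: exponential # of classes, exact inference is hard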
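The perceptron loop with exact inference can be sketched in a few lines. This is only an illustrative toy, not the talk's implementation: the two-tag set, the feature templates, and the brute-force `exact_argmax` (which enumerates every tag sequence and is only feasible for tiny inputs) are all assumptions made for the example.

```python
from collections import Counter
from itertools import product

TAGS = ("N", "V")  # hypothetical toy tag set


def features(words, tags):
    """Count emission (word, tag) and transition (prev_tag, tag) features."""
    phi = Counter()
    prev = "<s>"
    for word, tag in zip(words, tags):
        phi[("emit", word, tag)] += 1
        phi[("trans", prev, tag)] += 1
        prev = tag
    return phi


def score(w, phi):
    """Dot product between the weight vector and a feature vector."""
    return sum(w.get(f, 0.0) * v for f, v in phi.items())


def exact_argmax(w, words):
    """Exact inference by enumerating all tag sequences (exponential)."""
    return max(product(TAGS, repeat=len(words)),
               key=lambda tags: score(w, features(words, tags)))


def train(data, epochs=10):
    """Structured perceptron: update weights whenever y != z."""
    w = Counter()
    for _ in range(epochs):
        mistakes = 0
        for words, gold in data:
            z = exact_argmax(w, words)
            if z != tuple(gold):
                w.update(features(words, gold))   # w += Phi(x, y)
                w.subtract(features(words, z))    # w -= Phi(x, z)
                mistakes += 1
        if mistakes == 0:
            break
    return w
```

With a single training pair such as `(("time", "flies"), ("N", "V"))`, the loop makes one update and then predicts the gold tags.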

3

x = the man bit the dog, y = DT NN VBD DT NN

inexact inference (beam search, greedy search) maps x to z under weights w; update weights if y ≠ z

does it still work???

Liang Huang (CUNY)

4

training: inexact inference maps x to z under weights w; update weights if y ≠ z

testing: inexact inference maps x to z under the same weights w

5

greedy search maps x to z under weights w; early update on prefixes y’, z’
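A minimal sketch of early update with greedy search, under assumed toy features: decoding follows the gold history and, at the first wrong tag, updates on the two prefixes and stops. The tag set, `local_features`, and the function name are hypothetical choices for illustration.

```python
from collections import Counter

TAGS = ("N", "V")  # hypothetical toy tag set


def local_features(words, i, prev_tag, tag):
    """Features fired when tagging position i after prev_tag."""
    return Counter({("emit", words[i], tag): 1, ("trans", prev_tag, tag): 1})


def score(w, phi):
    return sum(w.get(f, 0.0) * v for f, v in phi.items())


def greedy_early_update(w, words, gold):
    """Decode greedily; at the first mistake, update on prefixes and stop.

    Returns True if an update was made, False if the greedy output is correct.
    """
    prev = "<s>"
    for i, gold_tag in enumerate(gold):
        guess = max(TAGS,
                    key=lambda t: score(w, local_features(words, i, prev, t)))
        if guess != gold_tag:
            # Prefixes y' and z' agree before position i, so their feature
            # difference is just this step. The guess is the local argmax,
            # so it scores at least as high as the gold tag: a violation.
            w.update(local_features(words, i, prev, gold_tag))
            w.subtract(local_features(words, i, prev, guess))
            return True
        prev = gold_tag
    return False
```

Calling `greedy_early_update` repeatedly on the same example until it returns `False` gives a tiny training loop.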

6

7


8

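[Figure: convergence geometry for binary classification (y=+1 vs. y=-1) and structured classification (correct y₁₀₀ vs. incorrect z ≠ y₁₀₀ for input x₁₀₀, among inputs x₁₁₁, x₂₀₀₀, x₃₀₁₂); R: diameter, δ: margin]

Rosenblatt (1957) => Collins (2002)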

9

training example: x = time flies, y = N V

output space: {N, V} × {N, V}

standard perceptron converges with exact search

[Figure: candidate taggings V V, N N, V N, N V; the update takes the current model w(k) to w(k+1); the correct label is marked]

10

training example: x = time flies, y = N V

output space: {N, V} × {N, V}

standard perceptron does not converge with greedy search

[Figure: candidate taggings V V, N N, V N, N V; the update ∆Φ(x, y, z) takes the current model w(k) to the new model w(k+1); the correct label is marked]

11

training example: x = time flies, y = N V

output space: {N, V} × {N, V}

standard perceptron does not converge with greedy search

stop and update at the first mistake

[Figure: candidate taggings V V, N N, V N, N V and the prefix V N; each update ∆Φ(x, y, z) takes the current model w(k) to the new model w(k+1); the correct label is marked]

12

[Figure: candidate taggings V V, N N, V N, N V; the update ∆Φ(x, y, z) takes w(k) to w(k+1)]

13

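standard perceptron: exact inference maps x to z under w; update weights if y ≠ z

perceptron update: $w^{(k+1)} = w^{(k)} + \Delta\Phi(x, y, z)$, where $\Delta\Phi(x, y, z) = \Phi(x, y) - \Phi(x, z)$, y is the correct label, and z is the exact 1-best

δ separation: there is a unit oracle vector $u$ with margin $u \cdot \Delta\Phi(x, y, z) \ge \delta$

(by induction) starting from $w^{(1)} = 0$: $u \cdot w^{(k+1)} \ge k\delta$, so $\|w^{(k+1)}\| \ge k\delta$ (part 1: lower bound)

[Figure: the update ∆Φ(x, y, z) takes the current model w(k) to the new model w(k+1); angle marked <90˚]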

14

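standard perceptron: exact inference maps x to z under w; update weights if y ≠ z

violation: incorrect label scored higher, $w^{(k)} \cdot \Delta\Phi(x, y, z) \le 0$

perceptron update: $w^{(k+1)} = w^{(k)} + \Delta\Phi(x, y, z)$

R: max diameter, $\|\Delta\Phi(x, y, z)\|^2 \le R^2$

by induction: $\|w^{(k+1)}\|^2 \le k R^2$ (part 2: upper bound)

parts 1+2 => update bounds: $k^2 \delta^2 \le \|w^{(k+1)}\|^2 \le k R^2$, hence $k \le R^2/\delta^2$

[Figure: the update ∆Φ(x, y, z) takes the current model w(k) to the new model w(k+1); y is the correct label and z is the exact 1-best; angle marked <90˚]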
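The bound k ≤ R²/δ² can be checked numerically on a toy problem. The sketch below uses the binary perceptron, where ∆Φ reduces to y·x; the six data points and the oracle direction (1, 1) are made up for the example and are not from the talk.

```python
import math


def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))


def perceptron_updates(data, max_epochs=100):
    """Train a binary perceptron; return (weights, number of updates k)."""
    w = [0.0] * len(data[0][0])
    k = 0
    for _ in range(max_epochs):
        changed = False
        for x, y in data:
            if y * dot(w, x) <= 0:  # violation: the wrong side scores higher
                w = [wi + y * xi for wi, xi in zip(w, x)]
                k += 1
                changed = True
        if not changed:
            break
    return w, k


def mistake_bound(data, oracle):
    """Return R^2 / delta^2 for the margin achieved by the oracle direction."""
    norm = math.sqrt(dot(oracle, oracle))
    u = [ui / norm for ui in oracle]              # unit oracle vector
    delta = min(y * dot(u, x) for x, y in data)   # separation margin
    r_squared = max(dot(x, x) for x, _ in data)   # squared max diameter
    return r_squared / delta ** 2


# Hypothetical linearly separable toy data: (x, y) with y in {+1, -1}.
DATA = [((2.0, 1.0), 1), ((1.0, 3.0), 1), ((-1.0, -2.0), -1),
        ((-3.0, -1.0), -1), ((0.5, 1.0), 1), ((-1.0, -0.5), -1)]

w, k = perceptron_updates(DATA)
bound = mistake_bound(DATA, (1.0, 1.0))
```

Any separating direction can serve as the oracle; a direction with a larger margin gives a tighter bound.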

15

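the proof only uses 3 facts:

separation: margin ≥ δ along the unit oracle vector u

diameter: R is the max diameter

violation: incorrect label scored higher

the exact 1-best z is one violation among all violations

[Figure: the update ∆Φ(x, y, z) takes the current model w(k) to the new model w(k+1); y is the correct label]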

16

standard perceptron: exact inference maps x to z under w; update weights if y ≠ z

violation-fixing perceptron: find violation maps x to a pair (y’, z) under w; update weights if y’ ≠ z

same mistake bound as before!

[Figure: all violations as a subset of all possible updates]

an update outside the set of violations is a bad update because it doesn’t fix any mistake
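A minimal sketch of a violation-fixing perceptron: the training loop only needs some procedure that returns a violating pair, not the exact 1-best. Everything here is a toy assumption: the label set, the indicator features, and `find_any_violation`, which returns the first incorrect label scoring at least as high as the gold one. In this unstructured toy, y’ is simply y.

```python
from collections import Counter

LABELS = ("N", "V", "D")  # hypothetical toy label set


def features(x, label):
    """One indicator feature per (input, label) pair."""
    return Counter({(x, label): 1})


def score(w, phi):
    return sum(w.get(f, 0.0) * v for f, v in phi.items())


def find_any_violation(w, x, y):
    """Return (y, z) for the first incorrect z scoring >= y, else None."""
    gold_score = score(w, features(x, y))
    for z in LABELS:
        if z != y and score(w, features(x, z)) >= gold_score:
            return y, z
    return None


def violation_fixing_perceptron(data, find_violation, epochs=10):
    """Update on whatever violation the finder returns, until none is left."""
    w = Counter()
    for _ in range(epochs):
        updates = 0
        for x, y in data:
            pair = find_violation(w, x, y)
            if pair is None:
                continue
            y_prime, z = pair
            w.update(features(x, y_prime))  # w += Phi(x, y')
            w.subtract(features(x, z))      # w -= Phi(x, z)
            updates += 1
        if updates == 0:
            break
    return w
```

Swapping in a different finder (exact search, greedy search with early update, beam search) changes which violation is fixed but not the shape of the loop.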

17

beam

bad update; current model

18

training example: x = time flies, y = N V

output space: {N, V} × {N, V}

standard update doesn’t converge b/c it doesn’t guarantee violation

correct label scores higher: a non-violation, hence a bad update!

[Figure: candidate taggings V V, N N, V N, N V; the update ∆Φ(x, y, z) takes w(k) to w(k+1); the correct label is marked]

19

training example: x = time flies, y = N V

output space: {N, V} × {N, V}

standard update doesn’t converge b/c it doesn’t guarantee violation

early update: incorrect prefix scores higher: a violation!

[Figure: candidate taggings V V, N N, V N, N V and the prefix V N; each update ∆Φ(x, y, z) takes w(k) to w(k+1); the correct label is marked]

20

early update: when the correct label falls off the beam (pruned), update on the correct and incorrect prefixes; violation guaranteed: incorrect prefix scores higher up to this point

standard update (no guarantee!)

21

beam

correct label falls off beam (pruned)

standard update (bad!)

y

update weights if y’ ≠ z

w

x z

find violation

y’

prefix violations

y’ z y

also new definition of “beam separability”: a correct prefix should score higher than any incorrect prefix

(maybe too strong)

early update

22

beam

update methods compared: early, standard (bad!), max-violation, latest
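One way to read the four update methods is as different choices of the step at which the gold prefix and the best prefix in the beam are compared. The sketch below is an assumption-laden toy (two tags, made-up features, hypothetical function names), not the released parser: `choose_update` returns the pair of prefixes to update on, and the caller would then add Φ of the gold prefix and subtract Φ of the predicted prefix.

```python
from collections import Counter

TAGS = ("N", "V")  # hypothetical toy tag set


def prefix_features(words, tags):
    """Emission and transition counts for a (possibly partial) tagging."""
    phi, prev = Counter(), "<s>"
    for word, tag in zip(words, tags):
        phi[("emit", word, tag)] += 1
        phi[("trans", prev, tag)] += 1
        prev = tag
    return phi


def score(w, phi):
    return sum(w.get(f, 0.0) * v for f, v in phi.items())


def beam_trace(w, words, gold, beam_size):
    """Per step: (best prefix in beam, whether the gold prefix survived)."""
    beam, trace = [()], []
    for i in range(len(words)):
        candidates = [p + (t,) for p in beam for t in TAGS]
        candidates.sort(key=lambda p: score(w, prefix_features(words, p)),
                        reverse=True)
        beam = candidates[:beam_size]
        trace.append((beam[0], gold[:i + 1] in beam))
    return trace


def choose_update(w, words, gold, beam_size, strategy):
    """Return (gold prefix, predicted prefix) to update on, or None."""
    gold = tuple(gold)
    trace = beam_trace(w, words, gold, beam_size)
    n = len(words)
    if trace[-1][0] == gold:
        return None  # final prediction is correct

    def gap(i):  # best-in-beam score minus gold-prefix score at step i
        return (score(w, prefix_features(words, trace[i][0]))
                - score(w, prefix_features(words, gold[:i + 1])))

    # Steps where an incorrect prefix scores at least as high as the gold one.
    violations = [i for i in range(n)
                  if trace[i][0] != gold[:i + 1] and gap(i) >= 0]
    if strategy == "standard":
        i = n - 1                      # full sequence; may be a non-violation
    elif strategy == "early":
        i = next((j for j in range(n) if not trace[j][1]), n - 1)
    elif strategy == "max-violation":
        i = max(violations, key=gap)   # largest score gap
    elif strategy == "latest":
        i = violations[-1]             # last step that is still a violation
    else:
        raise ValueError(strategy)
    return gold[:i + 1], trace[i][0]
```

With weights that push the gold tag off a size-1 beam at the first word, `"early"` and `"max-violation"` select the one-word prefixes, while `"standard"` still selects the full sequences even when the gold sequence scores higher.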

trigram part-of-speech tagging: x = the man bit the dog, y = DT NN VBD DT NN; local features only, exact search tractable (proof of concept)

incremental dependency parsing: x = the man bit the dog, y = a dependency tree over the same words; non-local features, exact search intractable (real impact)

24

% of bad (non-violation) standard updates

53% 10% 1.5% 0.5%

25

beam iter time test

standard early

max-violation

4 6 37m 97.27 2 3 26m 97.27

Shen et al. (2007)

97.33

26

12 iterations, 5.5 hours (92.32)

27

28

test: standard 79.1, early 92.1, max-violation 92.2

% of bad standard updates

take-home message:

for harder search problems!

29

30

% of bad updates in standard perceptron

liang.huang.sh@gmail.com

parsing accuracy

my parser with max-violation update is available at:

http://acl.cs.qc.edu/~lhuang/#software

(K. Zhao and L. Huang, NAACL 2013)


because

times to converge

learning (e.g. CRF)

33


each minibatch

update after each minibatch

if minibatch size is the whole set
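A rough sketch of updating once per minibatch, shown for the binary perceptron to keep it short. This is an assumption about the general idea only, not the method from the cited work: violations inside a minibatch are all found with the same weights, their updates are summed, and the sum is applied once. Setting `batch_size` to the size of the whole set gives one update per pass. The toy data is made up.

```python
def dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))


def minibatch_perceptron(data, batch_size, epochs=50):
    """Binary perceptron that applies one summed update per minibatch."""
    w = [0.0] * len(data[0][0])
    for _ in range(epochs):
        updated = False
        for start in range(0, len(data), batch_size):
            batch = data[start:start + batch_size]
            delta = [0.0] * len(w)
            for x, y in batch:
                if y * dot(w, x) <= 0:  # violation under the current weights
                    delta = [d + y * xi for d, xi in zip(delta, x)]
                    updated = True
            # Update after each minibatch, not after each example.
            w = [wi + d for wi, d in zip(w, delta)]
        if not updated:
            break
    return w


# Hypothetical linearly separable toy data: (x, y) with y in {+1, -1}.
TOY = [((2.0, 1.0), 1), ((1.0, 3.0), 1), ((-1.0, -2.0), -1),
       ((-3.0, -1.0), -1), ((0.5, 1.0), 1), ((-1.0, -0.5), -1)]
```

Because every example in a minibatch is decoded with the same weights, the per-example work inside a minibatch could be done in parallel.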

34


35

4x constraints in each update
