SLIDE 4

[Figure: one panel per cluster (1 to 9), each labelled with its separation value; all panels share the variable axis listed below.]

Separation by cluster: 1 = 2.02, 2 = 2.67, 3 = 3.81, 4 = 2.46, 5 = 2.55, 6 = 2.36, 7 = 2.02, 8 = 2.11, 9 = 2.4

Variable axis: Ag Al As B Ba Be Bi Ca Cd Co Cr Cu Fe Hg K La Mg Mn Mo Na Ni P Pb Rb S Sb Sc Si Sr Th Tl U V Y Zn C H N LO pH Co

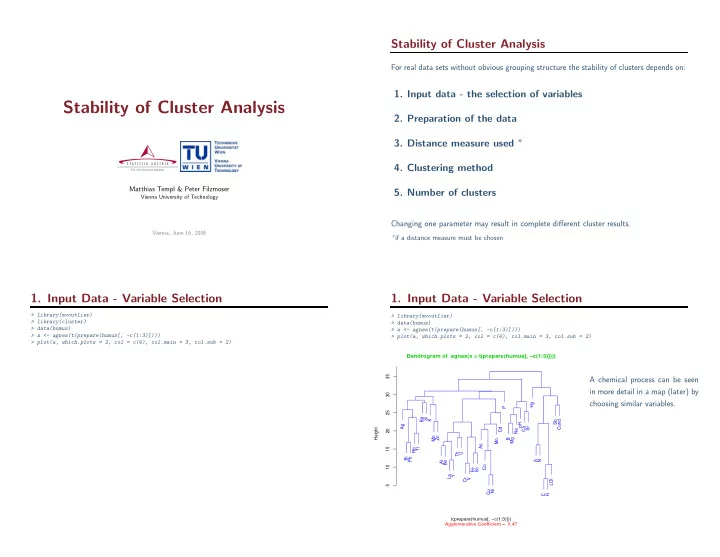

Pollution (cluster 9)

- Cluster 9 captures the highest pollution.

- This can be seen in the graphic on the right, e.g. in Co, Cu and Ni, elements that typically reflect pollution.

Seaspray (cluster 5)

- Mclust on scaled and transformed humus data

- Validity measure computed for each cluster

- Cluster size

- Each cluster visualised in its own map (greyscale depends on the validity measure in each cluster)
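The two steps named above, transforming and scaling the data and then computing a validity measure and size for each cluster, can be sketched in a few lines. This is only an illustration under stated assumptions: the slide does not say which transformation was used (a log transform is assumed here), the clustering itself (Mclust, an R package) is not reproduced, and the measure in `cluster_validity` is a hypothetical stand-in, not the definition behind the "separation" values in the figure. The function names `log_scale` and `cluster_validity` are made up for this sketch.

```python
import math


def log_scale(columns):
    """Log-transform each variable, then standardise it to mean 0 and sd 1.

    `columns` is a list of variables, each a list of positive values.
    The log transform is an assumption; the slide only says "transformed".
    """
    scaled = []
    for col in columns:
        logged = [math.log(v) for v in col]
        mean = sum(logged) / len(logged)
        var = sum((v - mean) ** 2 for v in logged) / (len(logged) - 1)
        sd = math.sqrt(var)
        scaled.append([(v - mean) / sd for v in logged])
    return scaled


def centroid(rows):
    """Coordinate-wise mean of a list of observations."""
    n = len(rows)
    return [sum(r[j] for r in rows) / n for j in range(len(rows[0]))]


def cluster_validity(rows, labels):
    """Return size and a simple validity score for every cluster.

    Hypothetical score: distance from the cluster centre to the nearest
    other centre, divided by the mean distance of the cluster's members
    to their own centre. Larger values mean a better separated cluster.
    Requires at least two clusters.
    """
    groups = {}
    for row, lab in zip(rows, labels):
        groups.setdefault(lab, []).append(row)
    centres = {lab: centroid(members) for lab, members in groups.items()}

    result = {}
    for lab, members in groups.items():
        within = sum(math.dist(r, centres[lab]) for r in members) / len(members)
        nearest = min(
            math.dist(centres[lab], c)
            for other, c in centres.items()
            if other != lab
        )
        score = nearest / within if within > 0 else math.inf
        result[lab] = {"size": len(members), "validity": score}
    return result
```

In a map display like the one described above, the per-cluster score could then drive the greyscale of each cluster's map, with the labels coming from whatever clustering method was run on the scaled data.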

Conclusions

- Applying cluster analysis to real data gives highly unstable results, for many reasons.

- Selecting the variables and the optimal number of clusters for real data is a non-trivial task.

- Cluster analysis can be seen as exploratory data analysis: a way to get ideas about your data.

- Interactive tools that allow various methods to be tried are very helpful.
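One way to put a number on the instability mentioned in the first conclusion is to compare the labels from two clustering runs (different methods, variable selections, or starting values) with the Rand index, the share of observation pairs on which both runs agree. This is a minimal sketch, not something the slides prescribe; the function name `rand_index` is chosen here for illustration.

```python
from itertools import combinations


def rand_index(labels_a, labels_b):
    """Fraction of observation pairs treated the same way by both clusterings.

    A pair counts as an agreement if both clusterings put the two
    observations in the same cluster, or both put them in different
    clusters. The result is 1.0 for identical partitions, regardless
    of how the clusters are numbered.
    """
    if len(labels_a) != len(labels_b):
        raise ValueError("label lists must have the same length")
    if len(labels_a) < 2:
        raise ValueError("need at least two observations")

    agree = 0
    total = 0
    for i, j in combinations(range(len(labels_a)), 2):
        same_a = labels_a[i] == labels_a[j]
        same_b = labels_b[i] == labels_b[j]
        if same_a == same_b:
            agree += 1
        total += 1
    return agree / total
```

Values well below 1 across repeated runs indicate that the cluster solution depends strongly on the choices made, which is the situation the conclusions warn about.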