Solution 2: TLBs

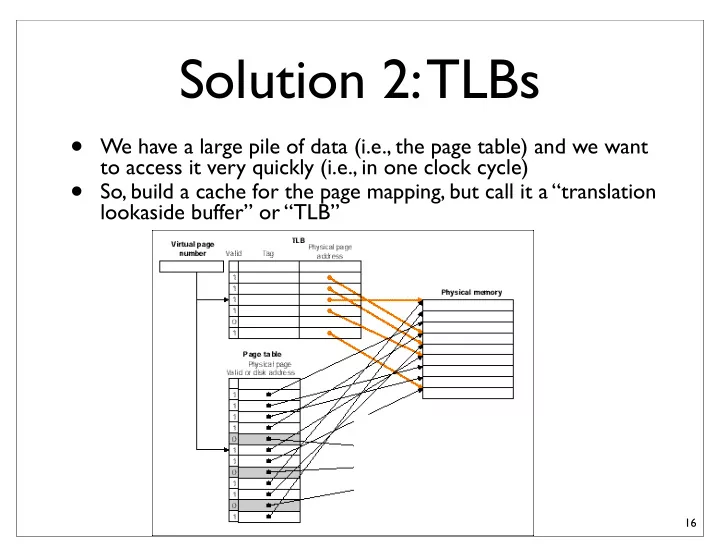

- We have a large pile of data (i.e., the page table) and we want to access it very quickly (i.e., in one clock cycle)

- So, build a cache for the page mapping, but call it a “translation lookaside buffer” or “TLB”
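
As a rough illustration of the "cache for the page mapping" idea, here is a minimal software sketch. It is not how hardware implements a TLB; the 4 KiB page size, the fully associative LRU organization, and the names `TLB`, `translate`, and `capacity` are all assumptions made for this example.

```python
from collections import OrderedDict

PAGE_SIZE = 4096  # assumption: 4 KiB pages


class TLB:
    """Toy fully associative TLB with LRU replacement (illustrative only)."""

    def __init__(self, page_table, capacity=4):
        self.page_table = page_table   # dict: virtual page number -> physical page number
        self.capacity = capacity       # number of cached translations
        self.entries = OrderedDict()   # cached VPN -> PPN, least recently used first
        self.hits = 0
        self.misses = 0

    def translate(self, vaddr):
        vpn, offset = divmod(vaddr, PAGE_SIZE)
        if vpn in self.entries:
            self.hits += 1
            self.entries.move_to_end(vpn)              # mark as most recently used
        else:
            self.misses += 1
            # Slow path: consult the full page table.
            # A KeyError here stands in for a page fault.
            self.entries[vpn] = self.page_table[vpn]
            if len(self.entries) > self.capacity:
                self.entries.popitem(last=False)       # evict the least recently used entry
        return self.entries[vpn] * PAGE_SIZE + offset


tlb = TLB({0: 7, 1: 3}, capacity=1)
print(hex(tlb.translate(0x0010)))  # miss: goes to the page table
print(hex(tlb.translate(0x0020)))  # hit: same page, served from the TLB
print(tlb.hits, tlb.misses)
```

The point of the sketch is only that repeated accesses to the same page skip the slow page-table lookup; real TLBs do this in hardware, in parallel across all entries.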

walkers” -- specialized state machines that can load page table entries into the TLB without OS intervention

big-A architecture.

its own format.

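[Figure: two cache organizations. Physical cache: CPU -> (VA) -> TLB -> (PA) -> Physical Cache -> Primary Memory. Virtual cache: CPU -> (VA) -> Virtual Cache -> TLB -> (PA) -> Primary Memory.]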

maps to a different physical address.

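[Figure: two snapshots of a virtually addressed cache next to the page table. The page table maps both 0x1000 and 0x2000 to 0xfff0000. In the first snapshot the cache holds data A at both 0x1000 and 0x2000; in the second, 0x1000 holds B while 0x2000 still holds A.]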

write to the copy, and do the actual copy lazily.

[Figure: lazy copying for memcpy(A, B, 100000). Before: in the virtual address space, char * A points to “My Big Data” and char * B points to “My Empty Buffer”; the physical address space holds “My Big Data”. After: char * B is an un-writeable copy backed by the same physical “My Big Data” as char * A.]

Two virtual addresses pointing to the same physical address

VA1 and VA2 are aliases,

VA2 mod (cache size)
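
To make the "mod (cache size)" comparison concrete, here is a small sketch. It assumes a direct-mapped, virtually indexed cache in which an address's position is simply the address modulo the cache size. The function names and the example addresses are hypothetical.

```python
def cache_slot(vaddr, cache_size):
    """Position of an address in a direct-mapped, virtually indexed cache."""
    return vaddr % cache_size


def aliases_can_diverge(va1, va2, cache_size):
    """True if two aliases land in different slots, so two copies of the
    same physical data can sit in the cache at once and drift apart."""
    return cache_slot(va1, cache_size) != cache_slot(va2, cache_size)


# Hypothetical aliases: both virtual addresses map to the same physical page.
va1, va2 = 0x1000, 0x2000
print(aliases_can_diverge(va1, va2, 16 * 1024))  # 16 KiB cache: different slots
print(aliases_can_diverge(va1, va2, 4 * 1024))   # 4 KiB cache: same slot
```

When the two aliases fall in the same slot, only one copy can be cached at a time, so a write through one alias cannot leave a stale copy under the other.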

Solution (4): Virtually indexed physically tagged

- Index L is available without consulting the TLB ⇒ cache and TLB accesses can begin simultaneously

- Critical path = max(cache time, TLB time)

- Tag comparison is made after both accesses are completed

- Works if Cache Size ≤ Page Size (C ≤ P), because then none of the cache inputs need to be translated (i.e., the index bits in the physical and virtual addresses are the same)

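[Figure: the VA is split into a VPN and a page offset of P bits. The low C bits of the VA are the “virtual index” (L = C-b bits) and the block offset (b bits). The index selects a line in a direct-mapped cache of size 2^C = 2^(L+b) while the TLB translates the VPN into a PPN. The PPN from the TLB is then compared with the physical tag from the cache to produce hit? and the data.]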

key idea: page offset bits are not translated and thus can be presented to the cache immediately
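
The condition can be checked mechanically. The sketch below assumes power-of-two sizes; the function names are hypothetical. The `ways` parameter is an extension beyond the direct-mapped case discussed here: for a set-associative cache the relevant quantity is the size of one way (cache size divided by associativity).

```python
def log2_exact(n):
    """log2 of a power of two; raises ValueError otherwise."""
    if n <= 0 or n & (n - 1):
        raise ValueError(f"{n} is not a power of two")
    return n.bit_length() - 1


def index_needs_no_translation(cache_size, page_size, ways=1):
    """True if index bits + block offset bits fit inside the page offset (C <= P)."""
    c = log2_exact(cache_size // ways)  # bits needed to address one way
    p = log2_exact(page_size)           # page offset bits
    return c <= p


def virtual_index(vaddr, cache_size, block_size):
    """Cache line index taken directly from the low bits of the virtual address."""
    return (vaddr % cache_size) // block_size


print(index_needs_no_translation(4096, 4096))            # C == P: fine
print(index_needs_no_translation(32768, 4096))           # direct-mapped 32 KiB: index spills into the VPN
print(index_needs_no_translation(32768, 4096, ways=8))   # each way is 4 KiB: fine again

# Two addresses with different VPNs but the same page offset pick the same line.
print(virtual_index(0x12345, 4096, 64), virtual_index(0xABC345, 4096, 64))
```

When the check passes, the index depends only on page offset bits, so it is identical whether computed from the virtual or the physical address.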

[Figure: multiple 1GB virtual address spaces, each with its own stack and heap, mapped onto 8GB of (physical) memory that also has a stack and heap.]

there is in a system

– Allow many programs to be “running” or at least “ready to run” at

– Absorb memory leaks (sometimes... if you are programming in C or C++)

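[Figure: two-level page table walk. The virtual address (held in a processor register) is split into p1 (bits 31-22, the 10-bit L1 index), p2 (bits 21-12, the 10-bit L2 index), and the offset (bits 11-0). The root of the current page table points to the Level 1 page table; p1 selects an entry that points to a Level 2 page table; p2 selects the PTE for a data page. A PTE may refer to a page in primary memory, a page on disk, or a nonexistent page.]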

Adapted from Arvind and Krste’s MIT Course 6.823 Fall 05
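
The address split used by the two-level walk (10-bit L1 index, 10-bit L2 index, 12-bit offset) can be sketched as follows. Representing the page tables as nested dictionaries and the names `split_va` and `walk` are assumptions for illustration; a missing entry stands in for a page that is nonexistent or not resident.

```python
def split_va(va):
    """Split a 32-bit virtual address into (p1, p2, offset)."""
    offset = va & 0xFFF        # bits 11-0
    p2 = (va >> 12) & 0x3FF    # bits 21-12: index into a Level 2 table
    p1 = (va >> 22) & 0x3FF    # bits 31-22: index into the Level 1 table
    return p1, p2, offset


def walk(root, va):
    """Return the physical address, or None if no translation exists."""
    p1, p2, offset = split_va(va)
    level2 = root.get(p1)      # Level 1 entry -> Level 2 table
    if level2 is None:
        return None
    ppn = level2.get(p2)       # Level 2 entry -> physical page number
    if ppn is None:
        return None
    return (ppn << 12) | offset


root = {1: {3: 0x2A}}                 # hypothetical mapping: (p1=1, p2=3) -> PPN 0x2A
print(split_va(0x00403004))           # (1, 3, 4)
print(hex(walk(root, 0x00403004)))    # 0x2a004
print(walk(root, 0x00000000))         # None
```

Only the Level 2 tables that are actually in use need to exist, which is why the hierarchical layout takes less space than one flat table covering the whole address space.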

apps

disk

data in physical ram.
