
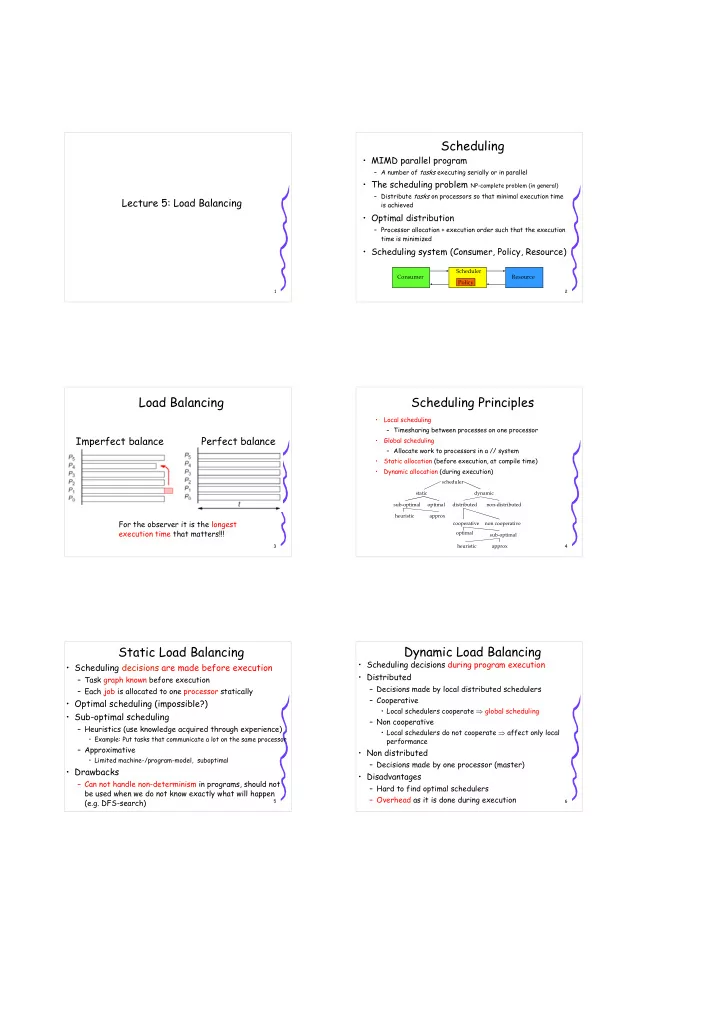

Lecture 5: Load Balancing


Scheduling

- MIMD parallel program

– A number of tasks executing serially or in parallel

- The scheduling problem is NP-complete (in general)

– Distribute tasks on processors so that minimal execution time is achieved

- Optimal distribution

– Processor allocation + execution order such that the execution time is minimized

- Scheduling system (Consumer, Policy, Resource)

(Figure: the scheduling system, with Consumer, Scheduler, Policy and Resource)
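To see why the general problem is hard, here is a minimal Python sketch of finding an optimal distribution by exhaustive search. It assumes independent tasks with known execution times, no precedence constraints and no communication cost, so only the processor allocation matters; the function name `optimal_makespan` is hypothetical. With n tasks and p processors it tries all p**n allocations, which is feasible only for tiny inputs.

```python
from itertools import product

def optimal_makespan(durations, num_procs):
    """Exhaustive search over every task-to-processor allocation.

    Assumes independent tasks (no precedence, no communication), so the
    execution time of an allocation is simply the largest processor load.
    """
    best_time, best_assign = float("inf"), None
    # product() enumerates all num_procs ** len(durations) allocations.
    for assign in product(range(num_procs), repeat=len(durations)):
        loads = [0] * num_procs
        for d, p in zip(durations, assign):
            loads[p] += d
        if max(loads) < best_time:          # keep the first optimum found
            best_time, best_assign = max(loads), assign
    return best_time, best_assign

print(optimal_makespan([3, 3, 2, 2, 2], 2))  # (6, (0, 0, 1, 1, 1))
```

Here the optimum puts the two long tasks on one processor and the three short ones on the other; adding a single task doubles the search space for two processors.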


Load Balancing

(Figure: imperfect balance vs. perfect balance)

For the observer, it is the longest execution time that matters: the program is finished only when the most heavily loaded processor is finished!
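The observer's view can be quantified. A minimal sketch, assuming each processor's busy time is known: the execution time (makespan) is the maximum busy time, and one common imbalance measure, used here as an illustrative choice, is the maximum divided by the mean, which equals 1.0 for perfect balance. The helper names are hypothetical.

```python
def makespan(busy_times):
    """Parallel execution time: the program ends when the slowest processor ends."""
    return max(busy_times)

def imbalance_factor(busy_times):
    """Max/mean ratio: 1.0 means perfect balance; larger means idle processors."""
    mean = sum(busy_times) / len(busy_times)
    return max(busy_times) / mean

perfect = [4, 4, 4, 4]
imperfect = [6, 2, 4, 4]   # same total work (16) as `perfect`
print(makespan(perfect), imbalance_factor(perfect))      # 4 1.0
print(makespan(imperfect), imbalance_factor(imperfect))  # 6 1.5
```

Both distributions contain the same 16 units of work, yet the imperfect one takes 6 time units instead of 4.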


Scheduling Principles

- Local scheduling

– Timesharing between processes on one processor

- Global scheduling

– Allocate work to processors in a parallel system

- Static allocation (before execution, at compile time)

- Dynamic allocation (during execution)

(Figure: taxonomy of schedulers. Static: optimal or sub-optimal (approximate, heuristic). Dynamic: distributed (cooperative or non-cooperative) or non-distributed; cooperative: optimal or sub-optimal (approximate, heuristic).)
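A small simulation can contrast static and dynamic allocation. This Python sketch assumes independent tasks whose durations only become known at run time: the static variant fixes contiguous blocks of tasks per processor before execution, while the dynamic variant lets whichever processor becomes free first take the next task (a simple self-scheduling policy chosen for illustration). Function names are hypothetical, and communication and scheduling overheads are ignored.

```python
import heapq

def static_block_makespan(durations, num_procs):
    """Static: contiguous blocks of tasks fixed before execution.
    Assumes at least one task."""
    chunk = -(-len(durations) // num_procs)   # ceiling division
    return max(sum(durations[i:i + chunk])
               for i in range(0, len(durations), chunk))

def dynamic_makespan(durations, num_procs):
    """Dynamic: the processor that becomes free first takes the next task."""
    free_at = [(0, p) for p in range(num_procs)]   # (time it becomes free, processor id)
    heapq.heapify(free_at)
    finish = 0
    for d in durations:
        t, p = heapq.heappop(free_at)
        heapq.heappush(free_at, (t + d, p))
        finish = max(finish, t + d)
    return finish

tasks = [5, 5, 1, 1]   # uneven durations, not known in advance
print(static_block_makespan(tasks, 2))  # 10
print(dynamic_makespan(tasks, 2))       # 6
```

The dynamic policy adapts to the uneven durations; the price is that decisions are taken during execution, which a real system has to pay for.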

Static Load Balancing

- Scheduling decisions are made before execution

– Task graph known before execution

– Each job is allocated to one processor statically

- Optimal scheduling (impossible?)

- Sub-optimal scheduling

– Heuristics (use knowledge acquired through experience)

- Example: Put tasks that communicate a lot on the same processor

– Approximate

- Limited machine/program model, sub-optimal

- Drawbacks

– Cannot handle non-determinism in programs; should not be used when we do not know exactly what will happen (e.g. DFS search)
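The heuristic example (put tasks that communicate a lot on the same processor) can be sketched as a greedy clustering pass done entirely before execution. This is an illustrative heuristic, not a specific published algorithm: it assumes known task loads, known pairwise communication volumes, and a per-processor load cap (`capacity`); all names are hypothetical. Like any sub-optimal method, it can miss the best mapping.

```python
import heapq

def comm_aware_mapping(loads, edges, num_procs, capacity):
    """Greedy static mapping: merge task pairs by descending communication
    volume while the merged load stays within `capacity`, then place each
    cluster on the currently least-loaded processor."""
    parent = {t: t for t in loads}       # union-find forest over tasks
    cluster_load = dict(loads)           # only meaningful for cluster roots

    def find(t):
        while parent[t] != t:
            parent[t] = parent[parent[t]]  # path halving
            t = parent[t]
        return t

    # Heaviest communication first: co-locating these saves the most traffic.
    for a, b, _volume in sorted(edges, key=lambda e: e[2], reverse=True):
        ra, rb = find(a), find(b)
        if ra != rb and cluster_load[ra] + cluster_load[rb] <= capacity:
            parent[rb] = ra
            cluster_load[ra] += cluster_load[rb]

    clusters = {}
    for t in loads:
        clusters.setdefault(find(t), []).append(t)

    # Place the largest clusters first, each on the least-loaded processor.
    procs = [(0, p) for p in range(num_procs)]
    heapq.heapify(procs)
    mapping = {}
    for root in sorted(clusters, key=lambda r: cluster_load[r], reverse=True):
        load, p = heapq.heappop(procs)
        for t in clusters[root]:
            mapping[t] = p
        heapq.heappush(procs, (load + cluster_load[root], p))
    return mapping

loads = {"A": 1, "B": 1, "C": 1, "D": 1}
edges = [("A", "B", 10), ("C", "D", 8), ("A", "C", 1)]
print(comm_aware_mapping(loads, edges, num_procs=2, capacity=2))
# {'A': 0, 'B': 0, 'C': 1, 'D': 1}
```

The heavy A-B and C-D traffic stays local, and only the light A-C edge crosses processors. Because the mapping is fixed before execution, it cannot react if the actual loads differ from the estimates.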


Dynamic Load Balancing

- Scheduling decisions are made during program execution

- Distributed

– Decisions made by local distributed schedulers

– Cooperative

- Local schedulers cooperate ⇒ global scheduling

– Non-cooperative

- Local schedulers do not cooperate ⇒ affect only local performance

- Non-distributed

– Decisions made by one processor (master)

- Disadvantages