Laboratory for Perceptual Robotics – Department of Computer Science

Rod Grupen, Department of Computer Science, University of Massachusetts Amherst

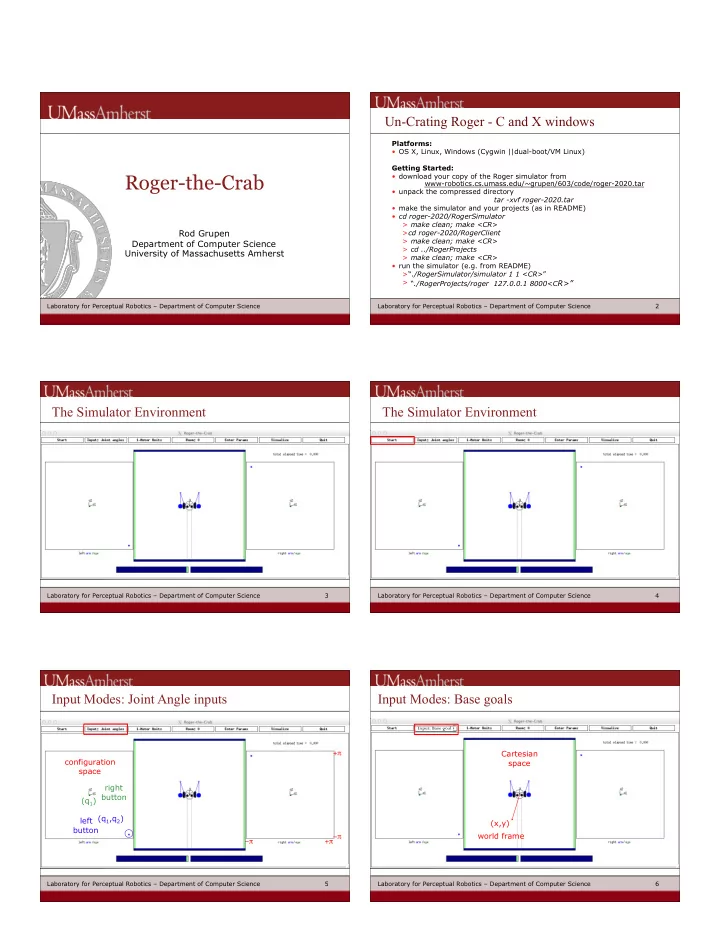

Roger-the-Crab


Un-Crating Roger - C and X windows

Platforms:

- OS X, Linux, Windows (Cygwin, or Linux via dual-boot or a VM)

Getting Started:

- download your copy of the Roger simulator from

www-robotics.cs.umass.edu/~grupen/603/code/roger-2020.tar

- unpack the compressed directory

tar -xvf roger-2020.tar

- make the simulator and your projects (as in README)

> cd roger-2020/RogerSimulator

> make clean; make <CR>

> cd ../RogerClient

> make clean; make <CR>

> cd ../RogerProjects

> make clean; make <CR>

- run the simulator (e.g. from README)

> ./RogerSimulator/simulator 1 1 <CR>

> ./RogerProjects/roger 127.0.0.1 8000 <CR>


The Simulator Environment




[Figure: configuration space with axes q1 and q2, each ranging from −π to +π; labels: left button (q1, q2), right button (q1)]

Input Modes: Joint Angle inputs


Input Modes: Base goals

Cartesian space (x,y) world frame

Input: Base goal
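As an illustration of the base-goal input mode, the sketch below converts a click in the Cartesian window to an (x, y) goal in the world frame by linear interpolation. It is only an assumption about how such a mapping could work: the `WorldBounds` type, the `pixel_to_world` function, and the example world extents are hypothetical and do not come from the Roger code.

```c
#include <assert.h>

/* Hypothetical description of the world-frame region shown in the window. */
typedef struct {
    double x_min, x_max;
    double y_min, y_max;
} WorldBounds;

/* Hypothetical helper: map a pixel (px, py) in a width x height window to
 * world-frame coordinates (x, y). Pixel rows are assumed to grow downward,
 * so the y axis is flipped to make world y grow upward. */
void pixel_to_world(int px, int py, int width, int height,
                    WorldBounds b, double *x, double *y)
{
    assert(width > 1 && height > 1);
    assert(b.x_max > b.x_min && b.y_max > b.y_min);

    *x = b.x_min + (b.x_max - b.x_min) * (double)px / (double)(width - 1);
    *y = b.y_max - (b.y_max - b.y_min) * (double)py / (double)(height - 1);
}
```

For example, with assumed bounds of x from −4 to 4 and y from −2 to 2 in an 801 x 401 window, the top-left pixel maps to (−4, 2) and the center pixel (400, 200) maps to the world origin.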