
Question Answering (continued) Lecture 22: November 22, 2013

CS886‐2 Natural Language Understanding University of Waterloo

CS886 Lecture Slides (c) 2013 P. Poupart 1

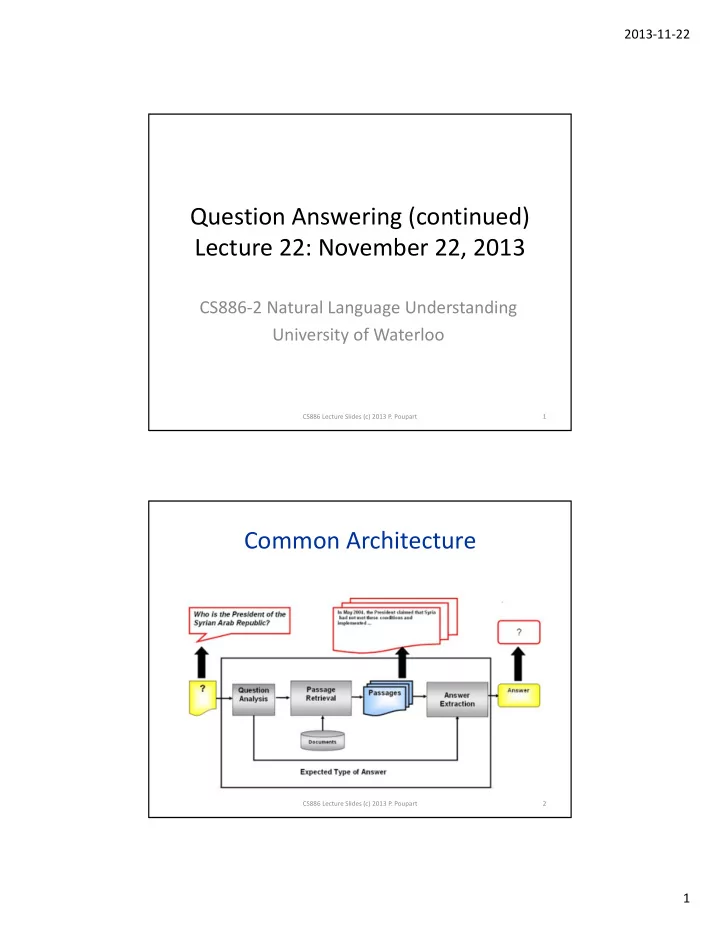

Common Architecture


E.g. {<autism, development disorders>, <caldera, volcanic crater>}


Evaluation)


– Context
– Refine/disambiguate questions
– Refine/disambiguate answers
– Feedback

– Conversation
– Chatbots
– Spoken dialog systems
