1

Processes

- What are they? How do we represent

them?

- Scheduling

- Something smaller than a process?

Threads

- Synchronizing and Communicating

- Classic IPC problems

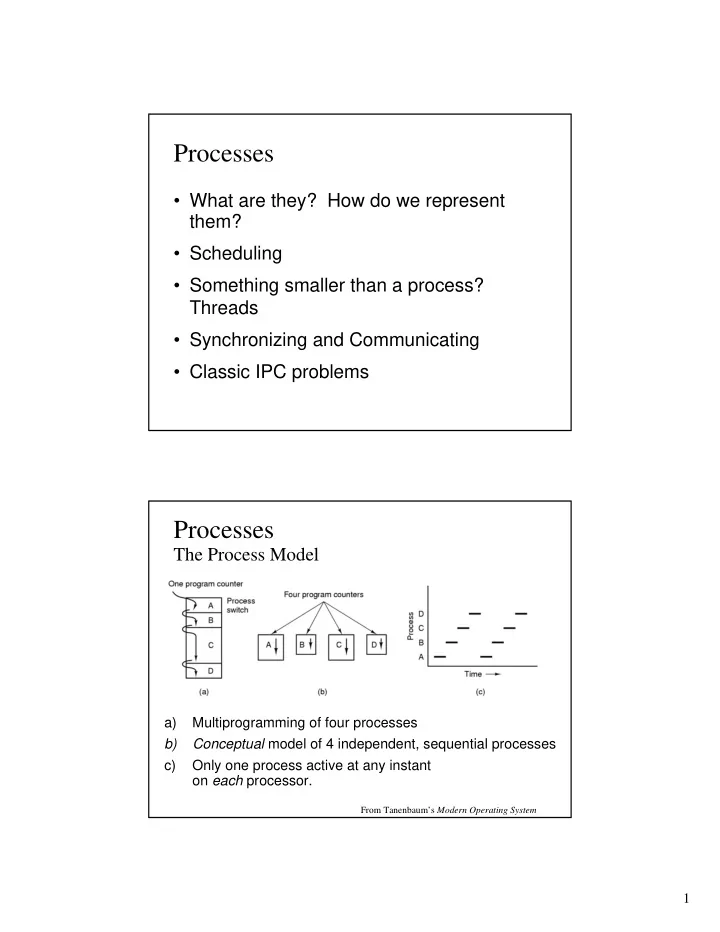

Processes

The Process Model

a) Multiprogramming of four processes b) Conceptual model of 4 independent, sequential processes c) Only one process active at any instant

- n each processor.

From Tanenbaum’s Modern Operating System