SLIDE 1

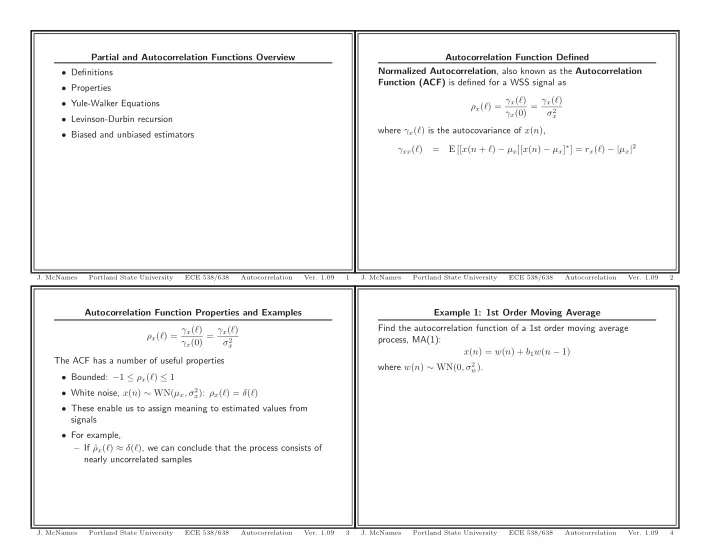

Autocorrelation Function Properties and Examples

$$\rho_x(\ell) = \frac{\gamma_x(\ell)}{\gamma_x(0)} = \frac{\gamma_x(\ell)}{\sigma_x^2}$$

The ACF has a number of useful properties:

- Bounded: $-1 \le \rho_x(\ell) \le 1$

- White noise, $x(n) \sim \mathrm{WN}(\mu_x, \sigma_x^2)$: $\rho_x(\ell) = \delta(\ell)$

- These enable us to assign meaning to estimated values from signals

- For example,

  - If $\hat{\rho}_x(\ell) \approx \delta(\ell)$, we can conclude that the process consists of nearly uncorrelated samples
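As a minimal sketch of that last check, the Python below estimates the ACF of simulated white noise and compares it with δ(ℓ). The helper name `sample_acf`, the Gaussian distribution, the mean and variance, the sample size, and the biased (divide-by-N) estimator are all assumptions chosen for illustration; none of them come from the slides.

```python
import numpy as np


def sample_acf(x, max_lag):
    """Biased sample ACF for lags 0..max_lag (illustrative helper)."""
    x = np.asarray(x)
    n = x.size
    d = x - x.mean()                      # remove the estimated mean
    gamma = np.array([
        np.sum(d[lag:] * np.conj(d[:n - lag])) / n   # divide by N at every lag
        for lag in range(max_lag + 1)
    ])
    return gamma / gamma[0]               # normalize by the lag-0 value


rng = np.random.default_rng(0)            # fixed seed for repeatability
# Assumed test signal: Gaussian white noise with mu = 3 and sigma = 2
x = rng.normal(loc=3.0, scale=2.0, size=10_000)
rho_hat = sample_acf(x, max_lag=10)

print(np.round(rho_hat, 3))
```

The estimate equals 1 at lag 0 by construction. At the other lags it should scatter around 0 with a spread on the order of 1/√N, so values well outside that range would suggest correlated samples.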

J. McNames, Portland State University, ECE 538/638, Autocorrelation, Ver. 1.09

Partial and Autocorrelation Functions Overview

- Definitions

- Properties

- Yule-Walker Equations

- Levinson-Durbin recursion

- Biased and unbiased estimators


Example 1: 1st Order Moving Average

Find the autocorrelation function of a 1st order moving average process, MA(1):

$$x(n) = w(n) + b_1 w(n-1)$$

where $w(n) \sim \mathrm{WN}(0, \sigma_w^2)$.
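One way to work this example is sketched here, assuming that $b_1$ and $w(n)$ are real (the slide does not say). Since $\mu_x = 0$, the autocovariance equals the autocorrelation, and $E[w(n+\ell)\,w(n)] = \sigma_w^2\,\delta(\ell)$:

$$
\begin{aligned}
\gamma_x(\ell) &= E\big[\,(w(n+\ell) + b_1 w(n+\ell-1))\,(w(n) + b_1 w(n-1))\,\big] \\
&= \sigma_w^2\,\big[(1 + b_1^2)\,\delta(\ell) + b_1\,\delta(\ell-1) + b_1\,\delta(\ell+1)\big]
\end{aligned}
$$

Dividing by $\gamma_x(0) = (1 + b_1^2)\,\sigma_w^2$ gives

$$
\rho_x(\ell) =
\begin{cases}
1, & \ell = 0 \\
\dfrac{b_1}{1 + b_1^2}, & |\ell| = 1 \\
0, & |\ell| > 1
\end{cases}
$$

Under these assumptions the ACF of an MA(1) process is zero beyond lag 1, and $|\rho_x(\pm 1)| \le 1/2$ for every real $b_1$.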


Autocorrelation Function Defined

The normalized autocorrelation, also known as the autocorrelation function (ACF), is defined for a WSS signal as

$$\rho_x(\ell) = \frac{\gamma_x(\ell)}{\gamma_x(0)} = \frac{\gamma_x(\ell)}{\sigma_x^2}$$

where $\gamma_x(\ell)$ is the autocovariance of $x(n)$,

$$\gamma_x(\ell) = E\big\{[x(n+\ell) - \mu_x]\,[x(n) - \mu_x]^*\big\} = r_x(\ell) - |\mu_x|^2$$
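The relation between the autocovariance and the autocorrelation can be checked numerically. The sketch below replaces the expectations with sample averages over a simulated complex signal. The signal model, the mean value, the seed, and all variable names are assumptions made for illustration. With sample averages the identity is exact at lag 0 and only approximate at other lags.

```python
import numpy as np

rng = np.random.default_rng(1)            # fixed seed for repeatability
n = 50_000
mu = 1.0 + 2.0j                           # assumed nonzero complex mean
# Complex white noise around mu (an illustrative WSS signal)
x = mu + rng.normal(size=n) + 1j * rng.normal(size=n)

mu_hat = x.mean()
lags = range(4)

# r_x(k): sample average of x(n + k) x*(n)
r_hat = np.array([np.mean(x[k:] * np.conj(x[:n - k])) for k in lags])

# gamma_x(k): the same average after removing the estimated mean
d = x - mu_hat
gamma_hat = np.array([np.mean(d[k:] * np.conj(d[:n - k])) for k in lags])

# Normalizing by the lag-0 autocovariance (the variance) gives the ACF
rho_hat = gamma_hat / gamma_hat[0].real

print(np.round(gamma_hat, 3))                    # autocovariance estimate
print(np.round(r_hat - abs(mu_hat) ** 2, 3))     # r_x(k) - |mu_x|^2
```

The two printed rows should agree closely, which illustrates why removing the mean before correlating and subtracting $|\mu_x|^2$ afterwards are interchangeable for a WSS signal.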
