Model Comparison

Machine Learning and Pattern Recognition Chris Williams

School of Informatics, University of Edinburgh

October 2014

(These slides have been adapted from previous versions by Charles Sutton, Amos Storkey and David Barber

1 / 20

Overview

◮ The model selection problem ◮ Overfitting ◮ Validation set, cross validation ◮ Bayesian Model Comparison ◮ Reading: Murphy 1.4.7, 1.4.8, 6.5.3, 5.3; Barber 12.1-12.4,

13.2 up to end of 13.2.2

2 / 20

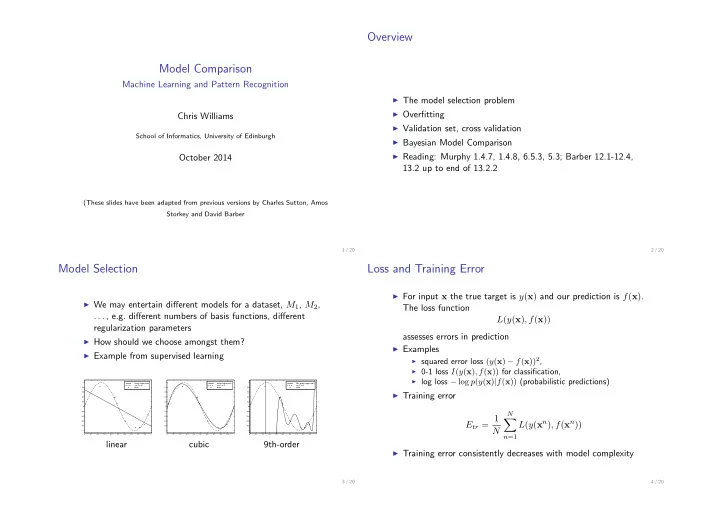

Model Selection

◮ We may entertain different models for a dataset, M1, M2,

. . . , e.g. different numbers of basis functions, different regularization parameters

◮ How should we choose amongst them? ◮ Example from supervised learning

0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 −1 −0.8 −0.6 −0.4 −0.2 0.2 0.4 0.6 0.8 1

linear regression sin(2 π x) data

0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 −1 −0.8 −0.6 −0.4 −0.2 0.2 0.4 0.6 0.8 1

cubic regression sin(2 π x) data

0.1 0.2 0.3 0.4 0.5 0.6 0.7 0.8 0.9 1 −1 −0.8 −0.6 −0.4 −0.2 0.2 0.4 0.6 0.8 1

9th−order regression sin(2 π x) data

linear cubic 9th-order

3 / 20

Loss and Training Error

◮ For input x the true target is y(x) and our prediction is f(x).

The loss function L(y(x), f(x)) assesses errors in prediction

◮ Examples

◮ squared error loss (y(x) − f(x))2, ◮ 0-1 loss I(y(x), f(x)) for classification, ◮ log loss − log p(y(x)|f(x)) (probabilistic predictions)

◮ Training error

Etr = 1 N

N

- n=1

L(y(xn), f(xn))

◮ Training error consistently decreases with model complexity

4 / 20