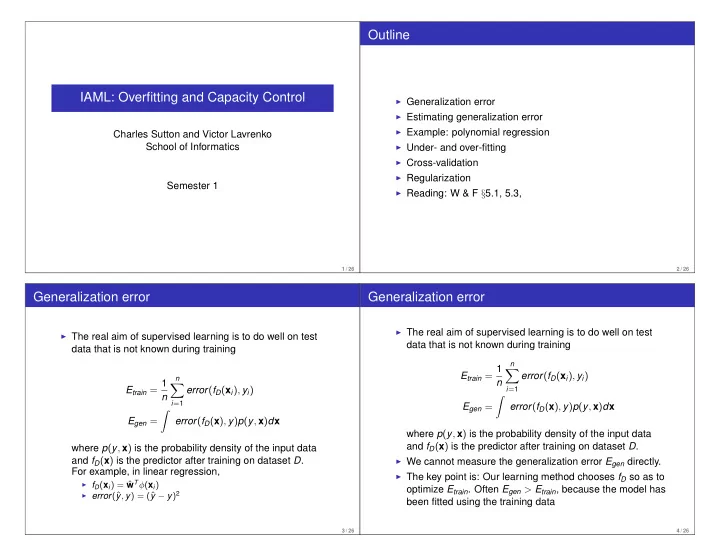

IAML: Overfitting and Capacity Control

Charles Sutton and Victor Lavrenko

School of Informatics

Semester 1


Outline

◮ Generalization error
◮ Estimating generalization error
◮ Example: polynomial regression
◮ Under- and over-fitting
◮ Cross-validation
◮ Regularization
◮ Reading: W & F §5.1, 5.3,


Generalization error

◮ The real aim of supervised learning is to do well on test data that is not known during training.

$$E_{\text{train}} = \frac{1}{n} \sum_{i=1}^{n} \mathrm{error}(f_D(x_i), y_i)$$

$$E_{\text{gen}} = \int \mathrm{error}(f_D(x), y)\, p(y, x)\, dx\, dy$$

where $p(y, x)$ is the joint probability density of inputs and targets, and $f_D(x)$ is the predictor after training on dataset $D$. For example, in linear regression,

◮ $f_D(x_i) = \hat{w}^T \phi(x_i)$
◮ $\mathrm{error}(\hat{y}, y) = (\hat{y} - y)^2$
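As a minimal sketch of the linear regression case above, the training error can be computed directly from its definition. The feature map `phi`, the synthetic data, and the helper names are illustrative assumptions, not part of the lecture.

```python
import numpy as np

def phi(x):
    # Hypothetical feature map: a bias term plus the raw input, phi(x) = [1, x].
    return np.column_stack([np.ones_like(x), x])

def fit_least_squares(x, y):
    # w_hat minimizes the sum of squared errors on the training set.
    w_hat, *_ = np.linalg.lstsq(phi(x), y, rcond=None)
    return w_hat

def mean_squared_error(w_hat, x, y):
    # (1/n) * sum_i (w_hat^T phi(x_i) - y_i)^2
    return float(np.mean((phi(x) @ w_hat - y) ** 2))

# Synthetic dataset D (an assumption made only for this illustration).
rng = np.random.default_rng(0)
x_train = rng.uniform(-1, 1, size=20)
y_train = 2.0 * x_train + 0.5 + rng.normal(0, 0.1, size=20)

w_hat = fit_least_squares(x_train, y_train)
e_train = mean_squared_error(w_hat, x_train, y_train)
print("w_hat:", w_hat, "E_train:", e_train)
```

Here `e_train` is the quantity $E_{\text{train}}$ with squared error; it is computed on the same points that were used to choose `w_hat`.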


◮ We cannot measure the generalization error $E_{\text{gen}}$ directly.
◮ The key point is: our learning method chooses $f_D$ so as to optimize $E_{\text{train}}$. Often $E_{\text{gen}} > E_{\text{train}}$, because the model has been fitted using the training data.
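The gap between the two errors can be illustrated in a synthetic setting where the data distribution is known, so that the generalization error can be approximated by averaging over a large fresh sample. This is only a sketch: the data-generating function, the noise level, the polynomial degree, and the sample sizes below are all assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

def sample(n):
    # Assumed data distribution p(y, x): x uniform on [-1, 1], y = sin(3x) + noise.
    x = rng.uniform(-1, 1, size=n)
    y = np.sin(3 * x) + rng.normal(0, 0.3, size=n)
    return x, y

def features(x, degree=5):
    # Polynomial features [1, x, x^2, ..., x^degree].
    return np.vander(x, degree + 1, increasing=True)

def mse(w, x, y):
    # Mean squared error of the linear-in-features predictor.
    return float(np.mean((features(x) @ w - y) ** 2))

train_errors, gen_estimates = [], []
for _ in range(200):
    # Draw a small training set and fit by least squares (optimizes E_train).
    x_tr, y_tr = sample(15)
    w_hat, *_ = np.linalg.lstsq(features(x_tr), y_tr, rcond=None)
    # Approximate E_gen with a large sample not seen during training.
    x_new, y_new = sample(5000)
    train_errors.append(mse(w_hat, x_tr, y_tr))
    gen_estimates.append(mse(w_hat, x_new, y_new))

mean_train = float(np.mean(train_errors))
mean_gen = float(np.mean(gen_estimates))
frac_gen_larger = float(np.mean(np.array(gen_estimates) > np.array(train_errors)))
print(mean_train, mean_gen, frac_gen_larger)
```

With real data the distribution is unknown, so the fresh-sample step is not available; that is why the generalization error has to be estimated rather than measured.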
