
Lecture 11

June 7, 2005 CS 486/686

CS486/686 Lecture Slides (c) 2005 C. Boutilier, P. Poupart & K. Larson


Outline

- Decision Networks

– Aka influence diagrams

- Value of information

- Russell and Norvig: Sect 16.5-16.6


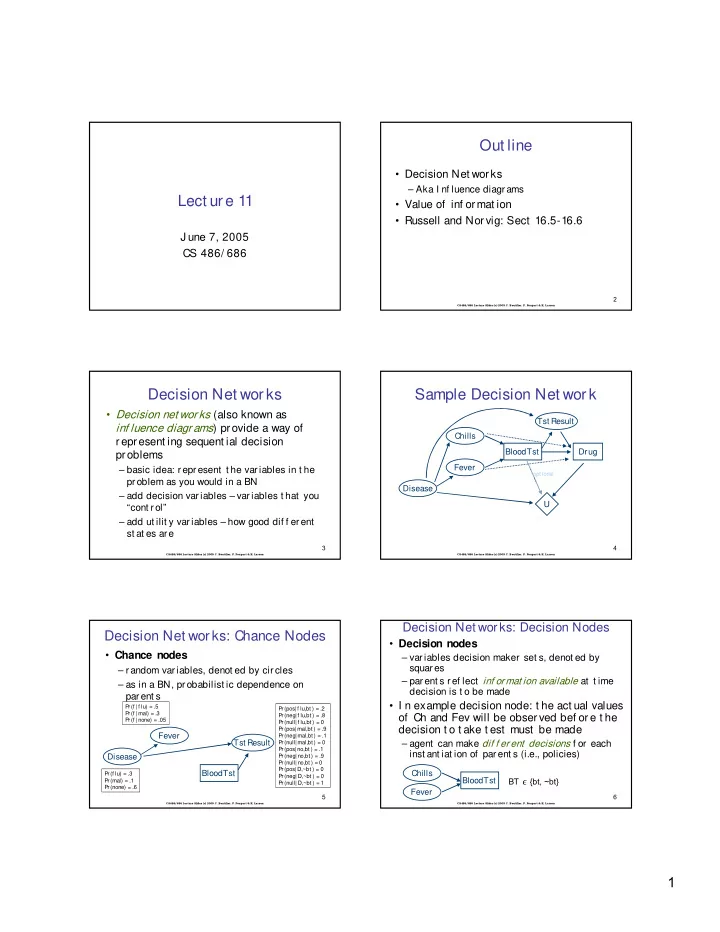

Decision Networks

- Decision networks (also known as influence diagrams) provide a way of representing sequential decision problems

– basic idea: represent the variables in the problem as you would in a BN

– add decision variables: variables that you “control”

– add utility variables: how good different states are
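To make the three kinds of variables concrete, here is a minimal Python sketch of how such a network might be stored. The names `Kind`, `Node`, and `make_network`, and the three-node umbrella example, are hypothetical illustrations and do not come from the slides.

```python
from dataclasses import dataclass, field
from enum import Enum


class Kind(Enum):
    CHANCE = "chance"      # random variable, as in a BN
    DECISION = "decision"  # variable the decision maker controls
    UTILITY = "utility"    # how good different states are


@dataclass
class Node:
    name: str
    kind: Kind
    parents: list = field(default_factory=list)


def make_network(nodes):
    """Index nodes by name and check that every parent is declared."""
    net = {n.name: n for n in nodes}
    for n in nodes:
        for p in n.parents:
            if p not in net:
                raise ValueError(f"unknown parent {p!r} of {n.name!r}")
    return net


# Hypothetical example with one node of each kind (not from the slides).
net = make_network([
    Node("Weather", Kind.CHANCE),
    Node("Umbrella", Kind.DECISION),
    Node("U", Kind.UTILITY, parents=["Weather", "Umbrella"]),
])
kinds = {name: node.kind.value for name, node in net.items()}
```

Here the utility node depends on both the chance node and the decision node, which is the usual pattern: utility is a function of the state and of what was chosen.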


Sample Decision Network

[Figure: sample decision network with nodes Disease, TstResult, Chills, Fever, BloodTst, Drug, and U; one element of the diagram is labeled "optional".]


Decision Networks: Chance Nodes

- Chance nodes

– random variables, denoted by circles

– as in a BN, probabilistic dependence on parents

Disease: Pr(flu) = .3, Pr(mal) = .1, Pr(none) = .6

Fever (parent: Disease): Pr(f | flu) = .5, Pr(f | mal) = .3, Pr(f | none) = .05
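As a quick check of how the Disease and Fever numbers combine, the following Python sketch computes the marginal probability of fever and the posterior over Disease once fever is observed. The probabilities are copied from the slide; the dictionary names are illustrative only.

```python
# Prior over Disease and Pr(fever | Disease), copied from the slide.
p_disease = {"flu": 0.3, "mal": 0.1, "none": 0.6}
p_fever_given = {"flu": 0.5, "mal": 0.3, "none": 0.05}

# Sum rule: Pr(f) = sum over d of Pr(d) * Pr(f | d).
p_fever = sum(p_disease[d] * p_fever_given[d] for d in p_disease)

# Bayes' rule: Pr(d | f) = Pr(d) * Pr(f | d) / Pr(f).
posterior = {d: p_disease[d] * p_fever_given[d] / p_fever for d in p_disease}

print(round(p_fever, 4))            # 0.21
print(round(posterior["flu"], 4))   # 0.7143
```

Observing fever raises the probability of flu from .3 to about .71, since flu contributes .15 of the total .21.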

TstResult (parents: Disease, BloodTst):

| Disease, BloodTst | Pr(pos) | Pr(neg) | Pr(null) |
|---|---|---|---|
| flu, bt | .2 | .8 | 0 |
| mal, bt | .9 | .1 | 0 |
| no, bt | .1 | .9 | 0 |
| any D, ~bt | 0 | 0 | 1 |
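The test-result probabilities can be read as a function of both the disease and whether the blood test was taken. The sketch below encodes them in Python and applies Bayes' rule to a test outcome. It assumes that "no" in this table is the same Disease value as "none" in the prior; the function and variable names are hypothetical.

```python
# Prior over Disease, copied from the slide.
p_disease = {"flu": 0.3, "mal": 0.1, "none": 0.6}

# Pr(TstResult | Disease, bt): the blood test was taken.
# Assumption: the slide's "no" is the same value as "none".
p_result_bt = {
    "flu":  {"pos": 0.2, "neg": 0.8, "null": 0.0},
    "mal":  {"pos": 0.9, "neg": 0.1, "null": 0.0},
    "none": {"pos": 0.1, "neg": 0.9, "null": 0.0},
}
# Pr(TstResult | D, ~bt): without a test the result is always null.
p_result_no_bt = {"pos": 0.0, "neg": 0.0, "null": 1.0}


def p_result(result, disease, blood_test):
    """Look up Pr(TstResult = result | Disease, BloodTst)."""
    if blood_test:
        return p_result_bt[disease][result]
    return p_result_no_bt[result]


def disease_posterior(result, blood_test):
    """Pr(Disease | TstResult = result, BloodTst) by Bayes' rule."""
    joint = {d: p_disease[d] * p_result(result, d, blood_test)
             for d in p_disease}
    total = sum(joint.values())
    if total == 0:
        raise ValueError("result has probability zero under this choice")
    return {d: v / total for d, v in joint.items()}


after_pos = disease_posterior("pos", blood_test=True)
after_null = disease_posterior("null", blood_test=False)
```

A positive result makes malaria the most likely value (about .43), while skipping the test yields a null result that leaves the prior unchanged, so the result carries information only when the test is taken.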


Decision Networks: Decision Nodes

- Decision nodes

– variables the decision maker sets, denoted by squares

– parents reflect information available at the time the decision is to be made

- In the example decision node: the actual values of Ch and Fev will be observed before the