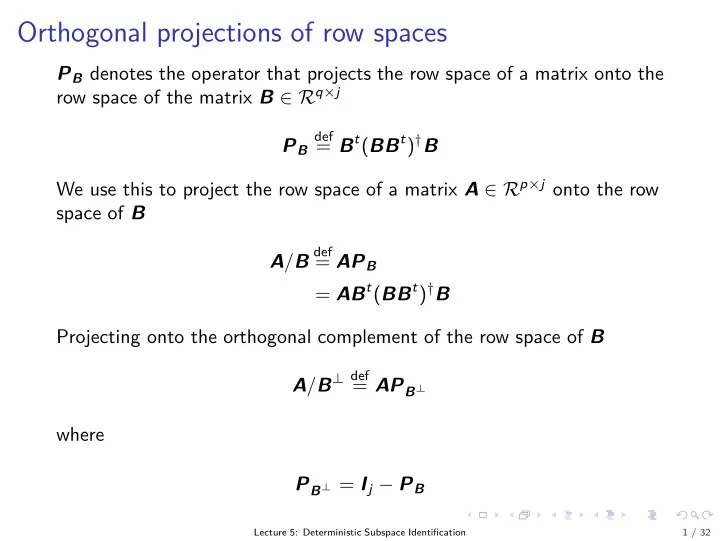

Orthogonal projections of row spaces

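$P_B$ denotes the operator that projects the row space of a matrix onto the row space of the matrix $B \in \mathbb{R}^{q \times j}$:

$$P_B \overset{\text{def}}{=} B^{t} (B B^{t})^{\dagger} B$$

We use this to project the row space of a matrix $A \in \mathbb{R}^{p \times j}$ onto the row space of $B$:

$$A/B \overset{\text{def}}{=} A P_B = A B^{t} (B B^{t})^{\dagger} B$$

Projecting onto the orthogonal complement of the row space of $B$:

$$A/B^{\perp} \overset{\text{def}}{=} A P_{B^{\perp}}, \quad \text{where } P_{B^{\perp}} = I_j - P_B$$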
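The definitions above can be checked numerically. The following is a minimal sketch, assuming NumPy and using `np.linalg.pinv` for the Moore-Penrose pseudo-inverse $(\cdot)^{\dagger}$. The function names (`row_space_projector`, `project`, `project_complement`) and the random test matrices are illustrative choices, not part of the lecture.

```python
import numpy as np


def row_space_projector(B):
    """Return P_B = B^t (B B^t)^† B, a j x j matrix (hypothetical helper name)."""
    B = np.atleast_2d(np.asarray(B, dtype=float))
    # pinv gives the Moore-Penrose pseudo-inverse, so B may be rank deficient.
    return B.T @ np.linalg.pinv(B @ B.T) @ B


def project(A, B):
    """Return A/B = A P_B: the rows of A projected onto the row space of B."""
    A = np.atleast_2d(np.asarray(A, dtype=float))
    return A @ row_space_projector(B)


def project_complement(A, B):
    """Return A/B^perp = A (I_j - P_B)."""
    A = np.atleast_2d(np.asarray(A, dtype=float))
    P_B = row_space_projector(B)
    j = P_B.shape[0]
    return A @ (np.eye(j) - P_B)


# Illustrative data: q = 2, p = 3, j = 6 (arbitrary sizes chosen for the sketch).
rng = np.random.default_rng(0)
B = rng.standard_normal((2, 6))
A = rng.standard_normal((3, 6))

A_on_B = project(A, B)
A_on_B_perp = project_complement(A, B)

# The two parts add back up to A, and the complement part is orthogonal to B.
print(np.allclose(A_on_B + A_on_B_perp, A))
print(np.allclose(A_on_B_perp @ B.T, 0.0))
```

Using the pseudo-inverse rather than an ordinary inverse keeps the sketch valid when the rows of $B$ are linearly dependent, which matches the $\dagger$ in the definition of $P_B$.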

Lecture 5: Deterministic Subspace Identification 1 / 32