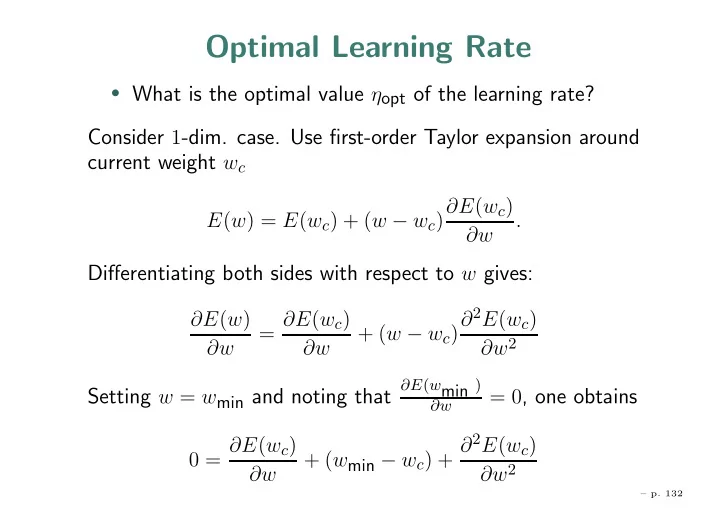

Optimal Learning Rate

- What is the optimal value $\eta_{\text{opt}}$ of the learning rate?

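Consider the 1-dimensional case. Use a second-order Taylor expansion around the current weight $w_c$:

$$E(w) = E(w_c) + (w - w_c)\frac{\partial E(w_c)}{\partial w} + \frac{1}{2}(w - w_c)^2\frac{\partial^2 E(w_c)}{\partial w^2}.$$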

Differentiating both sides with respect to w gives:

$$\frac{\partial E(w)}{\partial w} = \frac{\partial E(w_c)}{\partial w} + (w - w_c)\frac{\partial^2 E(w_c)}{\partial w^2}$$

Setting $w = w_{\min}$ and noting that $\frac{\partial E(w_{\min})}{\partial w} = 0$, one obtains

$$0 = \frac{\partial E(w_c)}{\partial w} + (w_{\min} - w_c)\frac{\partial^2 E(w_c)}{\partial w^2}$$
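
The last relation can be checked numerically. The sketch below is illustrative only: it assumes a hypothetical quadratic error $E(w) = a(w - b)^2 + c$ (so the minimum is at $w = b$) and the usual gradient descent update $w \leftarrow w - \eta\,\partial E/\partial w$. The names `grad`, `curvature`, and `solve_w_min` are made up for this example. Solving the relation for $w_{\min}$ gives $w_{\min} = w_c - \frac{\partial E(w_c)/\partial w}{\partial^2 E(w_c)/\partial w^2}$, which on a quadratic should land exactly on the minimum.

```python
import math

def grad(w, a, b):
    # First derivative of the assumed error E(w) = a * (w - b)**2 + c
    return 2.0 * a * (w - b)

def curvature(a):
    # Second derivative of the same assumed error (constant for a quadratic)
    return 2.0 * a

def solve_w_min(w_c, a, b):
    # Rearranged from 0 = E'(w_c) + (w_min - w_c) * E''(w_c)
    return w_c - grad(w_c, a, b) / curvature(a)

# Hypothetical values chosen only for illustration
a, b = 3.0, 1.5
w_c = -4.0

w_min = solve_w_min(w_c, a, b)

# One gradient descent step with eta = 1 / E''(w_c) reaches the same point
eta = 1.0 / curvature(a)
w_next = w_c - eta * grad(w_c, a, b)

print(w_min, math.isclose(w_next, w_min))
```

Under these assumptions, comparing the rearranged relation with the gradient descent update suggests that a step size equal to the inverse of the second derivative reaches the minimum in a single step. For a non-quadratic error the expansion is only approximate, so this would hold only near $w_c$.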
